Best Rootly alternative for Slack-native teams

Updated Apr 3, 2026

TL;DR: If your team coordinates incidents entirely in Slack, the gap between a "Slack integration" and a "Slack-native platform" costs you real coordination overhead on every incident. Rootly brings solid incident workflows into Slack and is easier to configure than legacy tools, but incident.io is built from the ground up as a Slack-native platform: the entire lifecycle runs through slash commands, automatic timeline capture, and AI-drafted post-mortems, without falling back to a web UI. After switching to a fully Slack-native approach, Intercom's engineers went on-call in 3 days instead of 2 weeks, and Favor cut MTTR by 37%. For Slack-first SRE teams, incident.io is the stronger choice.

Picture a typical database failover: the technical fix takes 90 minutes, and the rest of the incident is pure coordination overhead. Someone hunts for the on-call DBA in a spreadsheet, someone else manually creates a Zoom link, and a critical fix gets missed because it landed in a Slack thread nobody was watching. The problem the post-mortem uncovers isn't technical complexity. It's tool sprawl.

If you're evaluating Rootly against alternatives like incident.io, the question isn't which platform has the longer feature list. It's which one eliminates the most coordination overhead when production is down at 3 AM.

End SRE toil: Slack-native incident response

SRE toil isn't just the time spent debugging a broken service. It's the minutes before your engineers even start debugging: manual channel creation, copy-pasted alert links, hunting down the right on-call, and a Google Doc opened for notes that nobody fills in. That team assembly phase alone eats 10 to 15 minutes per incident before anyone touches a line of code. Across 15 incidents per month, that's 150 to 225 minutes of pure coordination overhead, none of it moving you closer to a fix.
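The arithmetic behind that range fits in a few lines of Python. This is a minimal sketch: the function and parameter names are illustrative, and the 10 to 15 minutes per incident are the estimates from the paragraph above, not measurements. Swap in your own incident volume and assembly time.

```python
def monthly_overhead_minutes(incidents_per_month: int,
                             low_minutes: int,
                             high_minutes: int) -> tuple[int, int]:
    """Return the (low, high) monthly range of team-assembly overhead, in minutes."""
    return incidents_per_month * low_minutes, incidents_per_month * high_minutes


low, high = monthly_overhead_minutes(incidents_per_month=15, low_minutes=10, high_minutes=15)
print(f"{low}-{high} minutes per month")                            # 150-225 minutes per month
print(f"{low * 12 / 60:.0f}-{high * 12 / 60:.0f} hours per year")   # 30-45 hours per year
```

Extrapolated over a year, the same estimate works out to 30 to 45 hours spent assembling responders.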

How context-switching slows incident MTTR

The root cause is tool sprawl in incident response: PagerDuty fires the alert, Datadog holds the metrics, Slack is where your team communicates, Jira tracks follow-ups, and Confluence is where post-mortems go to be forgotten. Every context switch during a live incident adds cognitive load and delays the actual fix. For junior engineers on their first on-call shift, that load can make them freeze entirely, forcing senior SREs to take over and burning everyone out faster.

Spotting true Slack-native incident tools

"Slack-native" gets misused in vendor marketing. A practical distinction: web-first tools typically send Slack notifications but rely on their web UI for most actions, while Slack-native tools position Slack as the primary interface.

With a web-first tool like PagerDuty, an alert fires, a message lands in #alerts, and you click a link that opens the web UI. Managing the incident often requires switching between the web dashboard and Slack. With a truly Slack-native tool, you type /inc declare and the platform creates a dedicated incident channel, pages on-call engineers, surfaces service catalog context, assigns roles, and starts capturing the timeline without leaving Slack. The incident workflow stays centralized in your team's primary communication tool rather than requiring constant context switches to a separate interface.

Rootly's fit: strengths and workflow gaps

Rootly is a legitimate modern incident management platform, not a legacy tool, and it genuinely brings more of the incident workflow into Slack than PagerDuty or Opsgenie ever did. Teams actively evaluating Rootly are making a reasonable choice, and the end-to-end Rootly Slack demo shows how it handles incident declaration and responder coordination through chat.

Rootly: Rapid incident response in Slack

Rootly's /rootly declare command, as shown in the end-to-end Rootly Slack demo, creates incidents, handles channel naming, sets the channel topic, and invites responders based on service ownership. For teams coming from a completely manual process, Rootly removes several manual steps from incident declaration.

Rootly's audit-ready incident timelines

Rootly captures incident timelines and generates post-mortems, which can support SOC 2 and compliance audit needs for timestamped incident records. Teams running SOC 2 Type II programs and needing ITSM compliance in DevOps environments may find Rootly's post-mortem output helpful for documentation requirements.

Where Rootly falls short for Slack-first teams

In team evaluations, two areas of friction show up consistently: setup time and AI depth. Rootly requires more upfront configuration than incident.io, whose opinionated defaults typically mean a shorter initial setup. On AI capabilities, Rootly's features focus on compressing MTTR after declaration through faster triage and coordination. incident.io's AI SRE goes further by correlating the likely code change or deployment that triggered the incident and can open a draft fix pull request directly in Slack based on the identified change (available on the Pro plan). When your team is debugging a production issue at 2 AM, "faster coordination" is table stakes. "Here is the likely deployment that caused this, and here is a draft PR to fix it" is a different category of help.

incident.io: purpose-built for Slack-native workflows

incident.io is the Slack-native incident management platform that auto-creates channels, auto-captures timelines, and auto-drafts post-mortems, so engineering teams can reduce MTTR by up to 80% without adding headcount or training. The entire incident lifecycle runs through Slack. As Torq's team found, one standardized incident workflow replaced both PagerDuty and Rootly and eliminated the fragmented tooling that was slowing their response.

Run incident workflows via Slack commands

When a Datadog alert fires, incident.io automatically creates a dedicated incident channel, pages the on-call engineer, pulls in service owners based on the Service Catalog, and starts recording the timeline. The on-call engineer types /inc summary and adds context. /inc assign @sarah-sre makes Sarah incident commander. /inc severity high updates the severity. No browser tab opened, no web UI loaded.

"The Slack commands feel natural and approachable for team members in our workspace." - Carmen G. on G2

Automatic timeline capture and AI-drafted post-mortems

Every Slack message in the incident channel, every role assignment, and every call transcript from Google Meet or Zoom gets captured automatically into a structured timeline. When the incident resolves, the AI SRE drafts the post-mortem at roughly 80% completion from that captured data. Your job is 10 minutes of refinement, not reconstructing a crime scene three days later with no photos: fuzzy details, incomplete timelines, and engineers who've already moved on.

Intercom saw post-mortems close within 24 hours instead of the previous 3 to 5 days after migrating to incident.io, and their engineers went on-call in as little as 3 days instead of the previous 2 weeks. Adrian M. calls the post-mortem capability a standout feature:

"1-click post-mortem reports - this is a killer feature, time saving, that helps a lot to have relevant conversations around incidents (instead of spending time curating a timeline)." - Adrian M. on G2

The AI SRE automates up to 80% of incident response, identifying the likely deployment or configuration change behind the incident and suggesting next steps based on past incidents. This is not log correlation. It is root cause identification backed by your service catalog, recent deployment history, and current infrastructure state.

Integration depth with Datadog, Prometheus, and PagerDuty

incident.io integrates natively with Datadog, Prometheus, New Relic, PagerDuty, Jira, Linear, GitHub, Confluence, and Grafana. Critically, it does not replace your monitoring tools. PagerDuty is the smoke detector, and incident.io is the fire response team.

Time-to-value: operational in days, not weeks

incident.io's opinionated defaults help teams get operational quickly. Strong default workflows cover SEV1 through SEV3 incident types, on-call schedule templates, and pre-built integrations that work out of the box. Bruno D. rolled out incident.io to a small engineering team and found the integration experience fast.

incident.io vs. Rootly: impact on MTTR

| Platform | Slack-native UX | AI post-mortems | Time-to-value | Pricing with on-call |
|---|---|---|---|---|
| incident.io | Full lifecycle via /inc commands | Drafts at ~80% completion from timeline and calls | Days to first incident | $45/user/month (Pro: $25 base + $20 on-call add-on) |
| Rootly | Slack integration with web configuration steps required, per Rootly documentation | AI-assisted capabilities, per Rootly documentation | Reported multi-week setup | Contact sales |
| FireHydrant | Slack-first with web UI feature parity | AI summaries and meeting transcripts | Setup in minutes | Starter $20/user/month, Advanced $44/user/month |

Slack-native workflow and slash command UX

During a 3 AM Priority 1 incident, the difference matters: /inc declare resolves entirely in Slack, while /rootly declare can require additional configuration steps outside Slack. incident.io's Slack-native design keeps critical incident management tasks in Slack. When you need to override escalation paths or reassign roles during an active incident, staying in Slack matters.

Audit-ready incident timelines

Both tools capture timelines that support compliance audit requirements. The advantage incident.io holds is in automation: incident.io transcribes incident calls, captures key decisions without a dedicated note-taker, and pulls key moments into the incident channel.

Verifying AI root cause findings

incident.io's AI SRE automates up to 80% of incident response by identifying the likely change behind an incident and opening a draft fix pull request directly in Slack (available on the Pro plan). Rootly's AI compresses the MTTR clock through faster coordination and context delivery. If your team's biggest pain point is spending 20 minutes during an incident asking "what changed recently?", incident.io's AI addresses that directly through service catalog correlation and deployment history analysis, surfacing the answer in the incident channel automatically.
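To make the "what changed recently?" question concrete, here is a deliberately naive heuristic in Python. It is not how incident.io's AI SRE works (its internals aren't described here); it only filters deployments to the affected services inside a fixed window before the incident started. The `Deployment` record, the `recent_changes` function, the service names, and the two-hour default window are all hypothetical, and it assumes you can export deployments as service, ref, and timestamp.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Deployment:
    service: str          # hypothetical service name from your catalog
    ref: str              # hypothetical: commit SHA or release tag
    deployed_at: datetime


def recent_changes(deployments: list[Deployment],
                   affected_services: set[str],
                   incident_start: datetime,
                   window: timedelta = timedelta(hours=2)) -> list[Deployment]:
    """Deployments to affected services within `window` before the incident, newest first."""
    candidates = [
        d for d in deployments
        if d.service in affected_services
        and incident_start - window <= d.deployed_at <= incident_start
    ]
    return sorted(candidates, key=lambda d: d.deployed_at, reverse=True)


start = datetime(2026, 4, 3, 3, 0)
deploys = [
    Deployment("payments-api", "abc123", start - timedelta(minutes=20)),
    Deployment("payments-api", "def456", start - timedelta(hours=5)),   # outside the window
    Deployment("search", "789fed", start - timedelta(minutes=10)),      # unaffected service
]
for d in recent_changes(deploys, {"payments-api"}, start):
    print(d.service, d.ref)  # payments-api abc123
```

A fixed time window is the simplest possible filter; anything smarter (dependency-aware, ranked by past incidents) needs the service catalog and incident history the paragraph above describes.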

Streamlining new SRE on-call training

Intercom's engineers went on-call in 3 days instead of 2 weeks because structured runbooks in the tooling itself replaced Confluence wikis nobody reads under pressure. This is the payoff of Slack-native architecture: new engineers typically don't need to memorize a new tool's UI. They use slash commands directly in Slack, and the first incident becomes a guided process rather than a solo archaeology expedition.

Transparent pricing: predictable TCO

For a 20-person on-call team, the math is concrete.

- incident.io Pro with on-call: $45/user/month ($25 Pro base + $20 on-call add-on) for a 20-person team, approximately $10,800/year

- Rootly Incident Response: Pricing varies by tier; contact Rootly for current rates (on-call pricing separate)

Rootly's Essentials tier costs less at the base price. Our Pro plan costs more, but it includes AI post-mortem generation, Microsoft Teams support, private incidents, and the Insights dashboard. We recommend calculating the value of MTTR reduction in your own environment: Favor reduced MTTR by 37% after adopting incident.io, and reclaimed engineering hours often justify the cost difference within a quarter.
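A quick way to run that calculation is to turn the price difference into the engineering hours you would need to reclaim. The sketch below reproduces the $10,800/year figure for 20 seats at $45/user/month; the alternative quote and the loaded hourly cost are hypothetical placeholders (not Rootly's pricing, which is available from sales), so substitute your own numbers.

```python
def annual_cost(seats: int, per_user_monthly: float) -> float:
    """Annual list-price cost for a per-seat plan."""
    return seats * per_user_monthly * 12


def breakeven_hours(annual_cost_difference: float, loaded_hourly_cost: float) -> float:
    """Engineering hours per year that must be reclaimed to cover a price difference."""
    return annual_cost_difference / loaded_hourly_cost


pro_with_on_call = annual_cost(seats=20, per_user_monthly=25 + 20)  # $25 Pro base + $20 on-call add-on
print(pro_with_on_call)  # 10800

# HYPOTHETICAL inputs: replace with your actual vendor quote and loaded engineering cost.
alternative_quote = 7_200.0
hourly_cost = 100.0
hours = breakeven_hours(pro_with_on_call - alternative_quote, hourly_cost)
print(f"{hours:.0f} hours/year, about {hours / 4:.0f} per quarter")  # 36 hours/year, about 9 per quarter
```

If your measured MTTR reduction across a quarter's incidents reclaims more hours than the break-even figure, the pricier plan pays for itself under these assumptions.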

Top incident tools beyond Rootly and incident.io

For SRE teams doing a full market evaluation, two other platforms appear consistently in comparisons: PagerDuty and Opsgenie.

PagerDuty's Slack integration limitations

PagerDuty is a battle-tested alerting tool with sophisticated alert routing. It integrates with Slack, but many teams find it is primarily a web-first platform: incident channels created by PagerDuty may require switching between Slack and the web UI for full incident management, and timelines live in the PagerDuty web interface. If you want incidents to live entirely in Slack, its architecture may require some adjustment. See our PagerDuty alternatives guide for 2026 for a full breakdown.

Opsgenie sunset and migration

We don't recommend Opsgenie as a target for a new incident management rollout: Opsgenie reaches end of support in April 2027. If your team is currently on Opsgenie, you're on a forced migration path. incident.io offers migration tooling that carries over Opsgenie on-call schedules and alert routing, and the Beyond the Opsgenie sunset webinar is a practical starting point for teams in this position.

Choose the best Slack-native incident tool

The right tool depends on your team's biggest bottleneck. If it's alert routing sophistication, PagerDuty wins on customization depth. If it's Slack-native workflow and automated post-mortems, incident.io wins on architecture. Here is how to run a structured 30-day evaluation anchored to real incidents and measurable coordination metrics.

Run real incidents during your evaluation

Run your next 5-10 actual production incidents through the platform you're evaluating, not synthetic test scenarios. Track key coordination metrics during this period and note whether the captured timeline matches what your engineers actually remember happening. Consider establishing a baseline from your current process so you can compare improvement after the evaluation period. Real-world usage during actual incidents will reveal whether the tool truly streamlines your response workflow.
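Comparing the baseline against the evaluation period comes down to computing MTTR (alert firing to resolution, as defined in the glossary) for each set of incidents. This minimal Python sketch assumes you can export each incident as an (alerted_at, resolved_at) pair; the export shape and the sample durations are illustrative, not results.

```python
from datetime import datetime, timedelta
from statistics import mean


def mttr(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Mean time from alert firing to resolution."""
    seconds = [(resolved - alerted).total_seconds() for alerted, resolved in incidents]
    return timedelta(seconds=mean(seconds))


def percent_change(before: timedelta, after: timedelta) -> float:
    """Percentage change in MTTR; negative values mean incidents resolved faster."""
    return (after - before) / before * 100


t = datetime(2026, 4, 1, 3, 0)
baseline = [(t, t + timedelta(minutes=90)), (t, t + timedelta(minutes=150))]
evaluation = [(t, t + timedelta(minutes=80)), (t, t + timedelta(minutes=100))]
print(mttr(baseline), mttr(evaluation))                  # 2:00:00 1:30:00
print(percent_change(mttr(baseline), mttr(evaluation)))  # -25.0
```

With only 5-10 incidents per period, a single long outage can swing the mean, so it is worth looking at individual durations alongside the average.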

Measure post-mortem completion rates and integration setup time

Consider tracking post-mortem completion rate and time-to-publish for incidents during the evaluation. If you currently publish post-mortems 3-5 days after resolution, aim for a faster turnaround on at least the timeline and basic analysis; the cost of slow post-mortems is well-documented. Also check the installation process with your Slack workspace admin, and confirm whether your Datadog monitors can be configured to route through PagerDuty or Opsgenie to incident.io. The Slack command conflicts guide may provide additional setup guidance.
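Both post-mortem metrics can be computed from two timestamps per incident. In this sketch, `IncidentRecord` and `postmortem_stats` are hypothetical names, and it assumes your export marks incidents without a published post-mortem with `None`. It reports the median time-to-publish rather than the mean, so one very late write-up doesn't dominate a small sample.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class IncidentRecord:
    resolved_at: datetime
    postmortem_published_at: Optional[datetime]  # None if no post-mortem was published


def postmortem_stats(records: list[IncidentRecord]) -> tuple[float, Optional[float]]:
    """Return (completion rate, median hours from resolution to publication)."""
    published = [r for r in records if r.postmortem_published_at is not None]
    completion_rate = len(published) / len(records) if records else 0.0
    hours_to_publish = [
        (r.postmortem_published_at - r.resolved_at).total_seconds() / 3600
        for r in published
    ]
    return completion_rate, (median(hours_to_publish) if hours_to_publish else None)


r = datetime(2026, 4, 1, 12, 0)
records = [
    IncidentRecord(r, r + timedelta(hours=12)),
    IncidentRecord(r, r + timedelta(hours=20)),
    IncidentRecord(r, r + timedelta(hours=72)),
    IncidentRecord(r, None),
]
rate, median_hours = postmortem_stats(records)
print(f"{rate:.0%} published, median {median_hours:.0f}h to publish")  # 75% published, median 20h to publish
```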

Switching incident platforms: key steps

Migrating incident tooling while production incidents keep happening requires careful risk management. Running both systems in parallel allows you to build confidence in the new platform before completing the switch.

Onboard your initial on-call team

Start with your core on-call rotation for the pilot, not the entire engineering organization.

Configure a few key workflows based on your severity tiers (such as SEV1 critical, SEV2 high, SEV3 moderate), connect Datadog and PagerDuty, and import your service catalog from existing runbook documentation. Run the pilot and track metrics that are meaningful for your team, such as incident response times, follow-up completion, or team feedback.
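Before clicking through workflow configuration, it can help to write your severity policy down and sanity-check it. The sketch below models the three example tiers as plain Python data; it is not incident.io's configuration format, and the step names in `PILOT_WORKFLOWS` are hypothetical. The check encodes one reasonable rule: a more severe tier should never trigger fewer coordination steps than a less severe one.

```python
from enum import Enum


class Severity(Enum):
    SEV1 = "critical"
    SEV2 = "high"
    SEV3 = "moderate"


# Hypothetical pilot policy: which coordination steps each tier triggers.
PILOT_WORKFLOWS: dict[Severity, set[str]] = {
    Severity.SEV1: {"page_on_call", "page_service_owners", "notify_stakeholders", "require_postmortem"},
    Severity.SEV2: {"page_on_call", "page_service_owners", "require_postmortem"},
    Severity.SEV3: {"page_on_call"},
}


def steps_for(severity: Severity) -> list[str]:
    """Steps for one tier, sorted for stable display."""
    return sorted(PILOT_WORKFLOWS[severity])


def escalates_monotonically(policy: dict[Severity, set[str]]) -> bool:
    """True if each tier's steps are a subset of the next more severe tier's steps."""
    order = [Severity.SEV3, Severity.SEV2, Severity.SEV1]
    return all(policy[lower] <= policy[higher] for lower, higher in zip(order, order[1:]))


print(steps_for(Severity.SEV3))                   # ['page_on_call']
print(escalates_monotonically(PILOT_WORKFLOWS))   # True
```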

Phased migration with parallel ops

Keep PagerDuty or Opsgenie running in parallel initially so no alerts get dropped. incident.io integrates with PagerDuty directly, meaning you can receive PagerDuty alerts in incident.io channels while maintaining your existing paging rules. Once coordination metrics are tracking in the right direction, migrate the paging rules fully and decommission the old tool.
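One way to verify that no alerts get dropped during the parallel run is to reconcile the alerts each system received over the same period. This sketch assumes both exports carry a shared key for each alert, such as the originating monitor event ID; the export format and the `dd-…` IDs are hypothetical.

```python
def reconcile(old_tool_alert_ids: list[str],
              new_tool_alert_ids: list[str]) -> tuple[list[str], list[str]]:
    """Return (missing_in_new, unexpected_in_new), each sorted."""
    old, new = set(old_tool_alert_ids), set(new_tool_alert_ids)
    return sorted(old - new), sorted(new - old)


missing, unexpected = reconcile(["dd-101", "dd-102", "dd-103"], ["dd-101", "dd-103"])
print(missing, unexpected)  # ['dd-102'] []
```

An empty `missing` list across several weeks of real alerts is a reasonable signal that paging rules can move over fully.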

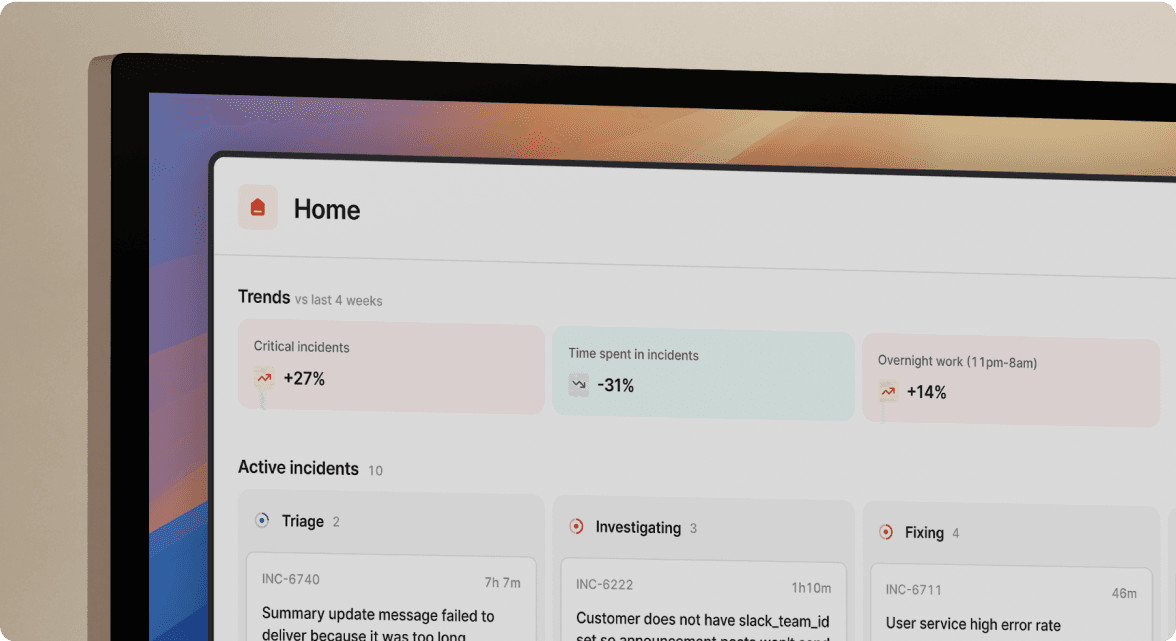

Track MTTR improvements quarter-over-quarter

Our Insights dashboard surfaces key incident metrics and trends automatically from incidents the platform handles. You don't need a manual spreadsheet or Jira query to answer "are we getting better?"
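The dashboard computes these trends for you. If you also want to cross-check quarter-over-quarter MTTR against your own export, a minimal sketch follows; it assumes (alerted_at, resolved_at) pairs and buckets each incident by the quarter in which its alert fired, which is one choice among several (bucketing by resolution time is equally valid).

```python
from collections import defaultdict
from datetime import datetime


def quarter_key(ts: datetime) -> str:
    """Label a timestamp with its calendar quarter, e.g. '2026-Q1'."""
    return f"{ts.year}-Q{(ts.month - 1) // 3 + 1}"


def mttr_by_quarter(incidents: list[tuple[datetime, datetime]]) -> dict[str, float]:
    """Mean minutes from alert to resolution, grouped by the quarter the alert fired."""
    buckets: dict[str, list[float]] = defaultdict(list)
    for alerted, resolved in incidents:
        buckets[quarter_key(alerted)].append((resolved - alerted).total_seconds() / 60)
    return {quarter: sum(mins) / len(mins) for quarter, mins in sorted(buckets.items())}


incidents = [
    (datetime(2026, 1, 10, 2, 0), datetime(2026, 1, 10, 4, 0)),    # 120 min
    (datetime(2026, 2, 5, 9, 0), datetime(2026, 2, 5, 10, 0)),     # 60 min
    (datetime(2026, 4, 2, 14, 0), datetime(2026, 4, 2, 14, 45)),   # 45 min
]
print(mttr_by_quarter(incidents))  # {'2026-Q1': 90.0, '2026-Q2': 45.0}
```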

Over time, you can present a clear narrative to leadership showing incident trends, service patterns, and team distribution. That data comes from the platform passively, not from anyone "remembering to update the dashboard." For teams also evaluating SRE alerting best practices alongside their platform migration, the Insights data may support alert fatigue reduction work through service-level incident visibility.

Want to see how teams cut MTTR by up to 80%? Schedule a demo to watch the AI SRE and automated post-mortems work through a real incident, end to end.

Key term glossary

MTTR (Mean Time To Resolution): The average time from an alert firing to the incident being fully resolved.

Slack-native: A platform where Slack is the primary interface for the entire incident lifecycle, not a secondary notification channel bolted onto a web UI.

AI SRE: incident.io's autonomous assistant that investigates incidents by identifying likely changes and suggesting next steps based on past incidents, helping to automate portions of incident response.

Post-mortem: A structured document capturing incident timeline, root cause, impact, and follow-up actions, used for organizational learning and compliance audits.

Service Catalog: A structured map of services, their owners, dependencies, runbooks, and current health status, used to automatically route incidents to the right teams and notify stakeholders.

Coordination overhead: Time often spent assembling responders, finding context, and updating tools during an incident rather than directly diagnosing and fixing the underlying technical issue.
