The post-mortem problem

Post-mortems are one of the most consistently underperforming rituals in software engineering. Most teams do them. Most teams know theirs aren't working. And most teams reach for the same diagnosis: the templates are too long, nobody has time, and nobody reads them anyway.

These aren't wrong observations. But they're symptoms, not causes.

The actual problem is that somewhere along the way, the post-mortem stopped being a piece of communication and became a compliance artifact. Something to file, not to read. Something to finish, not to learn from. Post-mortems fail not because of bad templates or missing tooling — they fail because we forget that they're written by people, for people.

That distinction matters more than it might seem. It reframes almost every post-mortem problem worth solving.

Why writing falls flat

The timing is almost comically cruel. You've spent hours — maybe days — fighting a fire. You're exhausted, you're relieved it's over, and the first thing on your list is sitting down to write a thorough account of everything that just happened.

Here's what we've found: that discomfort is actually a feature. The best post-mortems are written while the incident still stings a bit. That's when you remember what people were actually thinking, not just what they did. When the context is sharp, the emotion is honest, and the writing has urgency to it. Wait a week, and you've got a cleaner story but a worse document. The details fade. The why gets smoothed over.

The blank page compounds this. A 10-section template doesn't solve the blank page problem — it makes it worse, because now the blank page comes with expectations attached. Engineers with deep technical expertise stare at these templates and produce two hollow sentences per section, not because they don't understand the incident, but because they genuinely don't know how to start.

Post-mortem writing is a real skill. It asks you to take a complex, chaotic technical event and explain it clearly to a mixed audience, while being honest about what went wrong. That's hard. Most engineers didn't sign up for long-form technical writing, and we tend not to invest in teaching it.

There's also a cultural failure mode worth naming directly: post-mortems written purely because process requires it. If the only reason you're writing is because someone told you to, it will probably read that way. No template fixes a culture that treats the post-mortem as a punishment for failure.

Why reading falls flat

Even a well-written post-mortem can fail if nobody actually reads it.

The most common failure is the log problem. "At 14:32, the alert fired. At 14:35, the on-call acknowledged." That's a log, not a story. And as humans, we're wired for stories, not logs.

A good post-mortem walks you through the incident as an experience: what the team knew at each point, what they tried, what worked, what didn't. A narrative arc gives readers something to follow, and it makes the learning stick. People remember stories. They don't remember timelines.

There's also the audience problem. Most post-mortems write for one reader and leave everyone else stranded. Engineers want technical depth. Leaders want business impact and an action plan. Adjacent teams want to know whether something similar could hit them. Good post-mortems acknowledge these are different needs — and meet them, even imperfectly.

The Swiss cheese model is useful here. The defenses in a system are like layers of Swiss cheese, each with holes in different places. An incident happens when the holes line up and a threat passes all the way through. When you write a post-mortem with that mental model, you stop looking for a single root cause and start looking for the system of failures that aligned. The 2024 CrowdStrike incident is a clean example: bad content, a missed validation step, deployments that should have been staged but weren't. Multiple layers, multiple holes. A post-mortem that only names one of those isn't doing its job.
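One way to keep that model in view while writing is to list each layer of defense and record whether it held. The sketch below is illustrative only: `Layer`, `incident_occurred`, and `contributing_factors` are hypothetical names, and the layer descriptions simply paraphrase the CrowdStrike example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    """One layer of defense and whether it stopped the threat."""
    name: str
    held: bool


def incident_occurred(layers: list[Layer]) -> bool:
    # A threat only gets through if every layer had a hole.
    return bool(layers) and all(not layer.held for layer in layers)


def contributing_factors(layers: list[Layer]) -> list[str]:
    # Every layer that failed belongs in the write-up, not just the first.
    return [layer.name for layer in layers if not layer.held]


layers = [
    Layer("content was correct", held=False),
    Layer("validation caught the bad content", held=False),
    Layer("deployment was staged", held=False),
]

for factor in contributing_factors(layers):
    print(f"Failed layer: {factor}")
```

If any single layer had held, `incident_occurred` would return `False`, which is why a write-up that names only one failed layer misses most of the story.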

What good actually looks like

Here's something practical, not just principles.

Write it quickly. The best post-mortems are written while the incident still stings. That's when the context is sharpest and the honesty is easiest.

Tell a story, not a log. Walk readers through what happened chronologically, through the lens of what your team knew and when. The timeline matters, but the experience matters more.
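The difference between a log and a story can be sketched as one extra field per timeline entry: what the team believed at that moment. This is a minimal illustration, not a prescribed format. The `Moment` type and both helpers are hypothetical, and the `belief` text is invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    time: str    # clock time, e.g. "14:32"
    event: str   # what happened
    belief: str  # what the team knew or believed at that point


def as_log(m: Moment) -> str:
    # A log line records only the event.
    return f"At {m.time}, {m.event}."


def as_story(m: Moment) -> str:
    # A story line adds the team's understanding at that moment.
    return f"{as_log(m)} At that point, {m.belief}."


first = Moment(
    time="14:32",
    event="the alert fired",
    belief="nobody yet knew whether customers were affected",  # invented detail
)

print(as_log(first))
print(as_story(first))
```

Filling in `belief` for each entry is the part that takes thought, and it is easiest while the incident is still fresh.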

Be specific. "The database was slow" tells nobody anything. "Replication lag hit 45 seconds because the primary ran out of connections" is something you can actually learn from. Specificity is what makes a post-mortem useful rather than cathartic.

Name people — but don't blame them. We name people in our own post-mortems, and we think you should too. The distinction isn't whether names appear — it's what you say about them. "Alex deployed the change" is context. "Alex should have known better" is blame. One describes what happened. The other assigns judgment. Good post-mortems do the former, never the latter.

Be honest about your dependencies. Third-party incidents are a particular trap. The temptation is to point at the vendor. But you chose the dependency. You agreed to the risk. Even when the underlying failure wasn't yours, the accountability stays with you.

Make actions concrete and owned. "Improve monitoring" is not an action. It's a wish. "Alex: add an alert for replication lag exceeding 30 seconds by end of sprint" is an action: it has a name, a verb, a measurable outcome, and a deadline. You can tell whether it's done. If your actions don't meet that bar, they'll drift out of the backlog and into nothing.
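That bar can be turned into a quick check. The sketch below is a rough heuristic under stated assumptions: the `Action` type, the `problems` helper, and the `VAGUE_VERBS` list are all hypothetical, and a short word list is no substitute for a reviewer asking "how would we know this is done?"

```python
from dataclasses import dataclass

# Assumed, deliberately short list of verbs that tend to signal a wish.
VAGUE_VERBS = {"improve", "enhance", "consider"}


@dataclass(frozen=True)
class Action:
    owner: str  # who is responsible
    task: str   # what they will do, starting with a verb
    due: str    # when it should be done


def problems(action: Action) -> list[str]:
    """Return the reasons this action does not meet the bar, if any."""
    issues = []
    if not action.owner.strip():
        issues.append("no owner")
    if not action.due.strip():
        issues.append("no deadline")
    words = action.task.split()
    first_word = words[0].lower() if words else ""
    if not first_word or first_word in VAGUE_VERBS:
        issues.append("task is not a concrete, checkable step")
    return issues


wish = Action(owner="", task="Improve monitoring", due="")
action = Action(
    owner="Alex",
    task="Add an alert for replication lag exceeding 30 seconds",
    due="end of sprint",
)

print(problems(wish))
print(problems(action))
```

The same fields map naturally onto a ticket in whatever backlog the team already uses, which keeps the action out of the document and in front of its owner.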

Include what went well. Incidents are stressful enough without every post-mortem reading as a catalogue of failure. Fast detection, clean communication, a well-timed escalation — these deserve acknowledgement. Positive reinforcement is part of how culture gets built.

On AI: what it should and shouldn't do

How AI fits into post-mortems is a question we think about a lot, given what we're building. The honest take is more nuanced than "use it" or "don't."

A lot of the value in writing a post-mortem comes from the act of writing it. You pull together the timeline. You look through Slack. You work out the sequence of events. That process forces you to understand exactly what happened — and that understanding is often where the most important learning lives.

If AI takes that process away from you, it also takes the learning.

The right model is AI handling the grunt work (summarising the incident channel, pulling key moments, generating a first draft) while humans own the analysis. What happened can be automated. Why it happened and what you're going to do about it shouldn't be. Automate those and you've automated away the most valuable part.

The framing we come back to: AI should get you past the blank page, not past the thinking.

The bar is lower than you think

The threshold for writing a post-mortem should be lower than you probably think it is.

Some of the most useful post-mortems we've seen are short. Three sections. Two paragraphs each. Written in 15 minutes. They don't need to be exhaustive — they just need to exist.

Small incidents, written up quickly and honestly, do two things. They surface patterns you'd otherwise miss, like the rate-limiting quirk that turns out to be a systemic gap in your architecture. And they build a habit. They make post-mortem writing feel like a normal part of how engineering works, not a punishment reserved for the worst incidents.

The culture shift isn't complicated, even if it takes time. Lower the bar for writing. Raise the bar for reading. Treat follow-up actions like real work — in your actual backlog, with real owners — not appendices to a document nobody opens after the debrief. And make people feel like the effort they put in actually matters, because it does.

Post-mortems don't fail because of bad templates. They fail because we treat them as documents instead of conversations — and conversations are what engineering teams are actually built on.

Want to go deeper? We recently ran a full webinar on this, with worked examples, a look at real post-mortems, and a Q&A. It's available to watch on demand.

See related articles

Who's on call? How Claude helped us calculate this 2,500x faster

A look at how on-call schedules work, and how we made rendering them 2,500× faster — through profiling, smarter algorithms, and some Claude.

Rory Bain

Rory Bain

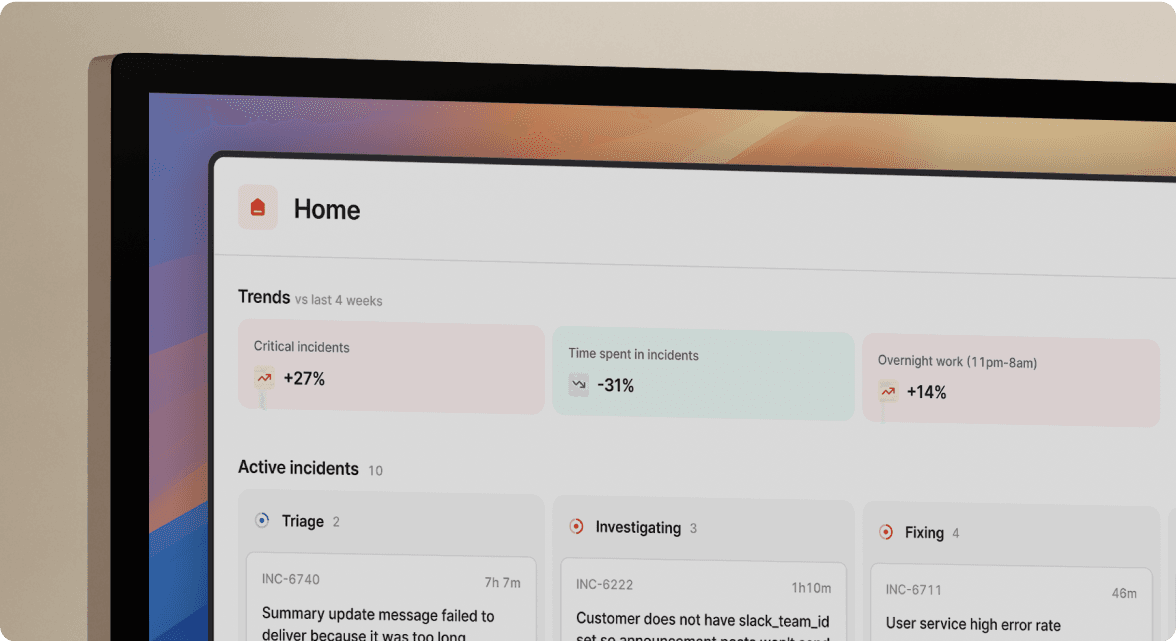

How it feels to run an incident with AI SRE

For the last 18 months, we've been building AI SRE, and one of the things we've learned is that UX matters more than you think. This week, I used AI SRE to run a real incident, and I walk you through it end-to-end.

Chris Evans

Chris Evans

What does using AI for post-mortems actually mean?

Everyone is using AI to help with post-mortems now. We've built AI into our own post-mortem experience, pulling your Slack thread, timeline, PRs, and custom fields together and giving your team a meaningful starting point in seconds. But "AI for post-mortems" can mean very different things.

incident.io

incident.ioSo good, you’ll break things on purpose

Ready for modern incident management? Book a call with one of our experts today.

We’d love to talk to you about

- All-in-one incident management

- Our unmatched speed of deployment

- Why we’re loved by users and easily adopted

- How we work for the whole organization