ITSM automation for DevOps: Runbooks, workflows, and AI-assisted root cause analysis

Updated March 27, 2026

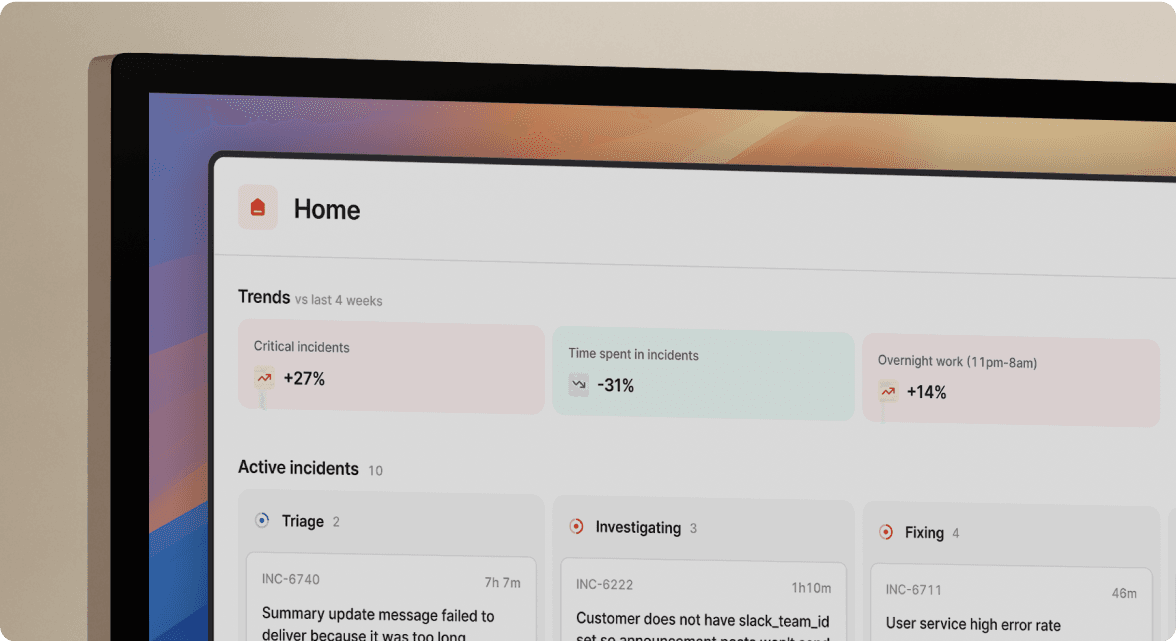

TL;DR: ITSM and DevOps converge when you automate the manual work that slows incident response. Workflow automation handles escalation and stakeholder communication. Runbook automation standardizes technical fixes so junior engineers execute them safely. AI-assisted root cause analysis accelerates diagnosis by connecting code changes, alerts, and past incidents, surfacing likely causes in seconds rather than after 30+ minutes of manual log triage.

The biggest bottleneck in your incident response isn't technical complexity. It's the time your on-call engineer spends juggling alerting tools, Slack, Jira, and a status page before even looking at a log. Coordination overhead keeps growing.

ITSM processes exist to ensure rigor and auditability. DevOps practices exist to ship fast and recover faster. During a 3 AM outage, these two goals don't have to fight each other, because automation makes them allies. This article breaks down how to automate the incident lifecycle, from alert to post-mortem, using runbooks, workflows, and AI-assisted root cause analysis (RCA) so your engineers focus on fixing systems instead of managing processes.

Why ITSM and DevOps must converge for modern reliability

Traditional ITSM enforces structure: documented change requests, manual Change Advisory Boards (CABs), stage-gated approvals, and post-incident reports filed in triplicate. These controls exist for good reason, because uncontrolled changes cause outages and auditors need paper trails.

DevOps pushes the opposite direction. Deployments happen dozens of times per day, and waiting for a weekly CAB meeting to approve a rollback during an outage is absurd. The friction shows up most painfully during incidents: your monitoring tool fires an alert, but ITSM says you need a ticket before you escalate. DevOps says fix it now, document later. The result? Engineers either skip documentation (failing audits) or slow down to fill out forms (increasing Mean Time To Resolution, or MTTR).

DevOps and ITIL aren't enemies. The answer isn't choosing one over the other. It's automating the administrative tasks that create friction: ticket creation, escalation, timeline capture, and stakeholder updates that happen automatically as a byproduct of resolving the issue in Slack.

Defining ITSM for DevOps and its core benefits

ITSM for DevOps (sometimes called high-velocity IT) adapts traditional service management principles for teams deploying code multiple times per day. ITIL's high-velocity IT guidance was built specifically for organizations using Lean, Agile, DevOps, CI/CD, SRE, and ChatOps practices.

In practice, this means three things:

- Faster MTTR through automated coordination: Instead of manually creating tickets and paging responders, alerts trigger structured incident workflows that pull in the right people and context automatically. Etsy reduced MTTR by 35% after switching to Slack-native workflows that eliminate coordination overhead.

- Built-in auditability without extra work: Every /inc command, role assignment, and status update gets captured in a timestamped timeline with no separate documentation step required.

- Proactive incident prevention: Structured incident data (which services break most, what root causes recur) feeds back into reliability investments, so you stop guessing where to spend engineering time and start proving it with data.

"incident.io allows us to focus on resolving the incident, not the admin around it. Being integrated with Slack makes it really easy, quick and comfortable to use for anyone in the company, with no prior training required." - Andrew J. on G2

Workflow automation: from alert to post-mortem

Workflow automation manages the people and process side of an incident:

- Who gets paged based on service ownership

- What channel gets created and with what context

- Which stakeholders get notified at each severity level

- What happens after resolution (post-mortem, follow-ups)

When done right, the entire lifecycle runs on autopilot while your engineers focus on the technical fix. Here's the automated sequence:

- Alert fires from Datadog or Prometheus indicating high API latency

- Incident channel auto-created in Slack (e.g., #inc-2847-api-latency-spike)

- On-call engineer paged based on affected service from the service catalog

- Incident commander assigned automatically based on severity

- Service context surfaced in the channel: owners, dependencies, recent deployments, runbooks

- Status page updated through configured workflows as severity and status change

- Timeline captured from every Slack message, role assignment, and escalation

- Post-mortem auto-drafted from captured timeline data after resolution

Without automation, each of these steps requires a human to remember and execute them in the right order, under pressure, at 3 AM. incident.io auto-creates incidents from alerts and runs configurable workflows that handle everything from channel creation to stakeholder notification.
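To make the sequence above concrete, here is a minimal sketch of the first few steps as an ordered pipeline. It is an illustration only, not incident.io's implementation: the `Incident` class, the step functions, and the on-call mapping are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Incident:
    """Hypothetical incident record; field names are assumptions for this sketch."""
    number: int
    title: str
    severity: str
    service: str
    channel: str = ""
    responders: list = field(default_factory=list)
    timeline: list = field(default_factory=list)

    def log(self, event: str) -> None:
        # Every step appends to the timeline, so the record builds itself.
        self.timeline.append((datetime.now(timezone.utc), event))


def create_channel(incident: Incident) -> None:
    # Channel naming scheme is an assumption modeled on the example above.
    slug = incident.title.lower().replace(" ", "-")
    incident.channel = f"#inc-{incident.number}-{slug}"
    incident.log(f"channel created: {incident.channel}")


def page_on_call(incident: Incident, on_call: dict) -> None:
    # Look up the responder by affected service (hypothetical mapping).
    responder = on_call[incident.service]
    incident.responders.append(responder)
    incident.log(f"paged on-call: {responder}")


def draft_post_mortem(incident: Incident) -> str:
    # The draft is assembled purely from events captured along the way.
    lines = [f"Post-mortem draft for {incident.channel}"]
    lines += [f"- {ts.isoformat()} {event}" for ts, event in incident.timeline]
    return "\n".join(lines)


incident = Incident(2847, "API latency spike", "high", "api-gateway")
create_channel(incident)
page_on_call(incident, {"api-gateway": "alice"})
print(draft_post_mortem(incident))
```

The design point is that each step logs itself, so the post-mortem draft falls out of the response rather than being written afterward.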

Automating incident coordination and communication

The first minutes of an incident set the tone for the entire response. If assembling the team takes significant time, you may lose valuable minutes of your response window before anyone even looks at a dashboard.

Automated incident creation eliminates this. When an alert meets predefined criteria (severity threshold, affected service, error rate), the platform creates the incident automatically with no human intervention. Predefined escalation paths route to the right team, and if the primary responder doesn't acknowledge, the alert escalates to secondary.
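As a rough sketch of that logic, the snippet below checks an alert against predefined criteria and walks an escalation path until someone acknowledges. The thresholds, service names, and the `Alert` shape are assumptions made for illustration, not values from any specific platform.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    service: str
    severity: int       # 1 = most severe (assumed convention)
    error_rate: float   # fraction of failing requests, 0.0 to 1.0


# Hypothetical thresholds; tune these to your own alerting policy.
MAX_SEVERITY_FOR_AUTO_CREATE = 2
MIN_ERROR_RATE = 0.05
WATCHED_SERVICES = {"api-gateway", "payments"}


def should_create_incident(alert: Alert) -> bool:
    """Return True only when the alert meets every predefined criterion."""
    return (
        alert.service in WATCHED_SERVICES
        and alert.severity <= MAX_SEVERITY_FOR_AUTO_CREATE
        and alert.error_rate >= MIN_ERROR_RATE
    )


def escalate(path: list, will_ack: set) -> list:
    """Page each level in order until someone acknowledges; return who was paged."""
    paged = []
    for responder in path:
        paged.append(responder)
        if responder in will_ack:
            break  # acknowledged, so escalation stops here
    return paged


alert = Alert(service="payments", severity=1, error_rate=0.2)
if should_create_incident(alert):
    # The primary never acknowledges in this simulation, so the secondary is paged.
    print(escalate(["primary", "secondary", "manager"], will_ack={"secondary"}))
```

In a real system the acknowledgment check would be a timeout on each page rather than a lookup in a set.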

Once the incident is underway, two tasks drain responder attention: status page updates and stakeholder communication. Workflow automation handles both by tying updates to incident severity and state changes. Workflows can auto-post summaries to a leadership channel, send email digests to customer success teams, and ping legal if the incident involves data exposure. These workflows run on private incidents too, which matters for security-sensitive events.
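One way to picture severity-driven communication is a routing table that maps each severity to its audiences. The sketch below assumes hypothetical severity names and destinations; your own workflow configuration will differ.

```python
# Hypothetical routing table: severity level -> notification destinations.
ROUTES = {
    "critical": ["status-page", "#leadership", "customer-success-email"],
    "major": ["status-page", "#leadership"],
    "minor": ["#eng-updates"],
}


def notification_targets(severity: str, data_exposure: bool = False) -> list:
    """Return who should be notified for this severity and incident type."""
    targets = list(ROUTES.get(severity, []))
    if data_exposure:
        # Data exposure pulls in legal regardless of severity.
        targets.append("legal")
    return targets


print(notification_targets("critical", data_exposure=True))
print(notification_targets("minor"))
```

Because the rules live in data rather than in a responder's head, a severity change during the incident simply re-evaluates the table.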

The key principle: every action during triage should be a single /inc command, not a multi-tool scavenger hunt.

How incident.io streamlines ITSM and DevOps workflows

Everything described above (automated incident creation, intelligent escalation, and timeline capture) requires a platform built for this workflow from the ground up. This is where incident.io helps.

Slack-native architecture means zero context-switching. Your team declares incidents with /inc declare, assigns roles with /inc assign, escalates with /inc escalate, and resolves with /inc resolve. You can also tag @incident in any channel and use natural language to draft updates, create follow-ups, or ask questions about the incident. Engineers don't learn a new interface because the interface is Slack.

AI SRE automates up to 80% of incident response. It triages alerts, analyzes root cause, spots the likely pull request behind the incident, and can generate a fix PR directly from Slack. It also suggests next steps based on similar past incidents, reducing cognitive load from "figure out everything" to "validate this suggestion."

Service Catalog integration provides instant context. When an incident fires, the affected service's catalog entry surfaces in the Slack channel: owners, dependencies, recent deployments, SLOs, and linked runbooks. No hunting through wikis.

Three trade-offs worth checking

incident.io requires Slack or Microsoft Teams. It's not a standalone web UI. If your team isn't already standardized on one of those platforms, it won't fit. That's a hard dependency, not a configuration option.

The platform is also opinionated by design. You get sensible defaults out of the box, which means most teams are operational in days rather than weeks. The trade-off: if your ITSM workflows are heavily customized (complex approval chains, non-standard escalation logic, rigid ticket taxonomy), expect some adaptation work. The workflow API handles a lot of that, but it's not zero effort.

Finally, on-call alerting is a paid add-on. The Pro plan starts at $25/user/month, and on-call scheduling and alerting adds $20/user/month on top of that. If you're evaluating total cost, use $45/user/month as your baseline for a fully loaded Pro seat. No hidden fees beyond that, but factor it in before running the ROI math.
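For a quick sanity check on the seat math, the sketch below multiplies the per-user figures quoted above by a team size. The 40-person team is a made-up example, and the function assumes every user needs the on-call add-on, which may not hold for your organization.

```python
# Per-user monthly prices in USD, taken from the figures quoted above.
PRO_PLAN_PER_USER = 25
ON_CALL_ADDON_PER_USER = 20


def monthly_cost(users: int, with_on_call: bool = True) -> int:
    """Monthly cost, assuming every user is on the same plan configuration."""
    per_user = PRO_PLAN_PER_USER + (ON_CALL_ADDON_PER_USER if with_on_call else 0)
    return users * per_user


team_size = 40  # hypothetical team size for illustration
print(monthly_cost(team_size))        # 40 * 45 = 1800
print(monthly_cost(team_size) * 12)   # 1800 * 12 = 21600
```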

If your team runs Slack or Teams, wants opinionated defaults over a blank-canvas tool, and needs on-call coverage, incident.io is a strong fit. If any of those three are blockers, better to know now.

The workflow API also lets you build custom automation that fits your specific ITSM requirements, from auto-creating Jira tickets to triggering diagnostic scripts. Favor reduced MTTR by 37% after implementing Slack-native workflows, and teams can reduce MTTR by up to 80% with AI-powered incident management.

Runbook automation for faster, consistent resolution

While workflow automation manages the people side, runbook automation handles the technical side. A runbook is a set of executable steps that diagnose or fix a specific issue: restarting a Kubernetes pod, rolling back a deployment, or scaling infrastructure.

The problem with traditional runbooks is that they live in static Confluence pages nobody reads under pressure. An engineer at 3 AM isn't going to carefully follow a lengthy document when the site is down. Automated runbooks solve this by turning static steps into executable, context-aware actions. Instead of reading "check the database connection pool size in Grafana," automated runbooks can help surface the current pool size alongside the incident context.

This is especially powerful for onboarding. Junior engineers can safely execute complex diagnostic steps on their first on-call rotation because the automation enforces the correct sequence and guardrails.
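A minimal sketch of the guardrail idea, assuming a hypothetical `Step` type and placeholder actions: steps run in a fixed order, and any step marked destructive halts the runbook unless it is explicitly approved.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    action: Callable[[], str]
    destructive: bool = False  # destructive steps need explicit approval


def run_runbook(steps: list, approve: Callable[[Step], bool]) -> list:
    """Run steps in order; stop at the first destructive step that is not approved."""
    log = []
    for step in steps:
        if step.destructive and not approve(step):
            log.append(f"SKIPPED {step.name}: approval required")
            break
        log.append(f"OK {step.name}: {step.action()}")
    return log


# Placeholder actions; real steps would call your infrastructure tooling.
steps = [
    Step("check pool size", lambda: "pool_size=20"),
    Step("restart pod", lambda: "restarted", destructive=True),
    Step("verify health", lambda: "healthy"),
]

# With approval withheld, the runbook stops before the restart.
print(run_runbook(steps, approve=lambda step: False))
```

The read-only diagnostic step always runs, which is what lets a junior engineer gather context safely before anyone signs off on the risky action.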

The ROI math compounds quickly across a team handling multiple incidents per month: time saved per incident, multiplied across your on-call rotation, adds up to meaningful engineering hours reclaimed for proactive reliability work.
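As a back-of-the-envelope version of that calculation, the sketch below multiplies incidents, minutes saved, and responders per incident. All three input values are hypothetical; substitute your own numbers.

```python
def hours_reclaimed(
    incidents_per_month: int,
    minutes_saved_per_incident: float,
    responders_per_incident: int = 1,
) -> float:
    """Engineering hours reclaimed per month from faster incident handling."""
    total_minutes = (
        incidents_per_month * minutes_saved_per_incident * responders_per_incident
    )
    return total_minutes / 60


# Hypothetical inputs: 15 incidents, 20 minutes saved each, 3 responders involved.
print(hours_reclaimed(15, 20, 3))  # 15 * 20 * 3 / 60 = 15.0
```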

For setup complexity and TCO across platforms, see our runbook automation tools guide.

Integrating runbooks with your service catalog and monitoring

A runbook in isolation is a recipe. A runbook connected to your service catalog is a recipe with the ingredients already on the counter. When a critical API fails, the service catalog provides owner contacts and runbook links needed for swift action.

| Step | Without catalog integration | With catalog integration |
|---|---|---|
| Identify service owner | Search Slack, check wiki, ask around | Auto-surfaced in incident channel |
| Find relevant runbook | Search Confluence, hope it's current | Linked directly from catalog entry |
| Check dependencies | Check architecture docs, ask service owners | Dependency map pulled automatically |
| Review recent deploys | Open GitHub, check CI/CD pipeline | Recent deployments listed in context |

Teams using service-level objectives tied to their catalog can also correlate SLO breaches with root causes more quickly, spotting patterns such as when a service's error budget consistently burns after certain team deployments.
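The catalog lookup in this section can be sketched as a simple keyed record that is rendered into the incident channel. The catalog structure, service names, and file paths below are invented for illustration and do not reflect any particular product's schema.

```python
# Hypothetical catalog; in practice this would come from your catalog tooling.
CATALOG = {
    "payments-api": {
        "owner": "team-payments",
        "dependencies": ["postgres-main", "auth-service"],
        "runbook": "runbooks/payments-api.md",
        "recent_deploys": ["2026-03-26 22:10 UTC: connection pool config change"],
    },
}


def incident_context(service: str) -> str:
    """Build the context message posted when an incident opens for a service."""
    entry = CATALOG.get(service)
    if entry is None:
        return f"No catalog entry for {service}; fall back to manual lookup."
    return "\n".join([
        f"Owner: {entry['owner']}",
        f"Dependencies: {', '.join(entry['dependencies'])}",
        f"Runbook: {entry['runbook']}",
        f"Recent deploys: {'; '.join(entry['recent_deploys'])}",
    ])


print(incident_context("payments-api"))
```

The explicit fallback matters: a missing catalog entry should be visible to responders rather than silently producing an empty context block.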

AI-assisted root cause analysis in ITSM

Traditional RCA relies on experienced engineers mentally correlating alert timing with recent changes, then working backward through logs and metrics. It works when the engineer has deep system knowledge and the incident matches a familiar pattern. It can struggle with novel failure modes, particularly during off-hours incidents handled by less experienced responders.

AI-assisted RCA changes this by synthesizing data sources that would take a human 30+ minutes to review manually: recent GitHub commits, CI/CD events, feature flag changes, infrastructure modifications, and similar past incidents. Instead of the engineer checking five dashboards, the AI surfaces the most likely contributing factors within seconds.

This goes beyond simple log correlation, because machine learning models can identify incident patterns that humans miss when data volume is too large or correlations span too many systems. The AI doesn't just say "CPU spiked." It connects the spike to a recent deployment that modified a connection pool configuration and flags a similar pattern from a past incident.
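To show the shape of the correlation step, here is a deliberately simple heuristic that ranks recent changes by how close they landed to the alert and whether they touched the alerting service. It is not machine learning and not how any vendor's AI works; the `Change` type, the two-hour window, and the scoring weights are all assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Change:
    description: str
    service: str
    at: datetime


def rank_suspects(changes, alert_service, alert_time, window=timedelta(hours=2)):
    """Rank changes that landed shortly before the alert, most suspicious first."""
    scored = []
    for change in changes:
        age = alert_time - change.at
        if age < timedelta(0) or age > window:
            continue  # ignore changes after the alert or outside the window
        score = 1.0 - age / window  # more recent changes score higher
        if change.service == alert_service:
            score += 1.0  # same-service changes get a boost
        scored.append((score, change))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [change for _, change in scored]


alert_time = datetime(2026, 3, 27, 3, 0)
changes = [
    Change("feature flag toggled", "billing", datetime(2026, 3, 27, 2, 55)),
    Change("connection pool config deploy", "api", datetime(2026, 3, 27, 2, 40)),
    Change("routine deploy", "api", datetime(2026, 3, 26, 22, 0)),
]

for suspect in rank_suspects(changes, "api", alert_time):
    print(suspect.description)
```

Here the connection pool deploy ranks first because it touched the alerting service, the flag change ranks second on recency alone, and the five-hour-old deploy is excluded. A production system would weigh far more signals, but the output has the same form: a ranked list for a human to validate.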

AI also accelerates the "5 Whys" methodology. When you ask "Why did the API return 500 errors?", the AI correlates symptoms with changes, deployment dates, and configuration updates to suggest a likely answer. Instead of spending 30+ minutes manually correlating logs, metrics, and recent changes, your SRE can validate or reject the AI’s suggestion in minutes.

Handling the limitations of AI RCA

AI-assisted RCA is powerful, but it has clear boundaries.

Data quality dependency: AI models are only as good as their training data. Traditional RCA can miss causes when data is incomplete, and AI amplifies this. If your telemetry has blind spots, the AI will too.

Novel "black swan" events: AI excels at pattern-matching against historical incidents but struggles with genuinely unprecedented failures because there's no historical data to match against.

Explainability gap: A study from MIT Lincoln Lab found that half the papers claiming human-interpretable AI methods didn't validate them with humans. Your incident commander needs to understand why the AI reached its conclusion.

According to RTInsights' Revisiting Root Cause Analysis in the Age of AI: "Human expertise is still essential for validating results and implementing corrective actions." AI augments human judgment. It does not replace the incident commander.

Maintaining ITSM compliance at DevOps speed

Compliance and speed often feel like opposing forces in traditional toolchains. Updating an audit log typically means opening a separate system. Writing a post-mortem means reconstructing the timeline from memory days later.

Automation dissolves this tradeoff. When key incident actions (declaration, escalation, role assignment, status update, resolution) happen through structured commands in Slack, the platform captures a complete, timestamped record automatically. There's no extra documentation step because the documentation IS the incident response.

| Compliance need | Manual approach | Automated approach |
|---|---|---|
| Incident timeline | Reconstruct from memory days later | Auto-captured from Slack and API events |
| Role assignment audit | Reconstruct who was involved | Timestamped /inc assign records |
| Escalation log | "I think I paged the DBA around 2:15 AM" | Exact timestamps with acknowledgment |
| Post-mortem completion | Often delayed or skipped entirely | Auto-drafted, completed within 24 hours |

This matters for SOC 2, ISO 27001, and GDPR compliance. Audit logs are key detective controls within these frameworks, answering who did what, when, and what the outcome was for every interaction.

Automated timeline capture for audit-ready documentation

The core of audit-ready documentation is a timeline that nobody had to manually create. During an incident, automated capture records:

- Structured commands: Every /inc declare, /inc escalate, /inc assign, and /inc resolve with exact timestamps

- Key Slack messages: Messages pinned or flagged during the incident

- Role changes: Who was assigned as incident commander, communications lead, or responder

- Status page updates: When external status changed and what was communicated

- API events: Deployment triggers, alert state changes, and integration actions

The Google SRE Workbook explains that uniform incident response cuts cognitive load and makes it easier to write automation that works across services. incident.io captures decisions as they happen and uses that data in its post-incident workflow to generate an 80% complete post-mortem by pulling structured timeline data, role assignments, and escalation records captured automatically during the incident.
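The capture mechanism can be pictured as an append-only log where each event carries a timestamp, an actor, and a detail. The `Timeline` class and its field names below are a hypothetical sketch, not incident.io's data model.

```python
import json
from datetime import datetime, timezone


class Timeline:
    """Append-only event log; entries are never edited after capture."""

    def __init__(self):
        self._events = []

    def record(self, kind, actor, detail, at=None):
        # `at` must be timezone-aware; it defaults to the current UTC time.
        self._events.append({
            "at": at or datetime.now(timezone.utc),
            "kind": kind,
            "actor": actor,
            "detail": detail,
        })

    def export(self):
        """Return the timeline as JSON, ordered by timestamp, for audit review."""
        ordered = sorted(self._events, key=lambda event: event["at"])
        return json.dumps(
            [{**event, "at": event["at"].isoformat()} for event in ordered],
            indent=2,
        )


timeline = Timeline()
timeline.record("command", "alice", "/inc declare",
                at=datetime(2026, 3, 27, 2, 10, tzinfo=timezone.utc))
timeline.record("command", "alice", "/inc escalate",
                at=datetime(2026, 3, 27, 2, 15, tzinfo=timezone.utc))
print(timeline.export())
```

Exporting in timestamp order means events that arrive late from an integration still appear in the right place in the audit record.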

"Clearly built by a team of people who have been through the panic and despair of a poorly run incident. They have taken all those learnings to heart and made something that automates, clarifies and enables your teams to concentrate on fixing, communicating and, most importantly, learning from the incidents that happen." - Rob L. on G2

Ready to see this in action? Schedule a demo to see how AI SRE handles triage, root cause analysis, and post-mortem generation.

Key terms glossary

ITSM for DevOps (high-velocity IT): An adaptation of traditional IT service management principles for high-velocity DevOps environments, where ITSM controls like change management and incident documentation are automated rather than manual.

Runbook automation: Converting static incident response documentation into executable, context-aware technical steps that can be triggered automatically or run with minimal manual intervention.

AI RCA (AI-assisted root cause analysis): Using machine learning to correlate alert timing, code changes, infrastructure events, and historical incident data to identify the most likely contributing factors to an incident.

Service catalog: A structured database of all services in your organization, including ownership, dependencies, SLOs, runbooks, and recent deployment history, used to surface immediate context during incidents.

MTTR (Mean Time To Resolution): Average time from alert detection to verified incident resolution, including coordination overhead, investigation, remediation, and confirmation.
