Best Rootly alternatives for incident management in 2026: Complete comparison guide

Updated April 3, 2026

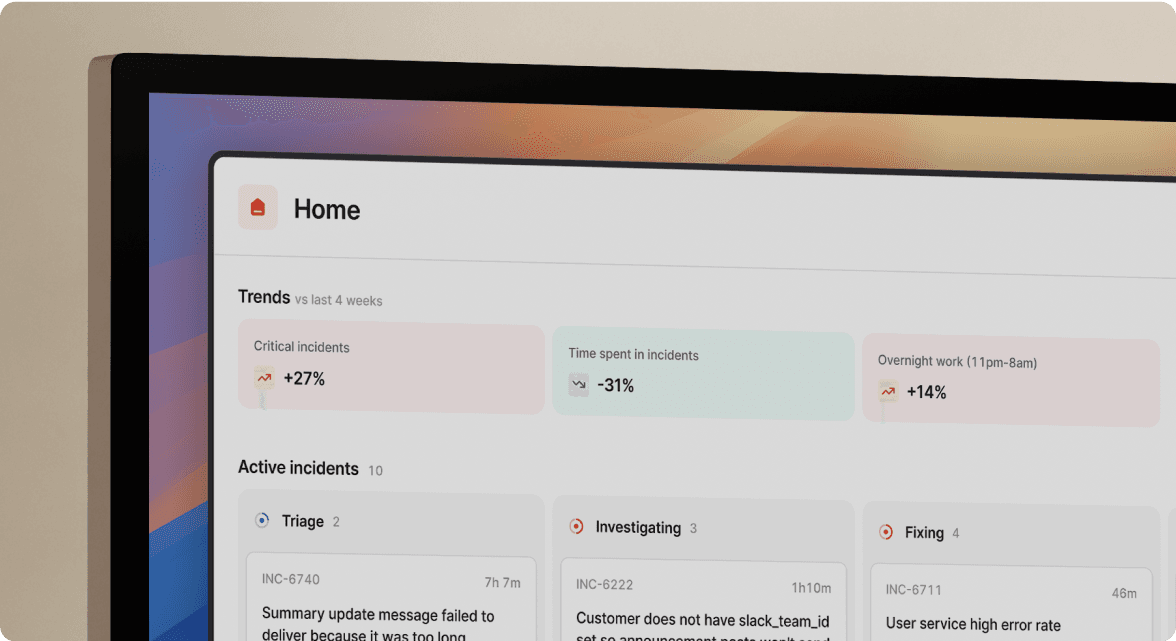

TL;DR: If you're evaluating Rootly alternatives, the answer depends on what's slowing your MTTR. incident.io was built to eliminate the coordination tax with Slack-native automation, including an AI SRE that automates up to 80% of the manual tasks in incident response, such as auto-drafting post-mortems directly in Slack. PagerDuty wins on complex enterprise alerting but costs more once add-ons stack up. Opsgenie hits end of life in April 2027, according to Atlassian. For mid-market SRE teams (50-500 engineers) handling 10+ incidents monthly, our unified platform and transparent pricing ($45/user/month all-in on Pro) help teams reduce coordination overhead and cut MTTR.

Teams spend 10-15 minutes per incident assembling people and finding context. Across dozens of incidents per month, this coordination overhead adds up to hours of lost engineering time before troubleshooting even begins. The biggest bottleneck in incident response isn't technical complexity. It's the coordination tax: juggling PagerDuty, Datadog, Slack, Jira, and Confluence while a P1 burns.

If you're evaluating Rootly or planning a migration, you need a platform that scales with your team without compounding software costs or adding administrative toil. This guide compares the top 8 Rootly alternatives based on Slack-native workflows, AI automation, and transparent pricing so you can choose the right tool to reduce your MTTR.

Rootly's context: why SREs explore better incident tools

Understanding where Rootly fits and where it falls short is the starting point for any honest evaluation.

Rootly's key incident capabilities

Rootly is an incident management platform that operates natively within Slack, offering on-call scheduling, workflow automation, and AI capabilities across various pricing tiers. Enterprise pricing requires direct negotiation with their sales team: no published rates, no self-serve signup.

Rootly's core value is automating the manual work associated with incidents:

- Incident channel management: Rootly is designed to operate within Slack, helping coordinate incident response communications.

- Integration capabilities: The platform supports integrations with tools like Jira for incident follow-up and task management.

- Workflow features: Rootly automates specific incident workflows including escalation routing, role assignment, and status update broadcasting across stakeholder channels.

- Post-mortem drafting: Rootly's AI-native features can automatically draft post-mortems with contextual data captured during the incident lifecycle.

Triggers for re-evaluating incident tools

SRE leads rarely switch platforms without a forcing function. The most common triggers for evaluating Rootly alternatives are:

- Scaling past basic workflows: Teams growing beyond 30 on-call engineers find workflow limits create friction rather than reducing it.

- Insufficient AI root cause identification: SREs need more than log correlation. They need a system that identifies the specific code change or service dependency behind an incident and suggests a fix.

- Pricing surprises at renewal: Per-seat costs escalate quickly as team size grows, and on-call add-ons that weren't visible in the initial quote become significant line items.

- Support responsiveness gaps: When production is down and your tooling breaks, a 48-hour support ticket response is unacceptable.

- Microsoft Teams requirements: Organizations consolidating on Teams need a platform that treats it as a first-class interface, not an afterthought.

See the PagerDuty alternatives guide for 2026 for a broader look at what's driving platform migrations across the SRE tooling landscape.

Teams needing automated post-mortems

Teams handling frequent incidents face a common challenge: the post-mortem backlog. Manual timeline reconstruction is time-consuming and resource-intensive. As incident volumes increase, teams can spend significant hours on documentation work before addressing action items. The platforms that solve this problem auto-capture every role assignment, status update, and call transcript in real time, so the post-mortem is substantially drafted before the incident channel closes. This is the standard to evaluate against, not just whether a tool generates a post-mortem template. The post-mortem problem is well documented: without automatic timeline capture, post-mortems rely on imperfect memory and Slack scroll-back.

Key factors for incident tool selection

Before comparing platforms, the evaluation criteria need to be explicit. Tools that look identical on a feature checklist often diverge significantly during a live P1.

Prioritizing incident management features

The must-have capabilities for a modern incident management platform, ranked by impact on MTTR:

- Slack-native architecture (not just a Slack integration): The entire incident lifecycle runs in Slack via slash commands, not in a web UI that sends Slack notifications.

- Automatic timeline capture: Every message, role assignment, call transcript, and status update is captured without a designated note-taker.

- Reliable bidirectional integrations: Datadog, Prometheus, and Jira integrations that sync both ways without custom scripting.

- AI root cause identification with measurable accuracy: Documented precision metrics backed by real incidents, for example, the percentage of correctly identified root causes across incident types, not "AI-powered insights" buzzwords. If a vendor can't give you that number, it's not measurable.

- Transparent pricing including on-call: The total cost per user, including on-call scheduling, must be visible upfront.
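To make "measurable accuracy" concrete, here is a minimal sketch of how a team might compute the share of correctly identified root causes per incident type from its own post-mortem reviews. The record format and the sample data are hypothetical, and no vendor publishes its metric in exactly this form.

```python
from collections import defaultdict


def root_cause_accuracy(records):
    """Return {incident_type: fraction of AI suggestions confirmed correct}."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for incident_type, ai_was_correct in records:
        totals[incident_type] += 1
        if ai_was_correct:
            correct[incident_type] += 1
    return {t: correct[t] / totals[t] for t in totals}


# Hypothetical review data: (incident type, did the post-mortem confirm the AI's suggestion?)
sample = [
    ("deploy", True), ("deploy", True), ("deploy", False),
    ("config", True),
    ("capacity", False), ("capacity", True),
]

accuracy = root_cause_accuracy(sample)
for incident_type, score in sorted(accuracy.items()):
    print(f"{incident_type}: {score:.0%}")
```

Breaking the number out by incident type matters because a tool can look accurate overall while being weak on the incident types your team hits most often.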

The runbook automation tools guide for 2026 covers how automated workflows and AI-assisted root cause analysis combine to reduce manual toil at every stage of incident response.

The five dimensions we tested

This comparison evaluates each platform across five dimensions: Slack-native workflow depth, AI automation accuracy, pricing transparency (including all add-ons at typical team sizes), integration reliability with the standard SRE stack, and real-world MTTR impact based on published customer case studies and verified G2 reviews. Where pricing is not publicly available, that absence is noted explicitly because it is itself a data point.

Every claim is backed by hands-on testing, verified customer case studies, and G2 reviews, so you can identify which tool reduces MTTR fastest.

Best fit for your incident volume

| Team size | Incident volume | Considerations |
|---|---|---|
| 5-20 engineers | 1-5/month | Start with free tiers or entry-level plans to evaluate fit |
| 20-100 engineers | 5-20/month | Assess scaling needs and integration requirements |
| 100-500 engineers | 20-50/month | Prioritize platforms with proven enterprise integrations |
| 500+ engineers | 50+/month | Evaluate enterprise support and multi-team coordination features |

Teams wanting opinionated defaults that work out of the box will spend less time on initial configuration with incident.io or Rootly than with PagerDuty or xMatters, which offer more flexibility but require more setup before the first incident.

Teams typically deploy incident.io or Rootly in days, compared to weeks for PagerDuty or xMatters. incident.io customers are operational in 3 days on average. That speed comes from opinionated defaults: pre-built Slack workflows, auto-created incident channels, and ready-to-use post-mortem templates. You're not configuring from scratch; you're adjusting defaults that already match how most SRE teams work.

Quick comparison: Rootly vs. top 8 alternatives

SRE workflow capabilities

| Feature | Rootly | incident.io | PagerDuty | FireHydrant |
|---|---|---|---|---|
| Slack-native UI | Slack integration available | Yes (full lifecycle in Slack) | No (web-first, Slack bot) | Web-first with Slack integration |
| AI post-mortems | AI assistance available | AI-assisted post-mortems available | Included on select plans | AI assistance available |
| Ease of use | Designed for quick deployment | Slack-first interface | Complex, steep learning curve | Web-based setup |
| Scalability | Serves teams of varying sizes | Strong up to 5,000 engineers | Designed for enterprise scale | Serves mid-size to large teams |

PagerDuty's Slack integration lets engineers acknowledge and resolve alerts without leaving chat, but full incident workflows (updating fields, running status checks, managing escalations) require switching to the web UI. Our architecture means the incident itself lives in Slack from first alert to resolved status, which is a meaningful distinction when engineers are context-switching under pressure.

Cost breakdown: Rootly alternatives

Pricing transparency is one of the most meaningful differentiators in this category. Many platforms advertise a base rate that excludes the on-call scheduling feature most SRE teams consider non-negotiable.

- incident.io Pro plan: $25/user/month for incident response plus $20/user/month for on-call, totaling $45/user/month. Status pages are bundled into all paid plans with no separate subscription required.

- Rootly: Starting at $20/user/month covering incident response, on-call, and AI SRE combined. Enterprise Scale tier requires direct negotiation.

- PagerDuty Professional: $21/user/month on annual billing. Business is listed at approximately $41/user/month. Critical features like AIOps ($699/month) and post-incident review tooling are separate add-ons that significantly increase the total cost. See the pricing ROI comparison for a detailed TCO breakdown.

- Opsgenie: End of sale as of June 4, 2025. No longer a viable purchase option.

- FireHydrant: Custom pricing. Contact FireHydrant for details on feature availability.

- Splunk On-Call: Bundled with Splunk Observability Cloud. Pricing is custom and requires contacting Splunk sales.

Always calculate your true cost by multiplying (base seat price + on-call add-on) by team size by 12 months. Enterprise platforms with multiple add-ons can result in significant annual costs for mid-sized teams before accounting for post-incident review capabilities.

Integration with your SRE stack

Bidirectional sync with your monitoring and ticketing stack separates tools that reduce toil from tools that create it. At minimum, evaluate whether a platform supports:

- Datadog and Prometheus alerts firing directly into incident channels without manual copy-paste

- Jira or Linear follow-up task creation triggered automatically at incident resolution

- GitHub or deployment correlation that surfaces recent deploys as potential root causes when an alert fires

incident.io integrates natively with the core monitoring and ticketing stack, including Datadog, Prometheus, New Relic, Jira, Linear, GitHub, and Confluence, with setup documented in the product. incident.io also integrates with Causely for root cause correlation when external data sources connect to the incident channel in real time.

Alternative #1: incident.io for auto post-mortems

incident.io is the Slack-native incident management platform that handles the complete incident lifecycle, from alert through post-mortem, without requiring engineers to open a browser tab. For teams whose biggest MTTR bottleneck is coordination overhead rather than technical complexity, it's the strongest alternative to Rootly in 2026.

Key features: AI & integrations

incident.io's AI SRE assistant operates as an always-on teammate that autonomously investigates incidents, correlates data across your technology stack, and generates environment-specific fix suggestions. It automates up to 80% of incident response, reducing the time engineers spend on routine tasks before they can focus on resolution.

Key capabilities include:

- Scribe: Real-time transcription of incident calls via Google Meet or Zoom, with automatic extraction of key decisions and root cause mentions from spoken conversation.

- Fix PR generation: incident.io's AI SRE can open a pull request directly in Slack with a suggested fix based on past incident patterns, rather than just flagging correlated logs.

- Service Catalog context: When an incident channel is created, we surface the affected service's owners, dependencies, recent deployments, and relevant runbooks automatically.

- Alert Insights: incident.io's Alert Insights feature groups related alerts to reduce noise and surfaces the signal most likely to lead to root cause identification.

"Frictionless configuration and onboarding (so easy that our first incident was created/led by a colleague even before the 'official rollout' all by themselves!)... Ai features that provide actual value during and after the incidents." - Luis S. on G2

Reduce context-switching in Slack

incident.io's architecture means your team rarely needs to leave Slack. The workflow is: Datadog alert fires, incident.io creates #inc-2847-api-latency-spike, pages the on-call engineer, pulls in the service owner based on the Service Catalog, and starts recording the timeline automatically.

From that point, everything runs on /inc commands: /inc escalate, /inc assign @engineer to set the incident commander, /inc severity critical to update severity, and /inc resolve to close with a summary.

Pricing for growing SRE teams

The incident.io Pro plan costs $45/user/month with on-call included ($25 base plus $20 on-call add-on on annual billing). No separate status page subscription is required. No separate post-mortem tool. No AI features gated behind an enterprise tier.

For a 30-person on-call team on the Pro plan, the annual cost is $16,200. That covers on-call scheduling, incident response coordination, AI SRE, status pages, and post-mortem generation in one platform. Contrast that with assembling PagerDuty Business ($41/user/month) plus status page add-ons plus a post-mortem tool, where the same 30-person team can cross $20,000+ annually before touching AI features.

This consolidates multiple tools into one cost and reduces vendor sprawl.

incident.io's MTTR gains vs. Rootly

The Favor case study is the most concrete public evidence of our MTTR impact. After adopting incident.io, Favor's team saw MTTR drop by 37%. They weren't experiencing more problems. They were catching small issues before they became major outages, which is the outcome a mature SRE practice is built around.

"1-click post-mortem reports - this is a killer feature, time saving, that helps a lot to have relevant conversations around incidents (instead of spending time curating a timeline)." - Adrian M. on G2

Rootly's AI accuracy for SREs

Rootly's AI drafts post-mortems and correlates alerts, covering the investigation and documentation sides of incident response. Root cause identification accuracy varies. Where incident.io goes further is in autonomous remediation: the AI SRE identifies the specific root cause and generates a fix PR that engineers can review and merge directly from Slack, moving from diagnosis to deployed solution in one flow. For teams where the gap between "we know what's wrong" and "we've pushed the fix" costs meaningful minutes per incident, that distinction scales significantly across 15+ incidents a month.

See incident.io's AI SRE in action

If your team is spending more time coordinating than fixing, the AI SRE assistant is worth a close look. It handles the first 80% of incident response (triaging alerts, assembling context, suggesting root causes, and drafting post-mortems) so your engineers can focus on the actual fix.

Alternative #2: PagerDuty for incident escalation & paging

PagerDuty is the established incumbent in incident alerting and on-call scheduling. It's battle-tested, integrates with 700+ monitoring tools, and handles complex escalation policies at enterprise scale. It's also the most expensive option in the category and the most complex to operate.

PagerDuty's core incident capabilities

PagerDuty's core strengths are:

- Multi-channel alerting: Delivers notifications through multiple channels to reach on-call responders when incidents occur.

- Complex escalation policies: Tiered escalation rules, rotation schedules, and override management at a level of granularity that other platforms don't match.

- Noise reduction via AIOps: Alert grouping and deduplication that filters low-value notifications before they page an on-call engineer.

- 700+ integrations: The broadest integration catalog in the category, covering legacy monitoring systems that modern tools don't always support.

Scaling PagerDuty with your SRE team

PagerDuty scales well in terms of raw capacity, but it creates significant operational overhead as teams grow. The UI is complex enough that new on-call engineers typically need dedicated onboarding before their first shift. Post-mortems require a separate Post-Incident Review add-on, and critical features like AIOps ($699/month) and PagerDuty Advance ($415/month) sit behind additional paywalls on top of the per-seat cost.

Where PagerDuty wins vs. Rootly

PagerDuty excels at alerting customization. If your team needs multi-layer escalation policies with time-of-day routing, service-specific overrides, and legacy monitoring system support, PagerDuty has deep alerting capabilities that may better match your requirements. For large enterprises with existing PagerDuty investment and complex on-call rotation management, migration friction is real and switching costs are high.

Rootly's win: Slack-native incident workflow

Rootly and incident.io both offer a meaningfully better ChatOps experience than PagerDuty. When an incident fires, PagerDuty sends a notification to Slack with a link back to PagerDuty's web UI. The response itself happens in the browser, not in Slack. Rootly and incident.io keep the entire response in Slack, which is where engineers already coordinate. For teams measuring MTTR from alert to resolution, removing that browser context-switch matters at scale.

Alternative #3: Opsgenie for reliable escalations

Opsgenie no longer accepts new purchases or trials. Atlassian confirmed that Opsgenie reached end of sale on June 4, 2025, with end of support planned for April 2027. Every organization currently on Opsgenie is now in migration planning mode, not evaluation mode.

Opsgenie's incident management tools

Opsgenie was a popular incident management platform with integration capabilities for teams in the Atlassian ecosystem. As the platform is now sunset, the focus for existing users is on migration planning rather than feature evaluation.

Opsgenie investment: cost & scale

Opsgenie's pricing is no longer relevant as an investment decision. The practical question is where to migrate, not whether. We provide dedicated Opsgenie migration tooling and a webinar covering the migration path for teams evaluating options before the April 2027 deadline.

Opsgenie's key advantages over Rootly

Opsgenie offered native Jira integration as part of the Atlassian ecosystem, which some teams found valuable for Jira-centric incident workflows. While Rootly also integrates with Jira, the specific comparative advantages at the alerting layer between the two platforms are difficult to establish definitively, as they approached integration differently: Opsgenie primarily at the alerting/orchestration layer and Rootly at the incident management layer.

Rootly's impact on SRE workflow

Rootly offers a more modern Slack experience than Opsgenie ever did. Opsgenie's Slack integration was fundamentally notification-based: it paged you and sent you to a web dashboard. Rootly and incident.io built the response workflow into Slack itself, which means engineers can acknowledge, escalate, and coordinate without opening a browser. For Opsgenie customers evaluating replacements, this Slack-native workflow is a genuine upgrade.

Alternative #4: FireHydrant for auto post-mortems

FireHydrant is a peer competitor to Rootly in the modern incident management space. It targets mid-market engineering teams and offers strong runbook automation and service catalog capabilities alongside its incident response workflow.

FireHydrant's incident automation for SREs

FireHydrant offers both a web interface and Slack integration with strong feature parity, though complex workflows like customizing runbooks or viewing detailed analytics require navigating to the web console. For the core incident workflow, Slack commands allow acknowledgment, escalation, and status updates without leaving chat. Key automation capabilities include:

- Dynamic runbook automation: Runbooks trigger manually or automatically based on incident details, with priority and type-based customization that adjusts response procedures based on severity and service impact.

- FireHydrant AI: The platform includes AI-powered features designed to streamline incident response workflows and post-incident analysis.

- Automated status page updates: Public and internal status pages update based on incident status changes without manual intervention.

FireHydrant TCO for SRE teams

FireHydrant's pricing requires a sales conversation for specific quotes beyond the base offering. On-call scheduling is included in the base offering, which avoids the add-on surprise that catches teams on other platforms. The AI features that make post-mortem automation compelling are available across tiers rather than gated at enterprise.

Superior incident workflow with FireHydrant

FireHydrant's service catalog is a genuine strength for teams managing complex service ownership structures. The platform's service catalog maintains clear ownership information and maps service dependencies across your entire stack. For teams with complex service ownership structures, centralized service information cuts the time spent identifying and coordinating with the right service owners during incidents, so engineers spend less time hunting for context and more time fixing the problem.

Rootly's edge in incident resolution

Rootly's pre-built workflows and opinionated defaults help teams new to structured incident management reach their first successfully managed incident quickly. FireHydrant's more flexible service catalog and runbook configuration require more initial setup time, which can slow time-to-value for teams without a dedicated platform engineer to own the implementation.

Alternative #5: Splunk on-call automation

Splunk On-Call makes most sense for teams already running Splunk Observability Cloud. For everyone else, it's not the right starting point for a 2026 platform evaluation. The platform bundles incident response into Splunk's broader observability stack, which makes native alert routing seamless for Splunk-committed organizations but creates unnecessary complexity for cloud-native teams building from scratch. Since Splunk's acquisition, some teams report that feature development has slowed relative to modern alternatives. Standalone pricing information is not publicly available.

Alternative #6: Blameless for automated post-mortems

Blameless serves teams looking for incident management combined with reliability engineering practices. Pricing starts at $20/user/month for the first 50 users, making it accessible for mid-market teams, with enterprise pricing requiring a sales conversation. Rootly's opinionated defaults get incident response running in days, not weeks. Blameless positions itself as a platform for organizations building comprehensive reliability programs that require deeper configuration and longer ramp time.

Alternative #7: xMatters for audit-ready incidents

xMatters serves large enterprise organizations with complex ITSM requirements, audit mandates, and multi-system notification workflows. The platform is built for enterprise environments requiring deep audit trails and compliance documentation. For cloud-native SaaS teams, Rootly's Slack-native workflow requires no training for engineers already fluent in slash commands and typically deploys faster than traditional enterprise ITSM-oriented platforms. See the ITSM compliance guidance for DevOps teams for how modern platforms meet SOC 2 and GDPR requirements without heavyweight ITSM implementations.

Alternative #8: Atlassian Statuspage + Jira Service Management

Jira Service Management (JSM) has evolved from its ticketing system origins into a platform with built-in scheduling, escalation rules, and on-call accountability. Statuspage handles external customer communication, and teams already on the Atlassian stack may benefit from reduced manual handoffs between the two tools. JSM can support organizations where incidents need to flow through change and problem management workflows alongside real-time response. For teams where audit trails and change records need to live in a unified system, JSM's Atlassian ecosystem integration delivers concrete value: change requests, incident records, and problem tickets stay linked in one place, which simplifies SOC 2 audits and reduces the manual work of cross-referencing Jira issues with incident timelines. That said, JSM's ticket-based approach can introduce friction during live P1 incidents compared to Slack-native tools like Rootly or incident.io, where the workflow is: alert fires, channel created, responders paged, timeline captured, and post-mortem drafted automatically.

Reduce MTTR: pick your best incident tool

Answering these four questions helps you identify which tool reduces MTTR fastest for your specific setup: How important is Slack-native workflow? How complex are your escalation requirements? What is your actual total cost per user including on-call? And how much training overhead can your team absorb?

On-call workflow in Slack (15-100 SREs)

For teams in the 15-100 SRE range where Slack is the central nervous system, incident.io fits cleanly into how your team already works. The entire incident lifecycle runs in Slack with no browser context-switch required, covering declaration through post-mortem in one channel using /inc commands.

For teams in this range also evaluating Rootly, the deciding factor is often support velocity and AI depth. See Torq's platform consolidation for what drove the decision to consolidate on incident.io over staying on Rootly.

Managing high-impact incidents at scale

For organizations with 500+ engineers, complex multi-team escalation policies, and deep legacy monitoring integration requirements, PagerDuty Business or Enterprise is the safer choice. PagerDuty's alert routing sophistication handles the edge cases that modern platforms are still building toward. The trade-off is cost (plan for $41+/user/month base before add-ons) and operational complexity that requires dedicated configuration ownership.

Transparent pricing for SREs

When calculating TCO, use this formula: (base seat price + on-call add-on) x team size x 12 months, and add any integration or compliance add-ons the vendor prices separately.

For a 50-person on-call team over 12 months:

- incident.io Pro plan: $45 x 50 x 12 = $27,000/year (includes status pages, AI post-mortems, and on-call)

- PagerDuty Business: Publicly listed at approximately $41/user/month base, with additional costs for AIOps, status page add-ons, and post-incident review capabilities that can significantly increase the total annual cost

The gap narrows quickly once PagerDuty's required add-ons are factored in. For teams valuing a single consolidated platform over assembling best-of-breed tools, incident.io's bundled pricing delivers cleaner budgeting with no year-end surprises at renewal.
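The TCO formula above can be sketched in a few lines of Python. The per-seat figures are the list prices quoted in this guide. Treating PagerDuty's on-call as part of the seat price and modeling AIOps as a flat $699/month add-on are simplifying assumptions, so substitute the numbers from your own quote.

```python
def annual_cost(seat_price, on_call_addon, team_size, flat_monthly_addons=0.0):
    """(base seat price + on-call add-on) x team size x 12, plus flat monthly add-ons."""
    per_seat_total = (seat_price + on_call_addon) * team_size * 12
    flat_total = flat_monthly_addons * 12
    return per_seat_total + flat_total


TEAM_SIZE = 50

# incident.io Pro: $25 base + $20 on-call per user per month.
incident_io = annual_cost(25, 20, TEAM_SIZE)

# PagerDuty Business at roughly $41/user/month (assumption: no separate on-call add-on).
pagerduty_base = annual_cost(41, 0, TEAM_SIZE)

# Same plan with AIOps modeled as a flat $699/month add-on (assumption for illustration).
pagerduty_with_aiops = annual_cost(41, 0, TEAM_SIZE, flat_monthly_addons=699)

print(f"incident.io Pro:          ${incident_io:,.0f}")
print(f"PagerDuty Business:       ${pagerduty_base:,.0f}")
print(f"PagerDuty Business+AIOps: ${pagerduty_with_aiops:,.0f}")
```

Keeping flat monthly add-ons separate from per-seat charges makes it easier to see which line items grow with headcount and which do not.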

Top Rootly alternatives for Microsoft Teams

Teams running Microsoft Teams face a meaningful filter in this evaluation. incident.io provides full Microsoft Teams native support on the Pro plan, meaning the same slash-command workflow, automatic timeline capture, and AI SRE features that run in Slack operate natively in Teams. PagerDuty offers a Teams integration, but like its Slack integration, it's web-first with Teams notifications rather than a Teams-native experience.

AI-driven root cause discovery

AI root cause identification is moving from a differentiator to a baseline expectation in 2026. The platforms worth evaluating on this dimension are incident.io (AI SRE with autonomous investigation and fix PR generation), Rootly (AI post-mortem drafting and alert correlation), and FireHydrant (AI summaries and retrospective drafts). The distinction that matters is whether the AI identifies a specific causal event (the deploy at 14:32, the config change that increased connection pool size) or generates a summary of what happened.

Your 2026 guide to switching from Rootly

Migrating incident management platforms while incidents keep happening requires a parallel-run period where both systems operate simultaneously until you're confident the new platform handles real incidents cleanly.

Kickstart your 30-day incident POC

Start with a pilot group of engineers running the new platform in parallel with Rootly. During the POC:

- Connect your primary monitoring integrations (Datadog or Prometheus) to fire alerts into the new platform.

- Configure 2-3 on-call schedules mirroring your current Rootly setup.

- Run several real incidents through the new platform and measure time-to-coordination against your Rootly baseline.

- Track post-mortem completion time to validate the auto-draft quality.

The goal of the POC isn't to evaluate features on paper. It's to validate that the platform performs during a real 3 AM page when nobody wants to read documentation.
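One way to turn the time-to-coordination comparison into a number is to compare medians between the baseline and the pilot. This is a minimal sketch: the measurements below are hypothetical, and "time-to-coordination" is assumed to mean minutes from alert to responders assembled.

```python
from statistics import median


def coordination_improvement(baseline_minutes, pilot_minutes):
    """Fractional reduction in median time-to-coordination (positive means faster)."""
    baseline = median(baseline_minutes)
    pilot = median(pilot_minutes)
    return (baseline - pilot) / baseline


# Hypothetical measurements, in minutes, from incidents during the POC window.
rootly_baseline = [12, 15, 10, 14, 11]
pilot_platform = [6, 9, 7, 5, 8]

reduction = coordination_improvement(rootly_baseline, pilot_platform)
print(f"Median time-to-coordination reduced by {reduction:.0%}")
```

The median is used rather than the mean so that a single unusually long incident does not dominate a small POC sample.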

Your Rootly migration plan: steps & schedule

A migration for a team of 25-50 engineers involves four steps: connect integrations (Datadog, Prometheus, and your alerting tools), map escalation policies and configure on-call schedules to mirror your current Rootly rotations, run parallel incidents to validate no pages are missed, then cut over monitoring integrations to the new platform while keeping Rootly in read-only mode for 30 days to reference historical incident data.
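The "validate no pages are missed" step in the parallel run amounts to a set comparison. The sketch below assumes you can list the alert IDs that paged someone on each platform over the same period. The ID format and the way those lists are obtained are hypothetical.

```python
def missed_pages(current_platform_alerts, new_platform_alerts):
    """Alert IDs that paged on the current platform but never paged on the new one."""
    return sorted(set(current_platform_alerts) - set(new_platform_alerts))


# Hypothetical alert IDs collected from both systems during the parallel run.
rootly_paged = ["alert-101", "alert-102", "alert-103", "alert-104"]
new_platform_paged = ["alert-101", "alert-103", "alert-104"]

gaps = missed_pages(rootly_paged, new_platform_paged)
print("Pages missed by the new platform:", gaps or "none")
```

An empty result over a representative period is a reasonable signal that alert routing has been mapped completely before cutover.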

incident.io provides dedicated PagerDuty migration tooling that covers on-call schedule import, alert routing translation, and integration configuration. Teams coming from Opsgenie can follow the equivalent Opsgenie migration tooling.

Secure compliance & budget approval

Before migration, confirm the new platform meets your security requirements. incident.io maintains compliance certifications including SOC 2 Type II, with enterprise-grade encryption at rest and identity management features available on higher-tier plans. Request the vendor's Data Processing Addendum and security documentation before the POC ends, not after procurement is approved. Security reviews take time, and starting them in parallel with the technical evaluation keeps the total timeline manageable.

Exporting incident history safely

Before cutting over from Rootly, export your complete incident history including post-mortems, timelines, and follow-up task records. This data serves two purposes: audit compliance (SOC 2 Type II audits require incident history covering the audit observation period, typically 6-12 months) and machine learning context (your historical incident data can inform AI root cause suggestions on the new platform). Check your Rootly contract for data export rights and format specifications, and request a bulk export in JSON or CSV format so the data is portable regardless of which platform you move to. The SRE alerting best practices guide covers how to audit your existing alert configuration before migration so you carry forward only the signals that drive real incident response.
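Before relying on an export for audit purposes, it can help to check that it reaches back far enough. The sketch below assumes a JSON export where each incident carries an ISO-format `created_at` date. That field name and the sample records are hypothetical, so adapt them to whatever format your vendor actually provides.

```python
import json
from datetime import date, timedelta


def covers_audit_window(incidents, audit_end, window_days=365):
    """True if the oldest exported incident predates the start of the audit window."""
    if not incidents:
        return False
    window_start = audit_end - timedelta(days=window_days)
    oldest = min(date.fromisoformat(item["created_at"]) for item in incidents)
    return oldest <= window_start


# Hypothetical export payload; real exports will have many more fields.
export = json.loads("""
[
  {"id": "INC-1", "created_at": "2025-01-15"},
  {"id": "INC-2", "created_at": "2025-09-30"}
]
""")

print(covers_audit_window(export, audit_end=date(2026, 3, 31)))
```

This only checks how far back the export reaches. It does not confirm that every incident in the window was included, so spot-check counts against the source system as well.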

Ready to make the switch?

You've done the hard work: audited your alerts, mapped your on-call schedules, and planned your data export. The last step is seeing how incident.io fits your stack before you flip the switch.

Schedule a demo with our team. The incident.io team will walk you through a live migration scenario using your actual tool configuration (Datadog alerts, PagerDuty escalation policies, existing Slack workflows) and show you how teams like yours cut MTTR by up to 80% within the first 30 days.

Key terms glossary

AIOps (AI for IT Operations): Applies machine learning to operational data (logs, metrics, alerts) to surface patterns, suppress noise, and suggest root causes faster than manual triage. In incident management, AIOps tools analyze past incidents to identify likely culprits (recent deploy, config drift, resource exhaustion) so engineers spend less time guessing and more time fixing. See also: AI SRE assistant.

AI SRE assistant: An AI-powered tool that handles the first phase of incident response automatically: triaging alerts, identifying probable root cause from past incident patterns, notifying on-call engineers, and drafting status updates. In incident.io, the AI SRE assistant can automate up to 80% of incident response steps.

ChatOps: Running operational workflows (incidents, deployments, escalations) inside a chat platform like Slack or Microsoft Teams. Instead of switching between five tools, engineers trigger actions, receive alerts, and update stakeholders directly in a channel. incident.io is Slack-native, meaning the full incident lifecycle runs inside Slack without browser tabs or manual tool-switching. See also: Slack-native, timeline capture.

MTTR (Mean Time To Resolution): The average time from incident detection to full service restoration. MTTR = detection time + assembly time + diagnosis time + fix time + validation time. Because the components add up, every minute removed from any one of them comes straight off MTTR. incident.io customers report up to 80% MTTR reduction, primarily by cutting assembly time (auto-paging, auto-channel creation) and documentation time (AI-drafted post-mortems). See also: P1, post-mortem.
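The MTTR breakdown above can be worked through with a short calculation. This is an illustrative sketch only: the 15-minute assembly figure echoes the 10-15 minute range cited earlier in this guide, while every other number is a hypothetical placeholder rather than measured data.

```python
def mttr_minutes(components):
    """Sum the per-phase durations (in minutes) into a single MTTR figure."""
    return sum(components.values())


# Hypothetical per-incident phase durations in minutes.
before = {"detection": 5, "assembly": 15, "diagnosis": 30, "fix": 20, "validation": 10}

# Same incident with assembly automated down to 2 minutes (assumed value).
after = dict(before, assembly=2)

print(mttr_minutes(before))  # 80
print(mttr_minutes(after))   # 67
```

Substituting your own phase timings from recent incidents shows which component is worth attacking first.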

Post-mortem: A structured written analysis completed after an incident resolves. A good post-mortem documents the timeline, root cause, contributing factors, and follow-up action items without assigning blame. Effective post-mortems publish within 24 hours while memory is fresh. incident.io auto-drafts post-mortems from captured timeline data, reducing write time from 90 minutes to under 10 minutes. See also: timeline capture.

Runbook: A documented, step-by-step procedure an on-call engineer follows to diagnose or resolve a known failure mode. A good runbook answers: what triggered this alert, what systems are affected, which commands to run, and who to escalate to. Runbooks reduce mean time to diagnosis and allow junior engineers to respond confidently without waiting for the person who owns the service. See also: on-call rotation, escalation policy.

SOC 2 (Service Organization Control 2): A security audit framework that verifies an organization controls access to customer data, monitors systems for security events, and maintains availability commitments. A SOC 2 Type II report covers an observation period (typically 6-12 months), not just a point-in-time snapshot. For incident management tools that store incident timelines and post-mortems, a SOC 2 Type II report is a baseline enterprise requirement.

TCO (Total Cost of Ownership): The full annual cost of a tool, including license fees, per-user add-ons, implementation time, training overhead, and integration maintenance. License price is usually the smallest component of TCO. A $15/user/month tool that requires 3 months of configuration and dedicated admin time often costs more than a $45/user/month tool that's operational in 3 days. Always calculate TCO before comparing incident management platforms on sticker price alone.
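The TCO comparison above can be sketched as a first-year calculation. All inputs here are hypothetical assumptions chosen for illustration (100 users, a $100 loaded hourly engineering cost, and the setup and admin hours shown), so replace them with your own figures before drawing conclusions.

```python
def first_year_tco(price_per_user_month, users, setup_hours, admin_hours_month, hourly_cost):
    """First-year cost in dollars: licenses plus setup time plus ongoing admin time."""
    license_cost = price_per_user_month * users * 12
    setup_cost = setup_hours * hourly_cost
    admin_cost = admin_hours_month * 12 * hourly_cost
    return license_cost + setup_cost + admin_cost


# Hypothetical inputs: about 3 months of one engineer's time vs. about 3 days.
cheap_sticker = first_year_tco(15, users=100, setup_hours=480, admin_hours_month=20, hourly_cost=100)
higher_sticker = first_year_tco(45, users=100, setup_hours=24, admin_hours_month=2, hourly_cost=100)

print(cheap_sticker)   # 90000
print(higher_sticker)  # 58800
```

Under these assumed inputs the lower sticker price ends up costing more in year one. The result is sensitive to the setup and admin hours, which is why those are the numbers to pin down during a POC.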
