Best Resolve AI alternatives 2026: a buyer's guide for SREs

Updated February 27, 2026

TL;DR: If you're evaluating alternatives to Resolve AI in 2026, you need a platform that reduces toil through Agentic AI, not static runbooks. We built incident.io as the strongest choice for cloud-native teams: it's Slack-native, ships a genuine multi-agent AI SRE that investigates root causes autonomously, and teams are operational in days. PagerDuty remains the incumbent for enterprise alerting but its AI capabilities require expensive add-ons. BigPanda excels at AIOps event correlation but doesn't cover the full incident lifecycle. Rootly offers solid Slack-based workflows but its automation is fundamentally rule-driven rather than agentic.

The incident management tooling market has split into two clear camps: platforms that automate process (fill in a field, create a ticket, page the on-call) and platforms that automate reasoning (analyze the deployment graph, surface the likely root cause, draft the stakeholder update). Resolve AI, the well-funded AI SRE startup founded by ex-Splunk executives and valued at $1B following a $125M Series A in December 2025, sits squarely in the second camp. So do we.

That shared positioning means the evaluation is genuinely competitive. This guide compares the top alternatives on real SRE criteria: depth of AI capabilities, Slack-native workflow, integration speed, and pricing transparency.

Why teams evaluate alternatives to Resolve AI

Resolve AI entered a crowded market with strong backing and a clear pitch: an autonomous AI SRE that investigates and resolves incidents without waiting for human prompts. As a 2024-founded company, it's building fast. But SRE leads evaluating it consistently flag three questions before committing.

- Integration depth at scale: Resolve AI's AI SRE capabilities are compelling, but connecting it to Datadog, Prometheus, PagerDuty, Jira, and GitHub in a way that survives a 3 AM P1 requires verified, documented integrations. Established platforms with multi-year integration libraries carry less risk here.

- Full lifecycle coverage: An AI SRE that investigates root causes is valuable. But does the tool also handle on-call scheduling, status pages, post-mortem generation, and audit-ready documentation? Teams switching from a legacy platform want to consolidate, not add another point solution.

- Pricing transparency at growth stages: Series A enterprise pricing is rarely published. Teams that budget annually need predictable per-user costs without consumption-based surprises on top.

None of these concerns make Resolve AI a bad choice. They're the questions you should pressure-test in your evaluation, alongside the same questions you should ask every vendor on this list, including us.

The state of AI incident management in 2026: agents vs. chatbots

Before evaluating any platform, separate the marketing from the mechanics. Three categories of "AI" exist in incident management right now, and they're not equivalent.

Chatbots and slash commands. These tools respond to direct instructions. You type /inc create, the bot creates a channel. Useful automation, but not AI. It's a better interface for manual steps.

Rule-based automation (RBA). You define an if-this-then-that workflow: if alert severity is P1, page the on-call, create a Jira ticket, post to #incidents. Powerful when configured correctly, but static because it can't adapt to a novel failure mode, and maintaining the rule library becomes toil in itself.

Agentic AI. This is the 2026 frontier. According to IBM's definition, agentic AI "exhibits autonomy, goal-driven behavior and adaptability" and can "plan and execute goals on its own, based on its understanding of context and data, rather than only reacting to direct instructions." AWS reinforces this: agentic systems "understand context, break tasks into steps, take action through tools and systems, and improve from experience." Applied to SRE, the AI doesn't wait for you to ask a question. It monitors the incident, queries your GitHub history, correlates past incidents, and surfaces a root cause hypothesis within minutes of the alert firing.
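To make the rule-based category concrete, here is a minimal Python sketch of the if-this-then-that workflow described above. It is an illustration, not any vendor's engine: the `Alert`, `Rule`, and `plan_actions` names and the action labels are hypothetical. The point is that the response is fully determined by rules someone wrote in advance, so an alert that matches no rule gets no action.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Alert:
    severity: str  # e.g. "P1", "P2", "P3"
    service: str


@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[Alert], bool]
    actions: tuple[str, ...]


# Hypothetical rule library: every behaviour must be written up front.
RULES = [
    Rule(
        name="p1-response",
        condition=lambda alert: alert.severity == "P1",
        actions=("page_on_call", "create_jira_ticket", "post_to_incidents_channel"),
    ),
]


def plan_actions(alert: Alert, rules: list[Rule] = RULES) -> list[str]:
    """Collect the actions of every rule whose condition matches the alert."""
    planned: list[str] = []
    for rule in rules:
        if rule.condition(alert):
            planned.extend(rule.actions)
    return planned


print(plan_actions(Alert(severity="P1", service="checkout")))
print(plan_actions(Alert(severity="P3", service="checkout")))  # no matching rule
```

Every new failure mode means another entry in `RULES`, which is where the maintenance toil comes from.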

Key evaluation criteria for AI-powered incident platforms

Based on what matters most for SRE teams managing 5-20+ incidents per month, weight your evaluation across four criteria:

- Slack-native workflow (not just Slack integration): Can you run the entire incident lifecycle inside Slack without opening a browser tab? "Slack integration" means the tool sends notifications to Slack. "Slack-native" means the incident lives in Slack: declaration, role assignment, escalation, timeline capture, and resolution. The coordination overhead difference is measurable, and tool-switching between five platforms compounds fast across a team handling 10-15 P1s per month.

- AI root cause analysis depth: Does the AI find the bad deploy, or does it just summarize what people said in the Slack channel? Ask for a live demo on a novel incident, not a canned replay. Push for precision and recall metrics. "AI-powered" without those numbers is a marketing claim.

- Integration speed: Your existing stack (Datadog, Prometheus, PagerDuty for alerting, Jira, GitHub) needs to connect within hours, not weeks. A tool that requires a professional services engagement to integrate with Datadog is not a modern platform. Check whether Datadog monitor migration is documented and self-serve.

- Pricing transparency: The questions to ask: is the AI feature included or an add-on? Is on-call scheduling included? Are there per-event or per-node charges on top of per-user fees? You should be able to calculate your annual TCO in under five minutes. If you can't, that's a signal.
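The five-minute TCO check above comes down to simple arithmetic. The sketch below shows one way to do it in Python; the `Quote` fields and every number in the example are placeholders for illustration, not real prices from any vendor.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    seats: int
    base_per_user_month: float
    ai_addon_per_user_month: float = 0.0       # 0 if AI is included in the base plan
    on_call_addon_per_user_month: float = 0.0  # 0 if on-call is included
    usage_fees_per_month: float = 0.0          # per-event or per-node charges


def annual_tco(quote: Quote) -> float:
    """Annual cost: per-user fees plus usage-based fees, over 12 months."""
    per_user = (
        quote.base_per_user_month
        + quote.ai_addon_per_user_month
        + quote.on_call_addon_per_user_month
    )
    return 12 * (quote.seats * per_user + quote.usage_fees_per_month)


# Placeholder figures only: 100 seats, 20 base + 10 AI + 5 on-call, 500 usage.
example = Quote(
    seats=100,
    base_per_user_month=20.0,
    ai_addon_per_user_month=10.0,
    on_call_addon_per_user_month=5.0,
    usage_fees_per_month=500.0,
)
print(annual_tco(example))  # 48000.0
```

If you cannot fill in one of these fields from the vendor's public pricing page, that is the gap to raise on the sales call.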

Top Resolve AI alternatives for modern engineering teams

1. incident.io: best for Slack-native coordination and Agentic AI

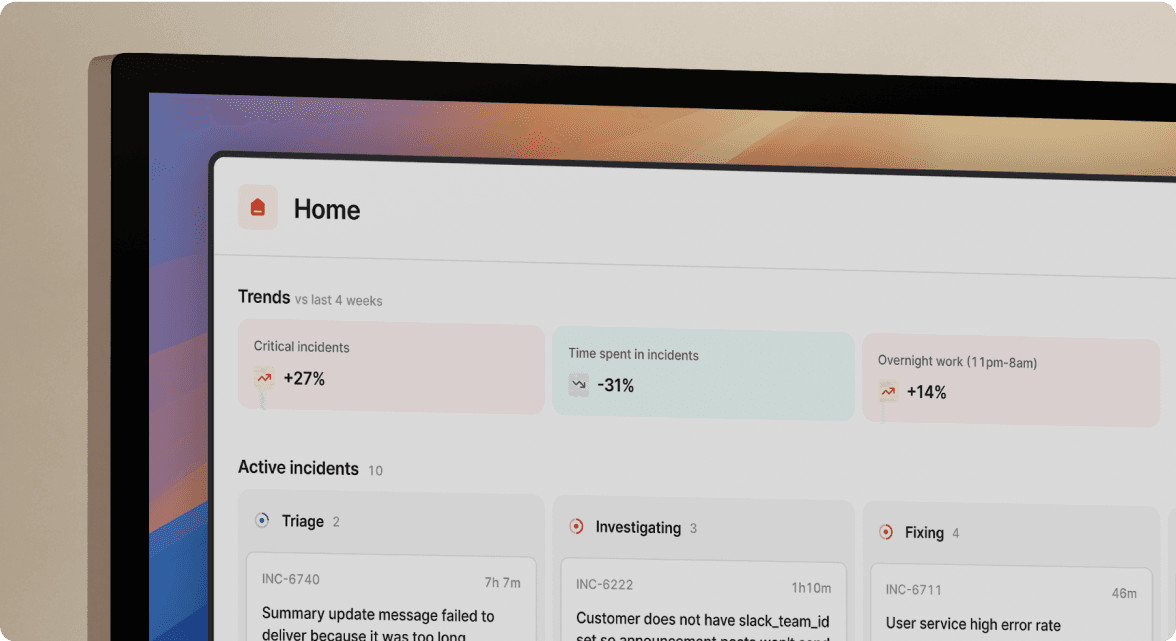

We built incident.io from the ground up as a Slack-native platform, meaning the incident channel is the source of truth, not a downstream notification target. Every /inc command, every role assignment, every call transcript feeds a live timeline that our AI uses in real time.

Our AI SRE is what separates us from the field in 2026. It "uses a multi-agent system to analyze incidents by searching through GitHub pull requests, Slack messages, historical incidents, logs, metrics, and traces to build hypotheses about root causes," according to ZenML's LLMOps database analysis. When an alert fires, it "will triage and investigate your alerts, analyse root cause then recommend whether you should act now or if you can defer until later" and can "draft a fix for you, from spotting the failing PR to drafting the fix."

Scribe, our AI transcription feature, captures call recordings so post-mortems reflect what was actually said and decided, not what engineers remember three days later. Post-mortems auto-draft from the captured timeline, and our published pricing is transparent and per-user.

"I appreciate how incident.io consolidates workflows that were spread across multiple tools into one centralized hub within Slack, which is really helpful because everyone's already there. The automation is great for handling repetitive tasks, which many engineers are eager to cut down on." - Alex N. on G2

Teams using incident.io can reduce MTTR by up to 80%, with the primary gains coming from eliminating coordination overhead and automating post-incident documentation. We also offer a dedicated PagerDuty migration guide and Opsgenie migration tooling for teams coming from those platforms.

Best for: Cloud-native SaaS teams (50-500 engineers) who want genuine Agentic AI and a Slack-first workflow without a months-long implementation.

2. PagerDuty: best for enterprise alerting and legacy stacks

PagerDuty remains the industry standard for on-call alerting. Its integration library is unmatched and it's deeply embedded in most enterprise stacks. If your primary pain is alert routing and escalation policy management, it handles that well.

The challenge in 2026 is cost complexity. Vendr's marketplace analysis shows PagerDuty's AI capabilities (branded as Advance and AIOps) are add-ons, not included in base plans. For larger teams, stacking these add-ons on top of a Business plan subscription can substantially increase total annual spend. The AI features, while improving, still feel assembled rather than native. Most post-incident work still requires browser tabs outside Slack.

Best for: Large enterprises with complex alerting hierarchies who need a mature on-call routing system and can absorb the add-on costs for AI features.

3. Rootly: a configurable alternative for platform teams

Rootly offers solid Slack-based incident management and a flexible workflow engine. Its automation is built on condition-action logic: when an incident is declared at SEV1, execute this set of steps. For teams that want granular control over every automation step, Rootly gives you the levers.

The limitation is the same as most workflow-first tools: the automation is only as good as the rules you write upfront. AI features are a newer addition and focus primarily on analyzing past incident data rather than running autonomous investigation in real time. Teams with dedicated platform engineering bandwidth to tune the system get good results. Teams that want opinionated defaults and faster time to value will find our approach at incident.io more practical.

Best for: Platform engineering teams with strong internal tooling practices who want maximum workflow customization and can invest time in configuration.

4. BigPanda: best for AIOps event correlation

BigPanda solves a specific problem: alert noise. If your monitoring stack generates thousands of events per hour and you need ML to group them into actionable incidents before routing to a human, BigPanda's correlation engine is strong.

BigPanda sits squarely in the AIOps event correlation category, distinct from lifecycle incident management. On-call scheduling, Slack-native coordination, post-mortem generation, and status pages are not core features. You still need a separate tool to manage the human response once BigPanda hands off the correlated incident, making it a pre-processing layer rather than a standalone solution.

Best for: Large-scale infrastructure teams drowning in alert volume where the primary problem is noise reduction, not coordination or root cause investigation.

Comparison table: AI capabilities, pricing, and time-to-value

| Platform | AI type | Slack-native | Time to value | Pricing model |
|---|---|---|---|---|
| incident.io | Agentic (multi-agent investigation) | Fully native | Days | Per-user, publicly listed |
| Resolve AI | Agentic AI SRE | Partial | Weeks | Enterprise (not published) |
| PagerDuty | Add-on (Advance + AIOps) | Notifications only | 1-4 weeks | Per-user + multiple add-ons |
| Rootly | Rule-based + AI layer | Strong integration | Hours to days | Per-user |
| BigPanda | ML event correlation | Limited | Weeks | Consumption-based |

How to spot "AI washing" during your evaluation

Every vendor in 2026 claims AI. Here's how to separate real agentic capability from a relabeled rule engine:

- Ask for precision and recall metrics. Real AI systems are measured with real numbers. If a vendor can't give you a precision/recall figure, their "AI" isn't being measured, which means it's not being systematically improved. Our analysis of AI-powered platforms covers how to evaluate these claims across tools.

- Test it on a novel incident. Demand a live demo where you describe an incident type the vendor hasn't prepared for. Canned demos always look good. A genuine agentic system should analyze a new scenario and surface a hypothesis without a pre-configured runbook to drive it.

- Check where the AI lives in the pricing. If the AI capability requires a separate product tier or add-on (as it does with PagerDuty's Advance and AIOps products), it's bolt-on functionality. Bolt-on AI gets deprioritized when the core product roadmap competes for engineering resources.

- Ask who maintains the rules. If the answer is "your team builds and maintains the automation workflows," that's toil in a different form. True agentic AI learns from your environment rather than requiring constant human curation.
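If a vendor does share precision and recall figures, it helps to know what you would compute yourself during a trial. The Python sketch below assumes one possible definition: for each past incident with a known root cause, the AI either suggests a cause or abstains (`None`). Vendors may define these metrics differently, so ask how theirs are calculated. The function name and incident labels are hypothetical.

```python
from typing import Optional


def precision_recall(
    results: list[tuple[Optional[str], str]],
) -> tuple[float, float]:
    """results holds (suggested_cause, actual_cause) pairs; None means the AI abstained.

    precision = correct suggestions / suggestions made
    recall    = correct suggestions / all incidents with a known cause
    """
    made = [(suggested, actual) for suggested, actual in results if suggested is not None]
    correct = sum(1 for suggested, actual in made if suggested == actual)
    precision = correct / len(made) if made else 0.0
    recall = correct / len(results) if results else 0.0
    return precision, recall


# Made-up trial data: five incidents, four suggestions, three of them correct.
trial = [
    ("bad-deploy", "bad-deploy"),
    ("db-failover", "db-failover"),
    ("cache-eviction", "bad-deploy"),
    (None, "dns-outage"),
    ("cert-expiry", "cert-expiry"),
]
print(precision_recall(trial))  # (0.75, 0.6)
```

High precision with low recall means the AI is usually right when it speaks but stays silent on many incidents. The reverse means it guesses often and is wrong often. You want to know both.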

Making the switch: a migration framework for SRE leads

Migrating off a legacy incident management platform doesn't require a big-bang cutover. Here's a three-step approach that keeps your existing P1 coverage intact during the transition.

- Audit your current runbooks before migrating them. Most teams carry runbooks that haven't been touched in over a year. Migrating stale runbooks into a new tool preserves the debt without the benefit. Understand what makes a good runbook first, then identify which automations your team actually uses versus which were set up and forgotten. Migrate the active ones, not all of them.

- Run a parallel trial under lower-pressure conditions. Keep your existing tool handling P1s while you route P2s and P3s through incident.io. This gives your team time to build muscle memory with /inc commands without the pressure of a critical outage. We support sandbox mode for testing if you want to run practice incidents before going live.

- Measure toil reduction explicitly. Before the switch, track time from alert to coordinated response (team assembled, channel active, first status update sent). After two weeks on the new platform, measure the same metric. Automated runbooks cut MTTR on their own, but the full gain comes from combining automation with agentic investigation to eliminate both coordination overhead and investigative delay.
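The before-and-after measurement in the last step can be as simple as a short script over exported timestamps. This Python sketch assumes you can export, for each incident, the time the alert fired and the time the first status update was sent, both as ISO 8601 strings. The function names and all sample timestamps are made up for illustration.

```python
from datetime import datetime
from statistics import mean


def minutes_to_coordinated(alert_at: str, first_update_at: str) -> float:
    """Minutes from the alert firing to the first status update."""
    start = datetime.fromisoformat(alert_at)
    end = datetime.fromisoformat(first_update_at)
    return (end - start).total_seconds() / 60


def mean_minutes(incidents: list[tuple[str, str]]) -> float:
    """Average alert-to-coordinated-response time across incidents."""
    return mean(minutes_to_coordinated(alert, update) for alert, update in incidents)


# Made-up sample data: (alert fired, first status update sent).
before = [
    ("2026-01-05T03:00:00", "2026-01-05T03:18:00"),
    ("2026-01-12T14:10:00", "2026-01-12T14:22:00"),
]
after = [
    ("2026-02-02T09:00:00", "2026-02-02T09:06:00"),
    ("2026-02-09T21:30:00", "2026-02-09T21:34:00"),
]

baseline = mean_minutes(before)  # 15.0 minutes
current = mean_minutes(after)    # 5.0 minutes
reduction = (baseline - current) / baseline
print(f"{baseline:.1f} -> {current:.1f} min ({reduction:.0%} reduction)")
```

Two incidents per period is far too few to draw conclusions from. Use every incident in the comparison windows, and compare like with like (same severities, similar hours).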

The right tool depends on your actual problem

If your primary pain is alert noise at scale (thousands of events per hour from heterogeneous monitoring sources), BigPanda handles the correlation layer well. You'll still need a coordination tool on top.

If your primary pain is coordination overhead (assembly time, post-mortem archaeology, junior engineers freezing on first on-call) and you want AI that investigates rather than routes, the Slack-native approach wins. Book a demo and ask us to walk through a root cause investigation on a scenario from your own stack.

For organizations with complex migrations or enterprise requirements, reach out to our team to discuss how the AI SRE maps to your specific service catalog and integration stack.

Key terminology

Agentic AI: An AI system that autonomously plans, reasons, and executes toward a goal without requiring constant human instruction. In incident management, this means the AI investigates root causes, queries your GitHub history, and surfaces hypotheses independently rather than waiting for you to ask specific questions.

Rule-based automation (RBA): Pre-configured if-this-then-that workflows, often packaged as automated runbooks, that trigger fixed actions when specific conditions are met. Powerful for predictable failure modes, but brittle when incidents don't match the pre-written script.

MTTR (Mean Time To Resolution): The average time from incident declaration to resolution, and the primary metric for measuring the impact of AI tooling on your incident response practice.

Toil: Manual, repetitive, automatable work that provides no lasting value. Creating Slack channels manually, copy-pasting alert context into docs, and writing post-mortems from memory are all toil. Eliminating toil is the core value proposition of any legitimate AI incident management platform.

AIOps: The application of ML and AI to IT operations, specifically for event correlation and alert noise reduction. Distinct from AI incident management: AIOps handles the pre-incident classification problem, not the human coordination and resolution problem.

Slack-native: An architecture where Slack is the primary interface for the full incident lifecycle, not a notification destination. Slack-native means you declare, manage, escalate, and close incidents without leaving Slack. Slack integration (the alternative) means the tool mirrors some actions to Slack while the source of truth lives in a separate web UI.
