Best incident response tools 2026: the SRE's guide to reducing MTTR

Updated February 27, 2026

TL;DR: The biggest MTTR bottleneck in 2026 isn't detection. It's coordination overhead: the 12+ minutes per incident you spend assembling the team before troubleshooting even starts. We've compared 12+ platforms to help you eliminate that overhead. For Slack-centric engineering teams, we believe incident.io is the strongest all-in-one coordination layer. PagerDuty remains mature for alerting-first enterprises. Opsgenie reaches end-of-life April 2027, making it a risky bet. Evaluate incident.io, Rootly, or FireHydrant if reducing MTTR through automation is your goal.

If you're opening five browser tabs to declare a single incident in 2026, your tooling is the problem. Coordination overhead can consume a significant share of your MTTR, with you and your team burning 12 minutes per incident just on logistics before reviewing a single line of code. That's not a monitoring gap. It's a coordination gap.

We've compared 12+ incident response platforms based on what matters to SRE leads: how fast you can coordinate a response, how deeply the tool automates toil, and what you'll pay when you factor in every add-on. No filler. No vendor marketing copy. Just the data you need to build your shortlist and justify the decision to your VP of Engineering.

The state of incident response in 2026: why alerting isn't enough

For the last decade, incident tools competed on who could page you fastest. That problem is mostly solved. Google's incident management framework identifies three core functions of a response, the "three Cs": coordinate, communicate, and control. Most legacy tools wake you up, then leave you to handle all three manually.

The result is tool sprawl. Your typical incident response workflow spans five separate tools: PagerDuty for alerting, Datadog for metrics, Slack for comms, Google Docs for notes, Jira for tickets. Each context switch costs time and cognitive load. By the time real troubleshooting starts, 12 minutes have already gone to coordination overhead, not problem-solving.

The shift you're seeing across SRE teams right now is from alerting-first to coordination-first tooling. Detecting the incident is a solved problem. The real lever for MTTR reduction is how fast you can get the right people, context, and communication channels in place once the alert fires. According to the Google SRE Workbook, managing an incident means coordinating responders efficiently and ensuring communication flows simultaneously between technical teams and stakeholders. Tools that treat coordination as an afterthought force you to do that work manually, burning minutes that compound across hundreds of incidents per year.
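To make that compounding concrete, here is a rough back-of-the-envelope sketch. The 12-minute overhead is the figure used in this article; the incident volumes and the number of responders are illustrative assumptions, not measured data.

```python
def annual_coordination_hours(incidents_per_month: int,
                              overhead_minutes: float = 12.0,
                              responders: int = 1) -> float:
    """Engineer-hours per year spent on coordination before troubleshooting starts."""
    minutes = incidents_per_month * 12 * overhead_minutes * responders
    return minutes / 60


if __name__ == "__main__":
    # Hypothetical volumes with three responders per incident; use your own numbers.
    for volume in (5, 20):
        hours = annual_coordination_hours(volume, responders=3)
        print(f"{volume} incidents/month -> {hours:.0f} engineer-hours/year")
```

With three responders, 5 incidents a month works out to 36 engineer-hours a year and 20 incidents a month to 144, before anyone has started troubleshooting.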

Evaluation criteria: how to judge modern incident platforms

Not all 'incident management' tools solve the same problem. Before you compare features, get clear on what you're buying.

Slack-native workflow vs. Slack-connected

"Works with Slack" can mean anything from a one-line notification to a full incident lifecycle running inside Slack via slash commands. The distinction matters because ChatOps reduces cognitive load by keeping response where your team already works. Look for tools where you can declare, escalate, assign, update, and resolve via slash commands, with zero browser tab switches required.

Real AI vs. AI-washing

The difference between genuine AI and AI-washing in incident response comes down to specificity. Generic "AI features" summarize chat logs or search knowledge bases. A real AI SRE analyzes your observability data, correlates recent deployments with error spikes, and generates environment-specific fix recommendations. One saves you five minutes of reading. The other saves you 30 minutes of investigation. When you're evaluating AI claims, ask for precision and recall metrics, not marketing copy.
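If a vendor can't supply those numbers, you can estimate them yourself during a trial. The sketch below assumes you log, for each incident, the cause the tool suggested (or nothing) and the cause your post-mortem confirmed. The record format and the cause labels are hypothetical.

```python
from typing import Optional


def precision_recall(results: list[tuple[Optional[str], str]]) -> tuple[float, float]:
    """Each result is (cause suggested by the tool or None, cause confirmed in the post-mortem)."""
    suggestions = [(s, c) for s, c in results if s is not None]
    hits = sum(1 for s, c in suggestions if s == c)
    # Precision: of the suggestions made, how many were right.
    precision = hits / len(suggestions) if suggestions else 0.0
    # Recall: of all incidents with a confirmed cause, how many the tool got right.
    recall = hits / len(results) if results else 0.0
    return precision, recall


if __name__ == "__main__":
    trial = [
        ("deploy-a", "deploy-a"),   # correct suggestion
        ("config-b", "deploy-c"),   # wrong suggestion
        (None, "dns"),              # no suggestion offered
        ("deploy-d", "deploy-d"),   # correct suggestion
    ]
    p, r = precision_recall(trial)
    print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.67 recall=0.50
```

A tool that only speaks up when it is certain can score high precision and low recall, so ask for both.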

On-call management and pricing transparency

On-call scheduling sounds simple until you're managing a 20-person rotation across three time zones with last-minute overrides. Check whether on-call is included in the base price or sold as a separate add-on. We're transparent about this: at incident.io, on-call is a paid add-on ($10/user/month on Team annual or $12 monthly, $20/user/month on Pro). At PagerDuty, on-call is bundled but the overall contract cost runs significantly higher. Neither is "free." Price them both out before comparing.

Integration depth

The tools you already run (Datadog, Prometheus, Jira, GitHub) need to connect without custom scripting. We support creating incidents automatically via alerts from your existing monitoring stack, mapping alert severities directly to incident priorities. Verify setup time, not just logos on a marketing page.
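As an illustration of what a severity mapping amounts to, here is a minimal sketch. The alert severity labels and the default are assumptions chosen for the example; they are not the schema of incident.io, Datadog, or any other vendor.

```python
# Hypothetical mapping from monitoring alert severity to incident priority.
SEVERITY_TO_PRIORITY = {
    "critical": "SEV1",
    "error": "SEV2",
    "warning": "SEV3",
}


def incident_priority(alert_severity: str, default: str = "SEV3") -> str:
    """Normalize the alert's severity label and fall back to a default if it is unknown."""
    return SEVERITY_TO_PRIORITY.get(alert_severity.strip().lower(), default)


if __name__ == "__main__":
    print(incident_priority("Critical"))  # SEV1
    print(incident_priority("info"))      # SEV3 (unmapped, falls back to the default)
```

The point to check during evaluation is whether the platform lets you define this mapping in configuration, or whether you end up maintaining a script like this yourself.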

Compliance and audit trails

SOC 2 Type II certification, GDPR compliance, SSO/SCIM, and immutable incident timelines matter when your CISO signs off on tooling. incident.io is SOC 2 Type II certified and GDPR compliant. Defining your post-incident process with a structured flow also ensures audit-ready documentation after every incident.

Quick comparison: top 12 incident response platforms at a glance

The table below reflects pricing and capabilities as of February 2026. "Slack-native" means the full incident lifecycle runs in Slack via slash commands, not just notifications.

| Tool | Slack-native | On-call included | AI post-mortems | Status page | Pricing model |
|---|---|---|---|---|---|
| incident.io | Yes (full lifecycle) | Add-on (+$10-20/user/mo) | Yes (AI SRE) | Yes (limited on free) | Per-user + on-call add-on |
| PagerDuty | Partial (ChatOps + web UI) | Yes (bundled) | Yes (AI agent) | Separate product cost | Per-user, high TCO |
| Opsgenie (JSM) | Notifications only | Yes (bundled) | Limited | Separate add-on | Per-user, EOL April 2027 |
| Rootly | Yes (full lifecycle) | Integrated | Yes (confidence scores) | Included | Per-user (undisclosed) |
| FireHydrant | Partial (interactive) | Integrated | Yes (AI assistant) | Integrated | Per-user (undisclosed) |
| BetterStack | Notifications | Yes (bundled) | Limited | Included | Per-user (tiered) |
| Splunk On-Call | Notifications | Yes (bundled) | Limited | Requires integration | Enterprise (Splunk bundle) |
| xMatters | Notifications | Yes (advanced) | No | Separate | Enterprise (undisclosed) |
| ServiceNow | Notifications/integration | Via add-ons | No | Module-based | Enterprise per-user |
| BigPanda | Notifications | Via integration | No (AIOps focus) | N/A | Enterprise (undisclosed) |
| Moogsoft | Notifications | Via integration | No (AIOps focus) | N/A | Enterprise (undisclosed) |
| Squadcast | Notifications | Yes (bundled) | Limited | Included | ~$15-25/user/mo (est.) |

A note on BigPanda and Moogsoft: Both are AIOps tools focused on alert correlation and noise reduction before an incident is declared. They group noisy alerts into actionable incidents but don't manage the incident lifecycle itself. If you're evaluating them alongside incident.io or PagerDuty, you're comparing two different categories. AIOps platforms excel at reducing alert noise, but you'll still need a separate coordination layer for what happens after the incident is declared.

Deep dive: best Slack-native platform (incident.io)

We built incident.io on a single premise: incidents should start, run, and close entirely within Slack, without opening a browser. When a Datadog alert fires, we auto-create a dedicated channel (e.g., #inc-2847-api-latency), page the on-call engineer, pull in the service owner, and start capturing the timeline automatically. Total coordination time: under two minutes.

Ease of adoption is what sets it apart: new on-call engineers can run their first incident with /inc commands without a 47-step Confluence guide.

Key features

Workflows and automation: Our workflow engine auto-creates channels, assigns roles, sends stakeholder updates, and creates Jira follow-up tickets without manual input. You configure it once per severity level (SEV1, SEV2, SEV3) and every incident follows the same structure.
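Conceptually, "configure once per severity" looks something like the sketch below. This is not incident.io's configuration format or API; the `Workflow` fields, the per-severity choices, and the action strings are hypothetical and only illustrate the idea that every incident of a given severity follows the same steps.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Workflow:
    page_on_call: bool
    notify_stakeholders: bool
    create_followup_ticket: bool
    status_page_message: Optional[str] = None


# Hypothetical per-severity configuration, defined once and reused for every incident.
WORKFLOWS = {
    "SEV1": Workflow(True, True, True, status_page_message="Investigating"),
    "SEV2": Workflow(True, True, True),
    "SEV3": Workflow(False, False, True),
}


def planned_actions(severity: str) -> list[str]:
    """Expand a severity level into the ordered list of automated steps."""
    wf = WORKFLOWS[severity]
    actions = ["create incident channel"]
    if wf.page_on_call:
        actions.append("page on-call engineer")
    if wf.notify_stakeholders:
        actions.append("send stakeholder update")
    if wf.status_page_message:
        actions.append(f"post '{wf.status_page_message}' to status page")
    if wf.create_followup_ticket:
        actions.append("create follow-up ticket")
    return actions


if __name__ == "__main__":
    for level in WORKFLOWS:
        print(level, "->", planned_actions(level))
```

Keeping the rules in one declarative table is the design choice that matters: responders never decide mid-incident which steps apply.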

AI SRE: Our AI SRE assistant automates up to 80% of incident response, autonomously investigating issues, correlating recent deployments with error spikes, and generating environment-specific recommendations. The distinction from generic AI summaries is that it analyzes observability data rather than just chat logs. The result is a root cause suggestion that cites specific pull requests and data sources, not a ChatGPT wrapper.

Intercom reduced incident time by 40% and cut post-mortem turnaround from 3-5 days to under 24 hours after adopting incident.io's AI-assisted workflow.

Scribe (real-time timeline capture): Every Slack message in the incident channel, every /inc command, every role assignment gets recorded automatically. When you type /inc resolve, we draft the post-mortem from that captured data. Engineers spend 10 minutes refining it, not 90 minutes writing from scratch.

Service Catalog: Ownership data lives inside incident.io, so when an alert fires for your payment service, we know who owns it and pull them in automatically. No more hunting through a Confluence spreadsheet at 2 AM.

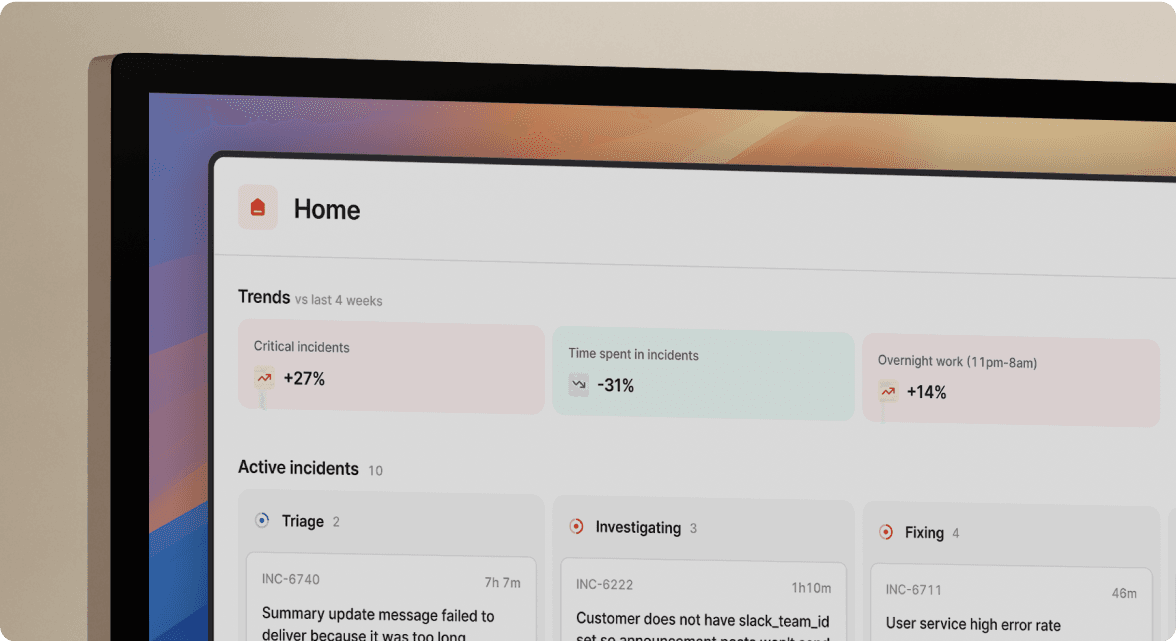

Insights dashboard: MTTR trends, incident volume by service, on-call load distribution. You can export a 90-day MTTR chart to show your VP of Engineering exactly where reliability is improving and which services are repeat offenders.

"Clearly built by a team of people who have been through the panic and despair of a poorly run incident. They have taken all those learnings to heart and made something that automates, clarifies and enables your teams to concentrate on fixing, communicating and, most importantly, learning from the incidents that happen." - Rob L. on G2

Pros and cons

| Pros | Cons |
|---|---|
| Full incident lifecycle in Slack, zero browser tabs | On-call is a paid add-on, not included in base price ($10-20/user/month depending on plan and billing term) |
| AI SRE automates up to 80% of response | Pricing scales quickly as user count grows |
| Auto-drafted post-mortems from real-time timeline | Teams not fully Slack-centric may need adaptation time |
| Ranked #1 in G2's Relationship Index for support responsiveness | Advanced workflow configuration has a learning curve |
| SOC 2 Type II certified, GDPR compliant | Microsoft Teams is supported but Slack is the primary experience |

Pricing

We publish our pricing transparently:

- Team plan: $15/user/month base + $10/user/month on-call = $25/user/month all-in on annual billing. On monthly billing: $19/user/month base + $12/user/month on-call = $31/user/month all-in.

- Pro plan: $25/user/month base + $20/user/month on-call = $45/user/month all-in

Full pricing is publicly documented. No hidden per-incident fees. No surprise renewal increases. For most growing engineering teams handling on-call, the Pro plan at $45/user/month is the right starting point.

Best for: Engineering teams of 50-500 people running Slack as their primary communication platform, handling 5-20+ incidents per month, and wanting coordination automation built in from day one.

Deep dive: best for legacy alerting (PagerDuty)

PagerDuty has been the on-call pager of record for enterprise engineering teams for over a decade. Its alerting reliability, escalation policies, and 700+ integrations are genuinely battle-tested. What PagerDuty does well is wake up the right person, fast, with the right alert context.

What it doesn't handle as well is what happens next. PagerDuty sends a notification to #alerts with a link. That link opens PagerDuty's web UI. You create a Slack channel manually. You assign an incident commander in PagerDuty (which doesn't sync to Slack roles). You copy-paste updates between PagerDuty and Slack. Two sources of truth, never in sync.

PagerDuty has added AI features and a ChatOps experience, but the core architecture remains alerting-first. Coordination features feel bolted on rather than built in. The platform increasingly feels like a legacy enterprise offering, with annual price increases in the 10-15% range and support quality that has visibly declined for non-enterprise tiers.

Average contract cost: $64,621 per year, with 100-person teams exceeding $119,000 annually.

Best for: Large enterprises that need maximum flexibility in escalation policies, have built custom internal tooling for coordination, and prioritize mature alert routing over a streamlined user experience.

Think of it this way: PagerDuty is a great smoke detector. incident.io is the fire response team. Both have a role. For teams that want one tool to do both, PagerDuty will leave you building the coordination layer yourself.

Deep dive: best for Atlassian ecosystems (Jira Service Management / Opsgenie)

If your organization runs everything on Atlassian, Jira Service Management (JSM) with Opsgenie handles alerting and on-call management within that ecosystem. The Jira integration is tight, ticket creation is automatic, and for teams already paying for JSM, the on-call features feel "free."

The reality is more complicated. Opsgenie is reaching end-of-life on April 5, 2027, with new sales stopped as of June 4, 2025. Atlassian has not clearly communicated the full migration path. Investing in a platform being phased out is a significant operational risk, especially for teams running 24/7 on-call rotations.

Beyond the EOL issue, Opsgenie's user experience reflects its origins as a standalone alerting tool merged into the Atlassian suite. The configuration is heavy, the UI is slow compared to modern alternatives, and coordination features require significant manual work or third-party integrations.

Our recommendation: If you're already on Opsgenie, start evaluating migration options now. If you're considering Opsgenie as a new deployment in 2026, look at alternatives. The April 2027 EOL isn't far away, and migrating a 24/7 on-call rotation mid-year is a risk you can avoid by choosing a platform with a clear long-term roadmap.

Alternative tools for specific use cases

Rootly and FireHydrant: the modern challengers

Rootly and FireHydrant are the closest alternatives to us in terms of philosophy. Both are coordination-first, both support Slack-native workflows, and both offer AI-assisted post-mortems.

Rootly brings strong AI capabilities with confidence scores for root cause analysis, including highlighted code diffs and configuration changes. Its transparency in showing reasoning chains (why a root cause was flagged, not just what it is) is a genuine differentiator. The limitation: Rootly doesn't ingest telemetry independently. It relies entirely on what your existing observability tools expose. Pricing requires a sales call.

FireHydrant offers comprehensive workflow automation and retrospective features in a web-first interface. Its AI assistant helps draft status updates and identify likely causal code changes. For teams where Slack is the operational hub, FireHydrant's web-first approach adds friction during active incidents. Pricing is also undisclosed.

The honest comparison: our opinionated defaults reduce configuration time versus both. If your team wants maximum AI reasoning transparency, Rootly's approach may appeal. If you want to be operational in 3-5 days without deep configuration work, we believe incident.io is faster to value.

BetterStack: for smaller teams and UI-focused buyers

BetterStack combines monitoring and incident response in one product, with a clean UI and bundled on-call. For teams under 50 engineers who want a simple all-in-one solution without advanced workflow automation or deep Slack integration, it's worth evaluating. Pricing doesn't require a sales call, which makes early-stage evaluation fast.

ServiceNow: for large ITIL-heavy enterprises

ServiceNow is a full IT Service Management (ITSM) platform. It fits organizations standardizing on ITIL processes with formal change management, a CMDB, and complex approval workflows. If your organization runs ITIL with hundreds of IT staff managing enterprise infrastructure, ServiceNow makes sense. For a 200-person cloud-native SaaS company running Kubernetes, it's significantly over-engineered and under-optimized for developer velocity.

Squadcast: for mid-market teams wanting unified SLO tracking

Squadcast combines on-call management, incident response, and SLO tracking with machine learning-based alert grouping. For teams that need SLO tracking built into their incident workflow and want a cost-effective alternative to PagerDuty, it's worth evaluating. Pricing is estimated at $15-25/user/month based on market positioning.

Feature showdown: AI, automation, and on-call scheduling

AI capabilities: what's real vs. what's marketing

The AI landscape in incident management has a signal-to-noise problem. Here's how we recommend cutting through it.

Generative summaries (copying Slack messages into a prompt to produce a post-incident summary) are available in almost every tool claiming AI. They save writing time but don't accelerate resolution.

Root cause identification (analyzing telemetry, deployment history, and past incident patterns to suggest the likely cause) is where real leverage exists.

According to Dash0's 2026 comparison of AI SRE tools, an AI SRE that analyzes observability data and correlates recent deployments saves 30 minutes of investigation time per incident, not just 5 minutes of documentation time.
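The simplest form of deployment correlation is a time-window check: which deployments landed shortly before the error spike? The sketch below is a deliberately naive heuristic, not how any vendor's AI works. The service names, timestamps, and 30-minute window are assumptions for illustration.

```python
from datetime import datetime, timedelta


def deployments_before_spike(deploys: list[tuple[str, datetime]],
                             spike_at: datetime,
                             window: timedelta = timedelta(minutes=30)) -> list[tuple[str, datetime]]:
    """Return deployments that landed within `window` before the spike, newest first."""
    candidates = [d for d in deploys if spike_at - window <= d[1] <= spike_at]
    return sorted(candidates, key=lambda d: d[1], reverse=True)


if __name__ == "__main__":
    spike = datetime(2026, 2, 1, 14, 30)
    deploys = [
        ("checkout-api", datetime(2026, 2, 1, 14, 22)),
        ("search", datetime(2026, 2, 1, 9, 5)),
        ("payments", datetime(2026, 2, 1, 14, 5)),
    ]
    for name, at in deployments_before_spike(deploys, spike):
        print(f"{name} deployed {int((spike - at).total_seconds() // 60)} min before the spike")
```

A real root cause engine layers telemetry and past incident patterns on top of this, but if a tool can't do even the time-window step, its "root cause" feature is probably a summary.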

| Platform | Root cause analysis | Deployment correlation | Timeline auto-capture | Post-mortem draft |
|---|---|---|---|---|
| incident.io | Yes (AI SRE, high precision) | Yes (cites specific PRs) | Yes (real-time) | Yes (80% complete) |
| Rootly | Yes (confidence scores + code diffs) | Yes (code diff highlights) | Yes | Yes |
| FireHydrant | Partial (identifies likely changes) | Partial | Yes | Yes (draft assistance) |
| PagerDuty | Yes (AI agent, runbook suggestions) | Partial | Partial | Partial |
| Opsgenie | No | No | No | No |

On-call scheduling: ease vs. power

On-call scheduling looks identical on paper across platforms but differs significantly in practice.

PagerDuty has the most powerful scheduling engine: multi-layer escalations, complex rotation rules, and extensive customization. The tradeoff is configuration complexity. Setting up a 10-person rotation with geographic overrides and business-hours policies takes significant time to configure.

incident.io prioritizes simplicity. Our schedule creation uses natural language overrides ("Kaushik today from 11 pm to tomorrow 7 am") and supports concurrent shifts for pairing junior and senior engineers. You can't clone entire schedules (a real gap for teams with multiple similar rotations), but for most teams with straightforward needs, setup is faster. On-call integrates directly with your alert pipeline so escalations trigger incident channels automatically.

The key trade-off: PagerDuty gives you more power at higher cost and configuration complexity. We give you enough for most SRE teams with far less toil. If you're running a complex global NOC with 50+ rotation rules, PagerDuty's flexibility is worth the overhead. If you're managing a 20-person on-call rotation for a cloud-native SaaS product, our approach is faster to operate.

Status pages: integrated vs. bolted on

Managing status page updates during an incident is one of the highest-friction tasks in incident response. The responder is deep in troubleshooting but needs to update customers in near-real-time.

We include status page functionality in incident.io. Updates can trigger automatically from workflow rules (e.g., "when SEV1 is declared, post 'Investigating' to status page"). PagerDuty's status page capability comes from Statuspage, a separate product with its own pricing tier. Opsgenie requires a third-party integration. This gap means manual updates fall through the cracks during your highest-severity incidents.

Pricing breakdown: hidden costs and TCO analysis

The "per-user" pricing model hides significant variation in total cost of ownership. Here's an honest breakdown for a 50-person engineering team with 25 engineers on on-call rotation.

TCO comparison: 50-person team (annual)

| Cost component | incident.io (Pro) | PagerDuty (Professional) |
|---|---|---|
| Base platform (50 users) | $25 x 50 x 12 = $15,000 | ~$41 x 50 x 12 = $24,600 |
| On-call (25 users) | $20 x 25 x 12 = $6,000 | Bundled |
| Status page | Included | ~$348-5,000 (separate product) |
| Process automation add-on | Included in workflows | ~$20 x 50 x 12 = $12,000 (est.) |
| Annual total | $21,000 | $37,000-42,000 |
| Savings with incident.io | $16,000-21,000/year (43-50%) | |

A 50-person team using incident.io Pro with on-call pays approximately $21,000 per year. The equivalent PagerDuty setup (Professional plan plus status page plus process automation) runs $37,000-42,000 per year based on average PagerDuty contract data. That's a $16,000-21,000 annual difference.

We're transparent about the on-call add-on because it's a fair criticism: on-call costs extra rather than being bundled into base pricing. The breakdown is fully documented so you can model your specific configuration. The rule for any platform you evaluate: price every option at the all-in cost for your specific user count and feature requirements. Base price comparisons are marketing, not budgeting.
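To model your own configuration, the arithmetic from the table can be reproduced in a few lines. The default prices below are the figures and estimates quoted in this article as of February 2026, not authoritative list prices; the function names are our own and you should replace the defaults with your actual quotes.

```python
def incident_io_pro_annual(users: int, on_call_users: int,
                           base: float = 25, on_call: float = 20) -> float:
    """Annual cost: per-user base plus the on-call add-on for engineers in the rotation."""
    return (base * users + on_call * on_call_users) * 12


def pagerduty_professional_annual(users: int, base: float = 41, automation: float = 20,
                                  status_page: tuple[float, float] = (348, 5000)) -> tuple[float, float]:
    """Annual (low, high) cost: base plus automation add-on, plus a status page price range."""
    per_user_total = (base + automation) * users * 12
    return per_user_total + status_page[0], per_user_total + status_page[1]


if __name__ == "__main__":
    ours = incident_io_pro_annual(users=50, on_call_users=25)
    low, high = pagerduty_professional_annual(users=50)
    print(f"incident.io Pro: ${ours:,.0f}")             # $21,000
    print(f"PagerDuty:       ${low:,.0f}-${high:,.0f}")  # $36,948-$41,600
    print(f"Difference:      ${low - ours:,.0f}-${high - ours:,.0f}")
```

The unrounded PagerDuty range is $36,948-$41,600, which the table rounds to $37,000-42,000.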

Making the switch: a 90-day migration plan

Migrating incident management tooling is like changing engines mid-flight. Incidents keep happening while you're setting up. Here's a four-phase plan that keeps operations running during the transition.

Phase 1: Discovery and planning (Week 1) - Audit your current integrations, service definitions, and on-call schedules. Set up your incident.io trial and connect your primary monitoring tool. We provide dedicated migration tools that import PagerDuty schedules and policies automatically. Define your baseline metrics: MTTR, coordination time, post-mortem completion rate.

Phase 2: Parallel run (Weeks 2-3) - Run both systems simultaneously for non-critical alerts. When a SEV2 fires, let incident.io handle coordination while your old tool runs in the background. After five to ten incidents, you'll have direct comparison data.

Phase 3: Gradual migration (Weeks 3-4) - Migrate service-by-service. Update Datadog webhook URLs one service at a time. Use our game day feature to run fake incidents and build confidence before engineers take their first live on-call shift.

Phase 4: Full cutover and optimization (Week 4+) - Complete migration of all services, decommission legacy tooling, and run your first 30-day retrospective using the Insights dashboard. Fine-tune workflows: add custom fields for deployment correlation, integrate Jira for follow-up tasks, and configure automated status page rules for SEV1 incidents. Most teams complete the full migration in 14-30 days. Our automated runbook guide covers how to reduce MTTR further during the optimization phase.

Etsy, for example, dropped MTTR from 42 to 28 minutes within 90 days of full cutover.
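To track the same kind of before-and-after number for your own migration, compute the Phase 1 baseline from your incident export and compare it after cutover. A minimal sketch, assuming each incident is a pair of detected and resolved timestamps (the sample timestamps are made up):

```python
from datetime import datetime
from statistics import mean


def mttr_minutes(incidents: list[tuple[datetime, datetime]]) -> float:
    """Mean minutes from detection to resolution across (detected, resolved) pairs."""
    return mean((resolved - detected).total_seconds() / 60 for detected, resolved in incidents)


def percent_change(before: float, after: float) -> float:
    """Relative change in percent; negative means the metric went down."""
    return (after - before) / before * 100


if __name__ == "__main__":
    baseline = [
        (datetime(2026, 1, 5, 10, 0), datetime(2026, 1, 5, 10, 40)),
        (datetime(2026, 1, 9, 12, 0), datetime(2026, 1, 9, 12, 44)),
    ]
    print(f"Baseline MTTR: {mttr_minutes(baseline):.0f} min")   # 42 min
    print(f"42 -> 28 min is {percent_change(42, 28):.1f}%")     # -33.3%
```

Use the same detection and resolution definitions before and after the switch, or the comparison measures your bookkeeping rather than your tooling.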

Which tool fits your team?

Here's our honest match by company stage and technical maturity:

- Startup (10-50 engineers): incident.io Team plan ($25/user/month all-in with on-call on annual billing) or BetterStack. You need fast setup and low configuration overhead, not 700+ integrations you'll never use.

- Mid-market (50-500 engineers, Slack-centric): incident.io Pro ($45/user/month all-in). This is the strongest option for teams running Kubernetes, handling 5-20+ incidents per month, and wanting coordination automation from day one.

- Mid-market evaluating Rootly or FireHydrant: All three are credible. We win on adoption speed and opinionated defaults. Rootly wins on AI reasoning transparency. FireHydrant wins if you prefer a web-first workflow. All three will beat a manual Slack + PagerDuty setup.

- Enterprise (500+ engineers, existing PagerDuty contract): Keep PagerDuty for alerting and add incident.io for coordination. They integrate directly, and many teams run both: PagerDuty handles the page, incident.io handles everything after the alert fires.

- Enterprise (ITIL-heavy, ServiceNow standardization): ServiceNow is the right fit for formal ITSM with CMDB and change management. It's not built for SRE velocity, but it handles ITIL compliance comprehensively.

- Teams currently on Opsgenie: Start your migration now. The April 2027 EOL is not far. incident.io, Rootly, and BetterStack all offer migration tooling. Waiting until Q1 2027 to migrate a 24/7 on-call rotation is a risk you can avoid.

"My favourite thing about the product is how it lets you start somewhere simple, with a focus on helping you run incident response through Slack. Once you're ready for more, it's got great features you can dive into (post-mortem templates, action item tracking) and integrations with all the major tools (Statuspage, GitHub, etc) you'd expect." - Chris S. on G2

If your team is ready to move from tool sprawl to a single coordination layer, book a demo with our team and see how 2-minute coordination time compares to your current baseline.

Key terminology

MTTR (Mean Time To Resolution): The average time from when an incident is detected to when service is fully restored. The single most important metric for measuring incident response effectiveness. Track it quarter-over-quarter using your platform's Insights dashboard.

Toil: Repetitive, manual work that scales linearly with incident volume and produces no lasting improvement. Creating Slack channels manually, updating status pages mid-incident, and reconstructing post-mortem timelines from memory are all toil. Automated runbooks and workflow automation directly reduce toil.

ChatOps: The practice of running operational workflows inside a chat platform (Slack or Microsoft Teams) via commands and bots. In incident management, ChatOps means declaring, escalating, and resolving incidents via slash commands without leaving the chat interface. It reduces cognitive load by keeping response in context.

SRE (Site Reliability Engineering): A discipline that applies software engineering principles to infrastructure and operations problems. As defined in Google's SRE Workbook, SREs own reliability targets (SLOs), on-call rotations, and post-incident reviews.

Post-mortem: A structured analysis conducted after an incident to document what happened, why it happened, and what changes prevent recurrence. A blameless post-mortem focuses on system and process failures, not individual mistakes. Modern platforms capture timelines automatically so post-mortems reflect reality rather than reconstructed memory.

Coordination overhead: The time spent assembling the team, creating incident channels, finding context, and updating stakeholders before troubleshooting begins. Coordination overhead can consume a significant share of MTTR in teams using manual or legacy tooling. Eliminating it is the primary lever for MTTR reduction.

Service Catalog: A structured inventory of your services, their owners, dependencies, and associated runbooks. In incident.io, the Service Catalog connects to your alert pipeline so the right owner is automatically pulled into an incident channel the moment the alert fires.
