Best incident postmortem software for SRE teams in 2026

Updated February 5, 2026

TL;DR: The best postmortem software captures incident data automatically during response, not after. Manual reconstruction takes 60-90 minutes per incident and produces inaccurate timelines. Modern platforms like incident.io automate timeline capture through Slack-native workflows, reducing postmortem completion time to 15 minutes. Look for tools that integrate with your observability stack, eliminate tool sprawl, and provide measurable MTTR insights. Total cost of ownership matters: hidden on-call add-ons can double your actual spend.

Manual postmortem reconstruction costs engineering teams thousands of dollars monthly. After a P1 incident resolves at 3 AM, engineers wait three days before opening a blank document. They scroll through 400 Slack messages, check PagerDuty history, and try to remember Zoom call details. Ninety minutes later, they have an incomplete timeline that is probably inaccurate because memory fades and context disappears.

This is the postmortem tax. The solution is not a better text editor or template. The solution is automated data capture during the incident itself, so your postmortem writes itself from objective facts rather than subjective recall.

What SREs actually need in postmortem tools

The incident management market is crowded with platforms claiming to "streamline" your postmortem process. Most hand you a template and call it automation. That misses the point entirely. SREs need tools that eliminate the reconstruction burden by capturing timeline data as the incident unfolds.

Automated timeline capture vs. manual reconstruction

Manual postmortem reconstruction takes 60-90 minutes per incident when engineers piece together data from Slack, monitoring tools, and call recordings after the fact. The problem compounds with distributed teams, where context is spread across time zones and communication channels. For complex incidents that also touch Jira tickets and CI/CD pipelines, reconstruction can stretch to hours or even days.

Our automated timeline capture works differently. We record every action as it happens: Slack messages in incident channels, role assignments via slash commands, decisions made during video calls, and severity changes. When you type /inc resolve, we've already written 80% of your postmortem using data captured during response.

"Incident has transformed our incident response to be calm and deliberate. It also ensures that we do proper post-mortems and complete our repair items." - Mike H. on G2

The accuracy difference is substantial. Manual reconstruction relies on memory, which degrades rapidly. Google's SRE book emphasizes that effective postmortems require objective data rather than subjective recall. Automated capture provides precise timestamps for when specific actions occurred, who made key decisions, and what data informed those choices.
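To make the idea concrete, here is a minimal sketch of timeline capture as an append-only event log. It is an illustration only, not incident.io's actual data model or API: the `TimelineEvent` and `IncidentTimeline` classes, their field names, and the `INC-123` identifier are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass(frozen=True)
class TimelineEvent:
    """One captured action. All field names are illustrative assumptions."""
    at: datetime   # when the action happened (UTC)
    actor: str     # person or system that performed it
    kind: str      # e.g. "alert", "message", "severity_changed"
    detail: str    # human-readable description


@dataclass
class IncidentTimeline:
    """Append-only log of events recorded while the incident is in progress."""
    incident_id: str
    events: List[TimelineEvent] = field(default_factory=list)

    def record(self, actor: str, kind: str, detail: str,
               at: Optional[datetime] = None) -> TimelineEvent:
        # Timestamp at capture time unless the source supplies its own.
        event = TimelineEvent(at or datetime.now(timezone.utc), actor, kind, detail)
        self.events.append(event)
        return event

    def render(self) -> str:
        # Sort by timestamp so late-arriving events still land in order.
        lines = [f"Timeline for {self.incident_id}"]
        for event in sorted(self.events, key=lambda e: e.at):
            lines.append(
                f"{event.at:%H:%M:%S} UTC  [{event.kind}] {event.actor}: {event.detail}"
            )
        return "\n".join(lines)


start = datetime(2026, 2, 5, 3, 0, 0, tzinfo=timezone.utc)
timeline = IncidentTimeline("INC-123")
timeline.record("alice", "severity_changed", "Severity set to P1",
                at=start + timedelta(minutes=4))
timeline.record("monitoring", "alert", "API 5xx rate above threshold", at=start)
draft = timeline.render()
print(draft)
```

Because every event carries its own timestamp, the draft is ordered by what happened rather than by what anyone remembers, which is the property the rest of this section relies on.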

Deep integration with observability and chat tools

Tool sprawl kills postmortem quality. When incident response happens across PagerDuty for alerting, Slack for coordination, Datadog for metrics, and Jira for follow-ups, critical context gets trapped in silos. Engineers waste time context-switching instead of troubleshooting.

Slack-native incident management means we live where your team already works. When Datadog fires an alert, we auto-create a dedicated incident channel, page the on-call engineer, and start capturing the timeline without anyone leaving Slack. Our commands like /inc escalate and /inc assign feel natural because they're messages, not separate UI interactions.

Integration depth matters beyond chat. We pull graphs directly from Datadog or Prometheus into postmortem reports, sync follow-up tasks to Jira or Linear automatically, and export finished documents to Confluence or Notion with one click. This creates a single source of truth instead of scattered artifacts.

"My favourite thing about the product is how it lets you start somewhere simple, with a focus on helping you run incident response through Slack... It's got great features you can dive into (post-mortem templates, action item tracking) and integrations with all the major tools (Statuspage, GitHub, etc) you'd expect." - Chris S. on G2

Teams reduce MTTR by 37% simply by eliminating the coordination overhead that comes from juggling multiple tools during an active incident.

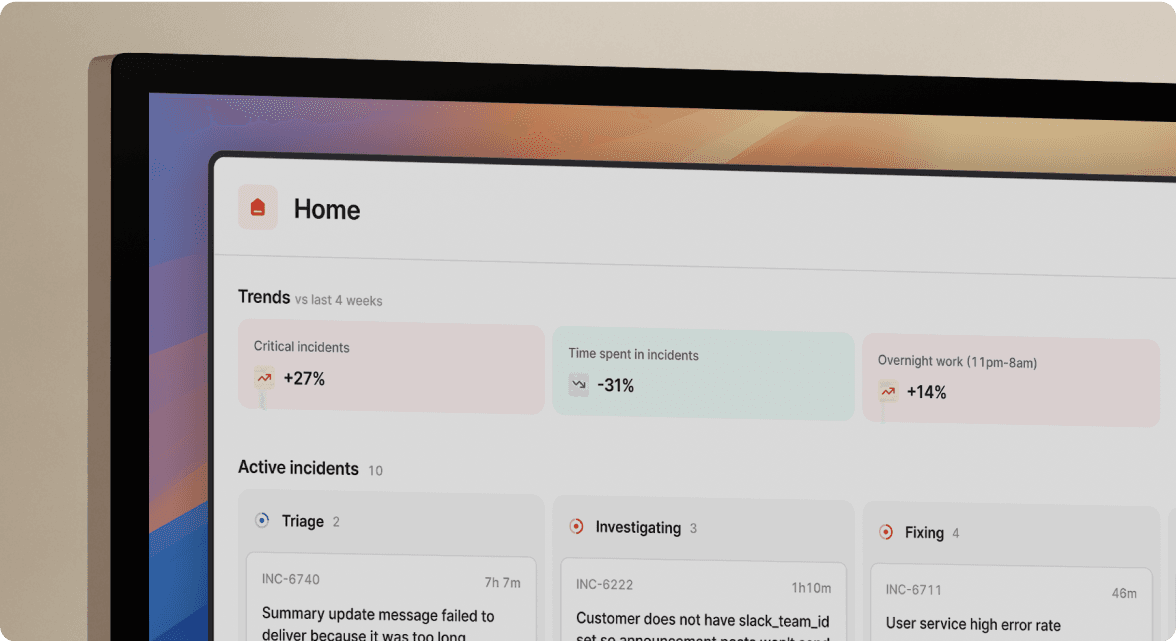

Actionable reliability insights and MTTR tracking

Postmortems should do more than document what happened. They should reveal patterns: which services generate the most incidents, whether MTTR is improving quarter over quarter, and how many incidents stem from recent deployments versus infrastructure failures.

Most teams cannot answer these questions because incident data lives in disparate systems. We aggregate data automatically, showing MTTR trends, incident frequency by service, and top incident types in dashboards that require no manual data entry.

This visibility turns postmortems from compliance exercises into strategic tools. When your VP of Engineering asks whether that Kubernetes migration reduced outages, you pull up the dashboard showing incidents dropped 35% in the affected services. When planning next quarter's reliability budget, you have data proving database connection pool issues caused 40% of incidents.

Structured postmortems with data analysis help teams identify patterns and prevent recurring incidents, but only when teams actually analyze the data. Platforms that make analysis automatic rather than aspirational deliver measurable reliability improvements.
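As a rough illustration of the analysis described above, the sketch below computes MTTR per service and each service's share of incidents from a list of incident records. The `Incident` record shape, the service names, and the sample timestamps are assumptions made up for the example; a real platform would pull these from captured incident data.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Dict, List


@dataclass(frozen=True)
class Incident:
    """Hypothetical incident record; field names are illustrative."""
    service: str
    detected_at: datetime
    resolved_at: datetime


def mttr_minutes_by_service(incidents: List[Incident]) -> Dict[str, float]:
    """Mean time from detection to resolution, in minutes, per service."""
    durations = defaultdict(list)
    for inc in incidents:
        minutes = (inc.resolved_at - inc.detected_at).total_seconds() / 60
        durations[inc.service].append(minutes)
    return {service: mean(values) for service, values in durations.items()}


def incident_share_by_service(incidents: List[Incident]) -> Dict[str, float]:
    """Fraction of all incidents attributed to each service."""
    counts = defaultdict(int)
    for inc in incidents:
        counts[inc.service] += 1
    total = len(incidents)
    return {service: count / total for service, count in counts.items()}


t0 = datetime(2026, 1, 10, 9, 0)
sample = [
    Incident("checkout", t0, t0 + timedelta(minutes=30)),
    Incident("checkout", t0, t0 + timedelta(minutes=60)),
    Incident("search", t0, t0 + timedelta(minutes=20)),
    Incident("auth", t0, t0 + timedelta(minutes=50)),
]
mttr = mttr_minutes_by_service(sample)
share = incident_share_by_service(sample)
print(mttr)
print(share)
```

The point of the sketch is that neither number needs manual data entry once detection and resolution times are captured automatically.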

Top 5 incident postmortem tools for SREs

We evaluated platforms based on timeline automation, integration depth, AI capabilities, pricing transparency, and setup time. These five tools represent different approaches to the postmortem challenge, each with specific strengths for different team needs.

1. incident.io: Best for automated, Slack-native postmortems

We treat incident response as the source of the postmortem. When alerts fire from Datadog or Prometheus, we auto-create dedicated incident channels, page on-call engineers via Slack or Microsoft Teams, and start capturing timeline data immediately. Every message, command, and role assignment gets logged automatically.

Our AI SRE assistant automates up to 80% of incident response, analyzing past incidents to suggest likely causes. Scribe transcribes incident calls from Google Meet or Zoom in real-time, extracting key decisions without requiring a dedicated note-taker. When you resolve the incident, your postmortem is already 80% complete.

"Without incident.io our incident response culture would be caustic, and our process would be chaos. It empowers anybody to raise an incident - and helps us quickly coordinate any response... Its post-mortem and follow-up tooling is simple, yet detailed, and gives us the structure to quickly share learnings from our incidents." - Matt B. on G2

We get you operational quickly. Teams report integrating with existing tools in less than 20 days and full implementation across multiple teams in just 45 days for organizations with 200+ engineers. We integrate with Datadog, PagerDuty, Jira, GitHub, and Confluence.

Pricing: Pro plan costs $25/user/month for incident response. On-call management adds $20/user/month, making the total $45/user/month if you need full capabilities. A free Basic plan covers essential features for small teams testing our platform.

Best for: Engineering teams wanting Slack-native workflows, fast deployment, and AI-powered automation without extensive training requirements.

Limitations: We're opinionated with strong defaults. Teams wanting infinite workflow customization may find constraints frustrating. We integrate with existing monitoring tools rather than replacing them, so you still need Datadog or Prometheus.

"I didn't know about incident.io until I've had the opportunity to learn from him at the current company I'm working for. I really like its integration with Slack, quickly and visually alerting us to an issue. The possibility of customization and the alerts about actions to be completed and executed is very guiding." - Cassio F. on G2

2. PagerDuty: Best for teams needing legacy alerting continuity

PagerDuty dominates the alerting market with 700+ integrations and battle-tested reliability. The platform excels at alert routing, escalation policies, and on-call scheduling. The Incident Response product includes postmortem capabilities, though they feel secondary to alerting. Generative AI Summaries and Copilot help create status updates, but the platform remains web-first rather than chat-native. User reviews note that PagerDuty lacks serious workflow management for incident coordination.

Pricing: Starts at $21/user/month with tiered plans. Average contracts run $64,621 per year, with 100-person teams frequently exceeding $119,000 annually when adding necessary features.

Best for: Organizations with existing PagerDuty investments who need proven alerting reliability.

Limitations: The platform focuses on alerting, leaving teams to coordinate response in separate tools and document postmortems elsewhere.

3. Blameless: Best for strict SRE methodology and SLO tracking

Blameless prioritizes SRE practices and reliability engineering, offering deep SLO tracking, error budget management, and structured blameless retrospectives. The platform provides integrations with alerting and ticketing tools and Generative AI for crafting updates.

Pricing: Essentials tier starts at $20 per user per month for the first 50 users. Enterprise pricing requires contact for SLO Manager and advanced AI features.

Best for: Engineering organizations with mature SRE practices who need comprehensive SLO tracking alongside incident management.

Limitations: Can feel heavyweight for smaller teams or those just establishing reliability practices.

4. Rootly: A strong alternative for Slack-based workflows

Rootly offers Slack-native incident management with automated timeline logging and postmortem generation. Its AI features include automated summaries that speed up postmortem creation, though with less depth than incident.io's.

Pricing: Not publicly disclosed. Contact required for quotes.

Best for: Teams wanting Slack-native incident coordination with customizable workflows.

5. Jira Service Management: Best for Atlassian-heavy ecosystems

Jira Service Management (JSM) serves organizations already invested in the Atlassian ecosystem. The challenge is that JSM originated as an IT service desk platform, not a real-time incident coordination tool. When your API returns 500 errors, navigating JSM's service desk interface costs precious minutes.

Pricing: Premium tier pricing varies by team size, starting around $47-55 per agent per month. Setup is complex, taking 1-2 weeks with 2-4 hours of training per user.

Best for: Organizations with heavy Atlassian investment who prioritize ecosystem consistency over specialized incident management capabilities.

Comparison table: Features, pricing, and integrations

| Platform | Automated timeline | AI capabilities | Pricing (per user/month) | Setup time | Best for |
|---|---|---|---|---|---|
| incident.io | Yes, we capture Slack messages, commands, call transcriptions automatically | Our AI SRE automates up to 80% of response, Scribe transcription, automated postmortem drafts | $25 base + $20 on-call = $45 total | Days to weeks | Slack-native teams wanting fast deployment and AI automation |
| PagerDuty | Limited, requires manual documentation | AI summaries, Copilot assistant, AIOps alert grouping | $21+ base, averages $64K annually for 100 users | 1-2 weeks | Teams needing proven alerting with 700+ integrations |
| Blameless | Yes, with SLO/error budget tracking | Generative AI for updates, AI-driven incident goals | $20+ Essentials, Enterprise custom | 2-3 weeks | SRE teams focused on service reliability methodology |
| Rootly | Yes, Slack-native logging | AI-assisted summaries | Contact for pricing | 1-2 weeks | Teams wanting customizable Slack workflows |
| Jira Service Management | Manual, ticket-based | Limited, relies on Atlassian Intelligence | $47-55 Premium tier | 1-2 weeks | Atlassian-committed organizations |

The pricing differences matter more than they appear. PagerDuty's low entry price hides costs that accumulate as you add necessary features. Our transparent pricing includes capabilities competitors gate behind enterprise tiers. JSM requires the Premium tier for meaningful incident management, making it more expensive than alternatives with better-suited architectures.

How to calculate ROI for postmortem software

Engineering time is expensive. A senior site reliability engineer costs approximately $89-115 per hour fully loaded with benefits and overhead. When that engineer spends 90 minutes reconstructing a postmortem from memory, you burn roughly $134-173 per incident in pure labor cost (1.5 hours × the hourly rate).

Multiply by incident frequency. A team handling 18 incidents monthly spends:

- 18 incidents × 90 minutes = 1,620 minutes (27 hours) monthly

- 27 hours × $100/hour average = $2,700 monthly

- $2,700 × 12 months = $32,400 annually just on postmortem reconstruction

With automated timeline capture reducing that work to 15 minutes of editing:

- 18 incidents × 15 minutes = 270 minutes (4.5 hours) monthly

- 4.5 hours × $100/hour = $450 monthly

- $450 × 12 months = $5,400 annually

Net savings: $27,000 per year in engineering time for one team. That does not count the accuracy improvements that reduce repeat incidents, or the faster time-to-learning that prevents similar failures.
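The arithmetic above can be reproduced with a short sketch so you can plug in your own numbers. The function name and parameters are our own for illustration; the inputs (18 incidents a month, 90 versus 15 minutes per postmortem, $100/hour) are the figures used in this section.

```python
def annual_postmortem_cost(incidents_per_month: int,
                           minutes_per_postmortem: float,
                           hourly_rate: float) -> float:
    """Annual labor cost of writing postmortems, in dollars."""
    hours_per_month = incidents_per_month * minutes_per_postmortem / 60
    return hours_per_month * hourly_rate * 12


# Figures from this section: 18 incidents/month at a $100/hour blended rate.
manual = annual_postmortem_cost(18, 90, 100)     # reconstruction from memory
automated = annual_postmortem_cost(18, 15, 100)  # editing a captured draft
savings = manual - automated

print(f"Manual:    ${manual:,.0f}")
print(f"Automated: ${automated:,.0f}")
print(f"Savings:   ${savings:,.0f}")
```

Swap in your own incident volume and loaded hourly rate; the savings scale linearly with both.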

Compare that to platform costs. For a 50-user organization:

- Our Pro plan: $45/user/month × 50 users × 12 months = $27,000 annually

- PagerDuty (average): $64,621 annually for 100 users ≈ $32,000 for 50 users

- Jira Service Management Premium: Approximately $28,000-32,000 annually for 50 users
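For readers who want to rerun these platform figures at a different team size, here is a minimal sketch. The helper names are hypothetical. The PagerDuty estimate scales the average contract figure linearly from 100 users to 50, the same simplification used in the list above; real contracts rarely scale that cleanly.

```python
def annual_license_cost(price_per_user_month: float, users: int) -> float:
    """Per-seat pricing: monthly price × seats × 12 months."""
    return price_per_user_month * users * 12


def scale_contract(annual_cost: float, from_users: int, to_users: int) -> float:
    """Linear scaling of a known contract value (a simplifying assumption)."""
    return annual_cost * to_users / from_users


# $25 incident response + $20 on-call, for 50 users.
pro_with_on_call = annual_license_cost(25 + 20, 50)

# Average contract value quoted in this article, assumed to cover 100 users.
pagerduty_estimate = scale_contract(64_621, from_users=100, to_users=50)

print(f"Pro plan with on-call, 50 users: ${pro_with_on_call:,.0f}")
print(f"PagerDuty estimate, 50 users:    ${pagerduty_estimate:,.0f}")
```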

When structured postmortems help identify and prevent even a few recurring incidents, the operational savings compound beyond pure postmortem time.

"Incident.io stands out as a valuable tool for automating incident management and communication... Another handy feature is its ability to automate routine actions, such as postmortem reports generation. This automation can significantly reduce the time spent on manual, repetitive tasks." - Vadym C. on G2

TCO analysis should include opportunity cost. What could your senior engineers build if they reclaimed the 22.5 hours a month (27 hours minus 4.5) currently spent reconstructing postmortems? That time redirected to proactive reliability work delivers compounding value beyond direct cost savings.

Building a blameless culture with the right tools

Blameless postmortems focus on identifying contributing causes without indicting individuals for bad judgment. Google's SRE book defines the principle: assume everyone involved had good intentions and made reasonable decisions with available information. The goal is organizational learning, not fault assignment.

Tools enable blameless culture by providing objective data. When timelines capture exactly what happened and when, postmortems shift from "who messed up?" to "what system allowed this to occur?" Automated capture removes subjective memory bias.

Three practices strengthen blameless postmortems:

1. Focus follow-ups on system improvements, not individual performance. Every postmortem should generate action items that improve resilience: better monitoring, clearer runbooks, automated rollback capabilities, or additional testing.

2. Separate incident review from performance management. Make it explicit that postmortem discussions will not factor into performance evaluations. This gives people confidence to escalate issues without fear.

3. Celebrate effective incident response publicly. When teams handle incidents well, highlight those actions. This reinforces positive behaviors and makes incident participation feel valuable rather than punitive.

"incident.io is extremely easy to use... It helps both during an incident and the post-incident/post-mortem process by allowing users with little training to manage incidents like pros." - Roro O. on G2

The right platform makes blameless culture practical. When postmortems write themselves from captured data, teams spend review time analyzing root causes and designing preventive measures instead of arguing about who said what. When our AI identifies likely code changes that caused incidents, discussions focus on deployment practices and testing gaps rather than blaming the engineer who merged the PR.

Stop writing postmortems from memory

Manual postmortem reconstruction is technical archaeology. You dig through Slack threads, PagerDuty logs, and fading memories to piece together what probably happened three days ago. The result is inaccurate timelines, missed learnings, and 90 minutes of senior engineer time wasted.

The fix is automated timeline capture during the incident itself. Platforms that record every action as it happens produce postmortems from objective data rather than subjective recall. This improves accuracy, reduces engineering burden, and shifts team focus from documentation to analysis.

We've shown that SRE teams save $27,000 annually in reconstruction time alone when using platforms with automated capture. Add the reliability improvements from better data analysis, the reduction in repeat incidents from structured learning, and the cultural benefits of blameless review processes, and the ROI becomes compelling.

Choose tools based on how they fit your existing workflow. If your team lives in Slack and you want operational capability quickly, schedule a demo to see automated postmortem generation in action. Run one real incident through our platform, type /inc resolve, and watch your postmortem draft itself from captured timeline data. If you need deep SLO tracking and formal reliability engineering methodology, evaluate Blameless. If you have heavy Atlassian investment, consider JSM.

The postmortem software decision comes down to how much your engineers' time is worth. Every hour spent reconstructing timelines is an hour not spent improving reliability, building features, or preventing the next incident. We don't eliminate postmortems. We help you do them right without burning expensive engineering hours on clerical work.

Key terms glossary

MTTR (Mean Time To Resolution): Average time from incident detection to full resolution. Industry benchmarks range from 30 to 60 minutes for P1 incidents.

Automated timeline capture: Systems that record incident actions in real-time (Slack messages, commands, role changes) without manual note-taking during response.

Slack-native: Platforms architected to handle the full incident lifecycle within Slack using slash commands rather than requiring separate web interfaces.

AI SRE: Machine learning systems that analyze past incidents to identify root causes, suggest fixes, and automate coordination tasks during active response.

Blameless postmortem: Retrospective analysis focusing on systemic contributing factors rather than individual fault, following Google SRE practices for organizational learning.
