AI Root Cause Analysis: Accuracy Testing Guide

Updated February 20, 2026

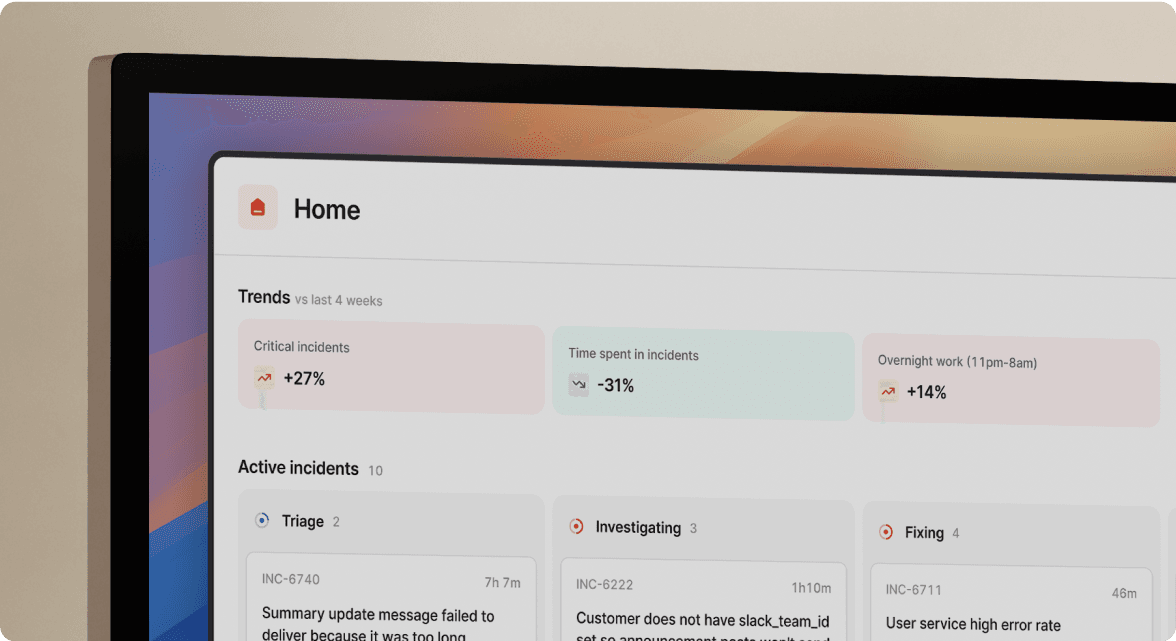

TL;DR: Evaluate AI in incident management using measurable precision and recall, not marketing promises. Target tools with greater than 80% precision (relevant suggestions) even if recall is lower, because false positives destroy trust during high-stress outages. The difference between a ChatGPT wrapper and a true AI assistant lies in integration depth. Can it access your Service Catalog, recent deploys, and alert history, or just Slack logs? Test AI accuracy during trials by running historical incidents with known root causes through the system and scoring AI suggestions against actual causes.

Marketing claims about AI-powered incident response flood your inbox weekly. Vendors promise self-healing infrastructure and automated root cause analysis. But when production is down at 3 AM and customers are impacted, you need metrics, not magic. Here's how to evaluate whether AI actually helps or just adds another dashboard to ignore.

The reality of AI in incident response: Correlation vs. Causation

AI excels at pattern matching. It scans thousands of data points, identifies temporal correlations, and surfaces relationships humans might miss. But correlation is not causation, and this distinction creates the primary failure mode in incident management AI.

Consider a database migration that starts at 2:00 PM, causing increased latency as it holds table locks. At 2:03 PM, a routine cache flush job runs (scheduled daily), causing a brief CPU spike. An AI trained on correlation might suggest "high CPU from cache flush caused latency" because these events co-occur. The actual cause is the database migration holding locks. Without deep integration and an understanding of the causal relationships between system components, AI defaults to temporal correlation.
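To make the failure mode concrete, here is a minimal sketch of a "nearest change wins" heuristic applied to the scenario above. The `ChangeEvent` type, the `routine` flag, the calendar date, and the 2:05 PM alert time are all assumptions made for illustration; they do not describe how any particular product ranks causes.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class ChangeEvent:
    name: str
    at: datetime
    routine: bool  # assumption: True for scheduled jobs with a clean history


def nearest_preceding(events, alert_at):
    """Pure temporal correlation: pick the latest event before the alert."""
    candidates = [e for e in events if e.at <= alert_at]
    return max(candidates, key=lambda e: e.at)


events = [
    ChangeEvent("database migration", datetime(2026, 2, 20, 14, 0), routine=False),
    ChangeEvent("cache flush job", datetime(2026, 2, 20, 14, 3), routine=True),
]
alert_at = datetime(2026, 2, 20, 14, 5)  # assumed alert time

naive = nearest_preceding(events, alert_at)
# Dropping jobs that run every day without incident changes the answer.
filtered = nearest_preceding([e for e in events if not e.routine], alert_at)

print(naive.name)     # cache flush job
print(filtered.name)  # database migration
```

Filtering out routine jobs is still only a heuristic. It works here because the cache flush has a daily history of not causing incidents, which is exactly the kind of context a Slack-only tool would not have.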

Traditional AIOps platforms focus on event correlation and anomaly detection, clustering hundreds of alerts into manageable groups. Generative AI, as seen in AI-powered incident management platforms, analyses observability data, correlates deployments with error spikes, and explains potential causes in plain language. The distinction matters because one reduces noise while the other suggests fixes with explanations.

AI functions as a pattern-matching engine, not a reasoning engine. This makes it excellent at surfacing context (recent deploys, config changes, unusual metrics) but requires human judgment to understand why a specific change caused a specific failure in your unique system.

Defining the metrics: Precision and recall in root cause analysis

We evaluate AI using two standard machine learning metrics: precision and recall. These quantify AI accuracy in ways that transfer directly to incident management.

Precision: The "no noise" metric

Calculate precision as True Positives divided by True Positives plus False Positives. Applied to incident root cause AI, precision equals relevant AI suggestions divided by total AI suggestions.

If an AI flags 10 potential causes and only 6 are actually relevant to the incident, precision is 60%. Higher precision means fewer rabbit holes. Every false positive burns engineer time during the most stressful moments.
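The arithmetic above can be sketched in a few lines. The function name and the choice to return 0.0 when there are no suggestions are assumptions made for this example.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Fraction of AI suggestions that were actually relevant."""
    total = true_positives + false_positives
    if total == 0:
        return 0.0  # assumption: score "no suggestions" as 0 instead of undefined
    return true_positives / total


# 10 suggestions flagged, 6 of them relevant
print(f"{precision(6, 4):.0%}")  # prints 60%
```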

Target greater than 80% precision. At 70% precision, three out of every ten suggestions waste investigation time. At 50% precision, you're essentially flipping coins. Most security teams prioritize false positive reduction because consistently overloading the team is unsustainable.

Recall: The "don't miss it" metric

Calculate recall as True Positives divided by True Positives plus False Negatives. Applied to incident root cause AI, recall equals relevant AI suggestions divided by total actual relevant causes.

If there were 3 actual contributing factors to an incident but the AI only identified one, recall is 33%. High recall means the algorithm returns most relevant results, whether or not irrelevant ones are also returned.
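A matching sketch for recall, using sets so that irrelevant suggestions do not affect the score. The cause labels are hypothetical placeholders, not taken from a real incident.

```python
def recall(suggested: set[str], actual: set[str]) -> float:
    """Fraction of the actual contributing causes that the AI surfaced."""
    if not actual:
        return 0.0  # assumption: nothing to find scores as 0
    return len(suggested & actual) / len(actual)


# Hypothetical labels: three real contributing factors, one of them found.
actual_causes = {"db-migration-locks", "missing-index", "pool-size-change"}
ai_suggestions = {"db-migration-locks", "cache-flush-cpu"}

print(f"{recall(ai_suggestions, actual_causes):.0%}")  # prints 33%
```

Note that the irrelevant "cache-flush-cpu" suggestion lowers precision but leaves recall unchanged, which is why the two metrics need to be read together.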

Target approximately 60% recall for AI assistants. As a platform, incident.io's AI SRE handles up to 80% of incident response, automating the most common patterns rather than attempting to catch every edge case. A 60% recall means the AI reliably flags frequent root causes (recent deploys, config changes) while accepting that novel failures will still require human expertise.

Why SREs should favour precision over recall

False positives (low precision) actively waste time. You investigate the CPU spike for 10 minutes, rule it out, and move on, but that's 10 minutes of customer impact. AI-powered incident management platforms help reduce alert fatigue, which otherwise impairs decision-making during incidents. The difference between 200 alerts and 3 meaningful alerts is the difference between panic and focus.

False negatives (low recall) are recoverable. If the AI misses a cause, you continue your normal investigation. No net harm occurs beyond the AI not helping. But when AI suggests 15 potential root causes, you stop trusting it and turn it off. When AI suggests 3 highly relevant causes, it becomes the first tool you check.
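If you want a single number that reflects this preference, one common option is the F-beta score, where a beta below 1 weights precision more heavily than recall. This is a standard metric rather than anything specific to incident tooling, and the two tool profiles below are hypothetical.

```python
def f_beta(precision: float, recall: float, beta: float = 0.5) -> float:
    """F-beta score; beta < 1 weights precision more heavily than recall."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Hypothetical trial results for two tools.
tool_a = {"precision": 0.85, "recall": 0.45}  # few suggestions, mostly right
tool_b = {"precision": 0.55, "recall": 0.90}  # many suggestions, noisy

for beta in (1.0, 0.5):
    a = f_beta(**tool_a, beta=beta)
    b = f_beta(**tool_b, beta=beta)
    print(f"beta={beta}: tool_a={a:.2f} tool_b={b:.2f}")
```

With these made-up numbers, the balanced score (beta of 1) ranks the noisy tool higher, while a beta of 0.5 ranks the precise tool higher, which matches the argument for favouring precision.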

A framework for testing AI root cause accuracy

Test AI features during your trial with real data from your environment. Here's a concrete three-step evaluation plan.

Step 1: The historical backtest

Run historical incidents with known root causes through the system to measure AI accuracy.

- Select test incidents: Choose 5-10 closed incidents where you definitively know the root cause. Mix severity levels and types (deployment failures, infrastructure issues, third-party dependencies).

- Export incident data: Gather Slack transcripts, timelines, alerts, and resolution details. By design, incident.io connects pull requests and surfaces updates from GitHub and GitLab integrations.

- Import into trial environment: Feed historical data during your trial. You can run fake incidents to sandbox incident.io without impacting billing.

- Score AI suggestions: Mark each AI suggestion as relevant (true positive) or irrelevant (false positive). Calculate precision per incident, then average across test cases.

- Measure recall: Did the AI flag the actual root cause? If the known cause was "bad database migration in PR #4872," did it surface that specific code change?

Target greater than 80% precision and approximately 60% recall across your test set.
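The scoring steps above can be sketched as a small script. The `BacktestResult` fields and the sample numbers are hypothetical, and recall is simplified here to "was the known root cause flagged?" per incident, following the question in the last step.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class BacktestResult:
    incident_id: str
    relevant_suggestions: int    # suggestions you marked as true positives
    irrelevant_suggestions: int  # suggestions you marked as false positives
    root_cause_flagged: bool     # did the AI surface the known root cause?


def score_backtest(results: list[BacktestResult]) -> tuple[float, float]:
    """Return (average per-incident precision, share of root causes flagged)."""
    if not results:
        raise ValueError("need at least one backtested incident")
    precisions = []
    for r in results:
        total = r.relevant_suggestions + r.irrelevant_suggestions
        precisions.append(r.relevant_suggestions / total if total else 0.0)
    recall = sum(r.root_cause_flagged for r in results) / len(results)
    return mean(precisions), recall


# Hypothetical scores from five closed incidents.
results = [
    BacktestResult("INC-1", 4, 1, True),
    BacktestResult("INC-2", 3, 0, True),
    BacktestResult("INC-3", 2, 1, False),
    BacktestResult("INC-4", 5, 0, True),
    BacktestResult("INC-5", 3, 1, False),
]
avg_precision, root_cause_recall = score_backtest(results)
meets_targets = avg_precision > 0.80 and root_cause_recall >= 0.60
print(f"precision={avg_precision:.0%} recall={root_cause_recall:.0%} pass={meets_targets}")
```

With only 5-10 incidents the averages move a lot per incident, so treat the result as a rough signal and look at the individual misses as well.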

Step 2: The context window stress test

AI quality depends heavily on what systems it can access. A ChatGPT wrapper that only sees Slack messages will miss the database migration that happened in GitLab 5 minutes before the alert fired. In practice, incident.io allows you to sync existing catalogs from Cortex or OpsLevel, or build one from scratch.

Test whether the AI understands your service dependency map:

- Good behaviour: "I searched the last 50 deploys to payment-service and found PR #8934 deployed at 14:23, 4 minutes before the first alert."

- Bad behaviour: "I don't have that information" when the data exists in connected systems, or worse, fabricating details.

Query for information requiring multi-system integration. "Who is the on-call engineer for the database team?" requires schedule system access. "Show me the runbook for error code DB_CONN_POOL_EXHAUSTED" requires knowledge base access. "What changed in the payment service in the last 30 minutes?" requires deployment pipeline visibility.

AI SRE connects telemetry, code changes, and past incidents, so it can link alerts to the changes and historical patterns behind them.

Step 3: The hallucination check

AI hallucinations occur when generative models confidently present incorrect or fabricated information as fact. During incident response, fabricated runbook steps or non-existent error codes can send responders down catastrophic paths.

Test intentionally:

- Query non-existent services: "What is the status of service-xyz-9999?" A trustworthy AI responds "I cannot find a service named service-xyz-9999 in the catalog." A hallucinating AI invents details about a fictional service.

- Request fake runbooks: "Pull up the runbook for error code FAKE-404-DOES-NOT-EXIST." The AI should state it cannot find documentation for that code.

- Ask about fictional incidents: "Summarize the incident from March 15, 2027" (a future date). The AI must recognize when data is missing rather than confabulating incident details.
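The probes above can be run as a repeatable check. This is a minimal sketch: `ask` stands in for however you query the assistant under test (a hypothetical callable, not a real API), and the keyword match is a crude assumption that you should back up by reading the answers yourself.

```python
from typing import Callable

# Probes taken from the list above; every one refers to something that does not exist.
PROBES = [
    "What is the status of service-xyz-9999?",
    "Pull up the runbook for error code FAKE-404-DOES-NOT-EXIST.",
    "Summarize the incident from March 15, 2027",
]

# Assumption: an honest answer contains one of these phrases.
NOT_FOUND_MARKERS = ("cannot find", "can't find", "could not find", "no record", "not found")


def run_hallucination_check(ask: Callable[[str], str]) -> dict[str, bool]:
    """Map each probe to True if the assistant admitted it has no data."""
    results = {}
    for probe in PROBES:
        answer = ask(probe).lower()
        results[probe] = any(marker in answer for marker in NOT_FOUND_MARKERS)
    return results


# Stand-in assistants, used only to show the two outcomes.
def honest_stub(question: str) -> str:
    return "I cannot find anything matching that request in the catalog."


def hallucinating_stub(question: str) -> str:
    return "service-xyz-9999 is healthy and was last deployed an hour ago."


print(run_hallucination_check(honest_stub))
print(run_hallucination_check(hallucinating_stub))
```

Any probe that maps to False deserves a manual look, since the assistant answered a question about something that does not exist.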

Common AI failure modes in incident management tools

Understanding how AI fails helps you spot these patterns during evaluation and set appropriate expectations.

Log correlation fallacy

AI identifies two simultaneous events and assumes causation. CPU spiked when errors increased, so AI suggests CPU exhaustion as root cause, missing that both are symptoms of an upstream database deadlock.

The wrapper problem

AI lacks access to external tools (GitHub, Jira, PagerDuty) and relies solely on chat logs. You copy a record and ask the model to summarize it. Nothing stays connected. The AI can't answer "what changed in the payment service?" because it has no payment service awareness beyond what someone typed in Slack.

Stale context

AI suggests fixes based on outdated runbooks. A 2022 runbook recommends restarting Redis cache, but your architecture migrated to Memcached in 2024. The AI surfaces the old Redis runbook because it appeared in past incidents.

Over-reliance on recency

AI assumes the most recent change caused the incident. It flags a frontend CSS deploy 2 minutes before the alert, missing that the actual cause was a backend API timeout introduced 3 days ago that only surfaced under high load.

"I appreciate how incident.io consolidates workflows that were spread across multiple tools into one centralized hub within Slack... I also like that incident.io takes a very opinionated stance on incident management, providing needed structure for teams that handle things differently." - Alex N. on G2

ChatGPT wrapper vs. true AI assistant

| Capability | ChatGPT Wrapper | True AI Assistant (incident.io AI SRE) |
|---|---|---|
| Data access | Slack logs you manually paste | Service Catalog, GitHub PRs, PagerDuty alerts, deployment history |
| Context window | Last 50 Slack messages | Full incident graph including service dependencies and past incident patterns |
| Root cause suggestions | "High CPU caused errors" (symptom correlation) | "PR #4872 changed DB pool settings 5 min before alert" (causal connection) |
| Knowledge freshness | Suggests fixes from pasted runbooks | Connects current architecture to live deployment data |
| Hallucination check | Invents plausible-sounding details for non-existent services | Returns "Cannot find service-xyz in catalog" |

How incident.io approaches AI: An assistant, not a replacement

Generic AI summarizes chat logs or searches knowledge bases. True AI incident management platforms act as an AI SRE teammate that investigates issues, identifies root causes, and suggests fixes. The distinction matters because one saves you 5 minutes of reading, the other saves you 30 minutes of investigation.

AI SRE: Context-aware root cause investigation

AI SRE pulls data from alerts, telemetry, code changes, and past incidents. When an alert fires, the system automatically searches recent deployments, configuration changes, and similar historical incidents to build context before a human even joins the incident channel.

That context includes past responses, such as knowing that a specific engineer rolled back a deploy and brought in the database team to fix a previous similar incident. This organizational memory eliminates the "didn't we see this before?" question that burns 10 minutes of every recurring incident.

Importantly, incident.io connects 40+ integrations with tools like PagerDuty, Opsgenie, Jira, GitHub, and Datadog. AI quality depends on integration breadth. An AI that sees GitHub PRs, Datadog metrics, and past incident outcomes provides suggestions grounded in your actual system behaviour.

The AI suggests specific pull requests with rationale. "I suggest looking at the deploy to the auth-service by @jane.doe 5 minutes ago, as it changed the database connection pool settings which could be related to the High Latency alert." This level of specificity (exact PR, exact engineer, exact config change, specific correlation to alert type) only works when AI accesses the service dependency graph, not just Slack logs.

"Clearly built by a team of people who have been through the panic and despair of a poorly run incident. They have taken all those learnings to heart and made something that automates, clarifies and enables your teams to concentrate on fixing, communicating and, most importantly, learning from the incidents that happen." - Rob L. on G2

Scribe: Automated timeline capture and transcription

Manual notetaking during incidents creates a terrible trade-off. Either pull an engineer away from troubleshooting to document what's happening or lose critical context for the post-mortem. Scribe provides AI-powered transcription and summarization for incident calls, eliminating this tax.

The system captures every Slack message, every /inc command, every role assignment, and transcribes incident calls automatically. When you type /inc resolve, incident.io immediately drafts automated post-mortems using captured timeline data, transcribed call notes, and key decisions. Engineers spend 10-15 minutes refining instead of 90 minutes writing from scratch.

"incident.io provides a one stop shop for all the orchestrations involved when managing an incident. Since implementing incident.io we have been able to manage all elements of an incident in a single place due to the integrations that the product offers, hugely improving our communication capabilities and response times." - Kay C. on G2

You can manually add or remove Scribe from incident calls when dealing with sensitive discussions or privacy concerns.

When AI says "I suggest looking at PR #4872 because it modified the database connection pool 5 minutes before the alert," you can verify that reasoning. When it says "I cannot find recent changes to service-payment in the last hour," you know the boundaries of its knowledge.

Try incident.io free to test AI SRE and Scribe features on real incidents in your environment, or book a demo to see it for yourself.

Key terminology for AI in incident management

Precision (in AI root cause analysis)

The percentage of AI-suggested root causes that are actually relevant to the incident. High precision means fewer false positives and less noise for the team.

Recall (in AI root cause analysis)

The percentage of actual root causes that the AI successfully identifies. High recall means the AI rarely misses a connection, though it might suggest some irrelevant ones.

Hallucination

When a generative AI model confidently presents incorrect or fabricated information (citing a non-existent error code or runbook) as fact.

Root Cause Analysis (RCA)

The process of identifying the fundamental reason for an incident to prevent recurrence. AI accelerates this by correlating alerts, code changes, and infrastructure metrics.

AIOps

AI for IT Operations focused on event correlation, anomaly detection, and predictive analytics using statistical methods rather than generative language models.
