AI SRE explained: what it is, how it works, and the human vs. AI reality

Updated February 27, 2026

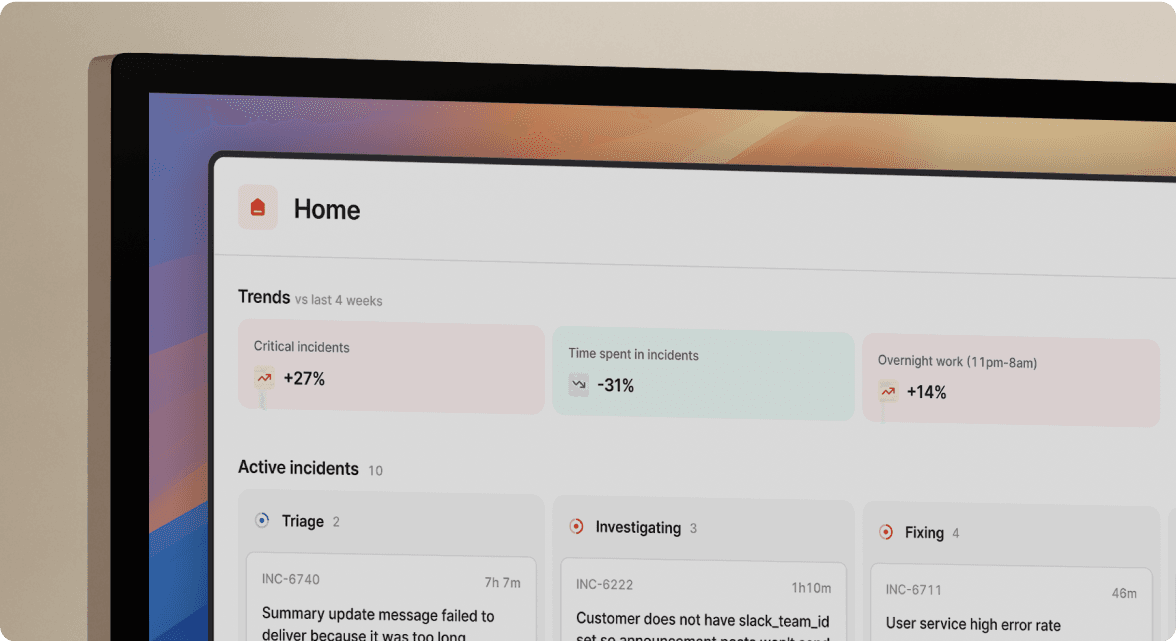

TL;DR: AI SRE uses LLMs grounded in your specific infrastructure data (logs, runbooks, service topology) via Retrieval-Augmented Generation (RAG) to automate the investigation, documentation, and coordination phases of incident response. AI SRE augments SRE teams by reducing manual coordination and documentation overhead, including the burden of managing the on-call schedules that keep coverage continuous. It can significantly decrease the time spent on post-mortem reconstruction, team assembly, and cross-tool context gathering, allowing engineers to focus on investigation and remediation. The highest-value applications today include automated root cause correlation, real-time timeline capture, and AI-assisted post-mortem drafting. Autonomous remediation remains limited, with human oversight and decision-making central to production environments.

Most "AI" in DevOps is fancy regression analysis with a better marketing budget. Alert deduplication dressed up as intelligence, noise reduction renamed "AIOps." If you've sat through vendor demos promising automatic incident remediation, your skepticism is earned.

Something genuinely different appeared in the last 18 months, and it's worth your attention. By combining Large Language Models (LLMs) with your organization's actual telemetry, topology, and runbooks, AI systems can now do something they previously couldn't: explain what happened, not just detect that something did. That distinction matters enormously for how you evaluate tools, structure your on-call process, and decide where to invest engineering time.

This guide covers the technical architecture behind AI SRE, the realistic use cases working in production today, an honest comparison of AI versus human capabilities, and a framework for evaluating tools without falling for the hype.

What is an AI SRE? Defining the capabilities

Before buying into any vendor pitch, you need a clean definition of terms, because "AI SRE" covers a wide spectrum of actual capability.

AIOps (the older approach) focuses on noise reduction, alert deduplication, and statistical pattern detection. It groups related alerts, suppresses known-noisy signals, and predicts anomalies using clustering algorithms. As Thoughtworks noted in their 2025 AIOps analysis, traditional AIOps identifies that something is related but doesn't explain why. Useful, but fundamentally reactive and limited to detection.
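To make the "detects but doesn't explain" point concrete, here is a minimal sketch of fingerprint-based alert grouping, one common way deduplication is done. The alert fields (`service`, `name`, `ts`), the sample alerts, and the 300-second window are illustrative assumptions, not any vendor's schema.

```python
# Hypothetical alerts; "ts" is seconds since an arbitrary start.
alerts = [
    {"service": "checkout-service", "name": "HighLatency", "ts": 100},
    {"service": "checkout-service", "name": "HighLatency", "ts": 130},
    {"service": "payment-gateway", "name": "HighCPU", "ts": 135},
    {"service": "checkout-service", "name": "HighLatency", "ts": 900},
]

def group_alerts(alerts, window_seconds=300):
    """Group alerts that share a (service, name) fingerprint and arrive
    within window_seconds of the previous alert in the same group."""
    groups = []
    latest_group = {}  # fingerprint -> index of its most recent group
    for alert in sorted(alerts, key=lambda a: a["ts"]):
        fingerprint = (alert["service"], alert["name"])
        idx = latest_group.get(fingerprint)
        if idx is not None and alert["ts"] - groups[idx][-1]["ts"] <= window_seconds:
            groups[idx].append(alert)
        else:
            latest_group[fingerprint] = len(groups)
            groups.append([alert])
    return groups

for group in group_alerts(alerts):
    print(group[0]["service"], group[0]["name"], f"x{len(group)}")
```

The output is fewer pages, which is valuable, but nothing in it says why checkout-service was slow. That gap is what the newer approach targets.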

AI SRE (the newer approach) uses generative, agentic models capable of multi-step reasoning. The shift, as DevOps.com describes in their AIOps for SRE analysis, is from detecting to explaining. An AI SRE system can:

- Investigate: Query logs and metrics using natural language, correlate observability spikes with recent code changes, and surface likely root causes with citations.

- Coordinate: Summarize incident threads, page the right teams based on service ownership, and auto-populate incident channels with relevant context.

- Document: Convert hundreds of Slack messages into a structured timeline, then draft 80% of a post-mortem from that captured data.

Think of it as the difference between a smoke detector and an arson investigator, a distinction the AI SRE agent definition makes precise. AIOps tells you the building is on fire. AI SRE tells you which room, what started it, and who was last in there.

How AI SRE works under the hood: LLMs, RAG, and topology

The most important architectural concept you need to understand is Retrieval-Augmented Generation (RAG). Without it, LLMs guess. With it, they reason from your actual infrastructure data.

RAG: grounding the model in your reality

AWS defines RAG as the process of optimizing LLM output by having it reference an authoritative knowledge base before generating a response. In plain terms: the model fetches relevant context first, then answers.

Here's the flow in an SRE context. A latency spike fires on checkout-service. The AI queries a vector database containing your runbooks, past incident post-mortems, service manifests, and recent deployment logs, then surfaces the most relevant documents. Those documents get injected into the prompt alongside the alert context, with an explicit instruction to ground the answer in those facts. The LLM produces a response with citations, pointing back to specific log lines or commit hashes it used to reach its conclusion.
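The flow above can be sketched in a few lines. This is a toy under stated assumptions: bag-of-words cosine similarity stands in for a real embedding model and vector database, the three documents and their IDs are hypothetical, and the final LLM call is left out entirely. It only shows the retrieve-then-inject shape.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base. A real system would store embeddings of
# runbooks, post-mortems, and deploy logs in a vector database.
DOCUMENTS = {
    "runbook/checkout-latency": "checkout-service latency spike: check payment-gateway connection pool and recent deploys",
    "postmortem/auth-outage": "auth-db failover caused login errors; fix was raising connection limits",
    "deploy-log/payment-gateway": "payment-gateway v2.1.4 rolled out; connection pool size changed; watch latency",
}

def tokenize(text: str) -> Counter:
    """Term counts; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, k: int = 2) -> list[str]:
    """Return the IDs of the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(DOCUMENTS, key=lambda doc_id: cosine(q, tokenize(DOCUMENTS[doc_id])), reverse=True)
    return ranked[:k]

def build_prompt(alert: str, doc_ids: list[str]) -> str:
    """Inject retrieved documents and instruct the model to cite them."""
    context = "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using ONLY the context below and cite document IDs in brackets.\n"
        f"Context:\n{context}\n\nAlert: {alert}\nLikely cause:"
    )

alert = "latency spike on checkout-service"
top_docs = retrieve(alert)
prompt = build_prompt(alert, top_docs)
print(prompt)
```

Keeping the document IDs in the prompt is what makes citations possible later: the model can only point back to sources it was actually handed.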

Google Cloud's RAG documentation describes the goal: the LLM has access to the most pertinent and grounding facts from your vector database, reducing the likelihood of hallucination. Research on arXiv into domain-specific RAG applications reinforces this: retrieval-augmented generation addresses domain coverage issues by first retrieving the necessary domain knowledge, which the model then uses as grounding context.

This is what separates genuine AI SRE from a chatbot wrapper around a generic LLM. Without RAG anchoring responses to your specific infrastructure, you're getting pattern-matched guesses, not investigated findings.

Service topology: understanding that Service A talks to Database B

RAG retrieves documents. Topology graphs understand relationships. You need both for accurate root cause analysis (RCA).

Your service catalog encodes the dependency graph: checkout-service depends on payment-gateway, which depends on auth-db. When an AI SRE system ingests this graph, it can reason about blast radius rather than just observing that one service is slow. It traces the dependency chain and investigates upstream and downstream services simultaneously. Our Catalog feature is built precisely around this: our AI understands your specific service dependencies to accurately scope the impact of any incident.

The combination of RAG (document retrieval) and topology (relationship graphs) allows the AI to generate a specific, verifiable root cause hypothesis rather than a generic guess.
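As a rough illustration of reasoning over a dependency graph, the sketch below encodes the three-service chain from the example and walks it in both directions. The dictionary layout and function names are assumptions for illustration; a real service catalog will expose this differently.

```python
from collections import deque

# Edges from the example above: service -> services it depends on.
DEPENDS_ON = {
    "checkout-service": ["payment-gateway"],
    "payment-gateway": ["auth-db"],
    "auth-db": [],
}

def reachable(graph, start):
    """Breadth-first traversal: every node reachable from start, in visit order."""
    visited = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in graph.get(node, []):
            if neighbour not in visited:
                visited.add(neighbour)
                order.append(neighbour)
                queue.append(neighbour)
    return order

def invert(graph):
    """Flip the edges so they point from a service to its dependents."""
    inverted = {node: [] for node in graph}
    for node, deps in graph.items():
        for dep in deps:
            inverted.setdefault(dep, []).append(node)
    return inverted

# Where to look when checkout-service is slow: its transitive dependencies.
suspects = reachable(DEPENDS_ON, "checkout-service")
# Who is affected if auth-db degrades: its transitive dependents.
blast_radius = reachable(invert(DEPENDS_ON), "auth-db")
print(suspects, blast_radius)
```

The same traversal answers both questions; only the edge direction changes.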

Core use cases: what AI can realistically automate today

Automated root cause analysis and investigation

AI SRE delivers the most immediate, measurable value here. The mechanism is straightforward: AI correlates two distinct data streams simultaneously.

Stream 1: Observability spikes (latency jumps on checkout-service at 14:32 UTC, error rate climbs to 2.3%, CPU on payment-gateway hits 94%). Stream 2: Change events (GitHub commit abc123 deployed at 14:28 UTC, Kubernetes deployment payment-gateway-v2.1.4 rolled out at 14:29 UTC).

You work through these sequentially when investigating manually, jumping between Datadog, GitHub, and deployment logs. Our AI SRE pulls data from alerts, telemetry, code changes, and past incidents simultaneously to cut through the noise and pinpoint the problem fast, surfacing the most likely hypothesis with citations to the specific PR and corresponding latency spike. You verify and act.
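The core of that correlation is a time-window join between the two streams. Here is a minimal sketch using the timestamps from the example. The calendar date, the 15-minute lookback, and the third "unrelated" change event are assumptions added for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass(frozen=True)
class Event:
    at: datetime
    source: str
    description: str

def utc(hhmm: str) -> datetime:
    # The date is arbitrary; only the clock times come from the example.
    return datetime.strptime(f"2026-01-01 {hhmm}", "%Y-%m-%d %H:%M")

spikes = [Event(utc("14:32"), "metrics", "checkout-service latency jump")]
changes = [
    Event(utc("14:28"), "github", "commit abc123 deployed"),
    Event(utc("14:29"), "kubernetes", "payment-gateway-v2.1.4 rolled out"),
    Event(utc("09:05"), "github", "unrelated earlier commit"),  # hypothetical
]

def candidate_causes(spike, changes, lookback=timedelta(minutes=15)):
    """Changes that landed within `lookback` before the spike, most recent first."""
    window_start = spike.at - lookback
    hits = [c for c in changes if window_start <= c.at <= spike.at]
    return sorted(hits, key=lambda c: c.at, reverse=True)

for spike in spikes:
    for change in candidate_causes(spike, changes):
        gap = int((spike.at - change.at).total_seconds() // 60)
        print(f"{spike.description} <- {change.description} ({gap} min earlier)")
```

Temporal proximity only produces candidates. It does not prove causation, which is why the verification step stays with you.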

A well-built AI SRE system methodically invalidates hypotheses without supporting evidence and digs deeper into promising leads, classifying each as validated, invalidated, or inconclusive. That's the investigative cadence of a thorough senior engineer, running in parallel across your entire log corpus.
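One simple way to picture that three-way classification is a rule over evidence counts. The rule below is an assumption chosen to mirror the description (no supporting evidence means invalidated), and the hypotheses and counts are made up. A production system would weigh evidence far more carefully.

```python
from enum import Enum

class Status(Enum):
    VALIDATED = "validated"
    INVALIDATED = "invalidated"
    INCONCLUSIVE = "inconclusive"

def classify(supporting: int, contradicting: int) -> Status:
    """Illustrative rule: evidence counts in, status out."""
    if supporting == 0:
        return Status.INVALIDATED   # nothing backs it up, so drop it
    if contradicting > 0:
        return Status.INCONCLUSIVE  # mixed signals, so dig deeper
    return Status.VALIDATED

# Hypothetical hypotheses mapped to (supporting, contradicting) evidence counts.
hypotheses = {
    "recent payment-gateway deploy": (2, 0),
    "auth-db saturation": (0, 1),
    "upstream network issue": (1, 1),
}

for name, (supporting, contradicting) in hypotheses.items():
    print(f"{name}: {classify(supporting, contradicting).value}")
```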

Generative AI for incident timelines and post-mortems

This use case delivers the most direct, quantifiable ROI for SRE teams today. Manual post-mortem reconstruction wastes 60-90 minutes per incident, and the output is often incomplete because memories fade and Slack threads get buried.

We built Scribe to eliminate this entirely. Scribe joins your incident call automatically and transcribes everything in real time. When someone says "I think this correlates with the 2:30 AM deployment," Scribe flags it as a key moment. When the team decides "let's roll back first," that decision gets captured and pushed into the incident timeline automatically. No designated note-taker. No one pulled away from troubleshooting.

Scribe supports Zoom, Google Meet, and Microsoft Teams. Our timeline generation feature combines Scribe's call captures with Slack message events, severity changes, role assignments, and pinned messages to build a complete, timestamped record of the incident as it unfolds.
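Conceptually, building one timeline from several sources is a chronological merge. The sketch below is not how Scribe is implemented. It is a generic illustration using Python's `heapq.merge`, and every entry, timestamp, and field name in it is hypothetical.

```python
import heapq
from typing import NamedTuple

class TimelineEntry(NamedTuple):
    timestamp: str  # same-format ISO 8601 UTC strings sort chronologically
    source: str
    text: str

# Each stream is already in time order, as event feeds usually are.
call_moments = [
    TimelineEntry("2026-01-01T02:41:00Z", "call", "Possible link to the 2:30 AM deployment"),
    TimelineEntry("2026-01-01T02:47:00Z", "call", "Decision: roll back first"),
]
slack_events = [
    TimelineEntry("2026-01-01T02:38:00Z", "slack", "Incident channel created"),
    TimelineEntry("2026-01-01T02:44:00Z", "slack", "Severity raised"),
]
role_changes = [
    TimelineEntry("2026-01-01T02:39:00Z", "roles", "Incident lead assigned"),
]

def build_timeline(*streams):
    """Merge already-sorted streams into one chronological list."""
    return list(heapq.merge(*streams, key=lambda entry: entry.timestamp))

timeline = build_timeline(call_moments, slack_events, role_changes)
for entry in timeline:
    print(entry.timestamp, f"[{entry.source}]", entry.text)
```

Tagging each entry with its source keeps the merged record auditable: anyone reading the post-mortem can tell a spoken decision from a Slack event.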

As our Scribe impact review notes, adoption among customers with supported call platforms reached 44% shortly after launch without any mandatory rollout. Engineers use it because it removes toil, not because they're required to.

Once the incident resolves, the captured timeline populates the post-mortem draft automatically. The draft includes an incident summary, complete timeline of events, contributing factors, and suggested follow-up actions. You spend roughly 10 minutes editing and refining rather than 90 minutes reconstructing from scratch, and the output exports natively to Confluence, Notion, or Google Docs with one click.

"Fantastic Product... It takes all the pain out of incident management and lets you focus on working the incident itself." - Verified user on G2

Intelligent triage and routing

Not every alert warrants a P1 response. One of the highest-leverage applications of AI triage is accurate severity classification before anyone gets paged.

The mechanism: the AI analyzes the incoming alert payload against historical incident data and current service context (capabilities built into incident.io's on-call software). An alert reading "Database CPU High" could be a P1 crisis or a scheduled backup job. With access to your service catalog and past incidents, the AI distinguishes between the two and routes accordingly.

The best AI triage systems recommend whether you should act now or defer until later, rather than blanket paging every alert. That recommendation feeds into smart escalation paths, reducing alert fatigue without sacrificing coverage.
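The "Database CPU High" example can be reduced to a toy decision rule. Everything here is an assumption for illustration: the catalog entries, the tier convention, the backup window, and the rule itself. Real triage would also draw on past incidents and live context rather than a static lookup.

```python
from dataclasses import dataclass, field
from datetime import time

@dataclass
class ServiceInfo:
    tier: int  # 1 = customer-critical (hypothetical convention)
    scheduled_jobs: list = field(default_factory=list)  # (start, end, label)

# Hypothetical catalog entries.
CATALOG = {
    "orders-db": ServiceInfo(tier=1, scheduled_jobs=[(time(2, 0), time(3, 0), "nightly backup")]),
    "reporting-db": ServiceInfo(tier=3),
}

def triage(service: str, alert: str, fired_at: time):
    """Return (decision, reason), where decision is 'act_now' or 'defer'."""
    info = CATALOG.get(service)
    if info is None:
        return ("act_now", f"{alert}: unknown service, involve a human")
    for start, end, label in info.scheduled_jobs:
        if start <= fired_at <= end:
            return ("defer", f"{alert}: overlaps scheduled {label}")
    if info.tier == 1:
        return ("act_now", f"{alert}: tier-1 service, no scheduled job running")
    return ("defer", f"{alert}: lower-tier service, review in working hours")

print(triage("orders-db", "Database CPU High", time(2, 30)))
print(triage("orders-db", "Database CPU High", time(14, 0)))
```

Returning the reason alongside the decision matters as much as the decision itself: a deferred page that nobody can explain erodes trust quickly.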

AI SRE vs. human SRE: a capabilities comparison

The goal here is to understand where humans and AI excel so you can combine them effectively.

| Capability | AI SRE | Human SRE | Notes |
|---|---|---|---|
| Data processing speed | Milliseconds across millions of log lines | Minutes to hours for manual log review | AI wins decisively on volume and speed |
| Pattern recognition | Correlates signals across full history simultaneously | Limited by what a human can manually review and remember | AI identifies patterns across past incidents a human would miss |
| Contextual nuance | Limited. Lacks intuition for business politics, team dynamics, or undocumented behavior | Strong. Understands why a "minor" service is actually customer-critical | Humans maintain the decisive edge here |
| Decision-making authority | Suggests and drafts. Should not act autonomously in production | Assesses risk, weighs trade-offs, and executes with accountability | Humans must remain the decision-makers |
| Parallel hypothesis testing | Tests multiple root cause theories simultaneously | Sequential investigation, one thread at a time | AI dramatically reduces time-to-identify |
| Documentation | Generates structured timelines and post-mortem drafts from captured data | Manual reconstruction from memory and scroll-back | AI turns 90 minutes into 10 minutes |
| Novel failure modes | Struggles without historical precedent to draw from | Adapts through creative problem-solving and first-principles reasoning | Human judgment is irreplaceable for genuinely new failures |
| Fatigue | None. Consistent performance at 3 AM and 3 PM | Significant. Cognitive load degrades decision quality during extended incidents | AI as first responder reduces human fatigue |

AI excels at repetitive, data-intensive tasks; it does not replicate the uniquely human skills of intuition, creative problem-solving, and strategic system design. The sbmi.uth.edu analysis of AI versus human intelligence makes the boundary precise: human decision-making incorporates empathy, social considerations, and ethical judgment, elements that are difficult to quantify but crucial in real-world contexts.

The practical model that works: think of AI SRE as an indefatigable assistant that handles the investigation grunt work, so your senior engineers can focus on the fix. AI reads the logs, summarizes the thread, checks recent deploys, and surfaces the likely culprit. Your engineer evaluates the hypothesis, applies judgment about risk, and executes the remediation. Human plus AI outperforms either alone.

The accuracy question: hallucinations, precision, and recall

Your skepticism about AI hallucinating root causes is correct and healthy. LLMs can generate confident-sounding wrong answers. The question isn't whether hallucinations happen, but what architectural safeguards prevent them from causing harm.

The "glass box" vs. "black box" distinction is the key evaluation criterion.

A black box AI says: "The root cause is a memory leak in auth-service." You have no idea why it thinks that. A glass box AI says: "Based on this log line [link] from 14:31 UTC showing auth-service memory usage at 98%, correlated with this commit [link] that modified session caching logic, I believe the likely root cause is the session cache not being evicted correctly." You can verify both sources in under 30 seconds.

This is the standard to hold every vendor to: every suggestion should be transparent and explainable, allowing engineers to see exactly which data led to a recommendation, keeping humans in the loop while enabling faster, data-driven decisions.
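One way to enforce the glass box standard in your own tooling is to make citations a structural requirement, so a claim without evidence never reaches an engineer. The classes below are a hypothetical sketch, not a vendor data model, and the reference strings are placeholders rather than real links.

```python
from dataclasses import dataclass, field

@dataclass
class Citation:
    kind: str       # e.g. "log_line", "commit", "past_incident"
    reference: str  # a link or ID an engineer can open and check

@dataclass
class Hypothesis:
    claim: str
    citations: list[Citation] = field(default_factory=list)

    def is_verifiable(self) -> bool:
        return len(self.citations) > 0

def presentable(hypotheses):
    """Keep only hypotheses that arrive with evidence attached."""
    return [h for h in hypotheses if h.is_verifiable()]

black_box = Hypothesis("Memory leak in auth-service")
glass_box = Hypothesis(
    "Session cache in auth-service is not being evicted correctly",
    citations=[
        Citation("log_line", "<link to log line>"),  # placeholder
        Citation("commit", "<link to commit>"),      # placeholder
    ],
)

for hypothesis in presentable([black_box, glass_box]):
    print(hypothesis.claim, [c.kind for c in hypothesis.citations])
```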

The practical implication for accuracy requirements:

- For suggestions (root cause hypotheses, severity recommendations): High but imperfect precision is useful. The AI surfaces the likely answer and you verify. When it's wrong, you catch it before acting.

- For autonomous actions (running a rollback, scaling a fleet): You need much higher confidence, and human approval is mandatory. The AI drafts the action. You click the button.

The word "recommend" is doing important work. AI SRE triages alerts, investigates root cause, and recommends whether to act now or defer. Suggestion accuracy is productive for investigation. It's not sufficient for autonomous production actions.
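The split between suggestions and actions can be expressed as a hard gate in code. This is a minimal sketch with hypothetical names (`Proposal`, `ApprovalRequired`, `execute`): suggestions pass straight through, while actions raise unless a named human has approved them.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional

class Kind(Enum):
    SUGGESTION = "suggestion"  # e.g. a root cause hypothesis
    ACTION = "action"          # e.g. a rollback or fleet scale-up

@dataclass
class Proposal:
    kind: Kind
    description: str
    approved_by: Optional[str] = None

class ApprovalRequired(Exception):
    """Raised when an action is attempted without a human approver."""

def execute(proposal: Proposal, run: Callable[[Proposal], str]) -> str:
    """Run the proposal, refusing unapproved actions."""
    if proposal.kind is Kind.ACTION and proposal.approved_by is None:
        raise ApprovalRequired(proposal.description)
    return run(proposal)

rollback = Proposal(Kind.ACTION, "roll back payment-gateway")
try:
    execute(rollback, lambda p: f"ran: {p.description}")
except ApprovalRequired as blocked:
    print(f"blocked until approved: {blocked}")

rollback.approved_by = "on-call engineer"  # the human clicks the button
print(execute(rollback, lambda p: f"ran: {p.description}"))
```

Putting the check inside `execute` rather than in the caller means there is no code path that performs an action without passing the gate.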

Evaluating AI SRE tools: a buyer's framework

When you evaluate tools in this space, these questions separate genuine capability from marketing positioning. See our guide to AI-powered SRE tools for an objective comparison.

1. Does it connect to your actual stack?

The AI is only as good as the data it ingests. Verify integrations with your specific monitoring (Datadog, Prometheus, New Relic), code systems (GitHub, GitLab), and deployment tooling (Kubernetes, CI/CD pipelines). Our guide on migrating Datadog monitors to incident.io shows exactly how this connection is configured, which is the kind of documentation specificity worth asking every vendor for.

2. Does it show its work?

Ask for a live demo where the AI surfaces a root cause. Before you care whether it's correct, ask: can you see which log line, commit, or past incident it used? If the vendor can't show you the citation trail, it's a black box. Walk away.

3. Where does your data go?

Your incident data contains sensitive service architecture, security vulnerabilities, and customer impact details. Verify: Does the vendor use your data to train their models? What's the data retention policy? Are they SOC 2 Type II certified? We publish our AI data handling policy openly, and we're SOC 2 Type II certified and GDPR compliant. Ask every vendor for equivalent documentation before signing anything.

4. What's the ROI calculation?

Use this framework: if your team handles 18 incidents per month and each post-mortem takes 90 minutes manually versus 10 minutes with AI assistance, you reclaim 24 engineer-hours per month from post-mortems alone. Add MTTR reduction and assembly time savings on top of that. Compare that to the tool's seat cost. The math typically favors the investment quickly.
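The arithmetic behind that figure, written out so you can plug in your own numbers. The function name is ours, and the inputs are the ones from the example above.

```python
def hours_reclaimed(incidents_per_month: int, manual_minutes: int, assisted_minutes: int) -> float:
    """Engineer-hours saved per month on post-mortems alone."""
    minutes_saved = incidents_per_month * (manual_minutes - assisted_minutes)
    return minutes_saved / 60

# 18 incidents x (90 - 10) minutes = 1,440 minutes = 24 hours
print(hours_reclaimed(18, 90, 10))  # 24.0
```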

5. How fast can you run your first real incident through it?

You need to pilot a real incident through the tool inside your first week. If the vendor requires a multi-week professional services engagement before you can run your first incident, implementation overhead will kill adoption before you see results.

How we implement AI SRE at incident.io

We built our AI SRE on three interconnected capabilities, all living in Slack where your team already works.

AI SRE assistant: catalog-aware investigation

Our AI SRE assistant integrates with your full service catalog, which means it understands your actual dependency graph, not generic topology. When checkout-service latency spikes, our AI doesn't just look at checkout-service. It traces the dependency chain to payment-gateway and auth-db, investigates each, and surfaces findings with citations to the specific alerts, commits, and past incidents it used.

You interact through Slack. Tag @incident to investigate deeper. Ask "Have we seen similar issues before?" and within seconds you get concise, cited answers drawn from your historical incident data. The AI surfaces next steps and drafts fixes, but you keep decision authority throughout.

"incident.io makes incidents normal. Instead of a fire alarm you can build best practice into a process that everyone... can understand intuitively and execute. The tool is flexible to your business and the team have a deep understanding of incident response that is reflected in the UX. it gets out of the way, it puts everything you need front and centre and you are confident that you can build a repeatable culture around incident response." - Verified user on G2

Scribe: real-time transcription and timeline construction

Scribe joins your incident call automatically and captures everything in real time. Key moments from the call, decisions made, and context surfaced are flagged and pushed directly into the incident channel's timeline. No designated note-taker and no one pulled away from troubleshooting.

Our Scribe feature supports Zoom, Google Meet, and Microsoft Teams. Our timeline generation combines Scribe's call captures with Slack message events, severity changes, role assignments, and pinned messages to build a complete, timestamped record of the incident as it unfolds.

AI-suggested summaries and auto-drafted post-mortems

Our AI suggested summaries feature generates concise incident updates throughout the response, keeping stakeholders informed without requiring the incident commander to context-switch.

At resolution, we use the captured timeline to auto-draft the post-mortem, including incident summary, timeline of events, contributing factors, and suggested follow-up actions. Custom sections like "Lessons Learned" remain for your engineers to complete, because those require human judgment. Everything else is pre-populated.

"In the past our incident process was very manual and haphazard. incident.io has automated all the parts like creating a Slack channel, ensuring the right people are there, and nudging responders to provide timely status updates." - Jamie L. on G2

The future of the AI-augmented SRE

Current AI SRE tools are copilots: they surface context, draft documentation, and suggest next steps. The next generation will be agents capable of taking multi-step actions with appropriate human approval gates.

The distinction matters. Salesforce's analysis of AI agents versus chatbots makes it precise: chatbots help users talk. AI agents help businesses get work done. A well-designed AI agent can plan steps to achieve a goal, call external tools or APIs, and execute tasks autonomously. In SRE terms: today's AI SRE says "I think the fix is rolling back deploy #4872." Tomorrow's AI SRE agent says "I've opened a rollback PR, review and approve to proceed."

The critical safeguard is the human-in-the-loop requirement. It's important to test AI SRE actions in non-critical environments before deploying them in production to ensure reliability and safety. Agents that act autonomously in production without approval gates are a liability risk, not a productivity gain. The right architecture is: AI proposes, human approves, AI executes.

The SRE role doesn't disappear in this future. It evolves. You shift from manually executing incident response steps to designing, governing, and improving the AI systems that handle those steps. A good place to start is learning the 7 ways SRE teams reduce MTTR that AI makes possible. The expertise required goes up, not down.

See our AI SRE in your Slack workspace

The gap between reading about AI SRE and experiencing it during a real incident is significant. The architecture makes sense on paper. Watching our AI surface a root cause citation in your actual incident channel, in real time, while Scribe captures the call and the post-mortem begins drafting itself, makes it operational.

Book a demo to see our AI SRE assistant, Scribe, and automated post-mortem generation working with your service topology and alert stack.

Key terms glossary

RAG (Retrieval-Augmented Generation): An architectural pattern where an LLM retrieves relevant documents from a vector database before generating a response. In SRE contexts, the retrieved documents are runbooks, past post-mortems, logs, and service manifests. RAG grounds the model in your specific infrastructure data rather than relying on generic training knowledge.

MTTR (Mean Time To Resolution): The average time from when an incident is detected to when it is fully resolved and service is restored. The primary operational metric AI SRE tools are measured against.

RCA (Root Cause Analysis): The process of identifying the underlying cause of an incident, not just its symptoms. AI SRE tools automate parts of this by correlating observability data with recent change events to surface likely root causes.

LLM (Large Language Model): A type of AI model trained on large text datasets, capable of generating, summarizing, and reasoning about text. In AI SRE, LLMs process incident context (alerts, logs, Slack threads) to produce actionable outputs like root cause hypotheses, incident summaries, and post-mortem drafts.

Toil: Repetitive, manual, automatable work that scales linearly with system growth. Post-mortem reconstruction, manual timeline building, and assembling on-call teams by hand are all examples of toil that AI SRE directly targets.

Vector database: A database that stores content as numerical embeddings, enabling semantic similarity search. In RAG pipelines, the vector database holds your runbooks, past incidents, and service documentation so the AI can retrieve the most relevant context for any given query.

AI SRE: A category of AI tools that uses LLMs grounded in organizational infrastructure data to automate the investigation, coordination, and documentation phases of incident response. Distinct from AIOps (which focuses on pattern detection) in that AI SRE generates explanations, drafts content, and enables natural language interaction with incident data.
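For reference, the MTTR definition in this glossary reduces to a simple average over (detected, resolved) pairs. The three incidents below are made-up sample data.

```python
from datetime import datetime, timedelta

# Hypothetical incidents as (detected, resolved) pairs.
incidents = [
    (datetime(2026, 1, 5, 14, 32), datetime(2026, 1, 5, 15, 2)),   # 30 minutes
    (datetime(2026, 1, 12, 2, 40), datetime(2026, 1, 12, 4, 10)),  # 90 minutes
    (datetime(2026, 1, 20, 9, 0), datetime(2026, 1, 20, 10, 0)),   # 60 minutes
]

def mttr(incidents) -> timedelta:
    """Mean time from detection to resolution."""
    total = sum((resolved - detected for detected, resolved in incidents), timedelta())
    return total / len(incidents)

print(mttr(incidents))  # 1:00:00
```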
