Tools like Rootly: Complete list of incident management alternatives

Updated April 3, 2026

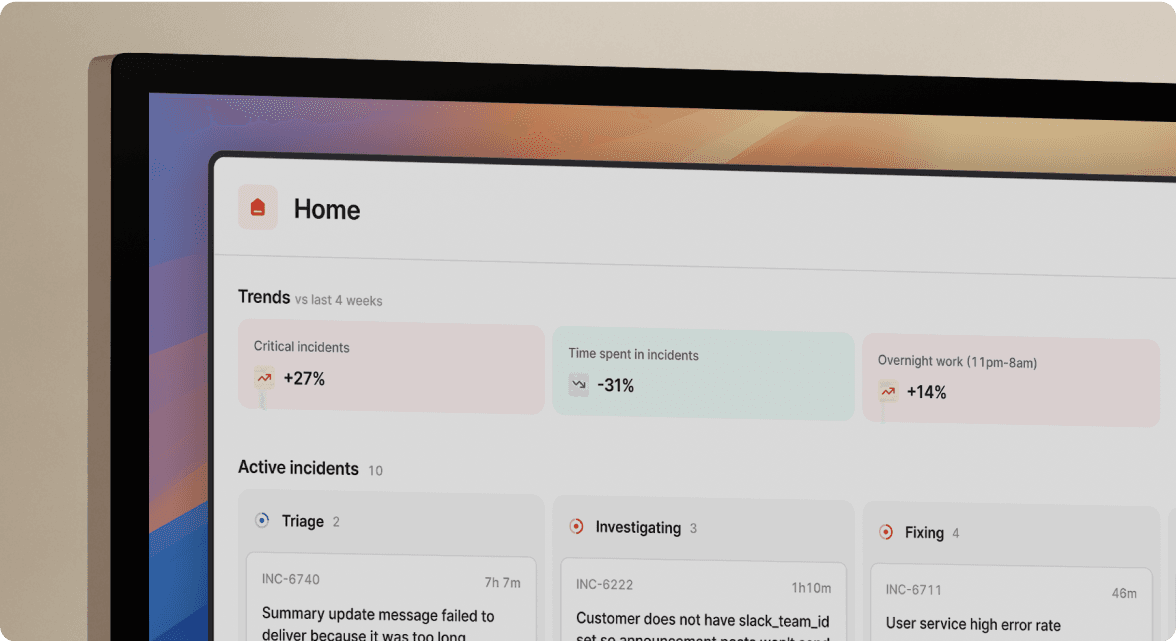

TL;DR: Rootly alternatives split into four architectures: Slack-native (incident.io, FireHydrant), enterprise suites (PagerDuty, xMatters), monitoring-native (Datadog, Grafana), and open-source (Grafana OnCall OSS, Dispatch). For mid-market SRE teams handling 5-15 incidents monthly, truly Slack-native platforms like incident.io can reduce MTTR by up to 80% by running the entire incident lifecycle via /inc commands without a browser tab. Enterprise tools offer deeper alert routing but typically require more extensive setup. Monitoring-native tools keep metrics and incidents together but exclude non-engineers. For most teams, assembling the team, finding context, and updating tools consumes 10-15 minutes per incident. Across 15 incidents, that's roughly 2.5 to 3.75 hours every month before your engineers touch the actual fix.

Most SRE teams obsess over execution latency while ignoring the coordination overhead of juggling multiple tools just to declare an incident. That coordination tax often rivals the time spent on the actual fix.
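The coordination tax is simple arithmetic, and it can help to plug in your own numbers. The sketch below uses the 10-15 minutes per incident and 15 incidents per month quoted above; the function name is illustrative, not part of any tool.

```python
def monthly_coordination_hours(incidents_per_month: int, minutes_per_incident: float) -> float:
    """Hours per month spent coordinating before anyone works on the fix."""
    return incidents_per_month * minutes_per_incident / 60


# Figures from the text: 15 incidents per month, 10-15 minutes of overhead each.
low = monthly_coordination_hours(15, 10)
high = monthly_coordination_hours(15, 15)
print(f"{low:.2f} to {high:.2f} hours per month")
```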

Rootly is a capable platform, but growing SRE teams increasingly find that feature richness alone doesn't solve coordination toil. This guide examines Rootly alternatives, with real pricing numbers and specific use-case fits.

Rootly's purpose: Incident management for SREs

Rootly targets engineering teams that want deep automation and a broad integration library. It connects to 40+ tools including PagerDuty, Opsgenie, Jira, GitHub, Datadog, and Zendesk. It correlates alerts with deploys and config changes.

How Rootly's features work

Rootly offers features for incident declaration, role assignment, and workflow automation. The platform provides integrations with communication tools, though the core workflow and configuration live in a web UI. This matters because during a 3 AM SEV1, engineers default to where they already are. If the platform requires a browser tab to configure escalation paths or access the service catalog, you add cognitive load at exactly the wrong moment.

Rootly's strength is automation depth. You can build sophisticated workflows triggered by incident attributes. The trade-off is setup time: those workflows require upfront configuration before your first incident runs cleanly.

Ideal team size and use cases

Rootly is a strong fit for engineering organizations running complex multi-team escalation paths and needing granular control over automation triggers. Teams wanting opinionated defaults and fast time-to-value often find the configuration overhead high relative to the benefit early on.

Benchmarking the best Rootly alternatives

Different incident management platforms take fundamentally different architectural approaches, and your choice determines whether coordination toil decreases or just shifts to a different tool. When evaluating platforms, focus on metrics that directly impact MTTR: time from alert to coordinated team, post-mortem completion rate, and on-call onboarding speed.

Criteria for faster MTTR and less toil

For SRE leads managing 5-15 incidents per month across a 15-30 person rotation, the highest-impact variables are:

- Time-to-coordination: How long from alert to a dedicated channel with the right people and context?

- Timeline capture: Does the platform automatically record decisions, role assignments, and call transcriptions, or does someone need to take notes?

- Post-mortem automation: Is the post-mortem 80% drafted from captured data, or does it start as a blank doc three days later?

- Onboarding speed: Can a new on-call engineer participate effectively in their first incident, or do they need a 47-step runbook under pressure?

- Pricing transparency: Is the on-call add-on priced separately, and does the true monthly cost require a spreadsheet to calculate?
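If you already export incident timestamps, two of these criteria can be measured directly. The following is a minimal sketch, assuming a hypothetical `IncidentRecord` shape with four timestamps; the field names are illustrative and do not come from any vendor's export format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import List, Optional


@dataclass
class IncidentRecord:
    # Hypothetical fields; map them to whatever your tooling exports.
    alert_fired_at: datetime
    team_assembled_at: datetime
    resolved_at: datetime
    postmortem_published_at: Optional[datetime] = None


def time_to_coordination_minutes(records: List[IncidentRecord]) -> float:
    """Average minutes from alert to a coordinated team."""
    return mean(
        (r.team_assembled_at - r.alert_fired_at).total_seconds() / 60
        for r in records
    )


def postmortem_completion_rate(records: List[IncidentRecord]) -> float:
    """Fraction of incidents that have a published post-mortem."""
    done = sum(1 for r in records if r.postmortem_published_at is not None)
    return done / len(records)


# Made-up sample data for illustration only.
t0 = datetime(2026, 1, 10, 3, 0)
sample = [
    IncidentRecord(t0, t0 + timedelta(minutes=12), t0 + timedelta(minutes=60),
                   t0 + timedelta(days=1)),
    IncidentRecord(t0, t0 + timedelta(minutes=8), t0 + timedelta(minutes=45)),
]
print(time_to_coordination_minutes(sample), postmortem_completion_rate(sample))
```

Tracking these two numbers before and after a trial gives you a like-for-like comparison between tools.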

Incident tool capabilities

The table below compares the top tools across architecture, best use case, and base price. Note that "starting price" rarely reflects the cost with on-call included, which we break down in the pricing section.

| Tool | Primary strength | Best for | Starting price |
|---|---|---|---|
| incident.io | Slack and Teams-native coordination + AI SRE | Mid-market teams wanting fast time-to-value | $25/user/month base, $45/user/month with on-call (Pro, annual) |
| Rootly | Workflow automation + integrations | Teams needing highly customized automation | Contact sales |
| PagerDuty | Alert routing + ecosystem | Enterprise teams needing complex routing rules | $21/user/month |
| FireHydrant | Runbook automation + tiered flat pricing | Growing startups under 10-20 responders | $9,600/year (20 responders) |
| Opsgenie | Basic on-call scheduling (sunsetting April 2027) | Atlassian-committed teams migrating to JSM | Contact sales |
| Splunk On-Call | Rules engine + ChatOps | Existing Splunk shops | Contact sales |
| xMatters | Alert routing + enterprise workflows | Large enterprises with complex escalations | Contact sales |
| Datadog Incidents | Metrics and incidents in one view | Teams wanting monitoring-native coordination | Add-on to Datadog plan |
| Grafana Incident | Grafana ecosystem integration | Teams running Grafana dashboards | Contact sales |
| New Relic | Alerting tied to APM data | New Relic-centric engineering orgs | Contact sales |
| Grafana OnCall OSS | Open-source on-call scheduling | Teams with DevOps bandwidth to self-host | Free (hosting costs apply) |
| Netflix Dispatch | Open-source incident coordination | Large engineering orgs with custom needs | Free (high maintenance) |
| Zenduty | Pricing + integrations | Small teams watching per-user cost | Contact sales |
| Better Stack | AI capabilities + status pages | Teams wanting all-in-one monitoring and response | Contact sales |

Best Slack-native incident management platforms

There's a meaningful difference between "Slack-integrated" and "Slack-native." A Slack-integrated tool sends you a notification and a link. You click the link, open the web UI, create the incident, assign roles, then come back to Slack to coordinate. A truly Slack-native platform enables you to manage key incident workflows directly in chat, minimizing the need to switch between your messaging tool and external dashboards.

incident.io: Slack-first incident response

incident.io built its platform architecture around chat from day one. When a Datadog alert fires, incident.io automatically creates a dedicated #inc-2847-api-latency-spike incident channel, pages the appropriate on-call engineer, pulls in service catalog context including owners, dependencies, and runbooks, and starts capturing a live timeline. No manual channel creation. No copy-pasting alert links. No hunting down who owns the affected service.

From that point, the entire response happens via /inc commands:

- /inc escalate to bring in a specialist

- /inc assign @sarah-devops to assign the incident commander

- /inc severity critical when checkout is affected

- /inc resolve to close the incident and trigger post-mortem generation
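To make the shape of a chat command concrete, here is a toy parser for the four commands listed above. It is an illustration only: it is not incident.io's implementation, and the names `IncCommand`, `KNOWN_ACTIONS`, and `parse_inc_command` are hypothetical. The only grammar assumed is "/inc", an action word, and an optional argument.

```python
from dataclasses import dataclass
from typing import Optional

# Only the four actions shown in this article; a real platform supports more.
KNOWN_ACTIONS = {"escalate", "assign", "severity", "resolve"}


@dataclass
class IncCommand:
    action: str
    argument: Optional[str]


def parse_inc_command(text: str) -> IncCommand:
    """Split '/inc <action> [argument]' into its parts."""
    parts = text.strip().split(maxsplit=2)
    if len(parts) < 2 or parts[0] != "/inc":
        raise ValueError(f"not an /inc command: {text!r}")
    action = parts[1]
    if action not in KNOWN_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    argument = parts[2] if len(parts) == 3 else None
    return IncCommand(action, argument)


print(parse_inc_command("/inc assign @sarah-devops"))
print(parse_inc_command("/inc resolve"))
```

The point of the sketch is that each command is short enough to type from memory during an incident, with no form or dashboard involved.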

incident.io's AI SRE assistant can automate up to 80% of incident response. It suggests next steps based on past similar incidents, pulls metrics and logs directly into the channel, and can open a fix pull request (PR) without leaving Slack. Scribe transcribes incident calls, extracting key decisions and flagging root cause correlations in real time.

When the incident resolves, the post-mortem is 80% complete, drafted from captured timeline data, call transcriptions, and role assignments. Your job is 10 minutes of refinement, not hours of reconstruction. Post-mortems that previously took 3-5 days now close within 24 hours. Intercom migrated from PagerDuty and Atlassian Status Page to incident.io in a matter of weeks. The result: "Now everything is centralized in incident.io, simplifying incident response significantly."

Users consistently report zero-training adoption:

"The gold standard in incident management/on call tooling... Frictionless configuration and onboarding (so easy that our first incident was created/led by a colleague even before the 'official rollout' all by themselves!)" - Luis S. on G2

incident.io supports both Slack and Microsoft Teams (on Team plan and above) and holds SOC 2 Type I certification.

Quick verdict: incident.io is a strong choice for mid-market teams wanting Slack-native coordination, transparent pricing, and AI that automates rather than just suggests.

FireHydrant: Reduce MTTR in Slack

FireHydrant takes a tiered flat pricing approach: Platform Pro starts at $9,600 per year for up to 20 responders and 50 monthly alerts, covering runbook automation, status pages, service catalog, SSO, and on-call features.

FireHydrant's runbook automation is strong, letting teams trigger specific steps based on incident severity or service. Its Slack integration is deeper than PagerDuty's.

Best for: Startups under 20 responders that want runbook automation and predictable flat-fee pricing.

Rootly: Auto-capture incident timelines

Rootly's timeline capture happens through its Slack app and web dashboard simultaneously. Updates from incident channels may appear across both interfaces. This can mean context is split between the Slack thread and the Rootly web dashboard. For teams that need highly customized automation triggers and have SRE bandwidth to configure them upfront, Rootly's workflow depth is genuine.

Best for: Engineering organizations with dedicated platform SRE time to build and maintain custom automation workflows.

Minimize incident toil in Slack

The pattern across all truly Slack-native platforms is consistent: when engineers coordinate where they already are, they don't context-switch, they don't miss updates posted in a separate tool, and they adopt the process faster because it feels like using Slack. One user puts it clearly:

"For our engineers working on incident, the primary interface for incident.io is slack. It's where we collaborate and where we were gathering to handle incident before introducing incident.io." - Alexandre R. on G2

Web-first tools with Slack bolted on still require that context switch. That's the architectural decision that drives MTTR outcomes.

Enterprise tools for data-driven incident response

For large organizations (500+ engineers) running complex multi-team escalation paths, enterprise-focused platforms offer deeper routing customization and broader integration libraries. The trade-off is typically longer setup time, higher total cost, and more complex UIs that require dedicated training.

PagerDuty's core incident workflows

PagerDuty is the established market incumbent with battle-tested alerting and on-call scheduling. Its alert routing capabilities are among the most sophisticated in the market, with extensive support for complex escalation scenarios through configurable routing logic and noise management.

Pricing structures vary, with advanced features and enterprise capabilities increasing costs significantly beyond base plans. Support has shifted from live chat to email-only with multi-day response times, which creates friction when you're filing a bug during an active incident. Initial setup requires a substantial time investment to configure routing rules and integrations effectively.

PagerDuty's integration model is fundamentally web-first: it sends Slack notifications, but coordination, timeline capture, and role assignment happen in the PagerDuty UI. The PagerDuty alternatives guide for 2026 details how this architecture means teams are always toggling between two primary interfaces.

Best for: Large enterprises needing maximum alerting customization and willing to invest in a longer setup period. See our PagerDuty migration guide if you're evaluating a switch.

Splunk On-Call: On-call automation

Splunk On-Call offers a reliable rules engine and solid ChatOps integration. It works well for teams already running Splunk's broader observability stack where bundled pricing makes sense.

Best for: Existing Splunk infrastructure teams where incident management is part of the broader Splunk investment.

xMatters: Rootly alternative for SREs

xMatters focuses on smart alert routing through predefined schedules and escalation policies, integrating with a wide range of enterprise systems. It's designed for organizations with complex multi-team dependencies and stringent compliance requirements.

Best for: Large enterprises running complex multi-team incident response with strict regulatory compliance requirements.

Evaluating enterprise incident features

Enterprise suites win on alerting sophistication and ecosystem breadth. They lose on adoption speed, coordination efficiency, and pricing predictability. If your primary need is complex conditional alert routing across 50+ services with custom escalation logic, enterprise platforms earn their cost. If your primary need is getting engineers into a coordinated response channel in under 2 minutes, the overhead of enterprise tools creates more toil than it eliminates.

Monitoring data for incident insights

Monitoring-native incident tools embed incident management directly inside the observability platform. This gives you immediate access to correlated metrics, traces, and logs when an incident fires. The downside is that non-engineering stakeholders (product managers, leadership, customer success) don't have access to the monitoring platform, creating a separate communication gap during major incidents.

Datadog incident tools for SREs

Datadog's Incident Management module is a separate seat-based SKU and isn't automatically included in standard Datadog plans. For teams that purchase it, they can declare and manage incidents directly from dashboards or alert notifications with direct access to correlated metrics and traces. Datadog's Watchdog uses ML to detect anomalies and surface likely root causes.

Datadog incident management is engineer-focused and dashboard-centric. While it includes coordination features and post-mortem generation, its workflow centers on the observability platform rather than chat-based collaboration. incident.io integrates directly with Datadog's monitoring stack, so you keep your observability platform and add chat-native coordination on top.

Best for: Teams that want to declare incidents directly from alert context without leaving Datadog, as a complement to a coordination platform rather than a replacement.

Grafana Incident

Grafana Incident integrates tightly with Grafana dashboards, Prometheus alerts, and the broader Grafana observability ecosystem. If your team runs Grafana as its primary dashboard layer, Grafana Incident surfaces alert context directly within the incident workflow. Our runbook automation tools guide covers how Grafana-native tools compare to dedicated incident platforms for automation depth.

Best for: Teams running Grafana and Prometheus as their primary observability stack who want monitoring-native incident declaration.

New Relic: Automating incident workflow

New Relic's incident response capabilities connect directly to its APM and observability data, helping teams gain context during incidents. Teams already using New Relic for observability can extend into basic incident coordination without adding a new vendor.

Best for: New Relic-centric engineering organizations that want monitoring-native incident declaration and don't need deep coordination or post-mortem automation.

Faster incident response with native tools

Monitoring-native tools are useful for alert correlation and initial triage, though they often have limited capabilities for human coordination aspects like status page updates, role assignments, cross-functional stakeholder communication, and post-mortem generation. Teams that try to run the full incident lifecycle inside their monitoring platform typically end up back in Slack for coordination anyway, recreating the same two-tool problem. When a Prometheus alert fires, incident.io automatically pages the right team, pulls in relevant specialists, and surfaces recent deployment history, all without requiring your engineers to live inside monitoring dashboards.

Build your own incident response stack

Open-source and self-hosted options give you complete control over data residency and workflow customization. They cost nothing to license and a significant amount to build, maintain, and evolve.

Grafana OnCall for incident response

The open-source version of Grafana OnCall provides on-call scheduling, escalation paths, and alert routing that you deploy and manage yourself. Deployment options include Docker Compose for evaluation and smaller production setups, or Kubernetes with Helm charts for larger production environments. Self-hosting eliminates per-user license fees but requires engineering time for ongoing platform maintenance as dependencies and integrations evolve.

Best for: Teams with strict data residency requirements or budgets that make per-user licensing prohibitive, and dedicated DevOps capacity to maintain the platform.

ilert: Cloud-hosted on-call management

ilert covers on-call scheduling, alert routing, and escalation policies. Unlike the self-hosted options in this section, it is a fully managed cloud-hosted solution for teams needing reliable on-call management without running the platform themselves. Check ilert's current documentation for the latest configuration options and support tiers.

Best for: Organizations in regulated industries (healthcare, finance, government) requiring controlled deployment of on-call tooling.

Streamline incidents with Netflix Dispatch

Netflix open-sourced Dispatch, their internal incident management platform, which coordinates incident response across multiple systems (Slack, Jira, PagerDuty) through a unified interface. As a platform built for Netflix's specific infrastructure and workflows, teams considering Dispatch should evaluate their capacity for customization and ongoing maintenance to adapt it to their environment.

Best for: Organizations with scale and complexity similar to Netflix that have dedicated platform engineering resources and are prepared for substantial investment in setup and ongoing maintenance.

Top open-source IR tools analyzed

Open source is free to download and expensive to operate. Calculate the true cost by factoring in DevOps engineer hours to deploy, integrate, and maintain the platform over 12 months at your team's fully-loaded cost rate. In many cases, teams handling 5-15 incidents per month find that a managed platform with predictable per-user pricing costs less total than the engineering time consumed by self-hosted maintenance. The ITSM automation guide covers when custom tooling investment pays off versus when a managed platform delivers faster ROI.
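A rough way to run that comparison is sketched below. The $45/user/month figure is the incident.io Pro all-in price quoted in this guide; every self-hosting input (setup hours, maintenance hours, hourly rate, hosting cost) is a placeholder assumption you should replace with your own estimates.

```python
def self_hosted_annual_cost(
    setup_hours: float,
    maintenance_hours_per_month: float,
    loaded_hourly_rate: float,
    hosting_per_month: float = 0.0,
) -> float:
    """One-off setup time plus 12 months of maintenance and hosting."""
    monthly = maintenance_hours_per_month * loaded_hourly_rate + hosting_per_month
    return setup_hours * loaded_hourly_rate + 12 * monthly


def managed_annual_cost(users: int, price_per_user_month: float) -> float:
    """12 months of per-user licensing."""
    return users * price_per_user_month * 12


# Placeholder inputs: 80 setup hours, 10 maintenance hours per month,
# a $100/hour loaded rate, and $200/month of hosting.
self_hosted = self_hosted_annual_cost(80, 10, 100, hosting_per_month=200)
managed = managed_annual_cost(users=20, price_per_user_month=45)
print(f"self-hosted: ${self_hosted:,.0f}/year, managed: ${managed:,.0f}/year")
```

With these illustrative inputs the self-hosted option costs more, but the result flips quickly if your maintenance burden is low or your team is large, which is why the calculation is worth doing with real numbers.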

Pricing models and hidden fees revealed

The incident management market follows a consistent pattern: base plans look affordable, on-call scheduling costs extra, AI features cost extra, and status pages sometimes cost extra. A team evaluating three tools based on "starting at" pricing often discovers the true cost is 2-3x the advertised number once on-call is included.

Plans by engineering team size

Here's how real costs scale across team sizes for the top platforms with on-call included:

| Team size | incident.io Pro (all-in, incl. on-call) | PagerDuty (estimated) | FireHydrant | Zenduty |
|---|---|---|---|---|
| 20 engineers | ~$900/month ($45/user) | ~$420-820/month* | ~$800/month ($9,600/year, up to 20 responders)* | Custom pricing |
| 50 engineers | ~$2,250/month* | ~$1,050-2,050/month* | Custom pricing | Custom pricing |
| 100 engineers | ~$4,500/month* | ~$2,100-4,100/month* | Custom pricing | Custom pricing |

*Estimates based on publicly available pricing. Actual costs may vary based on contract terms and add-ons.

PagerDuty pricing is estimated and may vary by plan tier. Features like AIOps and automation add-ons aren't included in these estimates.
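The per-user figures in the table scale linearly, so they are easy to reproduce for other team sizes. In this sketch the incident.io Pro price is the $25 base plus $20 on-call add-on from this guide. The PagerDuty range of $21-41 per user is inferred from the table's own estimates (for example $420-820 for 20 engineers), not taken from a published price list.

```python
def monthly_cost(users: int, per_user_price: float) -> float:
    """Monthly cost under simple per-user pricing."""
    return users * per_user_price


INCIDENT_IO_PRO_ALL_IN = 25 + 20          # base + on-call add-on, per user per month
PAGERDUTY_LOW, PAGERDUTY_HIGH = 21, 41    # inferred from the table above

for team_size in (20, 50, 100):
    pro = monthly_cost(team_size, INCIDENT_IO_PRO_ALL_IN)
    pd_low = monthly_cost(team_size, PAGERDUTY_LOW)
    pd_high = monthly_cost(team_size, PAGERDUTY_HIGH)
    print(f"{team_size} engineers: incident.io Pro ~${pro:,.0f}/month, "
          f"PagerDuty ~${pd_low:,.0f}-{pd_high:,.0f}/month")
```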

On-call add-on pricing explained

On the incident.io Pro plan, the all-in price with on-call is $45/user/month: a $25/user/month base plus a $20/user/month on-call add-on. No hidden fees, no surprises.

Opsgenie offered multiple pricing tiers, but it is being retired: Atlassian ended new Opsgenie sales on June 4, 2025, with the full shutdown scheduled for April 2027. If you're currently running Opsgenie, migration isn't optional. Our Opsgenie migration guide covers the parallel-run strategy and technical steps.

Zenduty offers a free tier and paid plans for growing teams. Better Stack bundles on-call and status pages in one product without separating them as add-ons.

Calculate your incident tool's true cost

Use this formula to calculate 12-month TCO before signing a contract:

- Base license: (users on the platform) x (monthly price) x 12

- On-call add-on: (engineers in on-call rotation) x (on-call monthly price) x 12

- Integration maintenance: hours per month maintaining custom webhooks x your loaded engineer cost

- Training overhead: hours getting new on-call engineers productive x loaded engineer cost

- Migration cost: estimated engineer days to switch tools x daily loaded cost
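The five components above can be turned into a small calculator. This is a sketch under stated assumptions: the `TcoInputs` field names are illustrative, integration maintenance is multiplied by 12 to cover the full year, and training and migration are treated as one-off costs within the 12 months. The example inputs other than the $25 and $20 per-user prices are placeholders.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class TcoInputs:
    platform_users: int
    base_price_per_user_month: float
    on_call_engineers: int
    on_call_price_per_user_month: float
    integration_hours_per_month: float
    training_hours_total: float      # hours over the 12 months
    migration_engineer_days: float
    loaded_hourly_rate: float
    loaded_daily_rate: float


def twelve_month_tco(i: TcoInputs) -> Dict[str, float]:
    """Break 12-month total cost of ownership into the five components."""
    costs = {
        "base_license": i.platform_users * i.base_price_per_user_month * 12,
        "on_call_add_on": i.on_call_engineers * i.on_call_price_per_user_month * 12,
        "integration_maintenance": i.integration_hours_per_month * i.loaded_hourly_rate * 12,
        "training_overhead": i.training_hours_total * i.loaded_hourly_rate,
        "migration": i.migration_engineer_days * i.loaded_daily_rate,
    }
    costs["total"] = sum(costs.values())
    return costs


# Placeholder scenario: 20 users, all on call, at the Pro prices quoted in this guide.
example = twelve_month_tco(TcoInputs(
    platform_users=20, base_price_per_user_month=25,
    on_call_engineers=20, on_call_price_per_user_month=20,
    integration_hours_per_month=0, training_hours_total=10,
    migration_engineer_days=5, loaded_hourly_rate=100, loaded_daily_rate=800,
))
print(example)
```

Running the same function with each vendor's quote puts license fees and engineering time in one comparable number.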

For a typical engineering team on the incident.io Pro plan, costs are transparent and straightforward. There is no integration maintenance overhead because incident.io ships pre-built connectors for Datadog, Prometheus, and PagerDuty. New on-call engineers get productive fast because the /inc command workflow requires no formal training.

How to choose the right incident management tool

Choosing tools for 10-30 member teams

At this scale, setup time and adoption speed matter most. You don't have a dedicated platform SRE to build and maintain complex workflow automation. You need opinionated defaults that get you through your first incident cleanly within 3-5 days of signup. The incident.io Pro plan at $45/user/month ($25 base + $20 on-call add-on) provides Slack-native workflows, on-call scheduling, unlimited integrations, and full AI SRE capabilities. If your team is early-stage and cost is the primary constraint, the Team plan offers a lighter entry point. Either way, rollout is fast. One user running a 15-person team reported full deployment in under 20 days:

"The onboarding experience was outstanding — we have a small engineering team (~15 people) and the integration with our existing tools (Linear, Google, New Relic, Notion) was seamless and fast." - Bruno D. on G2

Zenduty is worth considering if per-user cost is the primary constraint. FireHydrant's tiered flat pricing becomes competitive if your team stays near 10-20 responders.

Scaling incident response (100-500 engineers)

At this scale, teams typically need capabilities like role-based access control, custom incident types, private incidents for security issues, and advanced insights to prove MTTR trends to leadership. The incident.io Pro plan includes workflows, custom fields, and AI-assisted post-mortem generation to support these requirements. The ITSM compliance guide covers how to maintain SOC 2 and GDPR compliance within your incident workflow as the team grows.

For teams running Microsoft Teams rather than Slack, Teams support is available alongside the private incidents capability needed for security-sensitive incidents.

PagerDuty is the main alternative at this scale if alert routing complexity is the primary requirement, but the coordination overhead and pricing structure favor incident.io for teams where coordination efficiency and post-mortem quality are the primary metrics.

Scaling incident response workflows (200+ people)

At enterprise scale, you need enterprise-grade authentication, provisioning, and dedicated support. The incident.io Enterprise plan includes SAML SSO, SCIM provisioning, and a dedicated customer success manager, with custom pricing based on your organization's needs. For large organizations using Slack Enterprise Grid, our integration documentation covers the technical requirements for deployment at scale.

Enterprise teams evaluating PagerDuty should model total cost including AIOps and automation add-ons, which aren't included in base pricing. ServiceNow and xMatters fit IT-centric enterprises with ITSM workflows, but both require significant implementation investment and aren't optimized for engineering-first incident response.

Your Rootly migration action plan

Migrating incident management mid-stream is like changing engines in flight: incidents don't stop happening while you configure the new platform. Follow this parallel-run approach:

- Week 1-2: Set up incident.io alongside Rootly. Connect Datadog, Prometheus, and PagerDuty integrations. Import your service catalog and configure 2 on-call schedules.

- Week 2-3: Run new incidents through incident.io while keeping Rootly for in-flight incidents. Compare coordination time and timeline quality side-by-side.

- Week 3-4: Migrate the full on-call rotation once you've validated workflows on 3-5 real incidents. Decommission Rootly workflows for resolved incident types.

- Week 4+: Refine workflows based on real incident data. Add custom fields, integrate Linear or Jira for follow-up tracking, and enable AI post-mortem generation.

Our PagerDuty migration tooling docs and Opsgenie migration tooling docs cover the technical steps for common starting points. If you want to see the AI SRE automating up to 80% of incident response before you commit, schedule a demo.

Key terms glossary

MTTR (Mean Time To Resolution): The average time from when an incident is detected to when it is fully resolved, including diagnosis, coordination, and fix deployment. MTTR is one of several key metrics for measuring incident management effectiveness.

On-call rotation: The schedule determining which engineer is responsible for responding to production alerts at any given time, including primary, secondary, and escalation layers. A well-managed rotation distributes on-call burden evenly across the team to prevent burnout.

Incident commander: The designated lead for a specific incident, responsible for coordinating the response effort, making decisions about escalation, and communicating status to stakeholders. The incident commander owns the incident from declaration to post-mortem.

Post-mortem: The structured written review of an incident conducted after resolution, documenting the timeline, root cause, contributing factors, and action items to prevent recurrence. A complete post-mortem should be published promptly after resolution to capture accurate context while it's still fresh.
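As a worked example of the MTTR definition in this glossary, the sketch below averages the detected-to-resolved duration across a few incidents. The timestamps are made up for illustration, and the function name is hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def mttr_minutes(incidents: List[Tuple[datetime, datetime]]) -> float:
    """Mean minutes from detection to full resolution."""
    durations = [
        (resolved - detected).total_seconds() / 60
        for detected, resolved in incidents
    ]
    return sum(durations) / len(durations)


# Made-up incidents lasting 30, 60, and 90 minutes.
start = datetime(2026, 2, 1, 9, 0)
history = [
    (start, start + timedelta(minutes=30)),
    (start, start + timedelta(minutes=60)),
    (start, start + timedelta(minutes=90)),
]
print(mttr_minutes(history))
```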
