Monitoring and alerting for retail: Detecting incidents before customers notice

Updated Mar 27, 2026

TL;DR: Monitor business metrics (checkout conversion rate, cart abandonment) alongside infrastructure health. Tune alert thresholds dynamically for peak traffic periods to reduce false positives. For security incidents, compliance requires private channels with RBAC, immutable audit trails for PCI DSS Requirement 10, and automated SOC 2 evidence collection.

A page load delay of one second reduces conversion by 7%, according to frequently cited research from Aberdeen Group, a figure that has held directionally consistent across more recent studies. The bigger problem isn't the delay itself. It's finding out from angry customers on social media rather than your monitoring stack, five minutes before your VP of Engineering calls you.

During Black Friday, your monitoring stack fires hundreds of alerts. Some signal a real checkout failure draining revenue. Others flag a legitimate traffic spike your infrastructure is handling fine. The challenge is separating the two fast enough to act, while keeping your compliance posture intact when a security event surfaces in the noise.

This guide covers the monitoring strategy, alerting approach, and incident response workflow that retail SRE and security teams need to catch problems before customers notice, and to handle security incidents with the rigor that SOC 2 and PCI DSS auditors require.

If you're a DevOps or SRE manager owning on-call rotations and MTTR targets at a retail organization, this guide is written for you. In ecommerce, security incidents are availability incidents. PCI DSS compliance, RBAC policies, and immutable audit trails aren't someone else's problem. They're part of your incident response workflow, and this guide treats them that way.

What is ecommerce monitoring and why it matters

Ecommerce monitoring measures your platform's technical health, security posture, and business performance continuously, ensuring customers can browse, add to cart, and complete purchases without friction or data exposure.

Most teams start with infrastructure monitoring: CPU, memory, disk I/O, database connections. That's necessary but not sufficient. A server can be healthy while your payment API silently fails for a percentage of checkout attempts. Infrastructure metrics alone won't surface that.

Effective ecommerce monitoring spans four layers:

- Technical health: Server uptime, API latency, database query times, and error rates at the service level.

- Performance and user experience: Page load speed, Core Web Vitals, Time to First Byte (TTFB), and mobile responsiveness.

- Business metrics: Checkout conversion rate, cart abandonment rate, add-to-cart rate, and payment failure rate.

- Security and compliance: Intrusion detection, vulnerability scanning, fraud signal monitoring, and audit trail integrity.

The distinction most monitoring guides miss is the difference between operational incidents and security incidents. An operational incident (slow checkout page, broken promo code) needs fast coordination. A security incident (unauthorized access to cardholder data, zero-day in your payment gateway) needs fast coordination plus strict access controls and an immutable audit trail that satisfies PCI DSS Requirement 10 and SOC 2 evidence standards. Our incident management for retail teams guide covers the full operational picture if you want the broader context.

The cost of retail downtime and security breaches

During peak seasons, large retail ecommerce platforms can lose $1 to $2 million hourly during outages, according to Erwood Group's 2025 downtime cost analysis, although the figures vary significantly by retailer size and traffic volume. A 15-minute undetected checkout failure during a flash sale erases meaningful revenue before most teams have assembled a response.
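To make the 15-minute example concrete, here is a minimal sketch that scales the hourly loss range quoted above to a shorter outage. It assumes losses accrue linearly over the hour, which is a simplification, and `outage_cost_range` is an illustrative helper rather than part of any tool.

```python
def outage_cost_range(minutes: float, hourly_low: float, hourly_high: float) -> tuple[float, float]:
    """Scale an hourly loss range to an outage lasting `minutes` (assumes linear loss)."""
    fraction_of_hour = minutes / 60
    return hourly_low * fraction_of_hour, hourly_high * fraction_of_hour


# The $1M to $2M hourly range cited above, applied to a 15-minute checkout failure.
low, high = outage_cost_range(15, 1_000_000, 2_000_000)
print(f"15-minute outage: ${low:,.0f} to ${high:,.0f}")
# prints "15-minute outage: $250,000 to $500,000"
```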

Security breaches layer regulatory costs on top of the immediate financial hit. GDPR fines can reach 4% of annual global revenue for serious violations. According to the PCI Security Standards Council, PCI DSS non-compliance penalties can reach tens of thousands of dollars per month, and a confirmed cardholder data breach triggers forensic audits, mandatory card brand assessments, and potential loss of your merchant account. The reputational damage in customer churn and lost enterprise deals often compounds the direct fine exposure significantly.

The two risks compound each other. A security incident that surfaces during peak traffic is harder to isolate, harder to contain, and harder to document for auditors when your team is simultaneously fighting a checkout degradation in a public Slack channel.

Key areas of ecommerce platform monitoring

Technical health and uptime monitoring

Problem: Your team gets paged at 3 AM because a server health metric crossed a static threshold, but the checkout flow is fully functional.

Quick fix: Add synthetic transaction monitoring on the checkout path as a primary alert trigger, and demote raw server metrics to informational dashboards rather than pager-worthy alerts. You can use tools like Datadog or UptimeRobot to run automated probes on configurable intervals, catching failures before real users trigger them.
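As a rough illustration of paging on the checkout path rather than on raw server metrics, the sketch below counts consecutive failed synthetic probes and pages only once a threshold is reached. The `SyntheticCheck` class, the `probe` callable, and the default of two failures are assumptions for illustration. In practice the probe would be a scripted checkout run by your monitoring tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SyntheticCheck:
    probe: Callable[[], bool]      # hypothetical probe: True when the checkout path succeeds
    failures_to_page: int = 2      # assumed threshold, tune to your probe interval
    consecutive_failures: int = 0

    def run_once(self) -> bool:
        """Run the probe once; return True if on-call should be paged."""
        try:
            ok = self.probe()
        except Exception:
            ok = False  # a probe that raises counts as a failed checkout attempt
        self.consecutive_failures = 0 if ok else self.consecutive_failures + 1
        return self.consecutive_failures >= self.failures_to_page


# Simulated probe results: success, two failures, then recovery.
results = iter([True, False, False, True])
check = SyntheticCheck(probe=lambda: next(results))
pages = [check.run_once() for _ in range(4)]
print(pages)  # [False, False, True, False]
```

Requiring consecutive failures trades a slightly slower detection time for fewer pages caused by a single flaky probe.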

How incident.io helps: We connect directly to Datadog. According to the documentation, you can migrate Datadog monitors to automatically create incidents when alert conditions fire, eliminating the manual step of declaring an incident channel in Slack.

Performance and user experience metrics

Three checkout performance indicators directly affect conversion: Largest Contentful Paint (LCP), Time to First Byte (TTFB), and Interaction to Next Paint (INP). Google's Core Web Vitals standard sets LCP under 2.5 seconds and INP below 200 milliseconds as the thresholds for a good user experience. Monitor these continuously with Real User Monitoring (RUM) tools, not just during load tests.
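A minimal sketch of how RUM samples could be rated against these thresholds follows. It assumes the 75th-percentile convention Google uses for Core Web Vitals and the published "poor" boundaries of 4.0 seconds for LCP and 500 milliseconds for INP. The function names and metric keys are illustrative only.

```python
import math

# (good, poor) boundaries from Google's Core Web Vitals guidance.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "inp_ms": (200, 500),
}


def percentile_75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile of the samples."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, samples: list[float]) -> str:
    """Rate a metric as good, needs improvement, or poor based on its p75."""
    good, poor = THRESHOLDS[metric]
    p75 = percentile_75(samples)
    if p75 <= good:
        return "good"
    if p75 <= poor:
        return "needs improvement"
    return "poor"


print(rate("lcp_seconds", [1.2, 1.9, 2.1, 3.8]))  # good
print(rate("inp_ms", [120, 180, 250, 620]))       # needs improvement
```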

Mobile performance deserves particular attention. Desktop sessions convert at roughly 3.9% while mobile sessions convert at 1.8% according to Convertibles.dev, meaning a mobile-specific performance regression hits your most price-sensitive conversion segment directly.

Business metrics and checkout conversion rates

The confusion around ecommerce conversion rates comes from measuring different funnel stages. Site visitor-to-purchase rates vary significantly depending on industry. According to the Baymard Institute, cart abandonment rates average around 70% globally, meaning that only a portion of shoppers who add items complete a purchase. When you set alerts on checkout conversion rate drops, you're watching that final funnel stage, where completion rates are highest because shoppers have already expressed strong purchase intent. The ecommerce funnel KPIs breakdown covers add-to-cart rate and payment failure rate formulas, both of which warrant dedicated alerts.

Security monitoring and compliance

Preventive measures: Build your security monitoring across three tiers: continuous vulnerability scanning for CVEs in your application layer and dependencies, SIEM-based correlation rules that flag anomalous authentication patterns and privilege escalation attempts, and real-time fraud signal monitoring that analyzes transaction patterns and account takeover indicators at checkout.

For PCI DSS compliance, you need to capture audit trail entries for all system components. According to PCI DSS Requirement 10, organizations are expected to log specific categories of events, including all individual access to cardholder data, all actions by users with administrative privileges, invalid logical access attempts, and changes to audit log configurations. Requirement 10.3 specifies the fields each entry must contain: user identification, event type, date and time, success or failure indication, event origination, and identity of affected data or system components. Logs must be retained for 12 months with three months of immediate access. Teams that manually reconstruct this evidence from fragmented Slack threads, Jira tickets, and handwritten notes can spend days per audit cycle before they've written a single word of the control narrative.
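One way to keep log records aligned with the six fields listed above is to model them explicitly and flag incomplete records before they reach long-term storage. The sketch below is an assumption about how that could look. The `AuditEntry` class and its attribute names are hypothetical and are not taken from the PCI DSS text or any product schema.

```python
from dataclasses import dataclass, fields
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    user_id: str             # user identification
    event_type: str          # type of event
    timestamp: datetime      # date and time
    success: bool            # success or failure indication
    origin: str              # origination of the event
    affected_resource: str   # affected data or system component


def missing_fields(raw: dict) -> list[str]:
    """Return the required fields that are absent or empty in a raw log record."""
    return [f.name for f in fields(AuditEntry) if raw.get(f.name) in (None, "")]


record = {
    "user_id": "u-1042",
    "event_type": "admin_login",
    "timestamp": datetime(2026, 3, 27, 9, 30, tzinfo=timezone.utc),
    "success": False,   # a failed attempt is still a complete record
    "origin": "",
}
print(missing_fields(record))  # ['origin', 'affected_resource']
```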

| Monitoring type | Primary tool examples | What it detects | Sample alert threshold |
|---|---|---|---|
| Uptime and synthetic | UptimeRobot, Datadog | Endpoint failures, checkout path breaks | e.g., 2 consecutive |
| APM (performance) | Datadog, New Relic | Latency spikes, error rates | LCP > 4.0s, TTFB > 1800ms |
| SIEM (security) | Splunk, Datadog Cloud SIEM | Auth anomalies, intrusion attempts | e.g., 5 failed logins then successful login from new IP |
| Business analytics | Custom dashboards, GA4 | Conversion drops, cart abandonment spikes | e.g., checkout completion drops >15% from 7-day rolling average |
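The business analytics threshold in the last row can be sketched in a few lines. This example assumes you already have one checkout completion rate per day and treats the 15% figure as a relative drop from the rolling mean. `conversion_drop_alert` is an illustrative name, not a feature of any listed tool.

```python
from statistics import mean


def conversion_drop_alert(daily_rates: list[float], current_rate: float,
                          max_drop: float = 0.15, window: int = 7) -> bool:
    """True when current_rate sits more than max_drop below the rolling mean."""
    if len(daily_rates) < window:
        raise ValueError(f"need at least {window} days of history")
    baseline = mean(daily_rates[-window:])
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    relative_drop = (baseline - current_rate) / baseline
    return relative_drop > max_drop


history = [0.50] * 7                          # a flat 50% completion rate for a week
print(conversion_drop_alert(history, 0.40))   # True: a 20% relative drop
print(conversion_drop_alert(history, 0.45))   # False: a 10% relative drop
```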

How to set up retail alerting without the fatigue

Impact: Alert fatigue is a direct safety risk. When your team treats every alert as noise, a real checkout failure sits unacknowledged because nobody trusts the pager anymore. The fix isn't silencing alerts. It's making each alert meaningful.

Tuning thresholds for peak traffic events

Problem: Static thresholds fail during Black Friday because your normal traffic volume is far lower than your peak. An alert configured to fire when checkout requests exceed a fixed threshold triggers constantly during a successful launch, desensitizing your team. When a real payment API failure occurs during your flash sale, the alert sits unacknowledged because the on-call engineer assumes it's another false positive.

Anomaly detection algorithms learn the normal shape of Black Friday traffic (elevated, predictable) and alert only when the actual pattern deviates from that learned shape, such as a sudden drop in checkout completions during a period when they should be climbing. Adaptive thresholding uses machine learning to dynamically calculate time-dependent thresholds based on historical traffic patterns, so alerts match the expected workload hour by hour rather than firing against a static value set during a quiet Tuesday in October.
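The idea of time-dependent thresholds can be illustrated without machine learning. The sketch below substitutes a much simpler technique, a per-hour mean plus or minus a multiple of the standard deviation, to show how the same value can be normal at noon and anomalous at 3 AM. Production anomaly detection is considerably more sophisticated, and the function names and `k=3.0` default are assumptions.

```python
from collections import defaultdict
from statistics import mean, stdev


def hourly_bands(history, k: float = 3.0) -> dict:
    """Build {hour: (lower, upper)} from (hour_of_day, value) observations."""
    by_hour = defaultdict(list)
    for hour, value in history:
        by_hour[hour].append(value)
    bands = {}
    for hour, values in by_hour.items():
        if len(values) < 2:
            continue  # stdev needs at least two observations
        mu, sigma = mean(values), stdev(values)
        bands[hour] = (mu - k * sigma, mu + k * sigma)
    return bands


def is_anomalous(bands: dict, hour: int, value: float) -> bool:
    """True when value falls outside the learned band for that hour."""
    lower, upper = bands[hour]
    return value < lower or value > upper


# Checkout requests per minute observed at 03:00 and 12:00 on previous days.
history = [(3, 100), (3, 110), (3, 90), (12, 1000), (12, 1100), (12, 900)]
bands = hourly_bands(history)
print(is_anomalous(bands, 12, 1000))  # False: normal for noon
print(is_anomalous(bands, 3, 1000))   # True: far above the 3 AM band
print(is_anomalous(bands, 12, 400))   # True: a drop when traffic should be high
```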

Configure your peak-traffic alerting using this pattern:

- Use anomaly detection for business metrics (conversion rate, checkout completion, payment success rate) rather than static thresholds.

- Set direction-aware alerts so a checkout conversion rate drop during peak traffic triggers a page, but a conversion rate increase does not.

- Widen your anomaly detection sensitivity bands during known peak events like Black Friday and Cyber Monday to reduce false positives from legitimate traffic surges, then tighten them again after the event window closes.

- Apply business hour awareness so a P2 database latency alert at 2 AM on a Tuesday routes to your standard on-call queue, while the same alert at noon on Cyber Monday escalates directly to your incident commander.
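The pattern above can be sketched as follows. The example combines a direction-aware check (only drops page), a wider tolerance inside a declared peak window, and time-aware routing for a P2 alert. The `PeakWindow` class, the 1.5 widening factor, and the route names are hypothetical placeholders for whatever your alerting tool exposes.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class PeakWindow:
    start: datetime
    end: datetime
    band_multiplier: float = 1.5  # assumed widening factor during the event


def active_window(now: datetime, windows: list[PeakWindow]):
    """Return the peak window containing `now`, or None outside peak events."""
    for window in windows:
        if window.start <= now < window.end:
            return window
    return None


def should_page(expected: float, observed: float, tolerance: float,
                now: datetime, windows: list[PeakWindow]) -> bool:
    """Page only when the metric drops below expected by more than the tolerance."""
    window = active_window(now, windows)
    if window is not None:
        tolerance *= window.band_multiplier  # wider band during peak events
    return observed < expected * (1 - tolerance)


def route_p2(now: datetime, windows: list[PeakWindow]) -> str:
    """Escalate a P2 alert further when it fires inside a peak window."""
    return "incident-commander" if active_window(now, windows) else "standard-on-call"


# Black Friday through Cyber Monday 2026.
peak = [PeakWindow(datetime(2026, 11, 27), datetime(2026, 12, 1))]
quiet_tuesday = datetime(2026, 11, 10, 2, 0)
cyber_monday_noon = datetime(2026, 11, 30, 12, 0)

print(should_page(0.60, 0.52, 0.10, quiet_tuesday, peak))      # True
print(should_page(0.60, 0.52, 0.10, cyber_monday_noon, peak))  # False: band widened
print(should_page(0.60, 0.70, 0.10, quiet_tuesday, peak))      # False: increases never page
print(route_p2(quiet_tuesday, peak), route_p2(cyber_monday_noon, peak))
```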

Integrating SIEM and threat intelligence

Connect your SIEM to your monitoring stack and route alerts into incident.io via webhooks. When a Datadog monitor or connected monitoring tool fires an alert, it notifies incident.io. incident.io creates a dedicated Slack channel, routes it to your configured on-call schedule, and begins timeline capture automatically. Our alert severity mapping can help translate monitoring tool severity levels directly to incident.io priority levels, so escalation paths fire correctly without manual triage.
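Conceptually, severity mapping is a lookup from the monitoring tool's labels to your incident priorities. The sketch below shows that idea in isolation. The label names, the priority scheme, and the fallback are assumptions for illustration and do not describe incident.io's actual configuration format or API.

```python
# Hypothetical mapping from a monitoring tool's severity labels to incident priorities.
SEVERITY_TO_PRIORITY = {
    "critical": "P0",
    "high": "P1",
    "warning": "P2",
    "info": "P3",
}
FALLBACK_PRIORITY = "P2"  # assumed default so unrecognized labels still get triaged


def map_priority(alert: dict) -> str:
    """Translate an alert payload's severity label into an incident priority."""
    label = str(alert.get("severity", "")).strip().lower()
    return SEVERITY_TO_PRIORITY.get(label, FALLBACK_PRIORITY)


print(map_priority({"severity": "Critical"}))  # P0
print(map_priority({"title": "no severity"}))  # P2
```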

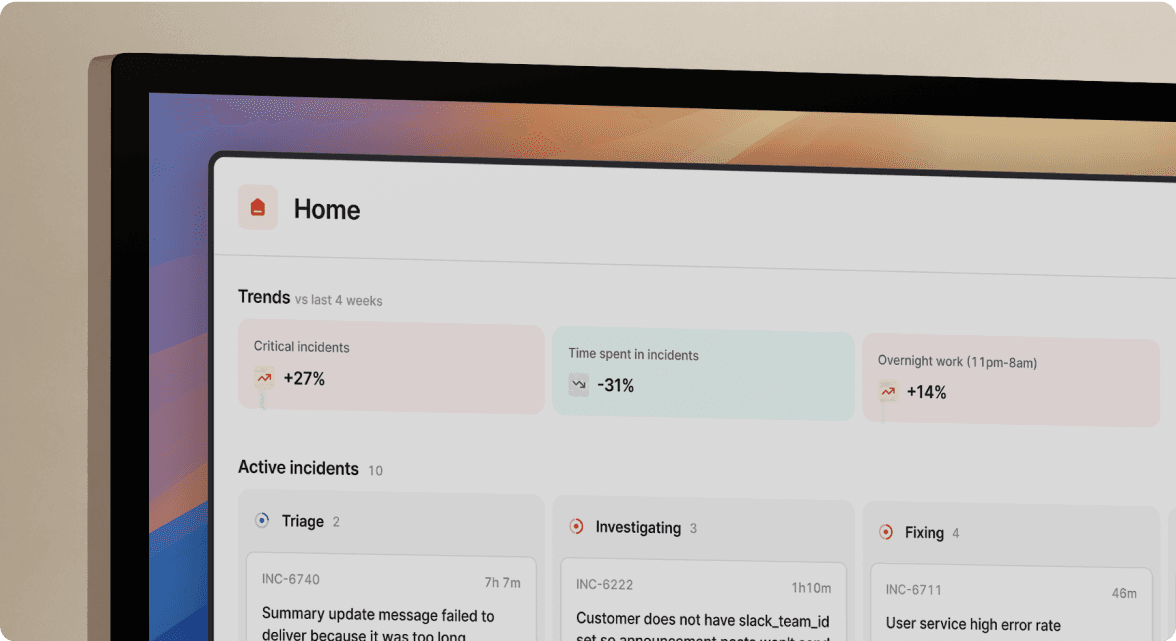

The incident.io Alert Insights dashboard surfaces patterns in your alert volume over time, showing which sources generate the most noise and which correlate with genuine incidents. Use this to systematically prune your alert configuration rather than guessing.

Managing retail incidents: operational vs. security events

Operational and security incidents share the same initial response steps: detect the signal, declare an incident, assemble the right people, start capturing the timeline. But they diverge sharply at access control and documentation.

PagerDuty treats all incidents the same, routing them to an on-call schedule without distinguishing between a slow API (public incident) and a potential cardholder data breach (private incident requiring RBAC). incident.io handles both workflows natively, so you can declare a private security incident from Slack and enforce SAML-provisioned access controls without manual channel permission management.

According to Etsy's engineering team, MTTR dropped from 42 to 28 minutes (roughly a one-third reduction) within 90 days of adopting incident.io. This means your team spends less time on coordination overhead and more time on the actual technical fix, whether that's a slow API or a security event requiring restricted access.

Handling sensitive data with private incidents and RBAC

Problem: A junior on-call engineer posts credentials or PII details in a public Slack channel during a security incident because the tooling didn't restrict access.

Impact: A single PII exposure in a public channel during a breach investigation creates a secondary compliance violation on top of the original incident, and your SOC 2 auditors will ask pointed questions about your access control implementation.

How incident.io helps: When your team needs to isolate a security incident, the incident channel can be converted to a private Slack channel with /inc private. We then enforce access rules provisioned via your SAML/SCIM integration. SCIM handles the provisioning, syncing user group memberships from your IdP to the platform, while incident.io applies access control rules based on those provisioned roles. Only users with explicitly authorized security roles see the channel.
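The access rule described here reduces to a group-membership check. As a minimal sketch of that logic only, and not of incident.io's implementation, the example below grants visibility when a user belongs to at least one authorized group. The group names are hypothetical stand-ins for groups synced from your IdP.

```python
# Hypothetical IdP group names that are allowed to see private security incidents.
AUTHORIZED_GROUPS = frozenset({"security-responders", "security-leads"})


def can_view_private_incident(user_groups, authorized=AUTHORIZED_GROUPS) -> bool:
    """True when the user belongs to at least one authorized group."""
    return not authorized.isdisjoint(user_groups)


print(can_view_private_incident(["engineering", "security-responders"]))  # True
print(can_view_private_incident(["engineering", "on-call"]))              # False
```

Deny-by-default matters here: a user with no provisioned groups gets no access.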

"[incident.io] stands out as a valuable tool for automating incident management and communication, with its effective Slack bot integration leading the way... The platform's compatibility with multiple external tools makes it an excellent central hub for managing incidents." - Vadym C. on G2

Automating immutable audit trails for SOC 2 and PCI DSS

An immutable audit trail is a permanent, unalterable record of every action taken during an incident, capturing who did what, when, and what the outcome was. PCI DSS Requirement 10 mandates tamper-proof logs that cannot be altered retroactively without breaking forensic integrity, with 12-month minimum retention and three months of immediate access.
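One common way to make a log tamper-evident is a hash chain, where each record stores a hash of its own content plus the previous record's hash. The sketch below illustrates that technique under simplifying assumptions. It detects retroactive edits but does not prevent them, so a real deployment would pair it with write-once storage and access controls. All names are illustrative.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, entry: dict) -> str:
    payload = json.dumps(entry, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(chain: list, entry: dict) -> None:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"entry": entry, "prev_hash": prev_hash,
                  "hash": _digest(prev_hash, entry)})


def verify(chain: list) -> bool:
    """Recompute every hash; any retroactive edit breaks the chain."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["entry"]):
            return False
        prev_hash = record["hash"]
    return True


chain = []
append_entry(chain, {"actor": "alice", "action": "declared incident"})
append_entry(chain, {"actor": "bob", "action": "rotated credentials"})
print(verify(chain))                      # True
chain[0]["entry"]["actor"] = "mallory"    # simulate a retroactive edit
print(verify(chain))                      # False
```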

Without automated capture, you reconstruct this evidence manually from five sources: Slack exports, PagerDuty logs, Jira ticket histories, Zoom recordings, and handwritten notes. Teams commonly report spending over an hour per incident on manual reconstruction, and across multiple incidents sampled per SOC 2 audit cycle, that process can consume days before you've started writing the control narrative.

Implementation steps:

- Declare all incidents through incident.io rather than ad-hoc Slack channels, so we capture every action (who joined, what commands ran, who updated the status page, who resolved) in a structured timeline automatically.

- Enable Scribe, our AI note-taking feature, on all incident calls so decisions made verbally on Google Meet or Zoom are transcribed and attached to the timeline without manual note-taking.

- Export timelines in CSV format after each incident as SOC 2 control evidence covering incident response and change management controls.

- Map our timeline fields to PCI DSS Requirement 10 in your compliance documentation so external auditors have a clear traceability matrix showing user identification, event type, timestamp, and outcome for every incident action.
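For the traceability matrix in the last step, a small mapping from timeline columns to the Requirement 10.3 fields is often enough. The sketch below assumes hypothetical timeline field names (`actor`, `action`, and so on) and writes the relabeled rows as CSV. Check the real column names in your own export before relying on a mapping like this.

```python
import csv
import io

# Hypothetical timeline field names mapped to the Requirement 10.3 fields.
FIELD_MAP = {
    "actor": "user identification",
    "action": "event type",
    "occurred_at": "date and time",
    "outcome": "success or failure indication",
    "source": "event origination",
    "target": "affected data or system component",
}


def timeline_to_csv(timeline: list[dict]) -> str:
    """Render timeline events as CSV with columns named after the audit fields."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(FIELD_MAP.values()))
    writer.writeheader()
    for event in timeline:
        # Missing values are written as empty cells so gaps stay visible to reviewers.
        writer.writerow({FIELD_MAP[key]: event.get(key, "") for key in FIELD_MAP})
    return buffer.getvalue()


events = [{
    "actor": "alice", "action": "status page updated",
    "occurred_at": "2026-03-27T09:30:00Z", "outcome": "success",
    "source": "slack", "target": "checkout-api",
}]
print(timeline_to_csv(events))
```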

"1-click post-mortem reports - this is a killer feature, time saving, that helps a lot to have relevant conversations around incidents (instead of spending time curating a timeline)" - Adrian M. on G2

Communication protocols during a retail crisis

Communication failures compound the technical damage. Customers discover outages from Twitter. Your legal team learns about a breach from journalists. The board hears about data exposure from regulators. Clear protocols prevent these scenarios by letting incident commanders delegate communication tracks rather than personally managing every stakeholder.

Organize your communication across critical stakeholder groups:

- Internal and executive: Board, investors, executives, HR, finance. Consider regular updates for P0 events, focusing on business impact, containment status, and estimated resolution time.

- External and customer: Customers, PR, media, support, sales, marketing. Your status page is the primary channel. incident.io automates status page updates when incident states change (declared, investigating, resolved), so the incident commander focuses on resolution rather than copying updates into multiple tools.

- Legal and compliance: Counsel, compliance officers, risk, auditors, regulators. Activate immediately for any confirmed data exposure. Many data protection regulations require prompt notification to supervisory authorities upon becoming aware of a breach.

- Third-party: Partners, suppliers, vendors, contractors. Notify early if they're involved in root cause or customer-facing impact.

"I like that with incident.io, issues are right there in Slack, giving really good visibility into what sort of issues are being submitted and ensuring that people are responding." - Alex N. on G2

Step-by-step checklist for ecommerce monitoring setup

- Define critical business metrics. Document baseline checkout conversion rate, cart abandonment rate, add-to-cart rate, and payment failure rate for standard traffic periods and peak events. Set alert thresholds as percentage deviations from these baselines rather than absolute values.

- Select and integrate monitoring tools. Connect your APM (Datadog, New Relic) and SIEM (Splunk, Datadog Cloud SIEM) to incident.io via webhooks. Our alert routing feature directs security alerts appropriately, ensuring they reach the security on-call schedule rather than the general engineering rotation.

- Configure dynamic alerting thresholds for peak events. Switch from static to anomaly-based thresholds for business metrics. Pre-configure peak event threshold profiles well in advance so the anomaly detection algorithms have time to learn the expected traffic shape.

- Establish clear escalation paths and SLAs for security vs. operational events. Define separate on-call schedules and escalation chains for security incidents (routes to security team with private incident creation) and operational incidents (routes to engineering on-call with standard channel creation). Document MTTD and MTTC targets based on your organization's risk tolerance and compliance requirements.

- Implement a Slack-native incident management platform. Deploy incident.io across your security and SRE teams. Connect us to your identity provider via SAML/SCIM for RBAC enforcement on private incidents. Configure your Datadog monitors to create incidents when critical thresholds breach. New security engineers reach incident response proficiency in days using intuitive /inc Slack commands instead of weeks spent mastering fragmented tool stacks with complex multi-step runbooks.

- Run game days and tabletop exercises. Simulate a checkout failure scenario and a parallel security breach scenario at least quarterly. Use incident.io's incident dashboard to review team response time, role assignment completeness, and post-mortem quality after each exercise.
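The separate escalation paths in the checklist can be pictured as a small routing table. The sketch below is an illustration under assumed names. The alert `source` values, schedule names, and the `Route` type are hypothetical, and real routing would be configured in your incident management platform rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    schedule: str   # on-call schedule that receives the page
    private: bool   # whether the incident channel should be created as private


# Hypothetical routing table for the two incident classes.
ROUTES = {
    "security": Route(schedule="security-on-call", private=True),
    "operational": Route(schedule="engineering-on-call", private=False),
}
SECURITY_SOURCES = {"siem", "fraud", "ids"}  # assumed alert source labels


def route_alert(alert: dict) -> Route:
    """Send alerts from security tooling to the security path, everything else to engineering."""
    kind = "security" if alert.get("source") in SECURITY_SOURCES else "operational"
    return ROUTES[kind]


print(route_alert({"source": "siem", "title": "login from new IP after 5 failures"}))
print(route_alert({"source": "apm", "title": "checkout latency spike"}))
```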

According to Intercom's engineering team, the team saved 40% of incident time overall after adopting incident.io. This means your retail security team can onboard seasonal hires, contract engineers, or newly promoted on-call staff without the weeks-long ramp that fragmented tooling demands, and every engineer who comes up to speed faster is one less gap in your 24/7 coverage rotation.

A few honest trade-offs to factor in: incident.io is built for teams where engineers live in Slack. If your store operations staff, logistics coordinators, or compliance officers work outside Slack, they'll need a separate communication layer for incident updates. On-call scheduling is an add-on to the Pro plan ($25/user/month base plus a $20 on-call add-on, or $45/user/month in total), so factor that into your total cost if your retail security team runs 24/7 rotations. And unlike PagerDuty's highly configurable rule engine, incident.io ships with opinionated defaults: that's a feature if you want fast setup, but a constraint if your incident workflows require deep custom logic.

For teams migrating from Opsgenie or from PagerDuty, our incident.io vs. PagerDuty comparison and PagerDuty alternatives guide cover the migration approach in detail. The ITSM and DevOps integration guide addresses connecting your existing toolchain to a Slack-native platform without rebuilding your entire stack.

Schedule a demo of incident.io and see how we handle private security incidents with RBAC, automated audit trails, and SOC 2/PCI DSS compliance evidence in a live retail security incident simulation.

Key terms glossary

SIEM: Security Information and Event Management. A platform (Splunk, Datadog Cloud SIEM) that aggregates security alerts from across your infrastructure, correlates them into threat patterns, and triggers automated responses via webhooks and playbooks.

RBAC: Role-Based Access Control. A permissions model that grants system access based on a user's defined role rather than individual assignment. In incident management, RBAC ensures only authorized security personnel can view private incident channels, enforced by the platform based on identity provider group memberships.

MTTD: Mean Time to Detect. The average time between when an incident starts and when your monitoring system identifies it. Set your MTTD target based on your organization's specific risk profile and compliance requirements, not industry averages.

MTTC: Mean Time to Contain. The average time from detection to stopping an incident from spreading. For critical security events like potential cardholder data exposure, setting an aggressive MTTC target directly limits the scope of your regulatory exposure.

MTTR: Mean Time to Resolve. The average time from when an incident is detected to when it is fully resolved and service is restored. MTTR is the primary metric for measuring incident response effectiveness: reducing it directly cuts downtime, limits revenue loss, and lowers regulatory exposure. Track MTTR separately for operational incidents (payment processing outages, cart failures) and security incidents (data exposure, fraud spikes), since each has different resolution drivers and compliance implications.

Immutable audit trail: A permanent, unalterable record of all actions taken during an incident, capturing who performed each action, when, and with what outcome. Required for PCI DSS Requirement 10 compliance and SOC 2 Type II evidence.

Adaptive thresholding: An alerting technique that uses machine learning to calculate dynamic alert thresholds based on historical traffic patterns, preventing false positives during legitimate traffic spikes like Black Friday while still catching genuine anomalies.

Core Web Vitals: Google's performance benchmarks for user experience, including Largest Contentful Paint (LCP, generally recommended under 2.5 seconds), Interaction to Next Paint (INP, typically targeting under 200 milliseconds, which replaced First Input Delay in March 2024), and Cumulative Layout Shift (CLS, generally recommended under 0.1).
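The MTTD, MTTC, and MTTR definitions above translate directly into averages over incident timestamps. The sketch below computes all three from a list of incidents, following the glossary's convention that MTTC and MTTR are both measured from detection. The dictionary keys and sample incidents are made up for illustration.

```python
from datetime import datetime
from statistics import mean


def mean_minutes(incidents: list[dict], start_key: str, end_key: str) -> float:
    """Average elapsed minutes between two timestamps across incidents."""
    return mean(
        (incident[end_key] - incident[start_key]).total_seconds() / 60
        for incident in incidents
    )


incidents = [
    {"started": datetime(2026, 3, 1, 10, 0), "detected": datetime(2026, 3, 1, 10, 4),
     "contained": datetime(2026, 3, 1, 10, 20), "resolved": datetime(2026, 3, 1, 10, 40)},
    {"started": datetime(2026, 3, 8, 14, 0), "detected": datetime(2026, 3, 8, 14, 10),
     "contained": datetime(2026, 3, 8, 14, 30), "resolved": datetime(2026, 3, 8, 15, 0)},
]

mttd = mean_minutes(incidents, "started", "detected")    # start to detection
mttc = mean_minutes(incidents, "detected", "contained")  # detection to containment
mttr = mean_minutes(incidents, "detected", "resolved")   # detection to resolution
print(mttd, mttc, mttr)  # 7.0 18.0 43.0
```

Running the same calculation separately over operational and security incidents gives the split tracking recommended in the MTTR entry.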
