Incident management for retail teams 2026: protecting revenue during peak traffic and outages

Updated February 27, 2026.

TL;DR: In retail, every minute of downtime is a revenue event, not just a technical one. A checkout failure during peak shopping periods can result in significant revenue losses, especially when traffic is higher than usual. Generic DevOps processes move too slowly for these stakes. The retailers protecting revenue in 2026 combine pre-season load testing with Slack-native incident coordination, automated status pages, and immutable audit trails that satisfy PCI DSS v4.0. This guide gives you the framework to build that practice, from pre-season load testing to automated post-mortems.

For large ecommerce platforms, even short checkout disruptions during major sales events can translate into measurable revenue impact. Monitoring systems typically detect these issues quickly, but response speed often depends on how efficiently teams coordinate investigation and communication.

In retail environments, incident management extends beyond technical resolution. It directly affects revenue protection and customer trust. As distributed commerce architectures grow more complex, response strategies must align with the pace and scale of transaction volume. Here is your guide to building a revenue-aware incident management practice.

The high stakes of retail reliability: Why every minute costs money

Retail traffic is nothing like B2B SaaS traffic. A SaaS product sees predictable daily usage curves. A retail platform sees 10-second spikes when a flash sale email hits 2 million inboxes at noon. That bursty, emotionally charged traffic pattern means your reliability decisions directly cost or save revenue in ways that most DevOps frameworks never anticipated.

Retail platforms often measure business impact through the revenue implications of performance and reliability. Research shows that added latency correlates with reduced sales. For example, Amazon found that every 100 ms of additional latency was associated with a measurable drop in revenue, a finding that has endured as a widely cited industry benchmark.

Slower page load times also tend to reduce conversion rates and increase bounce rates, which can translate into lost revenue for ecommerce sites. Faster performance has been shown to improve engagement and conversions, emphasizing that responsiveness and reliability directly affect business outcomes.

Calculating the true cost of downtime in ecommerce

Understanding your revenue per minute (RPM) gives you the lever to justify investment in better tooling. Here's how to calculate it and what to factor in beyond the obvious.

The base formula:

Start with your annual revenue distributed evenly across the year (525,600 minutes in a year):

Revenue Per Minute = (Annual Revenue ÷ 525,600 minutes) × Peak Traffic Multiplier

For a retailer with $100M in annual revenue: $100,000,000 ÷ 525,600 ≈ $190/minute on a normal day. During Black Friday at a 4x traffic multiplier, that becomes approximately $760/minute. A 20-minute P1 incident costs roughly $15,200 in direct lost sales, before you account for hidden costs.
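As a rough illustration, the formula and worked example above can be reproduced in a few lines of Python. This is only a sketch: the function names are made up for this example, and the even distribution of revenue across the year is the same simplifying assumption the formula makes.

```python
MINUTES_PER_YEAR = 525_600  # 365 days x 24 hours x 60 minutes


def revenue_per_minute(annual_revenue: float, peak_multiplier: float = 1.0) -> float:
    """Revenue Per Minute = (annual revenue / 525,600) x peak traffic multiplier."""
    return annual_revenue / MINUTES_PER_YEAR * peak_multiplier


def direct_incident_cost(annual_revenue: float, peak_multiplier: float, minutes_down: float) -> float:
    """Direct lost sales for an outage, before any hidden costs are added."""
    return revenue_per_minute(annual_revenue, peak_multiplier) * minutes_down


# Worked example from the text: $100M annual revenue, 4x multiplier, 20-minute P1.
baseline = revenue_per_minute(100_000_000)
peak = revenue_per_minute(100_000_000, peak_multiplier=4)
cost = direct_incident_cost(100_000_000, peak_multiplier=4, minutes_down=20)
print(f"baseline ${baseline:,.0f}/min, peak ${peak:,.0f}/min, 20-minute P1 ${cost:,.0f}")
```

Without intermediate rounding the script reports about $761/minute and $15,221, which matches the rounded figures above. Swap in your own annual revenue and multiplier to get a number you can take to a budget conversation.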

The direct revenue loss is just the starting point. The full incident cost typically runs 2-3x your initial RPM calculation once you factor in these downstream effects:

| Cost category | What happens | Typical impact |
|---|---|---|
| Support ticket surge | Customers flood support channels during and after outages | Significant agent-hour burden per P1 incident |
| Cart abandonment residual | Customers who experience a bad checkout often don't return | Long-tail revenue loss, especially for first-time buyers |
| SLA penalties | Marketplace sellers (Amazon, Google Shopping) face contract penalties | Varies by contract terms |
| Brand reputation | Social media amplification accelerates during peak events | Hard to quantify, long-tail revenue impact |

Nearly a quarter of retail companies lack a plan for when their websites go down. That's not just a technical gap: it's an unmitigated financial risk sitting on your balance sheet.


Preparing for peak: A Black Friday incident management checklist

The teams that survive Black Friday with clean MTTR numbers don't improvise when the checkout service goes down. They run the same mental reps before the event that an incident commander runs during one. Complete this checklist 4-6 weeks before any major peak event.

Teams that practice chaos simulations and game days report faster MTTR during real incidents. Run your pre-peak game days every two weeks in the month before the event, then step up to weekly in the final two weeks.

Pre-peak readiness checklist

Technical readiness:

- Run load tests using real peak data shapes from last season, including peak traffic minutes and error rates by service (search, checkout, payment)

- Test all critical paths: search, checkout, payment processing, and order confirmation under simulated peak load

- Validate CDN configuration and confirm product pages load in under 2 seconds under peak concurrency

- Confirm database connection pool limits are set above peak concurrency estimates

- Audit third-party dependencies (payment gateway, tax service, loyalty API) and document fallback behavior if each one fails

- Verify your incident lifecycle automations trigger correctly under load

Process readiness:

- Lock all major promotional dates and offers before your freeze period begins

- Confirm you have set all on-call schedules with backups for each rotation

- Pre-draft status page update templates for checkout-down, payment-degraded, and search-unavailable scenarios

- Assign incident commander and communications lead roles before the event starts

- Test escalation paths: verify you can reach each on-call engineer in under 3 minutes

- Define severity thresholds specific to peak season (e.g., P1 = checkout failure affecting >5% of sessions vs. the normal >20%)

People readiness:

- Brief customer support on the communication protocol: they should never learn about an incident from a customer tweet

- Confirm logistics and fulfillment contacts are in your on-call bridge for order-affecting incidents

- Run a 30-minute tabletop game day simulating a checkout gateway failure during the 9 AM peak window

- Confirm all engineers have tested slash command workflows (/inc, /inc escalate, /inc resolve) before the event

Post-event:

- Schedule a post-peak retrospective within 48 hours and review MTTR trends in your Insights dashboard before declaring the season a success
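The peak-season severity thresholds from the process checklist are easier to enforce when they live in code rather than in someone's memory. The sketch below assumes the example thresholds from the checklist (P1 above 5% of sessions during peak, above 20% normally). The policy names and the "P2" label for anything below the threshold are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeverityPolicy:
    name: str
    p1_failed_session_pct: float  # checkout failures above this share of sessions are P1


# Example thresholds from the checklist; tune them to your own traffic.
NORMAL = SeverityPolicy("normal", p1_failed_session_pct=20.0)
PEAK = SeverityPolicy("peak", p1_failed_session_pct=5.0)


def checkout_severity(failed_sessions: int, total_sessions: int, policy: SeverityPolicy) -> str:
    """Classify a checkout failure as P1 or P2 under the given policy."""
    if total_sessions <= 0:
        raise ValueError("total_sessions must be positive")
    failed_pct = 100.0 * failed_sessions / total_sessions
    # "P2" is a placeholder for "below the P1 threshold" in this sketch.
    return "P1" if failed_pct > policy.p1_failed_session_pct else "P2"


# The same 8% failure rate is a P2 on a normal day and a P1 during peak.
print(checkout_severity(80, 1000, NORMAL), checkout_severity(80, 1000, PEAK))
```

Switching the active policy at the start of the freeze period means the stricter threshold applies automatically, without anyone re-reading a runbook mid-incident.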

Structuring your retail incident response plan

Generic incident response flows can't handle retail pressure because they ignore non-engineering stakeholders, don't weight severity by revenue, and miss the communication cadence customers expect when a sale they planned their week around suddenly stops working. Here's how to build one that does.

Automating detection and triage for ecommerce platforms

Monitoring platforms such as Datadog, New Relic, and Prometheus detect technical anomalies quickly. However, alerts like “cart service latency p99 > 800ms” do not inherently communicate business impact to stakeholders outside the SRE team.

When technical signals are not mapped to revenue-critical services, teams spend additional time translating system metrics into business context before coordinated action begins.

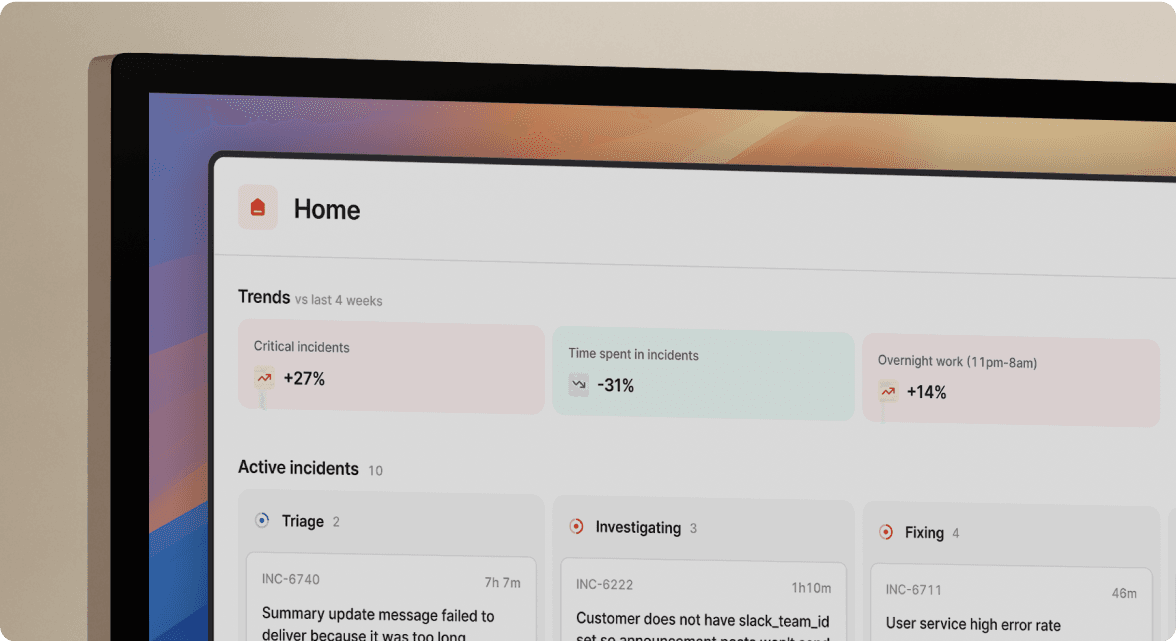

Map technical services to business functions before an incident starts. Using the incident.io Service Catalog, teams can define entries like svc-payment-gateway and link them to business context: "Checkout Flow," severity level "Critical," and owner "Payments Team." When a Datadog alert fires against that service, everyone joining the incident channel immediately understands the business impact without a separate briefing.

This mapping also powers incident types, so a checkout failure automatically triggers a different response workflow than a search degradation, pulling in the right on-call rotation without manual triage.
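To make the idea of mapping services to business context concrete, here is a minimal Python sketch. It is not the incident.io Service Catalog API: the CatalogEntry structure and the describe_alert helper are hypothetical, and only the svc-payment-gateway values come from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    service: str
    business_function: str
    severity: str
    owner: str


# Hypothetical in-memory catalog; a real one would live in your tooling.
CATALOG = {
    "svc-payment-gateway": CatalogEntry(
        service="svc-payment-gateway",
        business_function="Checkout Flow",
        severity="Critical",
        owner="Payments Team",
    ),
}


def describe_alert(service: str, alert_title: str) -> str:
    """Turn a raw alert into a line that non-engineers can act on."""
    entry = CATALOG.get(service)
    if entry is None:
        return f"{alert_title} on {service} (no business mapping yet: triage manually)"
    return (
        f"{alert_title} on {service}: affects {entry.business_function} "
        f"({entry.severity}), owned by {entry.owner}"
    )


print(describe_alert("svc-payment-gateway", "latency p99 > 800ms"))
```

The useful part is the fallback branch: any service that returns "no business mapping yet" during a game day is a gap to close before peak.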

"incident.io has dramatically improved the experience for calling incidents in our organization, and improved the process we use to handle them... new users have found the product easy enough to grasp that training isn't generally required." - Verified User in Computer Software on G2

Coordinating cross-functional teams

Your major retail incidents affect more than your engineering team. When checkout goes down, you immediately impact customer support (ticket volume spikes), logistics (order processing stalls), and PR (social media starts within minutes). If those teams find out about an incident from an angry customer tweet instead of an automated alert, your response is already behind.

Your incident channel needs to include:

- Engineering: SRE/on-call engineer, incident commander, service owner

- Customer Support Lead: Manages agent scripts and updates customer-facing teams in real time

- Logistics/Fulfillment contact: Assesses impact on order queue and shipping SLAs

- Communications/PR lead: Approves and publishes any public statements

- Executive stakeholder: For P1s affecting checkout, someone with business context needs visibility without interrupting engineering work

The platform's Decision Flows automate role assignments based on incident type, so a "payment degraded" incident automatically pages the Payments Team on call, invites the Customer Support Lead, and triggers the appropriate communication runbook without manual setup.
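Conceptually, this kind of automation is a lookup from incident type to a set of actions. The sketch below shows that idea in plain Python. It is an illustration only, not how Decision Flows are configured: the incident types, team names, runbook identifiers, and the plan_response helper are all hypothetical.

```python
# Hypothetical routing table: incident types, team names, and runbook IDs are
# illustrative and are not taken from any real platform configuration.
ROUTING = {
    "payment degraded": {
        "page": ["Payments Team on-call"],
        "invite": ["Customer Support Lead"],
        "runbook": "payment-degraded-comms",
    },
    "checkout down": {
        "page": ["Payments Team on-call", "SRE on-call"],
        "invite": ["Customer Support Lead", "Communications/PR lead", "Executive stakeholder"],
        "runbook": "checkout-down-comms",
    },
}

# Used when an incident type has no explicit rule yet.
FALLBACK = {"page": ["SRE on-call"], "invite": [], "runbook": "generic-comms"}


def plan_response(incident_type: str) -> list[str]:
    """Return the ordered actions to take when an incident of this type is declared."""
    rule = ROUTING.get(incident_type.strip().lower(), FALLBACK)
    actions = [f"page {who}" for who in rule["page"]]
    actions += [f"invite {who}" for who in rule["invite"]]
    actions.append(f"start runbook {rule['runbook']}")
    return actions


for step in plan_response("Payment Degraded"):
    print(step)
```

Writing the table down, even on paper, forces the conversation about who actually needs to be in the channel for each incident type before the incident happens.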

"I can easily understand what is going wrong and what customers are affected during an incident... Customers love the Status Page, it's easy to navigate, looks really slick and takes the stress away from my team from having to manually send out communications." - Will P. on G2

Managing internal and external communication

Your engineering team cannot fix the incident and field questions from 50 customer support agents at the same time. Split the communication onto a separate, automated track.

One engineer owns the incident channel, everyone else focuses on the fix, and your status page updates automatically from Slack commands. That's the pattern that works. Within the incident.io platform, the customer updates feature lets you publish to an external status page directly from /inc update in Slack, without switching to a separate browser tab.

When the incident resolves with /inc resolve, the status page updates automatically, and a post-mortem draft generates from the captured timeline. No one needs to remember to close out the public communication in the chaos of recovery. The status page's severity mapping ensures the right level of transparency: a degraded payment experience displays differently to customers than full checkout unavailability, which prevents overcommunication that drives unnecessary support volume.

The role of SRE in modern retail

Digital retail has no concept of "if the store opens, we're live." Any degradation in checkout performance shows up in real-time revenue data, and your customers have no patience for latency that didn't exist yesterday.

That reality creates a specific tension for retail SRE teams: balancing feature velocity (new storefronts, personalization, A/B testing) with stability. The teams that handle this well have shifted from hero culture, where one senior engineer carries every incident in their head, to systematic reliability, where the process runs independently of who's on call.

Picnic, the digital-native grocery delivery company, demonstrates exactly this model. Watch how they combine physical and digital reliability to protect operations where a technical failure directly means undelivered groceries, not just an unfulfilling web experience.

The shift from hero culture to systematic reliability requires three things:

- Documented process: The /inc tutorial workflow means a new engineer on their first on-call shift knows exactly what to do without a senior engineer standing over them.

- Reliable escalation: Automated paging based on service ownership, not tribal knowledge about who knows what.

- Improvement data: MTTR trends, incident frequency by service, and time-to-resolution distributions give your VP Engineering the evidence to defend reliability investment at the board level.

Security and compliance: Handling data breaches and PCI DSS

When an incident involves customer payment data, you're not just writing a post-mortem. You're creating evidence for a PCI DSS audit.

Retail security incidents fall into three main categories:

- Magecart/digital skimming attacks: Injected JavaScript steals payment card data from checkout pages. Formjacking drives roughly three-quarters of web breaches and hits retail especially hard.

- Account takeover (ATO) attempts: 15% of all login requests are malicious ATO attempts, and volume spikes during peak shopping seasons when support teams are stretched thin.

- Application-layer DDoS attacks: These degrade checkout performance without triggering standard volume-based alerts and often arrive with ransom demands during high-traffic events.

PCI DSS v4.0 requirements you can't afford to miss:

PCI DSS v4.0 Requirement 12.10 mandates a documented incident response plan covering roles, responsibilities, communication strategies (including how to notify acquirers and payment brands), containment procedures, and recovery processes. Requirement 10 requires tamper-proof audit trails protected from unauthorized modification and backed up to a system that cannot be altered retroactively.

Manual incident documentation fails this requirement because a document such as a Google Doc can be edited retroactively, destroying your audit trail. Auto-captured timelines in incident.io provide the immutable documentation that PCI DSS v4.0 evidence requirements demand, with every action timestamped and logged through the audit logs API.
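To see why an editable document cannot serve as an audit trail, it helps to look at what "tamper-evident" means mechanically. The sketch below is a generic hash-chained log in Python, where each entry's hash covers the previous entry's hash. It illustrates the concept only: it is not incident.io's implementation and is not, by itself, a PCI DSS-compliant control. The function names and event fields are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append_event(log: list, timestamp: str, actor: str, action: str) -> None:
    """Append a timestamped action, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    event = {"timestamp": timestamp, "actor": actor, "action": action}
    log.append({"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log: list) -> bool:
    """Return False if any entry was edited, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


timeline = []
append_event(timeline, "2026-11-27T09:02:00Z", "on-call", "declared P1: checkout failing")
append_event(timeline, "2026-11-27T09:05:00Z", "incident-commander", "rolled back payment config")
print(verify(timeline))  # the untouched chain verifies

timeline[0]["event"]["action"] = "declared P3"  # a retroactive edit
print(verify(timeline))  # the edit breaks the chain
```

A retroactive edit to a shared document leaves no such trace, which is the gap an auditor will ask about.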

For security-sensitive incidents (suspected skimming attacks, account takeover investigations), incident.io's Private Incidents mode keeps the incident channel visible only to authorized responders, so a security investigation doesn't broadcast cardholder data concerns across your engineering org.

How to choose the right incident management platform for retail

Why Slack-native coordination wins during traffic spikes

During peak retail incidents, engineers operate under significant cognitive load while monitoring dashboards, evaluating remediation steps, and responding to stakeholder questions. Frequent tool switching can fragment attention, and repeated context changes may slow coordinated response efforts.

Slack-native incident management reduces context switching by allowing declaration, coordination, updates, and resolution to occur within the same communication environment teams already use.

"The integration with slack is slick and the web experience flows easily. Incident.io makes working through stressful incidents smooth, there are better places to be focusing your energy during an incident than your reporting software." - Verified User in Computer Software on G2

Switching to Slack-native incident management eliminates the coordination tax of toggling between PagerDuty, Slack, Jira, and Confluence, with teams reporting up to 80% MTTR reductions after making the switch.

PagerDuty is widely used for alert detection and on-call paging. incident.io focuses on coordinating the full incident lifecycle by automating channel creation, assembling responders, capturing timelines, and managing communication through resolution. We integrate with PagerDuty rather than replace it, handling the coordination PagerDuty wasn't built for.

This Slack for Incident Management ChatOps guide walks through exactly how this coordination model works in practice.

Leveraging AI to reduce MTTR and protect revenue

During major retail incidents, delays often stem from coordination and stakeholder alignment rather than technical debugging alone. When executives or business leaders join mid-incident, engineers may need to pause troubleshooting to provide status context, which can slow resolution efforts.

The platform's AI SRE assistant handles that automatically. Scribe transcribes incident calls in real time and generates a live summary that any stakeholder can read without interrupting the response team. The AI uses the captured timeline to identify what's been tried, what worked, and what the current hypothesis is, so a joining VP or CTO gets full context in 30 seconds instead of pulling engineers off the fix.

The AI also runs automated investigations against your monitoring data and past incidents to surface likely root causes based on similar patterns your team has resolved before. For retail teams handling 15-25 incidents per month, that pattern-matching becomes increasingly powerful over time.

After resolution, the AI auto-drafts the post-mortem from the captured timeline, requiring about 15 minutes of editing rather than 90 minutes of reconstruction.

"1-click post-mortem reports - this is a killer feature, time saving, that helps a lot to have relevant conversations around incidents (instead of spending time curating a timeline)." - Adrián M. on G2

For retail teams running 20 incidents per month at $150/hour loaded engineer cost, eliminating 75 minutes of post-mortem reconstruction per incident recovers (20 incidents × 1.25 hours × $150) = $3,750 monthly, or $45,000 annually in engineering time that returns to proactive reliability work instead of incident archaeology. Calculate your specific numbers using the incident.io postmortem ROI calculator.
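If you want to sanity-check that arithmetic with your own inputs, a small Python helper does the job. This is a back-of-the-envelope sketch, not the ROI calculator mentioned above, and the function name is made up for this example.

```python
def postmortem_savings(incidents_per_month: int, minutes_saved_per_incident: float, hourly_cost: float):
    """Return (monthly, annual) engineering cost recovered from faster post-mortems."""
    monthly = incidents_per_month * (minutes_saved_per_incident / 60) * hourly_cost
    return monthly, monthly * 12


# Figures from the text: 20 incidents/month, 75 minutes saved each, $150/hour loaded cost.
monthly, annual = postmortem_savings(20, 75, 150)
print(f"${monthly:,.0f} per month, ${annual:,.0f} per year")
```

With the figures above it prints $3,750 per month and $45,000 per year. The result scales linearly, so halving your incident count or your time saved halves the recovered cost.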

See incident.io: Supercharged with AI for a walkthrough of how the AI SRE assistant works across the full incident lifecycle.

Ready to protect your ecommerce revenue during peak season? Book a demo with incident.io to see how retail and high-transaction teams handle Black Friday-scale incidents in Slack.

Key terminology for ecommerce reliability

Peak traffic: A period of significantly elevated user traffic during major sales events like Black Friday, Cyber Monday, or flash sales triggered by promotional emails. Shopify's Cyber Monday 2025 outage illustrated how even brief technical difficulties at peak moments have an outsized business impact, however concentrated or distributed that traffic is.

Freeze period: A designated window before a peak event during which no non-critical changes deploy to production systems, typically starting 24-72 hours before the event opens. The goal is to maximize stability by removing the risk of regression-related incidents during your highest-revenue window.

Revenue Per Minute (RPM): Your platform's expected revenue output per minute at a given traffic level. The primary metric for framing incident severity in retail, and the basis for any MTTR-to-revenue conversion calculation. RPM during peak events typically runs 3-4x your normal RPM.

MTTR (Mean Time to Resolution): The average time from incident detection to full resolution. In retail terms, MTTR is your primary lever for controlling total revenue lost per incident.

PCI DSS: Payment Card Industry Data Security Standard, the security rules governing any organization that processes branded payment cards. Version 4.0, released March 2022, strengthened incident response requirements under Requirement 12.10 and mandates immutable audit trails under Requirement 10, both of which directly affect how retail teams must document incidents.

Cart abandonment rate: The percentage of shoppers who add items to a cart but do not complete the purchase. Two-second delays push abandonment to 87%, which makes the abandonment rate a leading indicator of latency-related incident severity before a full outage is declared.

Game day: A structured exercise where your team intentionally triggers failure scenarios in a staging environment that mirrors production traffic patterns. The goal is to verify that your incident response process works under realistic conditions before an actual peak event reveals gaps in your process or tooling.
