
Common postmortem software mistakes to avoid during selection and implementation

Updated February 16, 2026

TL;DR: The costliest mistake teams make is selecting postmortem software that requires manual data entry after the fact rather than capturing the timeline automatically while the incident happens. Tools that don't integrate deeply with your chat platform create data silos that make root cause analysis incomplete. Manual timeline reconstruction wastes 60-90 minutes per incident and delays learning. Before purchasing, verify SOC 2 Type II compliance and test with real incidents to ensure your team actually adopts the tool during high-stress outages.

Selecting the right postmortem software is an important decision that affects how your team learns from incidents. Many teams discover too late that their chosen tool creates unnecessary work or doesn't fit their workflow. This guide outlines the most common pitfalls to avoid during the selection and implementation process.

Mistake 1: Selecting tools that isolate incident data from where work happens

Problem: Choosing a standalone web-based tool that requires engineers to copy-paste logs from Slack, alerts from Datadog, and decisions from Zoom calls into a separate documentation platform.

Impact: Context switching kills momentum during incidents. When your team toggles between PagerDuty, Slack, a web-based incident tool, and a Google Doc, critical information is scattered across four places. Teams using fragmented tooling spend 12+ minutes on logistics before troubleshooting starts. That's 12 minutes of customer-facing downtime caused by tool sprawl.

Post-mortems become incomplete because no one remembers which Slack thread contained the deployment rollback decision.

The fix: Prioritize Slack-native or Teams-native tools that capture data where your team already works. Look for platforms that automatically record Slack messages, pinned screenshots, and slash command executions directly into the incident timeline without requiring manual data entry.

We capture timeline data automatically by monitoring incident channels for pinned messages, slash commands, and status changes. When you run an incident using /inc commands, every action auto-populates the timeline: role assignments, severity changes, Slack threads, and shared links. We stamp pinned items at the time of their actual posting, not when they were pinned, so you can catch up on documentation during the incident without losing chronological accuracy.
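To make the pinned-item behavior concrete, here is a minimal Python sketch of the ordering rule. It is not our production code: the `TimelineItem` class, its field names, and the sample messages are hypothetical, chosen only to show that sorting by original posting time keeps the timeline chronological even when something is pinned late.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class TimelineItem:
    """Hypothetical timeline entry; field names are illustrative only."""
    text: str
    posted_at: datetime                   # when the message was originally written
    pinned_at: Optional[datetime] = None  # when someone pinned it, if ever


def build_timeline(items: List[TimelineItem]) -> List[TimelineItem]:
    """Order entries by original posting time, ignoring when they were pinned."""
    return sorted(items, key=lambda item: item.posted_at)


# Made-up sample data: the rollback message was written first but pinned last.
items = [
    TimelineItem("Severity raised to critical", datetime(2026, 1, 5, 3, 20)),
    TimelineItem(
        "Rolling back deploy",
        posted_at=datetime(2026, 1, 5, 3, 12),
        pinned_at=datetime(2026, 1, 5, 3, 45),  # pinned late, during catch-up
    ),
]

timeline = build_timeline(items)
for item in timeline:
    print(item.posted_at.strftime("%H:%M"), item.text)
# 03:12 Rolling back deploy
# 03:20 Severity raised to critical
```

Sorting on `pinned_at` instead would place the rollback after the severity change, which is exactly the distortion that stamping by posting time avoids.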

"Without incident.io our incident response culture would be caustic, and our process would be chaos. It empowers anybody to raise an incident and helps us quickly coordinate any response across technical, operational and support teams." - Matt B. on G2

Test integration depth during your pilot. Fire a real alert from your monitoring tool. Does the postmortem tool automatically create an incident channel? Does it capture the alert context without manual copy-paste? If you're still opening browser tabs and taking screenshots, the integration isn't deep enough.

Mistake 2: Underestimating the "manual reconstruction" tax

Problem: Assuming engineers have time or memory to reconstruct timelines manually 24 hours after an incident resolves.

Impact: Manual post-mortem creation wastes 60-90 minutes per incident as engineers hunt through Slack messages, Jira tickets, monitoring alerts, deployment logs, and call recordings to piece together what happened.

This delay creates information decay. Details are forgotten. Timestamps become approximate. Critical context disappears because it happened in a Zoom call nobody transcribed. When facts are fuzzy, guesswork and subjective recollections can lead to finger-pointing rather than systems analysis. Postmortems become rushed, incomplete, or skipped entirely, which negates their learning value.

The fix: Look for auto-capture capabilities. The software should record what happens during the incident, not after. Evaluate tools that offer real-time call transcription, automatic Slack message capture, and integration event logging.

| Aspect | Manual process | Automated capture |
|---|---|---|
| Timeline creation | 60-90 min reconstruction | 10-15 min refinement |
| Data sources | Hunt across 5+ tools | Captured in one place |
| Time to publish | 3-5 days post-incident | Within 24 hours |
| Accuracy | Fuzzy memories, missing context | Real-time capture |

Our approach: We use the captured timeline to generate post-mortem drafts with AI, including incident summary, timeline of events, contributing factors, and suggested action items. Engineers spend 10-15 minutes reviewing and refining instead of 90 minutes writing from scratch. Our AI automates up to 80% of incident response work, and Scribe listens to incident calls, transcribes in real time, and surfaces key points so critical context never gets lost.

Favor reduced MTTR by 37% after implementation. The key driver wasn't faster troubleshooting. It was eliminating coordination overhead and documentation toil that previously consumed hours per incident.

Test this during your pilot by running two incidents side-by-side. Use your current process for one incident and the new tool for another. Measure actual time-to-publish-post-mortem for both. If the new tool doesn't save at least 45 minutes per incident, it's not solving the problem.

Mistake 3: Ignoring the learning curve for on-call engineers

Problem: Buying a complex enterprise platform that requires extensive training before engineers can run their first incident.

Impact: During a 3 AM outage, stressed engineers revert to what they know (ad-hoc Slack channels and manual Google Docs) because the official tool is too hard to use under pressure. Navigation complexity, context loss from switching between systems, and access barriers create friction when speed matters most.

At Favor, the previous tooling created coordination pain. Opsgenie handled alerting, but Status Page required manual updates through a separate login. Engineers without licenses couldn't post updates at all. During incidents, people asked, "Where's the group chat? Can you add me?" Questions like these waste minutes while customers are impacted.

New on-call engineers feel this friction most acutely. If the tool requires remembering a 47-step runbook or navigating through nested menus, junior engineers won't use it confidently. They'll ping senior engineers at 3 AM instead.

The fix: Test for time-to-first-incident. Can a new hire run an incident with zero formal training? The interface should feel intuitive during high-stress moments.

Look for tools that use familiar patterns. Slash commands in Slack (/inc escalate, /inc resolve) feel like natural chat messages. Web dashboards with 12 navigation options feel like homework.

We designed the platform so teams can declare an incident instantly from any Slack message using shortcuts or slash commands. No context switching. No training required. The entire incident lifecycle happens where your team already works.

During your pilot, involve a junior engineer who's new to on-call. Give them 5 minutes with the tool, then have them run a test incident. If they struggle with basic actions like escalating to another team or updating severity, the tool is too complex.

Mistake 4: Overlooking security and compliance requirements

Problem: Selecting a tool without verifying SOC 2 compliance, GDPR requirements, and data encryption standards upfront.

Impact: Your CISO blocks the purchase after you've already piloted the tool and convinced your team to adopt it. Or worse, sensitive incident data leaks through a tool that wasn't designed for compliance-conscious industries.

The fix: Verify these standards before starting a pilot:

- SOC 2 Type II certification: Request a copy of the report. Type II attests that controls operated effectively over an observation period (commonly 6+ months), not just that they were designed properly at a point in time.

- GDPR compliance: Ensure data residency options and data retention policies meet requirements.

- Data encryption: At-rest (AES-256) and in-transit (TLS 1.3).

- Access controls: SAML support for enterprise SSO and SCIM support for user provisioning.

- Private incidents: Can you restrict access to security-sensitive incidents?

We successfully completed our SOC 2 Type I audit and are working toward Type II certification. Our platform is GDPR compliant with EU data residency and removes data on request within 30 days. For security-sensitive incidents, you can mark incidents as private and restrict channel access to specific responders.

Schedule a security review meeting early in your evaluation cycle. Invite your CISO to review the vendor's security documentation before you invest time in a pilot. This prevents championing a tool internally only to have it blocked by compliance at the purchase stage.

Mistake 5: Failing to define actionable success metrics

Problem: Implementing a tool but having no way to prove it's working. You can't show executives whether MTTR improved or whether the investment was worth it.

Impact: Six months after rollout, your VP of Engineering asks "Did this tool reduce incidents?" and you have no data. Without metrics, you can't justify renewal costs or identify which parts of your incident process still need improvement.

Track mean time to detect (MTTD), mean time to resolution (MTTR), incident frequency, and severity distribution. MTTR is the average time it takes to recover from system failure, including the full outage time. Without visibility into these metrics, you don't know if that weekend outage was an anomaly or part of a trend. You can't identify that 60% of your incidents are database-related and justify hiring a dedicated database SRE.
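If your current tooling can export incident timestamps, these numbers are simple to compute yourself. The sketch below is illustrative only: the `Incident` fields, the `make_incident` helper, and the five sample incidents are made up, and MTTR is measured from failure to full recovery to match the definition above.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Incident:
    """Hypothetical export row; field names are assumptions, not a real schema."""
    started_at: datetime   # when the system failed
    detected_at: datetime  # when someone (or an alert) noticed
    resolved_at: datetime  # when the system was fully operational again
    category: str


def mean_duration(durations):
    durations = list(durations)
    return sum(durations, timedelta()) / len(durations)


def mttd(incidents):
    """Mean time to detect: failure start to detection."""
    return mean_duration(i.detected_at - i.started_at for i in incidents)


def mttr(incidents):
    """Mean time to resolution: failure start to full recovery."""
    return mean_duration(i.resolved_at - i.started_at for i in incidents)


def category_share(incidents):
    """Fraction of incidents per category."""
    counts = Counter(i.category for i in incidents)
    return {name: n / len(incidents) for name, n in counts.items()}


def make_incident(day, detect_min, resolve_min, category):
    """Build a sample incident starting at midnight on the given January day."""
    start = datetime(2026, 1, day)
    return Incident(
        start,
        start + timedelta(minutes=detect_min),
        start + timedelta(minutes=resolve_min),
        category,
    )


# Made-up sample data for illustration.
incidents = [
    make_incident(1, 10, 60, "database"),
    make_incident(2, 5, 30, "database"),
    make_incident(3, 15, 120, "network"),
    make_incident(4, 20, 90, "database"),
    make_incident(5, 10, 60, "deploy"),
]

print(mttd(incidents))                        # 0:12:00
print(mttr(incidents))                        # 1:12:00
print(category_share(incidents)["database"])  # 0.6
```

A category breakdown like the last line is what turns "we have a lot of database problems" into a number you can put in front of a hiring committee.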

The fix: Ensure the tool has built-in analytics before you buy. Look for dashboards that show MTTR trends over time, incident volume by service, incident breakdown by severity, post-mortem completion rate, and time-to-assemble team.

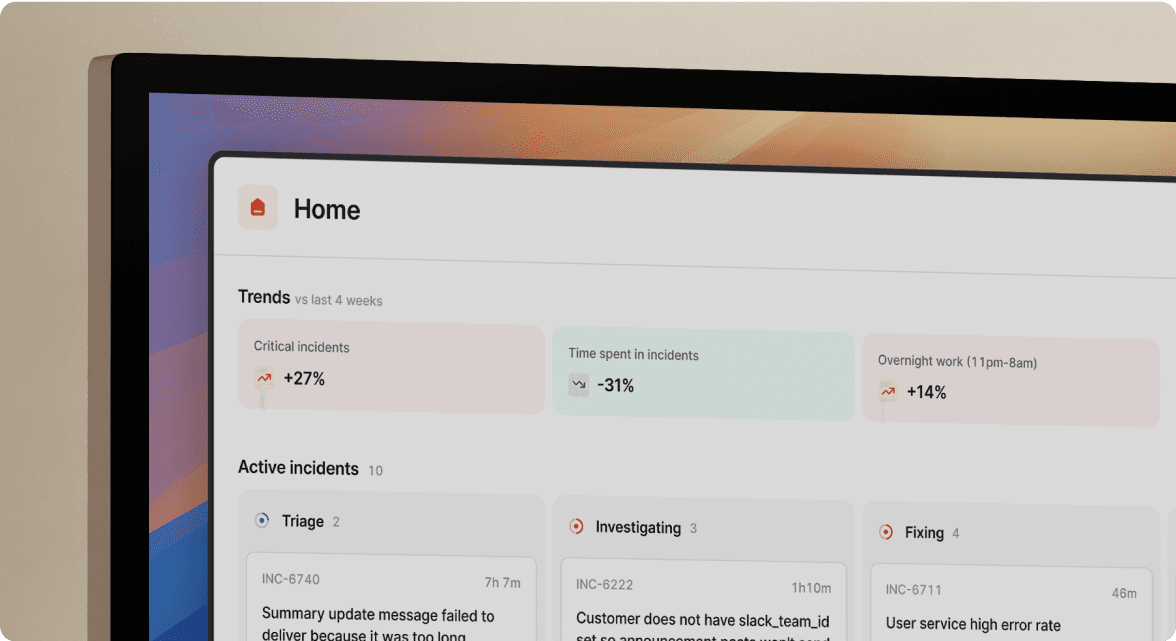

Our Insights dashboard provides these analytics out of the box, enabling you to track reliability improvements over time and justify incident management investments to executives with concrete data.

During your pilot, establish baseline metrics from your current process:

- Median MTTR for P1/P2 incidents (last 90 days)

- Time to publish post-mortem (incident resolution to published doc)

- Post-mortem completion rate (% of incidents with completed post-mortems)

- On-call engineer satisfaction (survey: "cognitive load during incidents" rated 1-10)

After 30 days with the new tool, measure the same metrics. If you don't see measurable improvement in at least two metrics, the tool isn't delivering value.
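The two-metric rule can be written down as a quick check. This is a rough sketch under stated assumptions: the metric names and sample values are hypothetical, "improvement" here means any change in the right direction, and we assume a lower cognitive load score is better. You may want to require a minimum change before counting a metric as improved.

```python
# Direction of improvement for each baseline metric (True = lower is better).
# Metric names are illustrative assumptions, not fields from any real tool.
LOWER_IS_BETTER = {
    "median_mttr_minutes": True,
    "time_to_publish_hours": True,
    "postmortem_completion_rate": False,
    "cognitive_load_score": True,  # assumes 1 = low load, 10 = high load
}


def improved_metrics(baseline, pilot):
    """Return the names of metrics that moved in the right direction."""
    improved = []
    for name, lower_is_better in LOWER_IS_BETTER.items():
        before, after = baseline[name], pilot[name]
        better = after < before if lower_is_better else after > before
        if better:
            improved.append(name)
    return improved


def delivers_value(baseline, pilot, required=2):
    """Apply the rule of thumb: at least `required` metrics must improve."""
    return len(improved_metrics(baseline, pilot)) >= required


# Made-up numbers for illustration only.
baseline = {
    "median_mttr_minutes": 95,
    "time_to_publish_hours": 96,
    "postmortem_completion_rate": 0.55,
    "cognitive_load_score": 7,
}
pilot = {
    "median_mttr_minutes": 80,
    "time_to_publish_hours": 20,
    "postmortem_completion_rate": 0.55,
    "cognitive_load_score": 7,
}

print(improved_metrics(baseline, pilot))
# ['median_mttr_minutes', 'time_to_publish_hours']
print(delivers_value(baseline, pilot))  # True
```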

"Feedback has been consistently positive with incident.io. It's quickly making a difference... it's the source of truth for incidents we've always needed." - Braedon G. on G2

Buffer saw a 70% reduction in critical incidents after implementing incident.io. That's measurable impact your executive team can understand.

How to pilot postmortem software effectively

Don't evaluate postmortem tools through vendor demos alone. Run a proof-of-value using real alerts. Scenario-based testing reveals how the tool performs under actual conditions.

Step 1: Define success criteria

Write down your must-haves, nice-to-haves, and blockers. For example:

- Must-have: Automatic timeline capture from Slack

- Nice-to-have: AI-generated post-mortem drafts

- Blocker: Requires more than 30 minutes of training for new users

Step 2: Run real incidents through the tool

Focus on core scenarios that reveal how the tool performs. Don't create fake test incidents. Wait for actual production issues and use the pilot tool to coordinate response. This surfaces real friction points that demos hide.

Step 3: Measure time-to-publish

Track how long it takes to publish a complete post-mortem after incident resolution. Compare this to your current baseline. If the new tool saves 60+ minutes per incident, that's 10+ engineer hours saved per month for a team handling 10 incidents monthly.
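The arithmetic behind that estimate is easy to rerun with your own numbers. A minimal sketch (the function name is ours, purely for illustration):

```python
def monthly_hours_saved(minutes_saved_per_incident: float, incidents_per_month: int) -> float:
    """Convert per-incident minutes saved into engineer hours saved per month."""
    return minutes_saved_per_incident * incidents_per_month / 60


print(monthly_hours_saved(60, 10))  # 10.0
```

Swap in your own pilot measurement for the first argument and your actual incident volume for the second.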

Step 4: Gather qualitative feedback

Ask pilot users to rate cognitive load during incidents (1-10 scale), ease of finding context (1-10), and likelihood to recommend the tool (1-10). Qualitative feedback catches usability issues that metrics miss.

"Unlike other similar incident management tools it's super easy to use. This means we spend less time figuring out how to use incident.io, and more time focused on responding to the incident." - Matt B. on G2

Don't just check feature boxes. Rate how well features actually work during a 3 AM incident when your brain is running at 60% capacity.

Why modern teams are choosing automated timeline capture

The incident management industry is shifting from manual documentation to automated reliability. Teams at high-growth companies can't afford to spend hours per incident on manual reconstruction work.

Modern postmortem tools eliminate this toil by capturing data automatically while the incident happens. We built the entire post-mortem workflow inside Slack. When you run an incident using /inc commands, every action auto-populates the timeline.

The competitive advantage isn't faster troubleshooting. Your engineers are already good at that. It's eliminating the 60-90 minutes of reconstruction work that happens after every incident, multiplied by 15-20 incidents per month. That's 15-30 hours of engineering time reclaimed monthly to work on proactive reliability improvements instead of documentation archaeology.

Don't buy a tool that gives you more homework. Buy a tool that does the homework for you.

Ready to see automated post-mortems in action? Try incident.io free and run your first incident in Slack. You'll see the auto-generated timeline and AI-drafted post-mortem within minutes of resolution.

For teams migrating from legacy tools, we've built migration guides for PagerDuty and Opsgenie to make the transition straightforward.

Key terminology

MTTR (Mean Time To Resolution): The average time it takes to recover from system failure, including the full outage time from when the system fails to when it becomes fully operational again.

Blameless post-mortem: A review process focused on systems and process improvement rather than individual error. Creating a blame-oriented culture risks sweeping incidents under the rug, leading to greater organizational risk.

Timeline capture: Automatic recording of incident events (alerts, messages, commands, decisions) in chronological order while the incident happens, eliminating the need for manual reconstruction after resolution.

Tool sprawl: Using multiple disconnected tools during incident response (PagerDuty for alerts, Slack for communication, Jira for tracking, Confluence for documentation), causing context switching and data fragmentation.
