Best Opsgenie alternatives for DevOps teams in 2026

Updated February 20, 2026

TL;DR: Opsgenie handles alerting but leaves coordination to you. Modern DevOps teams need platforms that automate the entire incident lifecycle. We built incident.io as a Slack-native platform that reduces MTTR by 37% (proven by Favor's engineering team), with teams achieving up to 80% reduction through automated coordination. PagerDuty offers enterprise alerting with 700+ integrations but premium pricing and legacy UX. FireHydrant excels for complex service catalogs with structured runbooks. Blameless focuses on SRE culture and SLO management. For teams prioritizing speed and adoption, our opinionated defaults work immediately with minimal setup.

You're paying a "coordination tax" on every incident. Fifteen minutes lost assembling the team, context switching between Opsgenie alerts, Slack threads, Jira tickets, and Google Docs for postmortems. At 15-20 incidents monthly, that's 5-10 engineer-hours burned on logistics instead of fixes.
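
As a rough check on that arithmetic, here is a minimal sketch. The `responders` parameter is an assumption: the figures above do not say how many engineers lose time on each incident, and the function name is illustrative only.

```python
def coordination_hours(incidents_per_month: int,
                       minutes_lost_per_incident: float,
                       responders: int = 1) -> float:
    """Engineer-hours per month spent on logistics rather than fixes.

    `responders` is a hypothetical input: the number of engineers who
    each lose `minutes_lost_per_incident` on every incident.
    """
    return incidents_per_month * minutes_lost_per_incident * responders / 60


# 20 incidents a month, 15 minutes lost each
one_responder = coordination_hours(20, 15)       # 5.0 hours
two_responders = coordination_hours(20, 15, 2)   # 10.0 hours
```

Under these assumptions, the 5-10 hour range corresponds to one or two engineers losing fifteen minutes on each of 20 monthly incidents.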

For DevOps teams running microservices on Kubernetes, the bottleneck isn't getting paged anymore. It's coordinating the response while juggling five browser tabs at 2 AM. This guide ranks the top alternatives that go beyond basic alerting to automate your entire incident response workflow.

Why teams are migrating from Opsgenie

Stagnation under Atlassian

Since Atlassian acquired Opsgenie, users report declining innovation and quality. Capterra reviews reveal consistent frustration with reporting and UI complexity. One user notes "Reporting was a nightmare, as the list of users and groups wasn't available, and creating incidents was complicated."

The cloud migration created new friction. PeerSpot reviews show users observing that "the Data Center version we used earlier was much more user-friendly" and "when we switched to the cloud version, many of the features have become a bit complicated." Core integrations degraded too: "Initially, Opsgenie had bidirectional integration with Jira Service Management, but that functionality has been scaled back."

The alerting vs. management gap

Opsgenie pages you. Then you're mostly on your own. While Opsgenie does offer basic incident timeline capture and Slack integration, users note gaps in the incident management workflow compared to modern alternatives. PeerSpot reviews consistently suggest "they can improve to be more than just alerting, to be used for incident management."

When an alert fires, you still manually coordinate response teams, manage context switching between tools, and piece together post-incident timelines. That coordination overhead adds 10-15 minutes of logistics before troubleshooting even starts.

The hidden cost of tool sprawl

The real cost isn't your Opsgenie subscription. It's the engineer time burned on coordination overhead. Favor's engineering team reduced MTTR by 37% after moving to a platform that consolidated incident management into a single Slack-native workflow. At 15-20 incidents monthly across a 50-person engineering team, eliminating coordination friction reclaims meaningful engineer time.

Performance issues compound the problem. Capterra reviews report that "the website is slow, and few UI glitches are very annoying" with "the lack of real-time dashboard of the alerts is a big miss." PeerSpot users note that "Opsgenie has issues handling a large number of alerts in a short period, suggesting room for improvement in its alert management capabilities."

5 criteria for evaluating incident management tools

When evaluating alternatives, focus on these five criteria that directly impact your team's MTTR and on-call experience:

1. Slack-native workflow

Can you run the entire incident without leaving Slack? This isn't about "Slack integration" where a tool posts notifications. It's about running your response with slash commands, role assignments, and timeline capture natively in your chat platform.

Research on Slack-native platforms draws the line clearly: "true chat-native platforms feel like using Slack, not like using a web tool that posts to Slack." The distinction matters at 3 AM when cognitive load is already maxed out. Tools that require browser tabs add friction exactly when you can't afford it.

2. On-call management

Is scheduling intuitive? Does it handle complex rotations, overrides, and calendar sync? Your on-call tool should feel familiar to anyone who's used Google Calendar, not require extensive configuration.

Look for features like iCal sync (so engineers see on-call shifts in their personal calendars), click-to-override scheduling, and multiple rotation layers. The faster a new engineer can understand who's on-call and when, the faster they contribute during incidents.
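
To make "rotations plus overrides" concrete, here is a minimal sketch of how a tool might answer "who is on call right now?". It is not any vendor's implementation: the `Override` type, the `on_call_at` function, and the weekly default shift are all assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Sequence


@dataclass
class Override:
    """A hypothetical one-off swap that takes priority over the rotation."""
    start: datetime
    end: datetime
    engineer: str


def on_call_at(at: datetime,
               rotation: Sequence[str],
               rotation_start: datetime,
               shift: timedelta = timedelta(weeks=1),
               overrides: Sequence[Override] = ()) -> str:
    """Return who is on call at `at`, checking overrides before the rotation."""
    for override in overrides:
        if override.start <= at < override.end:
            return override.engineer
    # Number of whole shifts elapsed since the rotation began.
    shifts_elapsed = (at - rotation_start) // shift
    return rotation[shifts_elapsed % len(rotation)]


rotation = ["ana", "ben", "chen"]
start = datetime(2026, 1, 5)
swap = Override(datetime(2026, 1, 13), datetime(2026, 1, 14), "chen")

scheduled = on_call_at(datetime(2026, 1, 13, 9), rotation, start)
covered = on_call_at(datetime(2026, 1, 13, 9), rotation, start, overrides=[swap])
```

Here `scheduled` is `"ben"` (second week of the rotation) and `covered` is `"chen"`, because the override wins. Multiple rotation layers could be modeled as several such rotations evaluated in priority order.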

3. Automation capabilities

Does the platform auto-create channels when alerts fire? Auto-assign incident commanders based on service ownership? Auto-populate Jira tickets with timeline data? Research on AI-powered incident management shows "teams using AI-powered incident management platforms report reducing MTTR by 17.8% on average, with leading implementations achieving up to 80% reductions through deep automation."

Automation eliminates the coordination tax. Instead of manually executing a 10-step runbook (create channel, page on-call, update status page, start timeline doc), workflows trigger automatically based on alert metadata.
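
The idea of triggering workflow steps from alert metadata can be sketched as a small rule function. The field names (`severity`, `service`, `customer_facing`) and the action strings are hypothetical; real platforms define their own alert schemas and workflow steps.

```python
def plan_actions(alert: dict) -> list[str]:
    """Map alert metadata to the runbook steps that would otherwise be manual.

    All keys and action names here are illustrative assumptions.
    """
    actions: list[str] = []
    if alert.get("severity") == "critical":
        service = alert.get("service", "unknown")
        actions.append(f"create_channel:#inc-{service}")
        actions.append(f"page_on_call:{service}")
        actions.append("start_timeline")
        # Only customer-facing services warrant a public status update.
        if alert.get("customer_facing"):
            actions.append("update_status_page")
    return actions


critical = plan_actions(
    {"severity": "critical", "service": "checkout", "customer_facing": True}
)
warning = plan_actions({"severity": "warning", "service": "checkout"})
```

In this sketch a critical, customer-facing alert yields all four steps, while a warning yields none and would stay a plain alert.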

4. Post-mortem efficiency

Does the tool auto-capture timelines to eliminate the 90-minute reconstruction tax? Post-mortems shouldn't require archaeology. Platforms with AI-powered post-mortem generation automatically capture Slack messages, role assignments, and commands in structured timelines, with some offering real-time transcription of incident calls.

5. Ecosystem integration

Does it play nice with Datadog, GitHub, Terraform, and your existing stack? You're not ripping out your monitoring tools. You need bidirectional sync: alerts from Datadog create incidents, incidents create Jira tickets, timeline data flows into Confluence.

Check for webhook support, API documentation, and Terraform providers. Teams running infrastructure-as-code need to manage their incident response configuration the same way they manage everything else.

Top 4 Opsgenie alternatives for DevOps teams

We evaluated platforms based on hands-on testing, customer case studies, and analysis of G2 reviews and third-party comparisons. Our methodology prioritized tools actually built for modern DevOps workflows, not legacy ITSM platforms retrofitted for SRE work.

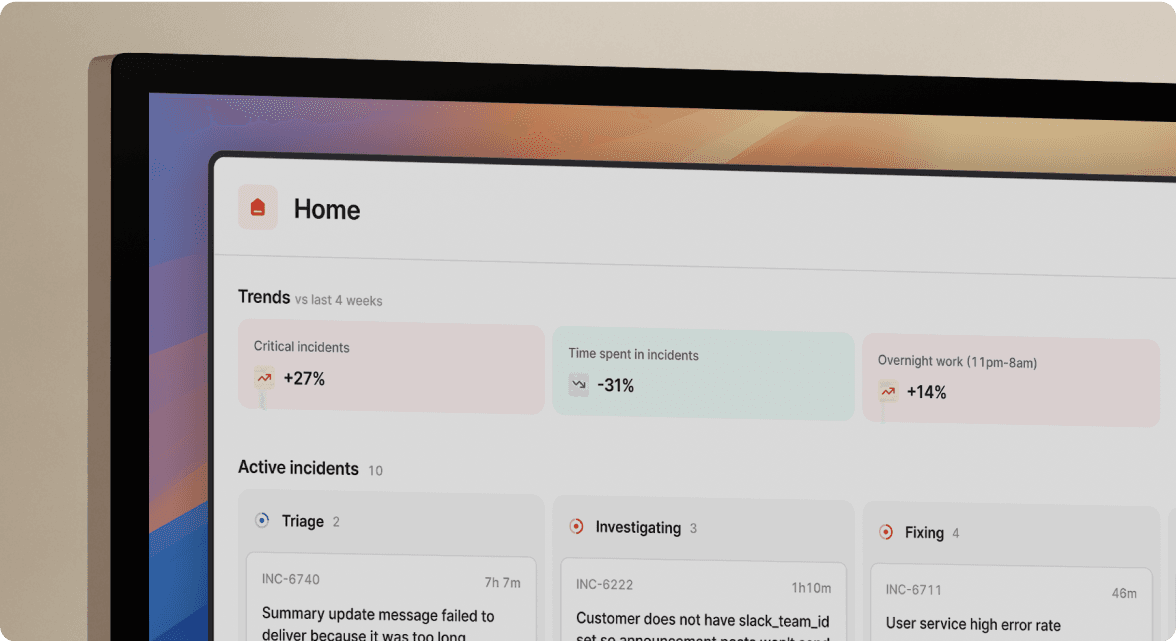

1. incident.io: Best for Slack-native automation and adoption

Why teams choose us: We unify on-call scheduling, incident response, status pages, and AI-powered post-mortems in Slack and Microsoft Teams with minimal training required.

Key differentiators:

On-call management: We offer flexible on-call scheduling with rotations, multiple layers, and calendar sync via iCal. Overrides work by clicking directly on the calendar. Our opinionated approach means teams can start running incidents quickly with sensible defaults.

Workflow automation: Configure triggers based on alert metadata. When Datadog fires a critical alert, we auto-create the Slack channel, page the on-call SRE, pull in the service owner from the Catalog, and start timeline capture. All before you type a single command.

AI capabilities: Our AI SRE "analyzes patterns from similar past incidents and suggests likely root causes based on recent deployments or configuration changes." During incidents, AI Scribe transcribes Zoom calls in real-time, eliminating the need for a dedicated note-taker.

DevOps angle: It feels like a CLI for incidents. Engineers already comfortable with /deploy and /release commands immediately understand /inc escalate @database-team and /inc severity high.

Proven results: Favor's engineering team reduced MTTR by 37% after implementing incident.io. One user notes: "Our platform saves us hours per incident when considering the need for us to write up the incident, root cause and actions, communicate it to wider stakeholders and overall reporting."

Trade-offs: We're opinionated with strong defaults. If you need infinite workflow customization, PagerDuty offers more flexibility but slower time-to-value. We're built for Slack and Microsoft Teams, so teams not using these chat platforms will find us less useful.

Pricing: Our Pro plan costs $25 per user per month for incident response, with on-call management as a $20 per user per month add-on ($45/user/month for full capabilities). The Team plan starts at $19 per user per month, with on-call at $12 per user per month. For a 50-engineer team on Pro with on-call, expect $27,000 annually.
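
The annual figure follows directly from the per-user prices above. A minimal sketch of the calculation, using only the list prices quoted in this section (the function name is illustrative, and discounts or contract terms are not modeled):

```python
def annual_cost(users: int,
                response_per_user_month: float,
                on_call_per_user_month: float = 0.0) -> float:
    """Annual list-price cost for a team, given monthly per-user prices."""
    return users * (response_per_user_month + on_call_per_user_month) * 12


pro_with_on_call = annual_cost(50, 25, 20)   # 50 * $45 * 12 = 27000
team_with_on_call = annual_cost(50, 19, 12)  # 50 * $31 * 12 = 18600
```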

2. PagerDuty: Best for complex enterprise alerting

Why it wins: PagerDuty built its reputation on battle-tested alerting, extensive integrations, and proven enterprise reliability. If you're running legacy on-prem infrastructure or need integrations with specialized systems, PagerDuty's integration library is comprehensive.

The scheduler and escalation policies are robust, handling multi-layer rotations with time-based and urgency-based routing. For enterprises with intricate compliance requirements, PagerDuty's ITIL-aligned workflows provide the structure auditors expect.

Trade-offs:

Cost: PagerDuty's advertised base pricing ($21-$40/user/month) excludes critical features: noise reduction, AI capabilities, and advanced runbooks all cost extra. Platform comparison research notes that this pricing opacity is driving teams away. For a 50-person team, annual costs quickly exceed $30,000 with necessary add-ons.

Legacy experience: TechRadar's evaluation from November 2024 observed "The main drawback we observed with PagerDuty is its cluttered user interface." The platform feels built for earlier-generation IT operations rather than modern SRE workflows.

Weak ChatOps: Comparative research notes "PagerDuty offers Slack integration but remains web-first," requiring engineers to toggle between Slack and browser tabs during active incidents.

Best for:

- Enterprises prioritizing alerting infrastructure over response coordination

- Teams with legacy on-prem systems requiring specialized integrations

- Organizations already heavily invested in the PagerDuty ecosystem who need gradual modernization paths

3. FireHydrant: Best for service catalog-heavy workflows

Why it wins: FireHydrant excels for teams with complex microservices architectures who need structured, checklist-driven processes. Comparative analysis notes "FireHydrant's advantage is the structured, checklist-driven approach that ensures no steps are missed... If you need to replicate complex workflows with maximum customization, FireHydrant's Runbook flexibility provides more structural control."

The service catalog capabilities help teams model dependencies and ownership relationships across dozens or hundreds of microservices. If understanding "which services depend on the user authentication service" is critical to your incident response, FireHydrant provides that visibility.

FireHydrant also offers AI-powered post-mortem capabilities, with users noting "The use of IA for post-mortem reports is great. It saves a lot of time and give great reports."

Trade-offs:

Web-first approach: While FireHydrant integrates with Slack, platform research notes it uses a web-first approach with customizable runbooks rather than being truly chat-native. The experience feels like "using a web tool that posts to Slack" rather than managing incidents entirely within Slack.

Complexity: The deep customization FireHydrant offers comes with setup overhead. Teams report longer time-to-value compared to opinionated platforms that work immediately with sensible defaults.

Best for:

- Platform engineering teams managing 50+ microservices with complex dependency graphs

- Organizations requiring elaborate compliance workflows with detailed checklists

- Teams comfortable investing setup time for precise workflow control

4. Blameless: Best for SRE reliability culture

Why it wins: According to SRE platform comparisons, Blameless focuses on SLO monitoring, error budget management, and building reliability culture. The platform helps mature SRE teams shift from reactive firefighting to proactive reliability engineering through metrics-driven decision making.

If your goal is establishing error budget policies, tracking SLO compliance, and generating monthly reliability reports for executives, Blameless provides the framework and tooling to support that cultural transformation.

Trade-offs:

While Blameless handles incident workflow, its primary focus is reliability metrics rather than real-time response coordination. Teams prioritizing faster MTTR and better on-call experience during active incidents will find platforms like incident.io more tactically helpful for the firefighting workflow.

Best for:

- Mature SRE organizations focused on reliability culture transformation

- Teams already tracking SLOs who need better error budget tooling

- Enterprises requiring detailed monthly reporting on reliability metrics

Comparison table: Features, pricing, and capabilities

| Feature | incident.io | PagerDuty | FireHydrant | Blameless |
|---|---|---|---|---|
| Slack-native | Yes, entire workflow in Slack | Partial, web-first with notifications | Partial, web-first with integration | Limited |
| On-call scheduling | Native (add-on), $20/user/month | Native, included in base | Native, included | Native, included |
| AI post-mortems | Yes, auto-drafted from timeline | Basic (extra cost for AI features) | Yes, AI-powered reports | SRE-focused learnings |
| Status pages | Included in all plans | Separate product (Statuspage) | Included | Limited |
| Automation | Workflow engine with integrations | AIOps available (extra cost) | Runbook automation | SLO-focused automation |
| Best for | Slack-native teams prioritizing speed | Enterprise legacy compatibility | Service catalog complexity | SRE culture transformation |

Pricing (50 users, estimated annual):

- incident.io: $27,000/year (Pro with on-call)

- PagerDuty: $30,000+/year with add-ons

- FireHydrant: Custom pricing

- Blameless: Custom pricing

How to plan your migration from Opsgenie

Parallel run strategy

Run both platforms simultaneously while you validate the new tool handles your alert volume and workflow requirements. Implementation research recommends you "connect your observability tools (Datadog, Prometheus, Grafana) and run the AI in shadow mode alongside your existing process."

Configure your monitoring tools to send alerts to both Opsgenie and your new platform via webhooks. Test that on-call rotations trigger correctly, escalation policies work as expected, and integrations with Jira and status pages function properly. We provide specific migration tools for Opsgenie users including schedule import utilities and configuration mapping guides.
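
Many monitoring tools can notify several destinations natively, which is usually the simplest route. Where a small relay is needed instead, the dual-delivery idea can be sketched as below. The endpoint URLs are placeholders, and `fan_out` and `http_post` are hypothetical helpers, not part of any vendor SDK.

```python
import json
import urllib.request
from typing import Callable


def http_post(url: str, body: bytes) -> int:
    """POST a JSON body and return the HTTP status code (standard library only)."""
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status


def fan_out(alert: dict,
            endpoints: dict[str, str],
            send: Callable[[str, bytes], int] = http_post) -> dict[str, bool]:
    """Deliver one alert to every endpoint; a failure on one never blocks the other."""
    body = json.dumps(alert).encode("utf-8")
    delivered: dict[str, bool] = {}
    for name, url in endpoints.items():
        try:
            delivered[name] = 200 <= send(url, body) < 300
        except OSError:  # urllib's URLError and HTTPError are OSError subclasses
            delivered[name] = False
    return delivered


# Placeholder URLs: substitute the webhook endpoints each platform gives you.
ENDPOINTS = {
    "opsgenie": "https://example.invalid/opsgenie-webhook",
    "candidate": "https://example.invalid/new-platform-webhook",
}
```

Logging the returned dictionary for each alert gives a simple record of whether both platforms received every page during the parallel run.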

Import and validate

Export your existing on-call schedules from Opsgenie. Most modern platforms provide import utilities that map users, rotations, and escalation policies. For incident.io specifically, our migration documentation walks through the import process step-by-step.

Verify that user mappings are correct, especially for engineers with different email addresses or usernames across systems. Test that override schedules carry over properly and that time zone handling is accurate.
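
A quick way to spot mapping problems is to compare the user list exported from the old tool with the one in the new platform. The sketch below assumes you have both lists as plain email addresses; how you export them depends on each tool, and `unmatched_users` is an illustrative name.

```python
from typing import Iterable


def unmatched_users(source_emails: Iterable[str],
                    target_emails: Iterable[str]) -> list[str]:
    """Return source users with no case-insensitive email match in the target."""
    known = {email.strip().lower() for email in target_emails}
    return sorted(
        email for email in source_emails if email.strip().lower() not in known
    )


missing = unmatched_users(
    ["Ana@Example.com ", "ben@example.com", "chen@corp.example"],
    ["ana@example.com", "chen@example.com"],
)
```

Here `missing` contains `ben@example.com` and `chen@corp.example`. The second is the case worth a manual look: the same engineer may exist under a different address in the new system.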

Update webhooks and alert routing

Update your monitoring tools (Datadog, Prometheus, CloudWatch) to send alerts to your new platform's webhook endpoints. Our Datadog migration guidance shows how teams can migrate monitors systematically without disrupting active alerting.

Keep Opsgenie active during the parallel-run phase while you validate alert volumes, test deduplication rules, and confirm that critical alerts route correctly. Implementation best practices recommend teams "have a small team (5-10 engineers) handle incidents using both platforms" during validation.
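
Validating alert volumes during the parallel run amounts to comparing what each platform received. A minimal sketch, assuming each alert carries some stable identifier (for example a deduplication key) that you can export from both sides; the function and field names are illustrative:

```python
from typing import Iterable


def parity_report(old_platform_keys: Iterable[str],
                  new_platform_keys: Iterable[str]) -> dict:
    """Compare the alert identifiers seen by each platform over the same window."""
    old, new = set(old_platform_keys), set(new_platform_keys)
    return {
        "matched": len(old & new),
        "missing_in_new": sorted(old - new),  # alerts the new platform never saw
        "extra_in_new": sorted(new - old),    # possible deduplication differences
    }


report = parity_report(
    ["cpu-high", "disk-full", "api-5xx"],
    ["disk-full", "api-5xx", "api-5xx-retry"],
)
```

In this example `report["missing_in_new"]` is `["cpu-high"]` and `report["extra_in_new"]` is `["api-5xx-retry"]`. Anything in the first list is a routing gap to fix before cutover.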

Full rollout

Rollout guidance suggests you "announce the full rollout to all engineering teams... Make clear to all teams: ad-hoc Slack threads and Google Docs are replaced. All new incidents go through incident.io."

Communicate the change clearly: "Starting Monday, all incidents use the new platform. Here's the quickstart guide. Questions go in #incident-management-help." Provide a clear escalation path for issues during the transition.

Choose speed over legacy alerting

Opsgenie handles alerting. Modern platforms handle the entire lifecycle. The choice comes down to what you prioritize: legacy alerting compatibility (PagerDuty), service catalog complexity (FireHydrant), reliability culture metrics (Blameless), or Slack-native speed with AI automation.

We built incident.io for teams prioritizing MTTR reduction and engineer adoption. Our opinionated approach eliminates the coordination tax that traditional alerting-focused tools leave unaddressed. Favor proved 37% MTTR reduction is achievable when you eliminate tool sprawl and automate response workflows.

The migration risk is lower than staying on a stagnant platform. Parallel-run strategies ensure zero downtime during the switch. Teams can start running incidents quickly with platforms that offer sensible defaults rather than requiring weeks of configuration.

Ready to eliminate the coordination tax? Schedule a demo and we'll run a real incident in Slack so you can see how it feels for your team.

Key terminology

On-call rotation: The schedule determining which engineer receives alerts during specific time periods, typically with weekly or daily handoffs between team members.

Escalation policy: Rules defining who gets paged if the primary on-call engineer doesn't acknowledge an alert within a specified timeframe, often including multiple layers and fallback contacts.

ChatOps: Managing operations and incidents directly within chat tools like Slack using slash commands and automated workflows rather than web interfaces.

MTTR (Mean Time To Resolution): The average time from when an incident is detected until it's fully resolved, a key metric for measuring incident response effectiveness.

Service catalog: A centralized registry of services, dependencies, and ownership information used to automatically page the right team when alerts fire for specific services.
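
Two of the terms above, escalation policy and MTTR, are easy to make concrete. The sketch below is illustrative only: the delay values, contact names, and function names are assumptions, not any platform's defaults.

```python
from datetime import datetime, timedelta
from typing import Optional, Sequence, Tuple


def escalation_target(levels: Sequence[Tuple[int, str]],
                      minutes_unacknowledged: int) -> Optional[str]:
    """Return the highest escalation level reached for an unacknowledged alert.

    `levels` is a list of (delay_in_minutes, contact) pairs.
    """
    target = None
    for delay, contact in sorted(levels):
        if minutes_unacknowledged >= delay:
            target = contact
    return target


def mttr(incidents: Sequence[Tuple[datetime, datetime]]) -> timedelta:
    """Mean time from detection to resolution over (detected, resolved) pairs."""
    durations = [resolved - detected for detected, resolved in incidents]
    return sum(durations, timedelta()) / len(durations)


policy = [(0, "primary"), (5, "secondary"), (15, "engineering-manager")]
paged = escalation_target(policy, 7)  # "secondary"

average = mttr([
    (datetime(2026, 1, 5, 2, 0), datetime(2026, 1, 5, 2, 30)),   # 30 minutes
    (datetime(2026, 1, 9, 14, 0), datetime(2026, 1, 9, 15, 0)),  # 60 minutes
])  # timedelta(minutes=45)
```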
