Optimizing incident response: Understanding DORA's Time to Restore Service metric

You know that feeling when you’re working against a deadline and your internet suddenly goes out? You know how much you hate it when that happens?

Yeah, same here.

And to really pile on, you know how frustrated you get when hours go by and your internet still isn’t working? And there’s radio silence from your provider?

Yeah, me too.

That scenario captures DORA’s Time to Restore Service metric to a tee. But there’s a lot more to this metric worth noting behind the scenes, along with plenty of actionable advice for reducing the time it takes to restore service after a disruption.

In this comprehensive guide, I’ll dive into the details of this crucial metric, explore its benchmarks, and provide practical tips to optimize your incident response time.

💭 This article is part of our series on DORA metrics. Links to the rest of the series are at the end of this article.

What is DORA’s Time to Restore Service metric?

In the context of DORA, the Time to Restore Service (TTRS) metric measures how long it takes a team to restore service after an incident or service disruption occurs. It represents the time between the detection of an incident and the complete restoration of normal service.
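Put as arithmetic, the definition is a subtraction per incident. The sketch below is a minimal illustration, assuming a hypothetical `Incident` record with `detected_at` and `restored_at` timestamps (real incident tools may name or capture these differently). Summarizing with the median across incidents is our choice, not something the definition prescribes; it keeps one marathon outage from dominating the number.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Incident:
    # Hypothetical incident record; field names are illustrative assumptions.
    name: str
    detected_at: datetime
    restored_at: datetime


def time_to_restore(incident: Incident) -> timedelta:
    """TTRS for one incident: from detection to full restoration of service."""
    if incident.restored_at < incident.detected_at:
        raise ValueError(f"{incident.name}: restored before it was detected")
    return incident.restored_at - incident.detected_at


def median_time_to_restore(incidents: list[Incident]) -> timedelta:
    """Median TTRS across incidents; less sensitive to one long outage than the mean."""
    seconds = [time_to_restore(i).total_seconds() for i in incidents]
    return timedelta(seconds=median(seconds))


incidents = [
    Incident("db-failover", datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 9, 40)),
    Incident("bad-deploy", datetime(2024, 3, 5, 14, 0), datetime(2024, 3, 5, 16, 30)),
    Incident("dns-outage", datetime(2024, 3, 9, 22, 0), datetime(2024, 3, 10, 1, 0)),
]
print(median_time_to_restore(incidents))  # prints 2:30:00
```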

By measuring TTRS, organizations can evaluate and analyze their incident management processes and identify any areas for improvement. In general, a lower TTRS indicates better incident response processes, which is typically associated with higher-performing teams and increased product reliability.

Benchmarking Time to Restore Service

To assess their incident response performance, teams can look to DORA's benchmarks for Time to Restore Service. These benchmarks serve as a yardstick for measuring success:

- Elite performers: Less than one hour

- High performers: Between one hour and one day

- Medium performers: Between one day and one week

- Low performers: More than one week
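If you want to see where a team's typical restore time lands, the tiers above map onto a simple lookup. This is a hypothetical helper: feeding it a median from your own incident data (rather than a single incident) is our assumption, and the resulting label is a rough signal, not a target.

```python
from datetime import timedelta

# Upper bounds for each tier, taken from the benchmark list. Treating an exact
# boundary (e.g. precisely one hour) as belonging to the slower tier is an
# assumption; the benchmark wording doesn't say which side it falls on.
TIERS = [
    (timedelta(hours=1), "Elite"),
    (timedelta(days=1), "High"),
    (timedelta(weeks=1), "Medium"),
]


def performance_tier(typical_ttrs: timedelta) -> str:
    """Map a typical (e.g. median) time to restore service onto the benchmark tiers."""
    for upper_bound, tier in TIERS:
        if typical_ttrs < upper_bound:
            return tier
    return "Low"


print(performance_tier(timedelta(hours=2, minutes=30)))  # prints High
```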

It’s essential to note that the time it takes to restore service will depend on a myriad of contextual factors, so blindly targeting these benchmarks tends not to be especially helpful. Things like the complexity of the systems involved, the people who are responding, and the degree of understanding across the domain all introduce a number of variables that can affect restoration times.

Nonetheless, as an enduring goal, we can all agree a lower time to restore service is better. And if we see trends or outliers, or if we have hard constraints like deploy times that push restoration times high, TTRS can be a helpful starting point for an investigation.
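When you go looking for trends or outliers, a couple of simple aggregations get you most of the way. This is a rough sketch, assuming you can export each incident's detection time and TTRS; grouping by calendar month and flagging anything over three times the overall median are arbitrary choices to tune, not DORA guidance.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import median

# Each record is (detected_at, time_to_restore) for one incident.
Record = tuple[datetime, timedelta]


def monthly_median_ttrs(records: list[Record]) -> dict[str, timedelta]:
    """Median TTRS per calendar month, to make trends visible."""
    by_month: dict[str, list[float]] = defaultdict(list)
    for detected_at, ttrs in records:
        by_month[detected_at.strftime("%Y-%m")].append(ttrs.total_seconds())
    return {month: timedelta(seconds=median(secs)) for month, secs in sorted(by_month.items())}


def outliers(records: list[Record], factor: float = 3.0) -> list[Record]:
    """Incidents that took more than `factor` times the overall median to restore."""
    overall = median(ttrs.total_seconds() for _, ttrs in records)
    return [(d, t) for d, t in records if t.total_seconds() > factor * overall]


records = [
    (datetime(2024, 1, 3), timedelta(minutes=30)),
    (datetime(2024, 1, 20), timedelta(minutes=50)),
    (datetime(2024, 2, 7), timedelta(hours=2)),
    (datetime(2024, 2, 15), timedelta(hours=1)),
    (datetime(2024, 2, 28), timedelta(hours=9)),
]
print(monthly_median_ttrs(records))
print(outliers(records))  # only the 9-hour incident exceeds 3x the 1-hour median
```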

How can I reduce my amount of downtime?

It’s all in the details!

To minimize your Time to Restore Service, you have to make your incident response process a real priority. With a half-baked or ad hoc approach to responding to incidents, not only will your downtime last longer than necessary, but your customers will also suffer as a result.

With that in mind, let's explore some practical tips to optimize (and reduce) your incident response times.

Hone your incident response plan

As mentioned above, it’s of utmost importance that you develop a dedicated, coherent, and efficient incident response plan: one that outlines clear roles, responsibilities, and escalation procedures.

With it, you should be able to confidently answer questions such as:

- What happens when an incident is declared?

- Who do higher-severity incidents get routed to?

- What’s your on-call process?

- Do you have an incident triage process?

- How do you escalate incidents?
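Writing the answers to the routing and escalation questions down as data, rather than tribal knowledge, makes them easy to review and test. Here's a hypothetical sketch: the severity names, rotation names, and acknowledgement timeouts are placeholders, and paging tools typically let you configure an equivalent policy rather than code it yourself.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class EscalationLevel:
    """One hypothetical step in an escalation chain."""
    target: str             # who gets paged at this level
    ack_timeout: timedelta  # how long they have to acknowledge before escalating


# Hypothetical policy: severities, rotation names, and timeouts are placeholders.
ESCALATION_POLICY: dict[str, list[EscalationLevel]] = {
    "critical": [
        EscalationLevel("primary-on-call", timedelta(minutes=5)),
        EscalationLevel("secondary-on-call", timedelta(minutes=10)),
        EscalationLevel("engineering-manager", timedelta(minutes=15)),
    ],
    "minor": [
        EscalationLevel("owning-team-queue", timedelta(hours=4)),
    ],
}


def who_to_page(severity: str, unacknowledged_for: timedelta) -> str:
    """Return the level responsible after a page has gone unacknowledged this long."""
    deadline = timedelta(0)
    levels = ESCALATION_POLICY[severity]
    for level in levels:
        deadline += level.ack_timeout  # cumulative time before moving past this level
        if unacknowledged_for < deadline:
            return level.target
    # Every window has expired: stay on the last level rather than paging nobody.
    return levels[-1].target


print(who_to_page("critical", timedelta(minutes=7)))  # prints secondary-on-call
```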

But remember, incident response doesn’t end once the incident is closed out. So make sure you have a well-thought-out post-incident process as well. This includes creating post-mortem document templates, holding blameless post-incident meetings, and prioritizing learning from your incidents. We’ll touch on this again later.

Be proactive about monitoring and alerting

Needless to say, every minute counts when it comes to incident response and cutting back on downtime. So if you aren’t already using a monitoring tool, such as Datadog, or an alerting tool, such as PagerDuty, you’ll want to start ASAP!

Tools like these can alert you as soon as a problem is detected and kick off your incident response process. This way, even small incidents are far less likely to slip through the cracks.
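Datadog and PagerDuty handle detection and paging for you, so the sketch below is not their API. It's a hypothetical, simplified version of the kind of rule a monitoring tool evaluates before it kicks off a response: fire only when the error rate stays above a threshold for several consecutive checks, similar in spirit to the `for` clause in Prometheus alerting rules. The 5% threshold and three-check window are illustrative assumptions.

```python
from collections import deque


class ErrorRateAlert:
    """Fire when the error rate stays above a threshold for N consecutive checks."""

    def __init__(self, threshold: float, consecutive_checks: int) -> None:
        self.threshold = threshold
        # Only the most recent N breach/no-breach results are kept.
        self.recent = deque(maxlen=consecutive_checks)

    def observe(self, errors: int, requests: int) -> bool:
        """Record one check interval and return True if the alert should fire."""
        rate = errors / requests if requests else 0.0
        self.recent.append(rate > self.threshold)
        # Require a full window of breaches so a single blip doesn't page anyone.
        return len(self.recent) == self.recent.maxlen and all(self.recent)


alert = ErrorRateAlert(threshold=0.05, consecutive_checks=3)
checks = [(1, 100), (8, 100), (9, 100), (12, 100)]  # (errors, requests) per interval
print([alert.observe(errors, requests) for errors, requests in checks])
# prints [False, False, False, True]
```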

Streamline your communication and collaboration processes

Your communication and collaboration will make or break your incident response processes, point blank. If either isn’t where it needs to be, expect your downtime to grow.

That’s why it’s important to prioritize how you communicate during incidents and how you collaborate to resolve them as well.

To start, each incident should get its own dedicated Slack channel, ideally named after a short description of the incident, for example, #inc-our-server-is-down. With a single channel per incident, all communication about that incident lives in a central hub, so nobody has to chase context across various DMs and channels.
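If you automate channel creation, turning the incident description into a channel name is a small but handy step. This sketch assumes an `inc-` prefix (matching the example channel name) and Slack's rules that channel names be lowercase, contain no spaces, and run to at most 80 characters; it also simplifies by replacing any non-ASCII character with a hyphen. The `#` is just how Slack displays channels, so it isn't part of the name itself.

```python
import re

# Assumption: Slack channel names are lowercase, contain no spaces, and are
# limited to 80 characters.
MAX_CHANNEL_NAME_LENGTH = 80


def incident_channel_name(description: str, prefix: str = "inc-") -> str:
    """Turn a free-text incident description into a channel name."""
    # Collapse every run of characters that isn't a lowercase ASCII letter or
    # digit into a single hyphen, then trim stray hyphens from the ends.
    slug = re.sub(r"[^a-z0-9]+", "-", description.lower()).strip("-")
    return (prefix + slug)[:MAX_CHANNEL_NAME_LENGTH].rstrip("-")


print(incident_channel_name("Our server is down!"))  # prints inc-our-server-is-down
```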

Second, you should use an incident response solution that makes it easy to assign roles. For example, responders should be able to quickly designate someone as incident lead or volunteer for the role themselves.

As a bonus, workflows that automate steps of the incident response process are a major plus here. For example, a workflow could notify folks of the “next step” in the response process, as you’ve laid it out.
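Under the hood, a “next step” notification can be as simple as an ordered checklist. The step names below are hypothetical placeholders for your own process, and actually posting the message to Slack is left out; the sketch only decides what the reminder should say.

```python
from typing import Optional

# Hypothetical response workflow; replace these with the steps you've defined.
RESPONSE_STEPS = [
    "Assign an incident lead",
    "Set the severity",
    "Post a status update for stakeholders",
    "Mitigate customer impact",
    "Confirm service is fully restored",
    "Schedule the post-incident debrief",
]


def next_step(completed: set[str]) -> Optional[str]:
    """Return the first workflow step not yet completed, or None if all are done."""
    for step in RESPONSE_STEPS:
        if step not in completed:
            return step
    return None


def reminder(channel: str, completed: set[str]) -> str:
    """Build the message a bot might post in the incident channel."""
    step = next_step(completed)
    if step is None:
        return f"#{channel}: all response steps are complete."
    return f"#{channel}: next step is '{step}'."


print(reminder("inc-our-server-is-down", {"Assign an incident lead"}))
# prints #inc-our-server-is-down: next step is 'Set the severity'.
```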

In the end, this all may seem like table stakes, but as I said earlier, every minute counts when responding to incidents. And it’s often the things that feel the most trivial that add up to the most downtime.

Don’t overlook your post-incident analysis!

Remember, incidents don’t end when they’re resolved. By prioritizing your post-incident workflow, including capturing meaningful learnings from incidents, you can improve your response processes and build more resilient products in the long run.

I mentioned a few of these earlier, but this could look like holding blameless incident debrief meetings and using a dedicated post-mortem document.

Additionally, any insights you can gather into the efficiency of your incident response can go a long way here. For example, try to determine which teams respond to the biggest share of incidents or who’s been paged the most over the last 3 months.
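Both questions come down to counting. This minimal sketch assumes you can export one responding team per incident and a list of (person, paged_at) pairs; it treats “the last 3 months” as a trailing 90-day window, which is our simplification.

```python
from collections import Counter
from datetime import datetime, timedelta


def incident_share_by_team(responding_teams: list[str]) -> list[tuple[str, float]]:
    """Share of incidents each team responded to, largest first (one team per incident)."""
    counts = Counter(responding_teams)
    total = sum(counts.values())
    return [(team, n / total) for team, n in counts.most_common()]


def most_paged(pages: list[tuple[str, datetime]], now: datetime, days: int = 90) -> list[tuple[str, int]]:
    """Pages per person within the trailing window, most-paged first."""
    cutoff = now - timedelta(days=days)
    return Counter(person for person, paged_at in pages if paged_at >= cutoff).most_common()


teams = ["payments", "platform", "payments", "search", "payments", "platform"]
pages = [
    ("ana", datetime(2024, 5, 1)),
    ("ben", datetime(2024, 5, 2)),
    ("ana", datetime(2024, 5, 20)),
    ("ana", datetime(2023, 12, 1)),  # older than 90 days, so ignored
]
print(incident_share_by_team(teams)[0])  # prints ('payments', 0.5)
print(most_paged(pages, now=datetime(2024, 6, 1)))  # prints [('ana', 2), ('ben', 1)]
```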

Both of these data points, and others, can give you meaningful insights into what’s working and what isn’t, what should be improved, and what’s a building block for future success.

How incident.io's Insights can help you cut back on downtime

Unfortunately, incidents will happen despite your best efforts, and with them will come some downtime. It's best to accept that as reality and do what you can to minimize it.

That said, everyone wants to find ways to cut back on downtime from incidents. But compiling the right data can be pretty complicated, especially for the critical incident response metrics that can actually help you reduce downtime.

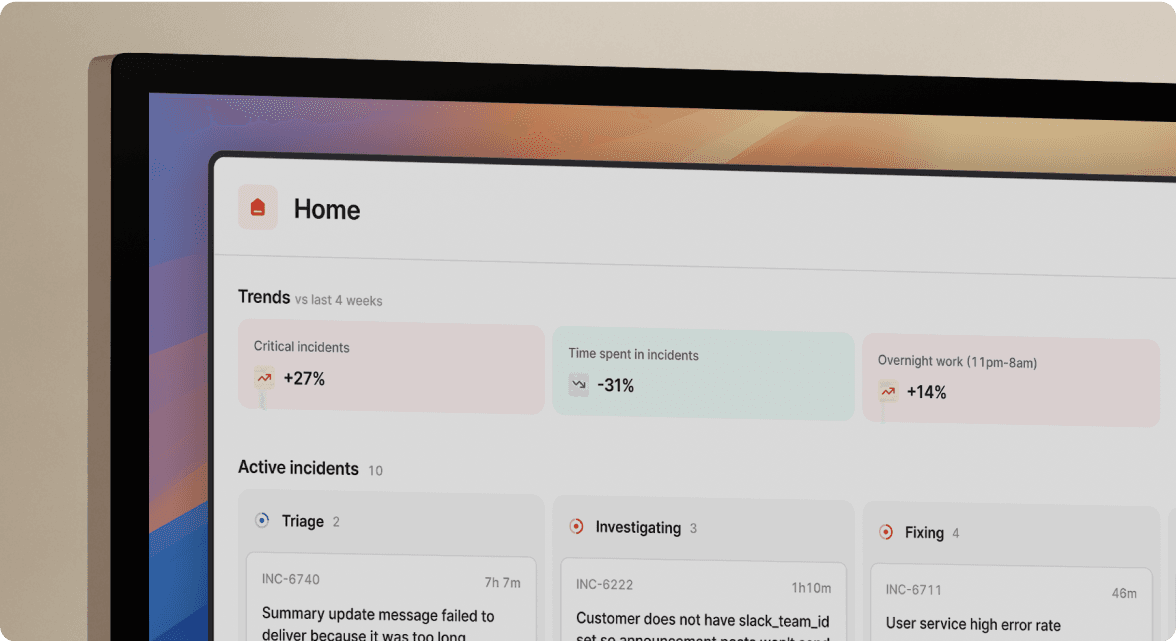

This is where incident.io's Insights dashboard comes in.

With it, team members and engineering leaders can glean dozens of insightful response metrics, allowing them to make meaningful changes to how they operate before, and during, incidents.

The best part? Many of these dashboards are pre-built, so you can jump right in and analyze key metrics without the overhead. But don't worry, you can also set up your own dashboards. Here are just a few of the metrics you can track right out of the box:

- Seasonality: To help you answer questions such as, "Do our incidents concentrate around certain days of the month?" or "What do we expect incident workload to be around the Christmas holidays?"

- Pager load: A measure of how your team is responding to on-call, helping to answer questions like "What's the trend in the number of times my team is being paged?" or "Are there only a few people who have been woken up out of hours?"

- Readiness: A set of data points that gives insight into questions like "How many people have recently responded to incidents involving this service and are likely to know how to handle future incidents?" and "Is our responder base growing or shrinking?"

- MTTX: Data points that can help you answer questions such as, "Is our mean-time-to-respond increasing?" or "Which of our services has the lowest time-to-detection?"

...and more.
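To give a feel for what sits behind metrics like these, here's a rough sketch computing three of them from raw timestamps. It is not how Insights calculates anything: the 09:00 to 18:00 working hours, the data shapes, and treating the first response as the end of the "respond" interval are all assumptions made for illustration.

```python
from collections import Counter
from datetime import datetime, time
from statistics import mean

# Assumed working hours; weekends always count as out of hours.
WORK_START, WORK_END = time(9, 0), time(18, 0)


def is_out_of_hours(ts: datetime) -> bool:
    """True on weekends, or on weekdays outside the assumed working hours."""
    return ts.weekday() >= 5 or not (WORK_START <= ts.time() < WORK_END)


def out_of_hours_pages(pages: list[tuple[str, datetime]]) -> Counter:
    """Pager load: how many times each person was paged out of hours."""
    return Counter(person for person, paged_at in pages if is_out_of_hours(paged_at))


def incidents_by_weekday(reported: list[datetime]) -> Counter:
    """Seasonality: incident counts per day of the week."""
    return Counter(ts.strftime("%A") for ts in reported)


def mean_minutes_to_respond(pairs: list[tuple[datetime, datetime]]) -> float:
    """A mean-time-to-respond figure from (reported_at, first_response_at) pairs."""
    return mean((responded - reported).total_seconds() / 60 for reported, responded in pairs)


pages = [
    ("ana", datetime(2024, 3, 4, 3, 15)),  # Monday, 03:15
    ("ana", datetime(2024, 3, 4, 10, 0)),  # Monday, 10:00
    ("ben", datetime(2024, 3, 9, 12, 0)),  # Saturday, noon
]
print(out_of_hours_pages(pages))  # prints Counter({'ana': 1, 'ben': 1})
```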

If you're interested in seeing how Insights works and how its metrics can fit alongside Time to Restore Service, be sure to contact us to schedule a custom demo.

More from the DevOps Research and Assessment (DORA) guide

What are DORA metrics and why should you care about them?

Google's DORA metrics can help organizations create better products, build stronger teams, and improve resilience long-term.

Luis Gonzalez

Development efficiency: Understanding DORA's Mean Lead Time for Changes

By using DORA's Mean Lead Time for Changes metric, organizations can increase their speed of iteration

Luis Gonzalez

Shipping at speed: Using DORA's Deployment Frequency to measure your ability to deliver customer value

By using DORA's deployment frequency metric, organizations can improve customer impact and product reliability.

Luis Gonzalez
