How to migrate from PagerDuty: Step-by-step checklist and timeline

Updated April 24, 2025

TLDR: Most engineering teams delay moving off PagerDuty not because they love the tool, but because they fear dropping a critical alert mid-cutover. A phased migration removes that risk: audit your current configuration, run a parallel test where both platforms receive identical alerts, then decommission PagerDuty after confirming zero missed pages. We automate the heaviest steps, including guided schedule and escalation policy imports, Datadog monitor migration, and a Python script for historical incident data transfer.

The hardest part of replacing PagerDuty isn't the API integration but untangling years of undocumented on-call overrides, dead escalation paths pointing to engineers who left two years ago, and custom webhooks no one remembers building. Once you've audited all of that, the actual cutover is straightforward.

This guide gives you a concrete 14-day plan, specific go/no-go checkpoints, and step-by-step instructions for running a zero-downtime parallel cutover. By the end, your team manages incidents where they already work: in Slack, without opening a single browser tab.

Is PagerDuty impeding your MTTR goals?

PagerDuty built a solid alerting foundation. The problem is everything that happens after the alert fires: coordination, timeline capture, status page updates, and post-mortems all require separate tools or expensive add-ons. For a 120-person engineering team, that sprawl adds up fast.

Assessing PagerDuty's true cost of ownership

PagerDuty's pricing combines a per-user base cost with separate fees for add-ons. For comparison purposes, a typical configuration is the Business plan plus add-ons such as AIOps and status pages, which add significant cost on top of the base per-seat price.

For a 120-person team, the annual Total Cost of Ownership (TCO) compares like this:

| Platform | Annual total (estimate for 120 users) |
|---|---|
| PagerDuty Business + typical add-ons | $59,040/yr base (120 users × $41/user/mo annual). AIOps, Status Pages, and AI add-ons priced separately. Contact PagerDuty for total. |
| incident.io Pro (incl. on-call) | $64,800/yr (120 users × $45/user/month × 12 months) |

Our Pro plan costs $45/user/month ($25 base + $20 on-call add-on) and includes on-call scheduling, AI post-mortems, and Slack-native coordination at one combined price. A public status page is included on the Pro plan.
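
The arithmetic behind those annual figures can be reproduced in a few lines. This is a minimal sketch using only the list prices quoted above; the function name is illustrative, and the PagerDuty figure deliberately excludes add-ons because their pricing is not stated here.

```python
def annual_cost(users: int, per_user_monthly: float) -> float:
    """Annual seat cost: users x monthly per-user price x 12 months."""
    return users * per_user_monthly * 12


TEAM_SIZE = 120

# PagerDuty Business base price only; add-ons are priced separately.
pagerduty_base = annual_cost(TEAM_SIZE, 41)
# incident.io Pro: $25 base + $20 on-call add-on per user per month.
incident_io_pro = annual_cost(TEAM_SIZE, 25 + 20)

print(f"PagerDuty Business (base only): ${pagerduty_base:,.0f}/yr")
print(f"incident.io Pro (incl. on-call): ${incident_io_pro:,.0f}/yr")
```

Swap in your own seat count and negotiated prices, and add each PagerDuty add-on as its own line item once you have a quote.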

High cognitive load during incidents

PagerDuty is a web-first tool with a Slack integration bolted on. During a P0, your engineers context-switch between PagerDuty's web UI, ad-hoc Slack threads, Datadog metrics, a Jira ticket, and a Confluence doc before anyone starts troubleshooting. We built the entire incident lifecycle inside Slack and Microsoft Teams so that doesn't happen. When a Datadog alert fires, we auto-create a dedicated channel, page on-call responders, and start capturing a live timeline. Everything from /inc escalate to /inc resolve happens in chat.

Before you start: migration readiness check

Treat this migration like any other engineering deployment. Before you touch a single API key, run a clear audit of what you're migrating, confirm who's approving it, and define what success looks like.

Audit your current PagerDuty setup

PagerDuty configurations accumulate technical debt fast. Run this audit before Day 1:

- Services: List every PagerDuty service and flag any with no recent incidents or no active owner.

- Escalation policies: Map every tier including primary, secondary, and fallback. Identify policies with no valid fallback.

- On-call schedules: Export all schedules and verify timezone settings. Note any active overrides.

- Integrations: Document every alert source (Datadog, Prometheus, AWS CloudWatch, etc.) and the webhook or API key it uses.

- Custom webhooks: Find any custom scripts hitting PagerDuty's REST API. These need manual recreation.
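
The service part of this audit can be scripted once you have a JSON export of your services. The sketch below is one possible approach, not an official tool: it assumes each record carries the last_incident_timestamp and teams fields as returned by PagerDuty's GET /services endpoint, and it treats "no team attached" as a stand-in for "no active owner", which is an assumption you may want to refine. The 90-day idle threshold and the sample data are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def flag_services(services, now=None, max_idle_days=90):
    """Return (service name, reasons) pairs for services worth reviewing."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_idle_days)
    flagged = []
    for svc in services:
        reasons = []
        last = svc.get("last_incident_timestamp")
        # Timestamps are ISO 8601; normalize a trailing "Z" for fromisoformat.
        if last is None or datetime.fromisoformat(last.replace("Z", "+00:00")) < cutoff:
            reasons.append("no recent incidents")
        # Assumption: a service with no team attached has no active owner.
        if not svc.get("teams"):
            reasons.append("no owning team")
        if reasons:
            flagged.append((svc["name"], reasons))
    return flagged


# Hypothetical sample shaped like records from GET /services.
sample = [
    {"name": "checkout-api", "last_incident_timestamp": "2025-04-01T09:30:00Z", "teams": [{"id": "T1"}]},
    {"name": "legacy-cron", "last_incident_timestamp": "2023-01-15T12:00:00Z", "teams": []},
    {"name": "internal-wiki", "last_incident_timestamp": None, "teams": [{"id": "T2"}]},
]
report = flag_services(sample, now=datetime(2025, 4, 24, tzinfo=timezone.utc))
for name, reasons in report:
    print(f"{name}: {', '.join(reasons)}")
```

Flagged services are candidates for retirement rather than migration, which shrinks the scope of everything that follows.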

Define migration goals and KPIs

Set pre-migration baselines before you touch anything so you can prove ROI afterward. Capture from PagerDuty:

- MTTR (mean time to resolve): median incident resolution time this quarter.

- MTTA (mean time to acknowledge): average time from alert trigger to first acknowledgment.

- Alert volume: incidents per service per week.

- Post-mortem completion rate: percentage of incidents that get a written post-mortem.

- Current annual tool spend: PagerDuty base cost plus any add-ons.
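
If you export incident records before the migration, the first two baselines can be computed directly. This is a rough sketch under stated assumptions: the field names triggered_at, acknowledged_at, and resolved_at are hypothetical placeholders for whatever your export contains, and the three sample incidents are invented for illustration. Following the definitions above, it uses the mean for acknowledgment time and the median for resolution time.

```python
from datetime import datetime
from statistics import mean, median


def minutes_between(start, end):
    """Elapsed minutes between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60


def baseline(incidents):
    """MTTA as mean minutes to acknowledge, MTTR as median minutes to resolve."""
    ack = [
        minutes_between(i["triggered_at"], i["acknowledged_at"])
        for i in incidents if i.get("acknowledged_at")
    ]
    res = [
        minutes_between(i["triggered_at"], i["resolved_at"])
        for i in incidents if i.get("resolved_at")
    ]
    return {"mtta_minutes": mean(ack), "mttr_minutes": median(res)}


# Hypothetical incident export; field names are placeholders.
incidents = [
    {"triggered_at": "2025-04-01T10:00:00+00:00", "acknowledged_at": "2025-04-01T10:04:00+00:00", "resolved_at": "2025-04-01T10:40:00+00:00"},
    {"triggered_at": "2025-04-03T02:00:00+00:00", "acknowledged_at": "2025-04-03T02:02:00+00:00", "resolved_at": "2025-04-03T02:30:00+00:00"},
    {"triggered_at": "2025-04-09T15:00:00+00:00", "acknowledged_at": "2025-04-09T15:06:00+00:00", "resolved_at": "2025-04-09T16:30:00+00:00"},
]
metrics = baseline(incidents)
print(metrics)
```

Record the numbers alongside the date range they cover so the post-migration comparison uses a window of the same length.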

Who approves your PagerDuty exit?

For a 120-person team, budget approval typically involves three stakeholders:

- CTO or VP Engineering: owns the tool decision and MTTR goals.

- CISO: needs to verify compliance and data residency. We hold SOC 2 Type II certification, maintain GDPR compliance, use AES-256 encryption at rest, and offer SAML/SCIM on Enterprise.

- CFO/Finance: needs the all-in TCO comparison. Use the table above.

Your 14-day PagerDuty migration checklist

Days 1-3: audit and export

- Complete the PagerDuty configuration audit (services, escalation policies, schedules, integrations, custom webhooks).

- Export on-call schedules from PagerDuty: navigate to People → Schedules, select the schedule, and choose Export to download schedule data. Export formats are iCal/.ics and WebCal feeds only, covering approximately one month of historical data plus up to six months of upcoming on-call. For CSV or JSON output, use PagerDuty's REST API.

- Use PagerDuty's REST API to export services and escalation policies for configurations not available via the UI.

- Document all custom webhook endpoints and API integrations.

- Set MTTR, MTTA, and post-mortem completion baselines.

- Go/No-Go: Proceed only when the audit covers all active services and escalation policies.

Days 4-7: configure your new on-call

- Connect your PagerDuty integration in incident.io via Settings → Integrations.

- Navigate to On-call → Escalation paths. You'll see your PagerDuty escalation policies listed by import status. Select the ones ready to import and click Import. Our migration guide walks you through importing your PagerDuty configuration into the Catalog.

- Resolve any user-matching errors. The most common issue is when we can't match a PagerDuty user to an incident.io account.

- Enable our schedule mirroring feature if needed during the parallel phase.

- Reconfigure Datadog monitors to point to incident.io.

- Go/No-Go: The majority of on-call responders have configured notification methods (app, phone, SMS) in incident.io.
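
You can anticipate user-matching errors before running the import by comparing the two user lists yourself. The sketch below assumes accounts are matched on email address, which is an assumption for illustration rather than a description of how the import resolves users; the function name and sample data are hypothetical.

```python
def unmatched_users(pagerduty_users, incident_io_emails):
    """Return PagerDuty users whose email has no counterpart in incident.io."""
    # Normalize case and whitespace so trivial differences do not count as mismatches.
    known = {email.strip().lower() for email in incident_io_emails}
    return [u for u in pagerduty_users if u["email"].strip().lower() not in known]


# Hypothetical data for illustration.
pd_users = [
    {"name": "Ana", "email": "Ana@example.com"},
    {"name": "Bo", "email": "bo@example.com"},
    {"name": "Cy", "email": "cy@old-domain.example"},
]
iio_emails = ["ana@example.com", "bo@example.com ", "cy@example.com"]

to_fix = unmatched_users(pd_users, iio_emails)
for user in to_fix:
    print(f"No incident.io account found for {user['name']} <{user['email']}>")
```

Anyone on the resulting list either needs an invitation or has an address that changed since their PagerDuty account was created.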

Days 8-11: launch pilot and test

- Deploy incident.io to a pilot group from your Platform or API team.

- Route non-critical alerts to incident.io only. Keep PagerDuty live for P0/P1 alerts.

- Run 3+ test incidents through incident.io in Slack. Validate channel auto-creation, on-call paging, timeline capture, and /inc resolve triggering a status page update.

- Verify that every on-call responder has configured notification methods (app push, SMS, or phone call) and can receive pages from incident.io.

- Go/No-Go: Pilot team successfully handles multiple incidents with no missed pages and acceptable response times.

Days 12-14: parallel run and go-live

- Configure Datadog to dual-route all alerts to both PagerDuty and incident.io simultaneously (see the "Configure alert routing" section below).

- Run the parallel system for at least 48 hours (longer for complex environments) to validate both platforms receive and route every alert correctly.

- When ready: if zero alerts are missed in incident.io, disable PagerDuty webhooks from all Datadog monitors. Keep read-only PagerDuty access active for a period as a fallback.

- Announce cutover to the full engineering org via a Slack message in your #engineering channel.

- Go/No-Go: All alerts correctly received and routed in both systems for the full parallel run window.

Build on-call schedules and escalation paths

Our import process lets you select and import escalation policies from PagerDuty into incident.io, but a few steps require manual attention.

Export on-call schedules from PagerDuty

- Go to People → Schedules in PagerDuty.

- Next to the schedule you want, select Export and choose from the available formats: iCal/.ics file, iCal URL, or WebCal feed.

- These export formats cover approximately one month of historical data plus up to six months of upcoming on-call. For CSV or JSON, use PagerDuty's REST API (GET /schedules).

- For services and escalation policies, use PagerDuty's REST API (GET /services and GET /escalation_policies) to export programmatically.
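
The API export can be sketched with the standard library alone. This example assumes PagerDuty's classic offset pagination (limit and offset parameters, with a more flag in the response) and token authentication via the Authorization header; check both against the current API reference before relying on it. The function names are illustrative, and the page fetcher is passed in as a parameter so the paging loop can be exercised without network access.

```python
import json
import urllib.parse
import urllib.request

API_BASE = "https://api.pagerduty.com"


def http_fetch(path, params, api_key):
    """GET one page from the REST API (needs network and a read-only API key)."""
    url = f"{API_BASE}{path}?{urllib.parse.urlencode(params)}"
    request = urllib.request.Request(url, headers={
        "Authorization": f"Token token={api_key}",
        "Accept": "application/vnd.pagerduty+json;version=2",
    })
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def export_all(path, key, fetch_page, page_size=100):
    """Collect every record from a paginated list endpoint."""
    records, offset = [], 0
    while True:
        page = fetch_page(path, {"limit": page_size, "offset": offset})
        records.extend(page[key])
        # Stop when the API reports that no further pages exist.
        if not page.get("more"):
            return records
        offset += page_size
```

A call such as export_all("/escalation_policies", "escalation_policies", lambda p, q: http_fetch(p, q, api_key)) returns a list you can write to disk with json.dump and keep as the audit record.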

Note that some PagerDuty features aren't directly replicable in our on-call model. If your escalation policies use advanced Event Orchestration rules for automated alert routing, document those separately so you can evaluate which incident.io Workflows cover the equivalent behavior.

Migrate PagerDuty escalations to incident.io

Our escalation paths let you define who to notify at each level, how long to wait before escalating, and how many times to retry if an alert goes unacknowledged. You can target schedules, individuals, or Slack channels at each tier.

When mapping from PagerDuty's escalation policies, pay particular attention to your fallback tiers. A missing final escalation layer is a leading cause of dropped alerts during migration. Don't skip this check.
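
The fallback check lends itself to a small validation script. The sketch below works on a hypothetical, simplified representation of an escalation path (a name plus a list of tiers, each with typed targets); it is not the export format of either platform, so you would map your exported data into this shape first.

```python
def validate_path(path, active_users, active_schedules):
    """Return a list of problems found in one escalation path."""
    problems = []
    tiers = path.get("tiers", [])
    if len(tiers) < 2:
        problems.append("no fallback tier")
    for number, tier in enumerate(tiers, start=1):
        valid = [
            target for target in tier.get("targets", [])
            if (target["type"] == "user" and target["id"] in active_users)
            or (target["type"] == "schedule" and target["id"] in active_schedules)
        ]
        # A tier whose targets have all left or been deleted pages nobody.
        if not valid:
            problems.append(f"tier {number} has no valid active target")
    return problems


# Hypothetical data: U7 has left the company, S1 is a live schedule.
active_users = {"U1", "U2"}
active_schedules = {"S1"}
payments = {
    "name": "Payments",
    "tiers": [
        {"targets": [{"type": "schedule", "id": "S1"}]},
        {"targets": [{"type": "user", "id": "U7"}]},
    ],
}
search = {"name": "Search", "tiers": [{"targets": [{"type": "user", "id": "U1"}]}]}

for path in (payments, search):
    print(path["name"], validate_path(path, active_users, active_schedules))
```

Run it against every path before cutover and treat any non-empty result as a blocker.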

Configure alert routing for a zero-downtime cutover

The parallel run is the single most important risk mitigation step in the entire migration. Skipping it is how teams drop P0 alerts.

Run a parallel test with Datadog

Datadog webhooks let you send alerts to multiple external systems simultaneously. Here's how to set up dual-routing:

- In Datadog, navigate to Integrations → Webhooks → + New.

- Create Webhook 1 pointing to your PagerDuty integration endpoint.

- Create Webhook 2 pointing to your incident.io webhook URL (found under Settings → Integrations → Datadog in incident.io).

- In each Datadog monitor's notification body, add both: @webhook-pagerduty @webhook-incidentio.

- Monitor both platforms for a sufficient period. Every alert that fires in PagerDuty must also appear in incident.io.

For Prometheus-based alerting, apply the same dual-routing logic using Alertmanager's receiver configuration to target both endpoints in parallel.
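
With more than a handful of monitors, editing notification bodies by hand invites mistakes. The helper below is a minimal sketch of the text transformation only: it appends whichever of the two webhook handles is missing and leaves the message untouched if both are present. How you read and write monitor messages (Datadog API, Terraform, or the UI) is left out, and the function name is illustrative.

```python
DUAL_HANDLES = ("@webhook-pagerduty", "@webhook-incidentio")


def ensure_handles(message, handles=DUAL_HANDLES):
    """Append any missing webhook handles to a monitor message (idempotent)."""
    # Handles are matched as whitespace-separated tokens.
    present = set(message.split())
    missing = [handle for handle in handles if handle not in present]
    if not missing:
        return message
    return message.rstrip() + "\n" + " ".join(missing)


original = "CPU high on {{host.name}} @webhook-pagerduty"
updated = ensure_handles(original)
print(updated)
```

Because the function is idempotent, you can rerun it across every monitor during the parallel window without producing duplicate handles.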

Verify alert delivery and deduplication

After the parallel run begins, confirm that:

- Alert deduplication is working correctly (no duplicate channels for the same incident).

- Severity mapping is consistent between platforms.

- On-call responders receive pages from incident.io within the same SLA window as PagerDuty.
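
The first two checks can be automated by comparing alert exports from both platforms. This is a sketch under assumptions: it presumes you can export alerts from each side as records sharing a common dedup_key and a normalized severity field, both of which are hypothetical names here, and the sample data is invented.

```python
from collections import Counter


def compare_parallel_run(pagerduty_alerts, incident_io_alerts):
    """Report alerts that are missing, duplicated, or mismatched in severity."""
    source = {a["dedup_key"]: a for a in pagerduty_alerts}
    target = {a["dedup_key"]: a for a in incident_io_alerts}
    counts = Counter(a["dedup_key"] for a in incident_io_alerts)
    return {
        "missing": sorted(k for k in source if k not in target),
        "duplicated": sorted(k for k, n in counts.items() if n > 1),
        "severity_mismatch": sorted(
            k for k in source
            if k in target and source[k]["severity"] != target[k]["severity"]
        ),
    }


# Hypothetical exports from the parallel run window.
pd_alerts = [
    {"dedup_key": "db-latency", "severity": "critical"},
    {"dedup_key": "disk-full", "severity": "warning"},
    {"dedup_key": "queue-depth", "severity": "critical"},
]
iio_alerts = [
    {"dedup_key": "db-latency", "severity": "critical"},
    {"dedup_key": "disk-full", "severity": "critical"},
    {"dedup_key": "disk-full", "severity": "critical"},
]
result = compare_parallel_run(pd_alerts, iio_alerts)
print(result)
```

An empty "missing" list across the whole window is the signal the go/no-go decision depends on; the other two lists point at deduplication and severity mapping to fix first.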

Onboard engineers in days, not weeks

This is where we have a structural advantage over PagerDuty. Because the entire workflow lives in Slack, there's no new interface for engineers to learn.

On-call incident commands in Slack

Our on-call shortcuts cheatsheet covers the commands your engineers need. Key commands include:

- /inc in any non-incident channel: opens the incident creation form.

- /inc inside an #inc-... channel: shows a menu of all available actions on that incident.

- /inc cover in Slack: creates an on-call override without opening the web UI.

You can also @incident in any incident channel to draft updates, create follow-ups, or pause an incident through natural language instead of commands.

Keep the onboarding simple and focused:

- Join incident.io and configure notification preferences (app push, SMS, phone call).

- Run a test incident in the #sandbox channel using /inc.

- Practice the three core commands: /inc, /inc escalate, /inc resolve.

- Shadow one live incident before taking your first solo on-call shift.

- Confirm you're reachable via all notification methods (app push, SMS, and phone call) before your first on-call shift.

Engineers who have migrated from other platforms describe the onboarding as straightforward:

"Moved from Statuspage and PagerDuty and didn't look back... For non-admin users, incident.io is extremely user friendly, and you can learn as you go." - Rodrigo Q. on G2

Common PagerDuty migration mistakes and how to avoid them

Escalation mapping errors: The most dangerous omission is a missing fallback tier. Before cutover, manually walk every escalation path end-to-end and confirm each tier resolves to a valid, active user or schedule. Also check for timezone mismatches. Schedules with timezone-specific handoffs can silently misconfigure if incident.io defaults to a different timezone, so set all schedules to UTC first, then convert to local time.

Skipping the parallel run: A hard cutover disabling PagerDuty before validating incident.io is how teams drop P0 alerts at 2 AM. Running both platforms in parallel for a period lets you validate alert routing before committing fully.

Losing undocumented configurations: Custom Event Orchestration rules and manual API webhooks often live only in one engineer's memory. Before the audit closes, interview your most senior on-call engineers and ask: "What custom rules did you add that aren't in the official docs?" These need to be evaluated against incident.io Workflows before cutover.

Neglecting cutover communication: When you flip the switch, support, execs, and customer success teams need to know where the status page lives and what #inc channels look like. Send a Slack announcement on Day 14 and update your incident response runbook with the new channel naming convention.

Migration rollback plan

Keep this ready before the parallel run. If critical issues surface, you need to revert quickly.

Step 1: Announce rollback decision in your migration coordination Slack channel. Notify the on-call rotation immediately.

Step 2: In Datadog, remove @webhook-incidentio from all monitor notification bodies. Re-enable @webhook-pagerduty. Fire a canary alert on a non-critical service to confirm PagerDuty is receiving alerts.

Step 3: Confirm incident.io shows no new incoming alerts. Verify the on-call team is receiving PagerDuty pages normally.

Step 4: Keep incident.io fully configured for at least one billing cycle. Do not delete schedules or escalation paths. Schedule a retrospective within 30-60 days to identify the root cause before attempting the migration again.

Critical rule: Maintain read-only PagerDuty access for a period post-cutover even after a successful migration. You may need historical data during incident post-mortems.
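
Step 2 is easier to execute under pressure if the text change is scripted ahead of time. The sketch below covers only the message rewrite: it strips the incident.io handle and makes sure the PagerDuty handle is present. Applying the result to your monitors is left to whatever tooling you already use, and the function name is illustrative.

```python
import re


def rollback_message(message):
    """Remove @webhook-incidentio and make sure @webhook-pagerduty is present."""
    # The lookahead avoids touching longer handles that share the same prefix.
    cleaned = re.sub(r"[ \t]*@webhook-incidentio(?![\w-])", "", message)
    if "@webhook-pagerduty" not in cleaned.split():
        cleaned = cleaned.rstrip() + " @webhook-pagerduty"
    return cleaned.strip()


dual = "Disk full on {{host.name}} @webhook-pagerduty @webhook-incidentio"
print(rollback_message(dual))
```

Test the script against a copy of a real monitor message before the parallel run starts, so the rollback path is proven before you need it.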

Measure and prove ROI after migration

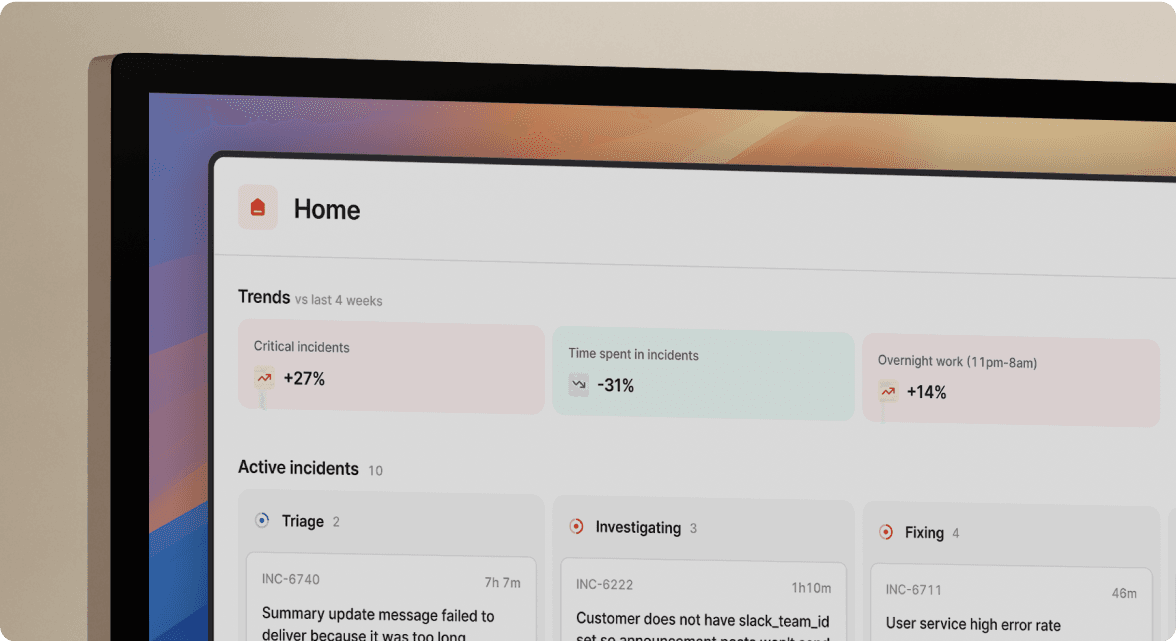

Our Insights dashboard provides MTTR trends, incident volume, and performance data using timeline data captured during every incident. You don't export anything manually. You show the exec team a live dashboard.

Track these KPIs in your first post-migration board review:

- MTTR trend: incident.io can reduce MTTR by up to 80%. Favor's team saw a 37% reduction within weeks of cutover.

- Post-mortem completion rate: track whether auto-drafted post-mortems drive higher completion.

- Engineer adoption: /inc command usage vs. web UI. Higher command usage means faster, less error-prone response.

- Team assembly time: how quickly the right people are in the incident channel after an alert fires.
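
For the board review, each KPI reduces to a percentage change against the pre-migration baseline. A minimal sketch follows; the numbers are hypothetical placeholders, not customer results.

```python
def percent_change(baseline, current):
    """Relative change from baseline, in percent (negative means a reduction)."""
    return (current - baseline) / baseline * 100


# Hypothetical values: median minutes to resolve before and after cutover.
mttr_before, mttr_after = 48, 36
mttr_delta = percent_change(mttr_before, mttr_after)
print(f"MTTR change: {mttr_delta:+.1f}%")
```

Compare windows of equal length and similar incident volume, otherwise a quiet month can look like an improvement.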

AI for faster MTTR and post-mortems

Our AI SRE assistant can automate up to 80% of incident response work by analyzing GitHub pull requests, Slack messages, historical incidents, logs, metrics, and traces to surface root cause hypotheses automatically.

Post-mortems auto-draft from captured timeline data as soon as you run /inc resolve. The draft arrives 80% complete, so your engineer spends about 10 minutes refining it instead of 90 minutes reconstructing it from Slack scrollback.

Migration tool comparison

Migration tooling varies significantly across platforms and a poor import experience adds days to your cutover timeline. Here's how incident.io compares to the main alternatives on the capabilities that matter most.

Table 1: Migration capabilities

| Platform | Schedule import | Escalation import | Historical data |
|---|---|---|---|
| incident.io | Guided import | Guided import | API + Python script |
| FireHydrant | Interactive tool | Interactive tool | Varies |
| Rootly | Varies by source | Varies by source | Varies |
| Zenduty | Guided flow | Guided flow | Varies |

*Competitor capabilities are directional based on publicly available information at time of writing and may vary by platform version or release. "Slack-native" as used for incident.io describes a full workflow that runs inside Slack without requiring web UI navigation. Rootly self-describes as Slack-native; their architecture is classified here as Slack-integrated to reflect that distinction.

Table 2: Platform architecture and pricing

| Platform | Slack architecture | Pricing (per user/month) |
|---|---|---|
| incident.io | Full platform | $45/user/month ($25 base + $20 on-call add-on) |
| FireHydrant | Integration-based | Platform Pro $9,600/yr (up to 20 responders). Enterprise custom. |
| Rootly | Slack-integrated (self-described as Slack-native) | Essentials: $20/user/month (Incident Response) + $20/user/month (On-Call) (~$40/user/month combined). Enterprise custom. |
| Xurrent IMR (formerly Zenduty) | Integration-based | Starter $6/user/month. Growth $16/user/month. Enterprise custom. |

If incident.io looks like the right fit, schedule a demo and we'll show you the guided import flow live including schedule syncing, escalation policy mapping, and how to configure your parallel run.

Key terms glossary

Event orchestration: The process of using automated rules to triage, route, enrich, and suppress incoming alerts before they page a human. In PagerDuty, this is the Event Orchestration feature. We use Workflows for equivalent automation, which trigger actions based on incident conditions.

Schedule mirroring: A feature that syncs an incident.io on-call schedule with PagerDuty. This lets teams test schedules in incident.io during the parallel run phase while PagerDuty continues to process alerts.

Parallel run: A migration testing technique where alerts route to both PagerDuty and incident.io simultaneously for a defined window (at minimum 48 hours, ideally longer for complex environments). This verifies that the new platform correctly receives, deduplicates, and routes every alert before the old system is decommissioned.

Escalation path: A pre-defined set of rules that determines the order in which responders are notified, how long to wait before escalating to the next tier, and how many retry attempts to make if an alert goes unacknowledged. Our escalation paths can target schedules, individual users, or Slack channels at each level.
