Incident escalation policies: the complete guide to intelligent alert routing and on-call assignment

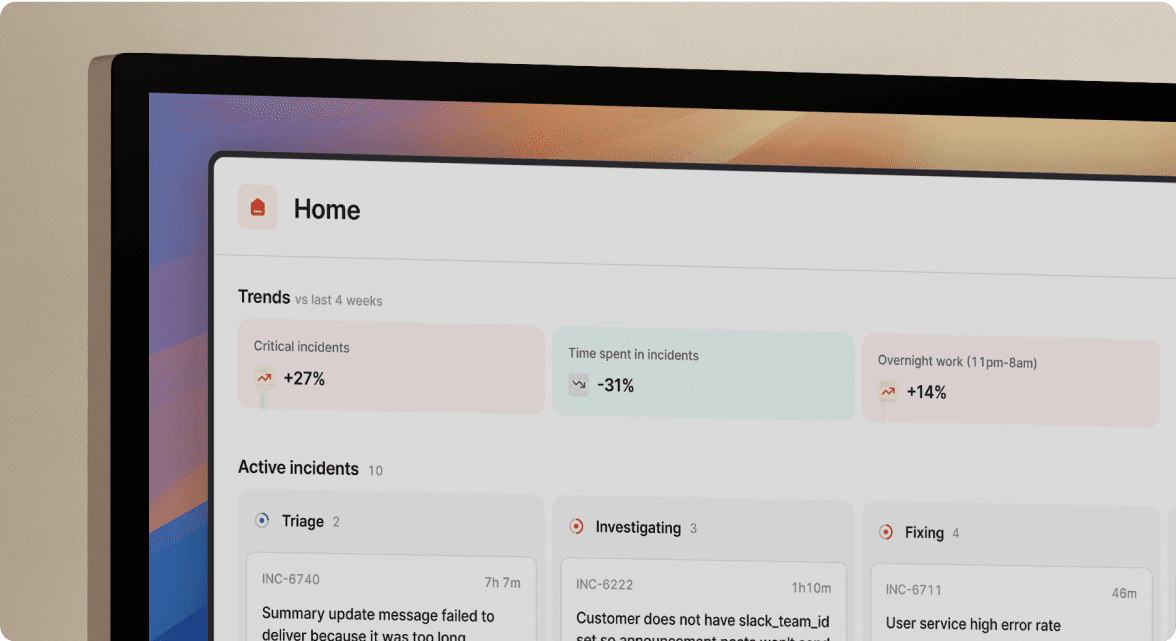

TL;DR: Intelligent escalation policies route alerts based on service ownership, severity, and availability. They cut team assembly time (the coordination step before troubleshooting starts, not MTTR overall) from 12 minutes to under 3 minutes. Manual routing is a direct contributor to blown SLAs and burned-out engineers. incident.io automates the entire escalation handoff inside Slack, including auto-created incident channels, tiered on-call paging, and fallback logic, so your team stops hunting for the right person and starts fixing the problem.

Manual escalation slows incident response by forcing engineers to hunt for the right people across PagerDuty, Slack, and spreadsheets during critical outages. This usually stems from unclear or outdated escalation policies, or over-reliance on a single on-call engineer.

Teams typically lose ~12 minutes per P1 incident just coordinating responders, adding up to hours of wasted time each month. This guide shows how to design and automate escalation policies so incidents route to the right people instantly, without manual coordination.

How incident escalation policies function

You configure alert routing by defining rules that direct each monitoring signal to the correct team or individual automatically. Traditional rule-based alerting triggers notifications when a threshold crosses a fixed value and sends that notification to a static distribution list. Intelligent routing goes further by layering in context: which service generated the alert, who owns that service right now, what severity the payload signals, and whether the primary on-call engineer is available.

This produces alerts that arrive with full context, reach exactly the right person, and follow your predetermined escalation path if no one acknowledges within your defined window. That is the difference between a policy that wakes everyone up and one that wakes the right person.

Designing effective escalation paths

You build an escalation path from three core components working in sequence:

- Primary responder: the on-call engineer whose rotation covers the affected service right now.

- Secondary responder: the backup engineer or team lead who gets paged if the primary does not acknowledge within your configured wait time.

- Fallback responder: a manager, incident commander, or all-hands channel that receives the escalation if both previous tiers go unanswered.
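The three tiers above can be sketched as a small data structure plus a function that decides which tier should currently be paged. This is a minimal illustration, not incident.io's implementation: the `Tier` class, the responder labels, and the 5- and 10-minute wait times are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str          # hypothetical responder label
    wait_minutes: int  # time to wait for an acknowledgment before escalating


# Hypothetical three-tier path; the wait times are illustrative only.
ESCALATION_PATH = [
    Tier("primary-on-call", 5),
    Tier("secondary-on-call", 10),
    Tier("fallback-manager", 0),  # last tier: nothing further to escalate to
]


def tier_to_page(minutes_unacknowledged, path=ESCALATION_PATH):
    """Return the tier that should be paged after the given unacknowledged time."""
    elapsed = 0
    for tier in path[:-1]:
        elapsed += tier.wait_minutes
        if minutes_unacknowledged < elapsed:
            return tier
    # Every earlier wait window has expired, so the final tier is paged.
    return path[-1]
```

With these assumed values, an alert that has gone 7 minutes without acknowledgment sits with the secondary responder, and anything past 15 minutes reaches the fallback tier.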

How escalation policies differ from on-call schedules

These two concepts get conflated constantly and the confusion costs teams real time during incidents. An on-call schedule answers "who is available right now?" and defines rotation windows, shift lengths, and coverage gaps. An escalation policy answers "how and when do we notify that person, and what happens if they do not respond?"

You need both configured correctly for reliable incident routing. A well-designed on-call schedule paired with a poorly configured escalation policy still produces missed pages and slow assembly. incident.io lets you configure schedules and escalation paths together in one place, so your rotation and notification logic stay aligned.

Why manual escalation slows MTTR

Manual escalation forces engineers to context-switch at exactly the worst moment. Without automation, an on-call SRE might hunt through spreadsheets to find backup contacts during outages, a delay that adds minutes before troubleshooting even starts. That 12-minute assembly window is the median for teams without automated routing.

For mid-size SaaS companies, downtime typically costs between $2,300 and $9,000 per minute, with some organizations seeing losses exceeding $300,000 per hour. The actual fix, once the right engineer is engaged, often takes only a fraction of the total incident time.
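To make the cost of assembly time concrete, the figures above can be combined in a short calculation. This is a rough sketch under stated assumptions: the 12-minute and 3-minute assembly times and the per-minute cost range come from this guide, while the count of 10 P1 incidents per month is a hypothetical value, and the calculation treats every assembly minute as a downtime minute.

```python
ASSEMBLY_MINUTES_MANUAL = 12            # from this guide
ASSEMBLY_MINUTES_AUTOMATED = 3          # "under 3 minutes" target from this guide
COST_PER_MINUTE_RANGE = (2_300, 9_000)  # USD per minute of downtime, from this guide
P1_INCIDENTS_PER_MONTH = 10             # assumption, for illustration only


def monthly_assembly_minutes(minutes_per_incident, incidents=P1_INCIDENTS_PER_MONTH):
    """Total minutes spent assembling responders in one month."""
    return minutes_per_incident * incidents


def monthly_savings_range(incidents=P1_INCIDENTS_PER_MONTH):
    """Low and high estimate (USD) of downtime cost avoided per month."""
    saved_minutes = (
        monthly_assembly_minutes(ASSEMBLY_MINUTES_MANUAL, incidents)
        - monthly_assembly_minutes(ASSEMBLY_MINUTES_AUTOMATED, incidents)
    )
    low, high = COST_PER_MINUTE_RANGE
    return saved_minutes * low, saved_minutes * high
```

Under these assumptions, manual routing consumes 120 minutes a month on assembly alone, and cutting assembly to 3 minutes recovers 90 of them. Substitute your own incident count and cost figures before drawing conclusions.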

Ensuring alerts reach the right on-call

The most common failure mode in escalation design is routing alerts to a general team rather than to the specific team that owns the failing service. When a database connection pool exhausts, paging the general SRE rotation is slower than paging the database team directly. Service Catalog integration closes this gap by maintaining live ownership maps that link every service to its current on-call responder.

Map services to alert escalation paths

A Service Catalog ties each service to an escalation path so when Datadog fires an alert tagged service: payments-api, incident.io looks up the payments-api owner and pages that team directly, with no human lookup required. This approach, detailed in incident.io's alert routing documentation, eliminates the "who owns this?" delay that adds minutes to every incident.

The incident management best practices guide makes this concrete: establish clear ownership for every service. Each service should have at least one primary owner documented in the Service Catalog and reflected in the escalation path. Larger services often designate separate primary and backup owners, while smaller services may have a single person fulfilling both roles. When ownership is ambiguous, alerts may route to multiple teams, and accountability becomes unclear during the incident.
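The lookup described above amounts to resolving an alert's service tag against an ownership map. The sketch below illustrates that idea only; the catalog contents, the `resolve_escalation_path` function, and the `sre-general` default are hypothetical and do not reflect incident.io's data model or API.

```python
# Hypothetical in-memory catalog: service name -> ownership record.
SERVICE_CATALOG = {
    "payments-api": {"owner": "payments-team", "escalation_path": "payments-path"},
    "checkout-web": {"owner": "frontend-team", "escalation_path": "frontend-path"},
}

# Assumed catch-all path for alerts whose service has no catalog entry.
DEFAULT_PATH = "sre-general"


def resolve_escalation_path(alert):
    """Map an alert's `service` tag to the owning team's escalation path."""
    service = alert.get("tags", {}).get("service")
    entry = SERVICE_CATALOG.get(service)
    if entry is None:
        # Ownership gap: fall back rather than dropping the alert.
        return DEFAULT_PATH
    return entry["escalation_path"]
```

Counting how often the default path is used is one simple way to surface services that still lack a documented owner.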

Configure tier timing and notification channels

High-urgency and low-urgency routing need to look different. P1 incidents (critical severity, with direct customer-facing impact) warrant immediate phone calls and SMS alongside Slack paging. P2 incidents (high severity, significant degradation without full outage) can start with a Slack notification and escalate to a phone call only if no acknowledgment arrives within your configured window. Configuring this distinction prevents alert fatigue on low-severity events while ensuring your P1s cut through every possible distraction.

incident.io delivers alerts through phone, SMS, email, Slack, and push notifications, and you configure which channels activate at each tier and priority level inside the escalation path builder.
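The P1 and P2 distinction can be expressed as a small channel-selection function. This is a sketch of the policy described in this section, not the escalation path builder itself; the function name and the `ack_window_elapsed` flag are assumptions for illustration.

```python
from typing import List


def channels_for(severity: str, ack_window_elapsed: bool = False) -> List[str]:
    """Pick notification channels for a page, following the P1/P2 split above."""
    if severity == "P1":
        # Critical, customer-facing: use every urgent channel immediately.
        return ["phone", "sms", "slack"]
    if severity == "P2":
        # Start quietly; add a phone call only once the ack window has passed.
        return ["slack", "phone"] if ack_window_elapsed else ["slack"]
    raise ValueError(f"unsupported severity: {severity!r}")
```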

Automated fallback responder logic

Coverage gaps in your escalation path can leave an incident without a clear next responder when nobody answers. When no one is on call for a level, the platform handles the gap according to your configured escalation path settings, as detailed in the escalation delay documentation. You define who sits at each fallback tier, whether that is a manager, a staff engineer, or a dedicated war-room channel.

Why escalation policies reduce alert fatigue

Alert fatigue develops when engineers receive high volumes of low-signal notifications. When every minor spike pages the rotation with the same urgency as a production outage, engineers start treating truly critical alerts like noise. Intelligent routing solves this by ensuring alerts carry the right severity, reach only the people who need to act, and arrive with enough context to support a decision immediately.

Automating on-call team assignment

The "designated dispatcher" pattern, where one senior engineer manually figures out who to page for every incident, is a costly and fragile approach. Automating assignment through a Service Catalog and escalation path removes that tax entirely. The engineer who would have spent 8 minutes deciding who to call can instead spend those 8 minutes troubleshooting.

Routing alerts by availability and time zone

A follow-the-sun model distributes on-call coverage across geographic regions so no single team carries overnight load indefinitely. When teams are widely distributed, different regional teams cover incidents during their own daytime hours, which means faster response (the on-call engineer is awake) and lower burnout (no one carries overnight load every week). incident.io's scheduling engine supports this model with region-aware rotation configurations.
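A follow-the-sun handoff can be sketched as a lookup from the current UTC hour to a regional rotation. The three regions, their 8-hour windows, and the rotation names below are assumptions for illustration; real schedules would come from your scheduling configuration.

```python
from datetime import datetime, timezone

# Hypothetical coverage windows in UTC hours: (start inclusive, end exclusive, rotation).
REGION_WINDOWS = [
    (0, 8, "apac-on-call"),
    (8, 16, "emea-on-call"),
    (16, 24, "amer-on-call"),
]


def region_on_call(now: datetime) -> str:
    """Return the regional rotation covering `now` (a timezone-aware datetime)."""
    hour = now.astimezone(timezone.utc).hour
    for start, end, rotation in REGION_WINDOWS:
        if start <= hour < end:
            return rotation
    # Unreachable with the windows above; guards against gaps if they are edited.
    raise ValueError(f"no rotation covers UTC hour {hour}")
```

Raising on an uncovered hour is a deliberate choice here: a gap in coverage should fail loudly during testing rather than silently page nobody.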

incident.io also supports dynamic escalation path selection, where alert payload content determines the path chosen. A CPU spike on your API tier routes to the platform team. A failed payment webhook routes to the payments engineering team. You configure this logic using catalog expressions that connect alert metadata to the appropriate path, without writing custom code.
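In incident.io this matching is configured rather than coded, but the underlying logic resembles an ordered rule list where the first match wins. The sketch below shows that logic only; the payload field names (`source`, `metric`, `event`), the rule set, and the fallback path are hypothetical.

```python
# Ordered rules: (required payload values, escalation path). All values are hypothetical.
RULES = [
    ({"source": "api-tier", "metric": "cpu"}, "platform-team"),
    ({"event": "payment_webhook_failed"}, "payments-engineering"),
]

# Assumed catch-all when no rule matches.
FALLBACK_PATH = "sre-general"


def select_path(payload):
    """Return the path of the first rule whose conditions all match the payload."""
    for conditions, path in RULES:
        if all(payload.get(key) == value for key, value in conditions.items()):
            return path
    return FALLBACK_PATH
```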

Designing escalation policies that accelerate MTTR

Good policy design requires deliberate upfront choices. Use this checklist to cover the configuration areas with the highest impact on MTTR before you go live.

Pre-deployment escalation audit checklist:

- Every service in your Service Catalog maps to at least one primary owner

- Each escalation path defines wait times for P1 and P2 incidents separately

- Multi-channel delivery is configured for P1s, with notification channels selected based on the severity and urgency of the incident

- Fallback responder (Tier 3) routes to a manager or all-hands channel, not to an empty rotation

- Phone numbers and contact details in on-call schedules are current and verified before go-live

- A test alert has been fired through the production escalation path to confirm routing and notification channels are working as configured
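Several items on this checklist can be checked mechanically if you can export your configuration. The sketch below assumes a hypothetical export shape (a dict of services, each with a `primary_owner` and a list of `tiers` holding `responders` and per-severity `wait_minutes`); it is not an incident.io export format.

```python
def audit_escalation_config(catalog):
    """Return a list of human-readable problems found in the exported config."""
    problems = []
    for service, entry in catalog.items():
        if not entry.get("primary_owner"):
            problems.append(f"{service}: no primary owner")
        tiers = entry.get("tiers", [])
        if not tiers:
            problems.append(f"{service}: no escalation tiers")
        for number, tier in enumerate(tiers, start=1):
            if not tier.get("responders"):
                # An empty tier silently swallows the escalation.
                problems.append(f"{service}: tier {number} has no responders")
            for severity in ("P1", "P2"):
                if severity not in tier.get("wait_minutes", {}):
                    problems.append(
                        f"{service}: tier {number} missing {severity} wait time"
                    )
    return problems
```

An empty result means the structural checks passed. Contact details and end-to-end delivery still need the manual verification and test alert listed above.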

Configure alert escalation tiers

The tier structure below gives most teams a solid starting point. Revisit wait times periodically as your team's operational patterns become clearer over time.

| Tier | Responder | Notification method | P1 wait | P2 wait |
|---|---|---|---|---|
| Tier 1 | Primary on-call responder | Phone + Slack | Short | Longer |
| Tier 2 | Secondary on-call | Phone + SMS + Slack | Short | Moderate |
| Tier 3 | Manager or staff engineer | Configurable | Immediate | Per policy |

Prevent missed alerts with robust on-call routing

Multi-channel delivery is not redundant; it is insurance. A Slack notification alone fails when the engineer's phone is on silent. Adding a phone call for P1s catches the cases that Slack misses. You configure which channels activate at each tier and priority level inside incident.io's escalation path builder.

Conduct incident response drills

Testing your escalation policy before a real P1 fires is the only way to confirm it actually works. Run a quarterly drill by firing a test alert through your production escalation path, then measuring how long it takes to reach each tier and whether all notification channels deliver successfully. This catches misconfigured backup rotations, stale phone numbers, and accidentally empty tiers before they cause a real incident to drag.

Incident escalation playbook examples

Single-team escalation (SRE to senior SRE to manager)

This pattern covers localized issues where one team owns the affected service end-to-end.

- When an alert fires, incident.io creates a dedicated Slack channel for coordination

- The primary on-call responder is paged via phone and Slack

- The incident timeline begins capturing activity automatically

- If there is no acknowledgment within the configured window, the escalation policy triggers the next tier

- Escalation continues to a secondary on-call responder or senior engineer if needed

- If still unresolved, the incident escalates to a manager or configured fallback channel

- Responders can escalate ownership within the workflow to bring in the correct team

Multi-team escalation (frontend to backend to database)

Complex microservice environments often surface root causes in a different layer than where the alert fired. The frontend team detects high error rates, acknowledges, and after investigating determines the root cause is downstream. Using incident.io's escalation command, they type /inc escalate @database-team directly in the incident channel, without leaving Slack. The database team joins the existing channel with the full timeline already populated, eliminating the context-sharing overhead that happens when you start a new thread.

Severity-based routing (P1 to all hands, P2 to on-call only)

P1 customer-facing outages justify waking multiple teams simultaneously. P2 degradations do not. Configure two separate escalation templates: the P1 template pages primary, backup, and manager in parallel from the start, while the P2 template starts with the primary on-call only and escalates sequentially if there is no acknowledgment.
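The difference between the two templates is parallel versus sequential paging, which the sketch below makes explicit. The responder labels and the 5-minute wait window are assumptions for illustration.

```python
RESPONDERS = ["primary", "backup", "manager"]  # hypothetical tier order


def responders_paged(severity, minutes_unacknowledged, wait_minutes=5):
    """Return who has been paged so far under the P1 or P2 template."""
    if severity == "P1":
        # Parallel template: everyone is paged from the start.
        return list(RESPONDERS)
    if severity == "P2":
        # Sequential template: one more tier per expired wait window.
        tiers_reached = int(minutes_unacknowledged // wait_minutes) + 1
        return RESPONDERS[: min(tiers_reached, len(RESPONDERS))]
    raise ValueError(f"unsupported severity: {severity!r}")
```

With the assumed 5-minute window, a P2 that stays unacknowledged pages only the primary at first, adds the backup at 5 minutes, and reaches the manager at 10 minutes.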

Automating alert handoffs to on-call

Manual handoffs between monitoring tools and your incident response workflow are where context gets lost. Integrating your alert sources directly into incident.io closes that gap.

Connecting Datadog, PagerDuty, and other alert sources

When an alert fires, incident.io creates a dedicated Slack channel, pages the primary on-call via phone and Slack, and begins capturing the timeline automatically. Teams migrating from PagerDuty or Opsgenie can import schedules and policies directly, significantly reducing migration effort. If you are currently on Opsgenie, which sunsets in April 2027, this import path makes the transition straightforward.

For a broader look at how to evaluate and select an on-call tool, the 2026 on-call tool selection framework walks through the key criteria. You can also watch the incident.io On-call product overview to see the escalation workflow in action.

Mapping alert metadata to escalation rules

Alert tags and labels are the raw material for intelligent routing. A Datadog alert tagged env: production, service: payments-api, and severity: critical carries enough information to determine the correct escalation path without any human judgment. incident.io reads these tags, looks up the matching service in the Service Catalog, and routes to the payments team's escalation path automatically. The dynamic escalation configuration guide covers how to build expressions that connect alert fields to specific paths.

Real-time incident status across tools

Status page updates are exactly the kind of task that falls through the cracks when an SRE's cognitive load is maxed out at 2 AM. incident.io auto-updates your status page when an engineer types /inc resolve in Slack, with no manual steps. This is part of why the Slack-native architecture matters in practice: coordination and downstream updates happen in one place automatically.

"incident.io is an essential tool for our incident management. The way incident.io integrates with Slack has been such a valuable tool for GoCardless. It makes it easy to manage all aspects of an incident." - Amy T. on g2.

Measure escalation policy impact on MTTR

Designing better policies is only half the work. You also need to measure whether they are actually performing and have that data in a format your VP Engineering can present at a board review.

Time to initial incident response

Mean Time To Acknowledge (MTTA), the time from alert fire to first acknowledgment by a responder, directly measures escalation policy performance. If your MTTA is consistently high for P1s, review your escalation configuration for gaps: are contact details current, are multiple notification channels enabled for critical alerts, and is backup coverage configured for all time windows? Track this metric monthly and use it to tune tier timing and notification channel configuration.
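If you export alert and acknowledgment timestamps, MTTA is a simple average of the differences. The sketch below assumes each incident is a `(fired_at, acknowledged_at)` pair of `datetime` values; that input shape is an assumption, not an incident.io export format.

```python
from datetime import datetime
from statistics import mean


def mtta_minutes(incidents):
    """Mean minutes from alert fire to first acknowledgment."""
    if not incidents:
        raise ValueError("need at least one acknowledged incident")
    deltas = [
        (acknowledged_at - fired_at).total_seconds() / 60
        for fired_at, acknowledged_at in incidents
    ]
    return mean(deltas)


# Usage with two hypothetical P1s, acknowledged after 2 and 6 minutes.
sample = [
    (datetime(2025, 3, 1, 2, 0), datetime(2025, 3, 1, 2, 2)),
    (datetime(2025, 3, 9, 14, 30), datetime(2025, 3, 9, 14, 36)),
]
print(mtta_minutes(sample))  # 4.0
```

Computing the same figure per tier, rather than per incident, helps identify which tier is contributing the delay.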

Measuring on-call routing precision

Monitor how often the first-paged team is the team that resolves the incident. High first-contact precision indicates your Service Catalog ownership is accurate and your routing rules are well-configured. Low precision means alerts are landing on the wrong team, adding a re-escalation step to every incident.
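First-contact precision is the share of incidents where the first-paged team was also the resolving team. The sketch below assumes each incident record carries `first_paged_team` and `resolving_team` fields; both field names are hypothetical.

```python
def first_contact_precision(incidents):
    """Fraction of incidents resolved by the team that was paged first."""
    if not incidents:
        return 0.0
    hits = sum(
        1
        for incident in incidents
        if incident["first_paged_team"] == incident["resolving_team"]
    )
    return hits / len(incidents)
```

For example, if 3 of 4 incidents in a month were resolved by the first-paged team, the function returns 0.75, and the remaining incident is the one to examine for a Service Catalog ownership gap.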

Optimizing MTTR via escalation

Research on MTTR reduction shows that most SRE teams consume roughly 12 minutes of their median 45-to-60-minute P1 MTTR on team assembly and context-gathering before troubleshooting begins. Automating that assembly step with intelligent routing and auto-created incident channels cuts it dramatically. Teams combining automated routing with AI investigation capabilities can see substantial MTTR reductions. incident.io's AI SRE can reduce MTTR by up to 80%, compressing the gap between alert and resolution even further.

Post-incident escalation review checklist:

- Did the alert route to the correct team on first attempt? Track this as a percentage across all incidents monthly

- Was MTTA within your target for this P1? If not, identify which tier caused the delay

- Did any responder miss the page due to incorrect contact info or notification channel failure?

- Would severity-based routing have paged a different tier? If yes, update severity classification rules

- Document any Service Catalog ownership gaps that caused routing confusion

Explore how teams that have already modernized on-call approached this in the incident.io On-call feature walkthrough and read how Flagstone's team transformed their incident management after moving to a Teams and incident.io setup.

Schedule a demo with our team to see the full routing workflow from Datadog alert to resolved incident and learn how teams are reducing MTTR by up to 80%.

Key terms glossary

MTTR (Mean Time To Resolution): the average time from when an incident is detected to when it is fully resolved, including team assembly, investigation, mitigation, and cleanup.

MTTA (Mean Time To Acknowledge): the time from alert fire to first acknowledgment by a responder. A direct measure of escalation policy performance.

Escalation path: the ordered sequence of responders and notification methods that an alert follows until someone acknowledges it.

On-call schedule: the rotation that defines which engineer carries the pager during a given time window. Feeds into the escalation path to determine who is Tier 1 at any moment.

Service Catalog: a registry mapping each service or system to its owner, dependencies, runbooks, and escalation path. The foundation of accurate alert routing because it eliminates the manual lookup step during incidents.

Alert fatigue: the state where engineers begin ignoring or suppressing alerts because notification volume is too high or signals are too low-quality to trust.
