Slack-native incident management: Why it matters and how to evaluate it

Updated May 4, 2026

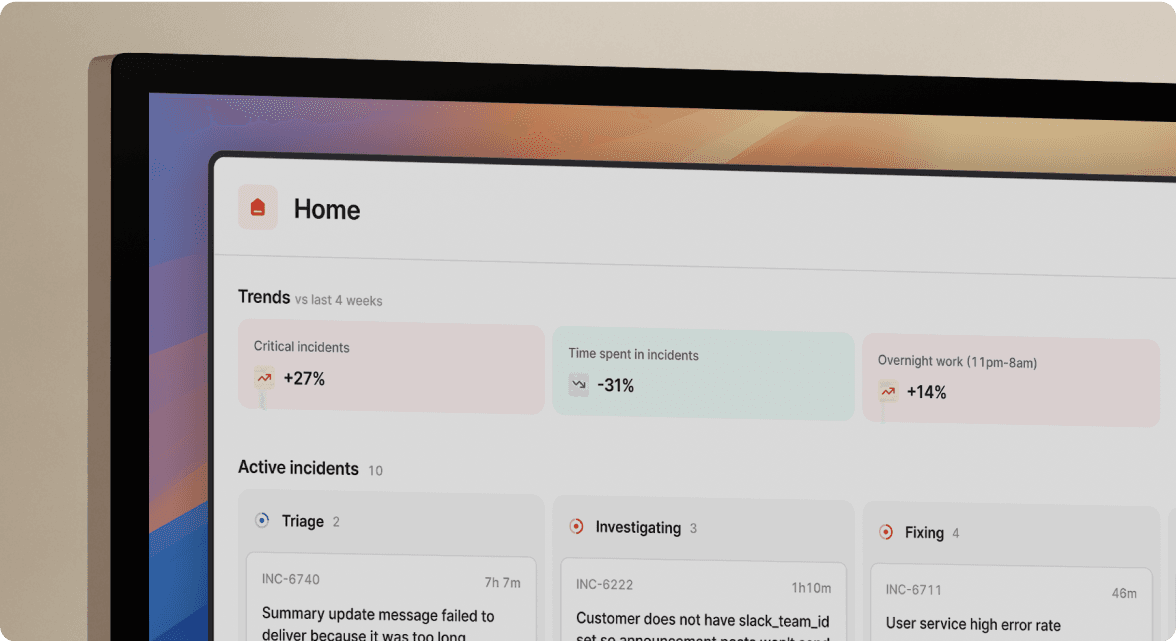

TL;DR: True Slack-native incident management eliminates the coordination tax that kicks in every time production breaks. The difference between "Slack-native" and "Slack-integrated" is the difference between running your entire incident lifecycle inside chat and simply receiving a webhook notification in a channel. Platforms built natively for Slack, like incident.io, auto-create incident channels, capture timelines, assign roles, and draft post-mortems through slash commands, reducing post-mortem drafting time from 90 minutes to 10.

The tool-switching tax is where P1 responses stall. Your engineers toggle between PagerDuty, Datadog, Jira, Google Docs, and Statuspage while the clock ticks and customer complaints stack up.

Slack-native incident management fixes this by making the process invisible. Your team stays in the chat interface they already use, executes the full response lifecycle through slash commands, and lets the platform capture context automatically. This guide explains why that architectural distinction matters, how to test for it during vendor evaluations, and what the ROI looks like in real numbers.

AI in Slack-native incident management

We've seen AI change what "native" means in incident management. A few years ago, Slack-native meant your incidents started and ended in chat. Today, it means an AI SRE assistant handles the routine cognitive work, from identifying root causes to drafting post-mortems, so your engineers focus entirely on the fix.

Evaluating Slack-native vs. integrated

A Slack integration sends you a notification. A Slack-native platform runs the entire incident lifecycle inside Slack. That distinction sounds small until it's 3 AM and you're juggling five browser tabs.

With a Slack-integrated tool, an alert fires and a message appears in a channel. To assign an incident commander, change severity, or update the status page, you open the vendor's web dashboard. With a Slack-native tool, you type /inc assign @sarah, /inc severity high, and /inc resolve directly in the channel, and the platform captures every action automatically. No browser tabs. No context switch.
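To make the slash command model concrete, here is a minimal sketch of how a handler might split the text that follows /inc into an action and its arguments. The names `IncCommand`, `parse_inc_command`, and `KNOWN_ACTIONS` are hypothetical and chosen for illustration; this is not incident.io's implementation, only one plausible shape for it.

```python
from dataclasses import dataclass

# Hypothetical action set, limited to the commands named in this article.
KNOWN_ACTIONS = {"declare", "assign", "escalate", "severity", "resolve"}


@dataclass(frozen=True)
class IncCommand:
    action: str              # e.g. "assign"
    args: tuple[str, ...]    # e.g. ("@sarah",)


def parse_inc_command(text: str) -> IncCommand:
    """Parse the text that follows `/inc` into an action and its arguments."""
    parts = text.split()
    if not parts:
        raise ValueError("empty command: expected an action such as 'resolve'")
    action, *args = parts
    action = action.lower()
    if action not in KNOWN_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    return IncCommand(action=action, args=tuple(args))


print(parse_inc_command("assign @sarah"))
print(parse_inc_command("severity high"))
```

Rejecting unknown actions up front, rather than guessing, keeps a mistyped command from silently changing incident state at 3 AM.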

Key criteria for deep Slack integration

Three baseline capabilities separate genuine Slack-native tools from tools that bolt on a notification layer:

- Bidirectional sync: Actions taken in Slack (resolving an incident) propagate to connected tools (Jira tickets close, PagerDuty alerts acknowledge) without manual updates in each system.

- Slash command coverage: Declaration, escalation, role assignment, severity changes, and resolution all execute via /inc commands without touching the web UI.

- Automatic channel management: The platform creates dedicated incident channels (for example, #inc-2847-api-latency) automatically when an alert fires, invites the relevant on-call engineers, and archives the channel cleanly after resolution.

Our AI assistant extends these capabilities with context surfacing during active incidents.
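The automatic channel management criterion implies some rule for turning an incident ID and title into a valid channel name. The sketch below shows one way that could work, assuming Slack's rule that channel names are lowercase and at most 80 characters. The function name `incident_channel_name` and the `inc-<id>-<slug>` scheme are assumptions modeled on the example name above, not a documented vendor format.

```python
import re

MAX_CHANNEL_NAME_LENGTH = 80  # Slack's limit for channel names


def incident_channel_name(incident_id: int, title: str) -> str:
    """Build a channel name such as 'inc-2847-api-latency' from an ID and title."""
    # Lowercase, then collapse every run of other characters into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    name = f"inc-{incident_id}-{slug}" if slug else f"inc-{incident_id}"
    # Truncate to the limit and avoid ending on a dangling hyphen.
    return name[:MAX_CHANNEL_NAME_LENGTH].rstrip("-")


print(incident_channel_name(2847, "API latency"))  # inc-2847-api-latency
```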

Keep incident context in Slack

Context loss is where MTTR goes to die. When an engineer opens Datadog to check which service is affected, then Jira to find recent deployments, then scrolls back through three Slack threads to find a decision made 20 minutes ago, they rebuild context each time instead of solving the problem.

Slack-native tools prevent this by pulling context into the incident channel itself. We surface the triggering Datadog monitor with runbook links, service catalog entries showing ownership information, and a live timeline of actions taken, all within the channel. You can identify the right incident management software by asking one question: can responders get what they need without opening a new tab?

The cognitive load problem in traditional incident response

During an incident, your team isn't experiencing one interruption. They're experiencing dozens as they switch between monitoring dashboards, chat threads, ticketing systems, and documentation tools.

Why context shifts delay MTTR

Every tab switch during an incident breaks the troubleshooting thread your brain is running. You're tracing a latency spike to a database query, you switch to Jira to log a ticket, and when you return to Datadog you've lost your place in the trace. This isn't a discipline problem. It's a systems design problem. When your tools don't share context, your engineers manually rebuild it every time they move between them, and that rebuilding time is pure MTTR overhead.

Tool sprawl tax: 5+ tools per incident

The typical incident response stack looks like this:

- PagerDuty: Fires the alert, wakes up the on-call engineer.

- Slack: Becomes the coordination layer, but without incident structure.

- Datadog or Grafana: Provides metrics and logs for diagnosis.

- Jira or Linear: Captures follow-up tasks, requiring manual creation.

- Confluence or Google Docs: Becomes the post-mortem, written from memory.

- Statuspage: Needs manual updates, often forgotten mid-incident.

That's six tools, each requiring login, context, and attention. The coordination overhead before troubleshooting starts typically runs 10-15 minutes for a P1: manually creating a Slack channel, pinging engineers, opening the monitoring dashboard, starting a notes doc, and remembering to update the status page.

SREs distracted by manual notes

During every major incident, someone gets pulled away from troubleshooting to become the designated note-taker. They type the timeline into a Google Doc while the rest of the team digs into the actual problem. The result: your best troubleshooter is half-distracted, your notes miss technical context, and you still end up with an incomplete record when it's time to write the post-mortem.

Memory loss and timeline reconstruction

Three days after a P1, you sit down to write the post-mortem. You scroll back through the incident Slack channel, cross-reference PagerDuty alert timestamps, pull Datadog event annotations, and try to remember what was decided on the Zoom call nobody transcribed. Ninety minutes later, you have an incomplete, probably inaccurate post-mortem that gets published late and read by no one. This is post-mortem archaeology, and it's a direct consequence of storing incident data across six tools that don't talk to each other.

Streamlining incident workflows in Slack

The "After" picture looks fundamentally different. When incident management runs natively in Slack, the process becomes invisible. Engineers manage the incident instead of managing the process.

Run the full incident lifecycle via slash commands

The /inc command used inside an active incident channel pops up a full action menu covering every lifecycle operation. You declare incidents, assign roles, escalate, update severity, and resolve without opening a browser, using /inc declare, /inc assign, /inc escalate, /inc severity, and /inc resolve.

No-touch incident channel provisioning

When a Datadog alert fires, we automatically create a dedicated channel (#inc-2847-api-latency-spike), page the on-call engineer, invite service owners based on the Service Catalog, and start capturing the timeline. The on-call engineer joins a channel that already contains the triggering alert with context, service ownership information, a runbook link, and a live timeline already recording. Nobody created any of that manually.

AI-drafted incident timelines and unified context

Our built-in Scribe feature joins your incident calls and transcribes the conversation in real time. No third-party integration required. It extracts key decisions and flags root cause mentions. When someone on the call says "I think this correlates with the deploy at 14:32," Scribe can capture that in context.

Every status update, severity change, and command executed in Slack also feeds the timeline passively. When the incident closes, the timeline already covers 80% of what you need for the post-mortem, built from actual incident data rather than reconstructed from memory. The Service Catalog surfaces service owners, recent deployments, current health status, and runbook links directly in the incident channel, without anyone opening Datadog, checking GitHub, or pinging the platform team.
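A passively built timeline can be pictured as an append-only list of timestamped events that is sorted when rendered. The sketch below is illustrative only: `TimelineEvent`, `Timeline`, the event kinds, and the sample events are all hypothetical, and a real platform would record far richer data.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class TimelineEvent:
    at: datetime
    actor: str
    kind: str    # hypothetical kinds: "severity_change", "call_note", ...
    detail: str


@dataclass
class Timeline:
    events: list[TimelineEvent] = field(default_factory=list)

    def record(self, at: datetime, actor: str, kind: str, detail: str) -> None:
        """Append an event; sources may report out of order."""
        self.events.append(TimelineEvent(at, actor, kind, detail))

    def render(self) -> list[str]:
        """Return one line per event in chronological order."""
        ordered = sorted(self.events, key=lambda e: e.at)
        return [f"{e.at:%H:%M} {e.actor}: {e.kind} - {e.detail}" for e in ordered]


# Made-up sample events, recorded out of order on purpose.
timeline = Timeline()
timeline.record(datetime(2026, 5, 4, 14, 40, tzinfo=timezone.utc),
                "sarah", "severity_change", "high")
timeline.record(datetime(2026, 5, 4, 14, 32, tzinfo=timezone.utc),
                "call-transcript", "call_note", "possible link to recent deploy")

for line in timeline.render():
    print(line)
```

Sorting at render time rather than at insert time means chat events and call transcription can arrive in any order and still produce one coherent record.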

Eliminate wasted effort: save 10-15 minutes

The time savings from Slack-native incident management compound across every incident your team handles.

Mobilize incident teams fast

Manual team assembly during a P1 involves finding the on-call schedule, paging the engineer, waiting for acknowledgment, identifying which other teams are affected, pinging their leads individually, and waiting for everyone to find the right Slack channel. With automated escalation and channel provisioning, the team assembles in 2 minutes instead of 15. The on-call engineer is paged, service owners are invited based on the affected service, and the incident commander role is assigned automatically.

At 20 incidents per month, cutting team assembly from 15 minutes to 2 reclaims 260 minutes per month, worth about $650 at a $150 loaded hourly cost, before counting MTTR reduction.

AI-drafted post-mortems: 90 min to 10

Post-mortem reconstruction from scratch takes up to 90 minutes. With our AI-powered post-mortem generation, the captured timeline becomes an auto-drafted document that engineers refine rather than reconstruct from memory.

Status page updates: manual to automatic

Running /inc resolve in Slack can update your status page, reducing the risk of forgetting to communicate resolution to customers. For teams handling production incidents, this removes the status page guilt of staying heads-down on troubleshooting while customers wait for an update.

ROI calculation for 20 incidents/month

Here's the math for a team handling 20 incidents per month at a $150 loaded hourly engineer cost:

| Metric | Before Slack-native | After Slack-native | Monthly saving |
|---|---|---|---|
| Team assembly time | 15 min × 20 incidents = 300 min (5 hrs) | 2 min × 20 incidents = 40 min | 260 min saved = $650 |
| Post-mortem drafting | 90 min × 20 incidents = 1,800 min (30 hrs) | 10 min × 20 incidents = 200 min (3.33 hrs) | 1,600 min saved = $4,000 |
| Total time saved | n/a | n/a | 1,860 min (31 hrs) |
| Value at $150/hr loaded cost | n/a | n/a | $4,650/month |

Slack-native incident management reclaims substantial engineering time from coordination and documentation overhead, before counting MTTR reduction on customer-facing incidents.
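The arithmetic behind these figures is simple enough to rerun with your own numbers. The sketch below reproduces the article's inputs (20 incidents per month, $150 loaded hourly cost, and the before/after minutes per incident); the variable and function names are illustrative.

```python
INCIDENTS_PER_MONTH = 20
LOADED_HOURLY_COST = 150  # USD per engineer hour

# (minutes per incident before, minutes per incident after), from the article
ACTIVITIES = {
    "team_assembly": (15, 2),
    "post_mortem_drafting": (90, 10),
}


def monthly_minutes_saved(before: int, after: int, incidents: int) -> int:
    """Minutes saved per month for one activity."""
    return (before - after) * incidents


saved = {
    name: monthly_minutes_saved(before, after, INCIDENTS_PER_MONTH)
    for name, (before, after) in ACTIVITIES.items()
}
total_minutes = sum(saved.values())
total_value = total_minutes / 60 * LOADED_HOURLY_COST

print(saved)          # {'team_assembly': 260, 'post_mortem_drafting': 1600}
print(total_minutes)  # 1860
print(total_value)    # 4650.0
```

Swap in your own incident volume and hourly cost to see how the savings scale; both activities scale linearly with incident count.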

Fast team adoption for incident response

Start incidents instantly in Slack

The adoption barrier for web-first tools is real: engineers must remember the URL, log in, navigate to the right screen, and declare the incident there before coordination begins. Slack-native tools remove that barrier entirely. Your team declares incidents in the same interface they already use for everything else, with commands that feel like natural Slack messages. Matthew B. on G2 described the onboarding directly:

"incident.io is very easy to pick up and use out of the box... The customer support has been fantastic and is world class and hard to beat." - Matthew B. on G2

New engineer onboarding: 3 days vs. 3 weeks

Ad-hoc verbal onboarding for on-call shifts creates anxiety and mistakes. "When paged, check PagerDuty, then post to #incidents, then look for the runbook in Notion, then ping me if you're stuck" is tribal knowledge that evaporates when the senior engineer is on vacation.

Slack-native incident management provides structured workflows that reduce dependency on tribal knowledge. A junior engineer joins their first on-call shift, gets paged, and the platform creates the incident channel and assigns them a role. They can escalate via /inc escalate @senior-sre if they need help. The tool documents the process automatically, which means new engineers can participate more quickly in their first incident.

Intuitive incident workflows for SREs

Web-first tools require training because users must learn a new interface under pressure. Slack-native tools feel intuitive because they use the same slash command and channel patterns your team already knows from daily work. Our opinionated defaults get teams operational quickly with strong workflow support for incident response.

7 tests for deep Slack integration

Run these tests during your vendor evaluation to separate Slack-native tools from Slack-integrated tools.

Question 1: Can I run the entire incident lifecycle in Slack? Test this literally. From declaration to post-mortem publication, does every action complete via Slack commands? If the answer is "almost everything except X," quantify how often X happens and whether it requires a browser during active incidents.

Question 2: Are core actions available via slash commands? Verify that severity changes, role assignments, escalations, and resolution all execute through commands, not clicks in a web UI. If changing severity requires opening a dashboard, that's a context switch during your highest-stress moments.

Question 3: Is incident timeline data automatic? Ask the vendor to show you a completed incident timeline. Did it require a designated note-taker, or did the platform build it passively from channel activity and call transcription? A timeline that writes itself is the foundation of a useful post-mortem.

Question 4: Can I avoid opening the web UI during incidents? Run a mock P2 incident during your trial without opening the vendor's web dashboard. If you can declare, escalate, troubleshoot, and resolve entirely in Slack, the tool is genuinely native. If you hit a wall that requires a browser, that's your answer.

Question 5: How deep are bidirectional integrations? Resolving an incident in Slack should trigger actions in connected tools, such as acknowledging PagerDuty alerts and creating Jira follow-up tickets with timeline context. Test each integration direction during your trial.

Question 6: Does the AI add signal or noise? The AI SRE should identify root causes and suggest fixes based on past incidents, not just surface log snippets. Ask the vendor for precision and recall metrics on their AI root cause identification. Our AI SRE automates up to 80% of incident response, including fix PR generation.

Question 7: What is the fallback when Slack is unavailable? Any platform that runs entirely in Slack must have a web dashboard backup for the rare cases where Slack itself has an outage. Confirm the fallback exists and covers your critical incident operations. We provide a fully functional web dashboard across our platform.
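When you run Question 5 during a trial, it helps to think of resolution as a fan-out: one action in Slack triggers a handler per connected tool, and each result is reported separately. The sketch below models that idea with stand-in functions. `fan_out_resolve` and the fake handlers are hypothetical and do not call any real PagerDuty or Jira API.

```python
from typing import Callable

# Hypothetical handler type: takes an incident ID, returns a short status string.
Handler = Callable[[str], str]


def fan_out_resolve(incident_id: str, handlers: dict[str, Handler]) -> dict[str, str]:
    """Call every integration handler and collect a per-tool result."""
    results: dict[str, str] = {}
    for tool, handler in handlers.items():
        try:
            results[tool] = handler(incident_id)
        except Exception as exc:
            # Keep going so one failing tool does not block the rest.
            results[tool] = f"failed: {exc}"
    return results


def fake_pagerduty(incident_id: str) -> str:
    return "alert acknowledged"


def fake_jira(incident_id: str) -> str:
    raise RuntimeError("timeout")


results = fan_out_resolve("inc-2847", {"pagerduty": fake_pagerduty, "jira": fake_jira})
print(results)
```

In a vendor trial, the equivalent check is to resolve a test incident in Slack and confirm the outcome in each connected tool individually, including what the platform reports when one of them fails.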

Evaluating Slack-native AI incident tools

Platform capabilities:

| Capability | incident.io | PagerDuty | FireHydrant |
|---|---|---|---|
| Slack integration depth | Native (full lifecycle in Slack) | Integrated (incident actions in Slack, web-first architecture) | Native (full operation from Slack) |
| AI capabilities | Automates up to 80% of incident response, fix PR generation, Scribe transcription | AI-powered platform capabilities including generative AI | AI summaries, Copilot suggestions, retrospectives |
| Unified platform | On-call + response + status pages + post-mortems | Alerting-focused, add-ons required | On-call + response + integrated status pages |

Support and viability:

| Vendor | Support model | Long-term viability |
|---|---|---|
| incident.io | Shared Slack channels, email, and 1:1 calls; bug fixes in hours, features in days | Well-funded, active roadmap |
| PagerDuty | Email and chat (Premium), multiple channels | Established public company |
| FireHydrant | Email/help desk, knowledge base | Active development |
| Opsgenie | Continued until April 2027 | Sunsetting April 2027 |

incident.io: In-Slack incident workflows

We built the entire incident lifecycle as a Slack-native experience from day one. The full workflow, alert to post-mortem, runs through /inc commands without requiring engineers to open a browser tab. Our AI SRE assistant automates up to 80% of incident response, including root cause identification and automated fix PR generation. The unified platform covers on-call scheduling, alert routing, status pages, and post-mortem generation in a single tool, eliminating the six-tool stack described above.

"Amazing slack integration makes handling incidents completely in slack a breeze. This lets our team not have to think about yet another service and instead handle it in a more human and integrated way." - Brandon O. on G2

PagerDuty: Alerts for Slack incident response

PagerDuty is the reliable incumbent for alert routing and on-call scheduling, with a battle-tested alerting engine that handles complex routing rules well. Its Slack integration supports incident acknowledgment, escalation, and resolution via slash commands, though the core platform is web-first and advanced features require the PagerDuty dashboard. For teams where coordination overhead is the primary bottleneck, PagerDuty's web-first architecture makes that bottleneck harder to solve.

FireHydrant: Web platform + Slack response

FireHydrant is a strong peer competitor with capabilities that appeal to teams with complex microservice architectures. Its Slack integration supports incident response workflows. incident.io differentiates on support velocity and AI capabilities.

Opsgenie: Actionable alerts in Slack

Opsgenie delivers alert routing and on-call management with solid Slack integration covering both alert notifications and incident management workflows. Atlassian announced the Opsgenie sunset with new account creation ending June 4, 2025, and support running until April 2027. Any team still on Opsgenie faces a mandatory migration within a defined window. Evaluating a replacement now gives you time to run a proper pilot rather than a forced cutover. Our Opsgenie migration guide includes a 14-day timeline and parallel-run strategy.

Try incident.io

You've seen the ROI math, the vendor comparisons, and the seven tests for deep Slack integration. Ready to eliminate coordination overhead in your own stack? Schedule a demo and we'll show you how teams are reducing MTTR by up to 80% and reclaiming hundreds of engineer-hours per month.

Key terms glossary

MTTR (Mean Time To Resolution): The average time from when an incident is declared to when it is fully resolved and services are restored. MTTR is the primary metric for measuring incident response effectiveness. Top-performing teams target sub-30-minute P1 resolution.

SRE (Site Reliability Engineer): An engineer responsible for the reliability, availability, and performance of production systems. SREs typically own on-call rotations, incident response processes, post-mortems, and the tooling that supports them. In larger organizations, SRE teams set platform-wide reliability standards and manage escalation paths for engineering teams.

Slack-native: A product architecture where the entire workflow runs inside Slack through slash commands and channel interactions, rather than in a separate web application that sends notifications to Slack. A Slack-native tool means you complete the full incident lifecycle without opening a browser tab.

AI SRE: An AI assistant built into incident management platforms that automates routine response tasks such as timeline capture, root cause identification, post-mortem drafting, and fix suggestion. incident.io's AI SRE automates up to 80% of incident response tasks, covering timeline capture, root cause identification, and fix PR generation, so human responders focus on the remaining decisions that require engineering judgment.

P1, P2, P3 incidents: Priority or severity levels assigned to incidents based on business impact and urgency. P1 incidents are the most critical (for example, complete service outage affecting all customers), P2 incidents have significant impact but with workarounds available, and P3 incidents are lower-priority issues with minimal customer impact. These designations help teams triage response and allocate resources appropriately.
