How to choose incident post-mortem software: 2026 buyer's guide for SREs

Updated February 16, 2026

TL;DR: Stop treating post-mortems as a writing assignment and start treating them as a data problem. The value of post-mortem software isn't that it gives you a place to write; it's that it automates the collection of context during the incident so you can focus on analysis instead of archaeology. Look for five key criteria: automated timeline capture that eliminates manual reconstruction, deep integrations with your observability stack, AI that summarizes rather than hallucinates, Slack-native workflows that ensure adoption, and analytics that prove reliability ROI. Manual approaches fail because they rely on human memory to reconstruct high-stress events days later. Modern platforms record the "black box" flight data automatically.

You've been there: production is finally stable, and now you're scrolling through thousands of Slack messages, digging through logs, and trying to remember who did what at 2 AM. Your team spent hours fighting the fire, and now you're spending days reconstructing what actually happened. The engineers who were focused on resolution are stuck piecing together timelines and documenting decisions they made under pressure. The result: post-mortems that consume significant time yet rarely prevent similar issues from recurring.

The challenge isn't your team's capability. It's the inherent difficulty of accurately documenting complex, high-stress events from memory while you're managing multiple systems and ongoing operational demands. Effective post-mortem software addresses this by automating context collection (timelines, conversations, metrics) so you can focus on root cause analysis instead of reconstructing basic event chronology.

Why dedicated post-mortem software beats manual documentation

Project post-mortems (Agile retrospectives) happen after long cycles with time for reflection. Incident post-mortems happen in the chaos: production is down, customers are impacted, and you're juggling five tools while your team fights fires.

The manual trap: Google Docs and Confluence create three fatal problems. First, they produce incomplete data because the "designated note-taker" is busy troubleshooting instead of documenting. Second, without structured metadata, you can't query trends like "Are we getting better at database incidents?" Third, they rely on memory reconstruction days after the event when critical details have faded.

Subpar post-mortems with incomplete action items make recurrence far more likely. Without structured review and follow-through, you're likely to repeat the same mistakes. The Knight Capital Group incident provides a stark lesson in what weak process costs: on August 1, 2012, a faulty software deployment triggered a flood of erroneous orders that cost the company approximately $440 million in about 45 minutes. Regulators later cited inadequate deployment controls and incident response procedures, and Knight needed an emergency rescue before being acquired by Getco LLC.

The software advantage: Dedicated platforms deliver three measurable benefits. Speed: automated timeline capture drastically reduces documentation time, letting engineers focus on root cause analysis. Consistency: forced templates and structured fields ensure every post-mortem captures the same critical data points. Data integrity: timestamped, immutable audit trails satisfy SOC 2 compliance requirements while providing the foundation for trend analysis.

"Without incident.io our incident response culture would be caustic, and our process would be chaos... Its post-mortem and follow-up tooling is simple, yet detailed, and gives us the structure to quickly share learnings from our incidents." - Verified user review of incident.io

What to look for in post-mortem software: 5 key evaluation criteria

1. Automated timeline capture vs. manual reconstruction

The biggest cost in post-mortems is the time spent manually reconstructing timelines from Slack and monitoring tools. Software must automate this.

What automation looks like in practice: incident.io automatically creates events on your timeline using incident updates (severity changes, status changes) and pinned Slack messages. When an engineer types /inc assign @sarah-devops in Slack, the platform records the role change with timestamp and context. When someone shares a Datadog graph, we preserve it. When the team discusses rollback options, the conversation becomes part of the timeline. Items pinned get stamped at the time of their actual posting, not when they were pinned, ensuring accurate sequencing.
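The sequencing rule described above (pinned items are stamped with the time they were posted, not the time they were pinned) can be sketched as follows. This is a minimal illustration, not incident.io's data model: `TimelineEvent`, `build_timeline`, and the field names `occurred_at` and `recorded_at` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class TimelineEvent:
    """Hypothetical timeline record; field names are assumptions for this sketch."""
    kind: str              # e.g. "status_change", "role_assigned", "pinned_message"
    summary: str
    occurred_at: datetime  # when the event actually happened (message posted)
    recorded_at: datetime  # when it reached the timeline (message pinned)


def build_timeline(events):
    """Order events by when they happened, not by when they were recorded."""
    return sorted(events, key=lambda e: e.occurred_at)


def ts(hour, minute):
    """Helper to build a UTC timestamp on an arbitrary example date."""
    return datetime(2026, 2, 16, hour, minute, tzinfo=timezone.utc)


events = [
    TimelineEvent("status_change", "Severity raised", ts(2, 10), ts(2, 10)),
    # Posted at 02:04 but only pinned at 02:30: it must still sort first.
    TimelineEvent("pinned_message", "Datadog graph: CPU spike", ts(2, 4), ts(2, 30)),
    TimelineEvent("role_assigned", "Incident lead assigned", ts(2, 6), ts(2, 6)),
]

timeline = build_timeline(events)
for event in timeline:
    print(event.occurred_at.strftime("%H:%M"), event.kind, event.summary)
```

Keeping both timestamps means the record stays auditable: you can still see when something was added to the timeline while reading events in the order they occurred.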

Without automation, you're reconstructing this data from memory: scrolling Slack channels, cross-referencing Datadog, rebuilding the timeline manually, creating follow-up tickets, and linking call recordings. Each step risks data loss and introduces errors.

Integration depth matters: Look for platforms with native integrations for monitoring and alerting tools that pull metrics and alert data directly into timelines. Traditional ITSM platforms can sync alerts with monitoring tools, but they lack the conversational context where engineering teams actually make decisions, forcing you to manually assemble timelines from multiple sources after the incident.

A financial services user highlighted the transformation:

"Incident.io is extremely easy to use... It helps both during an incident and the post-incident/post-mortem process by allowing users with little training to manage incidents like pros." - Roro O. on G2

2. Deep integrations with your observability and ticketing stack

Tool sprawl kills incident response. When alerts fire in PagerDuty, coordination happens in Slack, tickets get created in Jira, and post-mortems get written in Confluence, you're losing critical time and context to coordination overhead.

Essential integrations to evaluate:

Alerting platforms: PagerDuty and Opsgenie integration ensures alert context flows into your post-mortem automatically. When an alert fires, the platform should capture not just the alert text but the full payload, affected services, and initial triage data.

Observability tools: Datadog and New Relic integration pulls metrics, graphs, and traces directly into timelines. During the incident, when someone pastes a graph showing CPU spike, that graph should become part of the permanent record with timestamp and context.

Ticketing systems: Bi-directional sync with ITSM tools like Jira or ServiceNow allows teams to automatically create or update tickets as part of the incident workflow. Follow-up actions identified during the post-mortem should auto-create tickets with full context, and ticket status should sync back to the incident record.

Communication bridges: Launch Zoom or Google Meet from within the platform, with real-time call transcription that captures decisions made verbally. incident.io's Scribe feature transcribes calls in real-time and shares notes in your incident channel, ensuring no valuable context is lost.

The integration test: During evaluation, create a test incident that fires an alert, pulls in monitoring data, assigns an incident commander, and generates follow-up tickets. If any step requires manual copy-paste, the integration isn't deep enough. As one SRE noted:

"The platform's compatibility with multiple external tools, such as Ops Genie, makes it an excellent central hub for managing incidents." - Vadym C. on G2

3. AI features that actually save time (not just generate text)

AI in post-mortem software falls into two categories: useful summarization and dangerous hallucination. Look for precision and recall metrics, not just "generative text."

Practical AI use cases:

- Real-time call transcription: Scribe writes notes in real-time during incidents and shares them in your incident channel, just like a human note-taker would. The AI captures who said what, when decisions were made, and what actions were committed to.

- Timeline auto-generation: Using captured timeline data, AI generates post-mortem drafts that include incident summary, timeline of events, contributing factors, and suggested action items. Your engineers spend minutes reviewing and refining instead of hours writing from scratch.

- Root cause pattern matching: Advanced platforms connect telemetry, code changes, and past incidents to surface root causes without manual prompting, helping you identify likely culprits like recent deploys, config changes, or resource limits.

The critical distinction: When we talk about automated post-mortems, we don't mean templates you fill out faster. We mean real-time capture of incident context, decisions, and timelines, synthesized by AI into structured reports that are substantially complete before you start writing. We're not replacing human judgment, we're eliminating the archaeology.

What to avoid: Generative AI that hallucinates root causes or invents conversations that never happened. During vendor demos, ask: What's your AI's precision and recall on real production data? How do you prevent hallucinations? Can I audit what the AI summarized versus what actually happened?
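One way to make the precision and recall question concrete during a trial is to compare the events an AI summary claims against a timeline your team has verified by hand. The sketch below assumes you can reduce both to comparable event labels; the function name and the sample events are illustrative, not taken from any vendor's API.

```python
def precision_recall(ai_events, verified_events):
    """Score AI-reported events against a human-verified timeline.

    precision: share of AI-reported events that really happened
    recall:    share of real events that the AI reported
    """
    ai, verified = set(ai_events), set(verified_events)
    true_positives = len(ai & verified)
    precision = true_positives / len(ai) if ai else 0.0
    recall = true_positives / len(verified) if verified else 0.0
    return precision, recall


# Hypothetical audit data for a single incident.
verified = {
    "deploy rolled out",
    "connection pool exhausted",
    "rollback started",
    "service recovered",
}
ai_summary = {
    "deploy rolled out",
    "connection pool exhausted",
    "service recovered",
    "cache cluster restarted",  # not in the verified record: a hallucination
}

precision, recall = precision_recall(ai_summary, verified)
unsupported = ai_summary - verified  # claims with no evidence behind them
missed = verified - ai_summary       # real events the summary left out

print(f"precision={precision:.2f} recall={recall:.2f}")
print("unsupported:", sorted(unsupported))
print("missed:", sorted(missed))
```

Low precision points to invented events, while low recall points to real context that was dropped. Both matter, which is why a single "accuracy" figure from a vendor is not enough.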

4. Ease of adoption and Slack-native workflows

If the tool requires leaving Slack or learning a complex UI during a P1, engineers will bypass it and your data will be incomplete.

What "Slack-native" actually means:

- Slash commands for all actions: You declare incidents with /inc declare, assign roles with /inc assign, escalate with /inc escalate, and resolve with /inc resolve. Engineers immediately prefer this approach over web-first tools because there's no new interface to learn.

- Conversational AI: Tag @incident in any incident channel and chat directly to draft updates, create follow-ups, pause incidents, and more. Any action you currently do via a command, you can do conversationally instead. The AI can also answer questions about the incident, connected alerts, and attachments.

- Automatic channel creation: When alerts fire, the platform auto-creates incident channels, invites owners by service, and sets topics with status and links. No manual channel creation means response starts in under 2 minutes instead of 12.

- Minimal context switching: The entire incident management process happens in Slack with slash commands, eliminating the cognitive load of remembering which tool does what at 2 AM.

One user captured the adoption benefit perfectly:

"My favourite thing about the product is how it lets you start somewhere simple, with a focus on helping you run incident response through Slack... I'm excited about all the new insights features they're building." - Chris S. on G2

Why web-first design fails: Web portals force you to toggle between multiple tools during high-stress moments: alert system for triggers, monitoring tools for metrics, chat for coordination, ticketing for follow-ups, wiki for documentation. Each context switch adds cognitive load when you can least afford it.

PagerDuty was designed as a web-first platform, with Slack integration added later. Platforms built around chat from day one let engineers manage incidents without leaving their primary coordination tool.

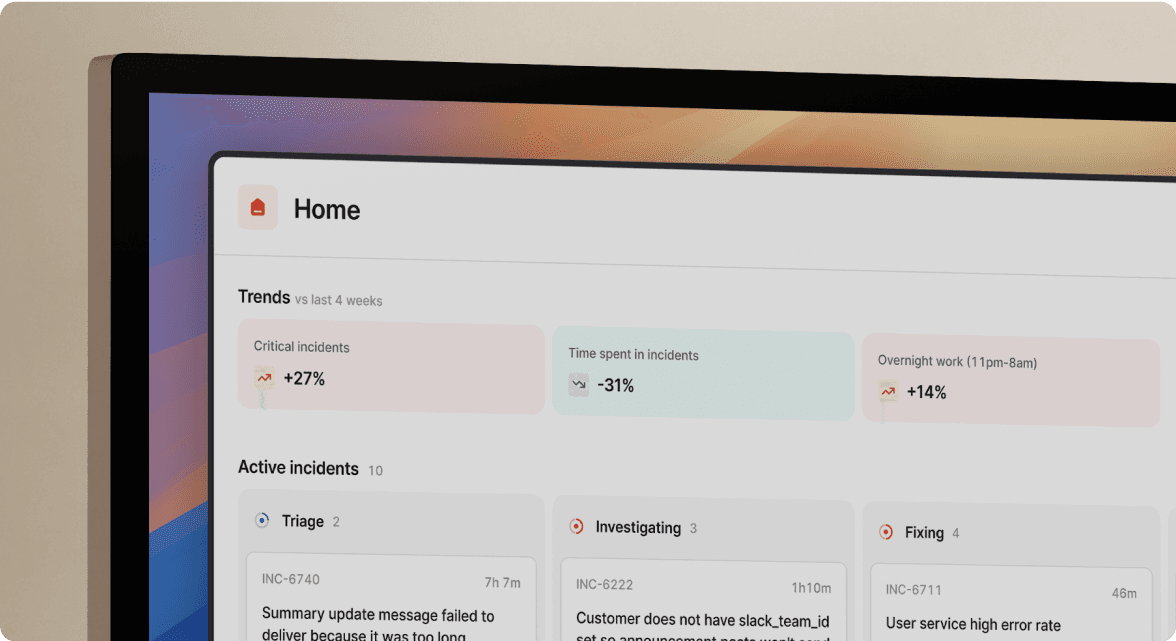

5. Analytics that prove reliability ROI

Your VP of Engineering asks "Are we getting better at database incidents?" and you spend 4 hours manually exporting Jira tickets and building a spreadsheet. Analytics should answer this question in 30 seconds.

Essential metrics to track:

MTTR trends: Incident Analytics should provide insights into the efficiency and performance of your incident response process by allowing you to aggregate and analyze statistics from past incidents. Key metrics like time to resolution and customer impact should be trackable over time with clear trend lines showing improvement or regression.

Incident frequency by service/type: Tracking and visualization of incident management metrics including mean time to resolve and incident causes enables you to spot patterns. If database connection pool issues cause 35% of incidents, you can allocate budget for connection pool monitoring.

Cost of downtime calculations: Industry research shows downtime costs for small businesses range from $137 to $427 per minute (Pingdom, 2024), while enterprise averages run significantly higher. Your analytics should multiply incident duration by your actual cost per minute to show executives the business impact of reliability improvements.

Time reclaimed from automation: Show the hours saved when post-mortem completion drops dramatically. Multiply by loaded engineer cost of ~$150/hour (based on average SRE salary of $168,897/year per Glassdoor, 2026) to convert time savings into dollar savings.

Real customer impact: Favor's engineering team reduced MTTR by 37% after implementing automated incident coordination in Slack with incident.io.

"I would really highlight the customer-centricity of the team... 1-click post-mortem reports - this is a killer feature, time saving, that helps a lot to have relevant conversations around incidents (instead of spending time curating a timeline)." - Adrian M. on G2
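The MTTR-trend and incident-frequency metrics described in this section depend on structured incident records. As a rough sketch of the underlying queries, the example below assumes each incident carries a month, a category, and a time to resolve; the record layout and the sample numbers are invented for illustration.

```python
from collections import Counter, defaultdict
from statistics import mean

# Hypothetical structured incident records (the data a platform would capture).
incidents = [
    {"month": "2025-11", "category": "database", "minutes_to_resolve": 90},
    {"month": "2025-11", "category": "network", "minutes_to_resolve": 30},
    {"month": "2025-12", "category": "database", "minutes_to_resolve": 60},
    {"month": "2025-12", "category": "database", "minutes_to_resolve": 40},
    {"month": "2026-01", "category": "deploy", "minutes_to_resolve": 20},
    {"month": "2026-01", "category": "database", "minutes_to_resolve": 30},
]


def mttr_by_month(records, category):
    """Mean time to resolve per month for one incident category."""
    durations = defaultdict(list)
    for record in records:
        if record["category"] == category:
            durations[record["month"]].append(record["minutes_to_resolve"])
    return {month: mean(values) for month, values in sorted(durations.items())}


def share_by_category(records):
    """Fraction of all incidents that falls into each category."""
    counts = Counter(record["category"] for record in records)
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}


database_mttr = mttr_by_month(incidents, "database")
shares = share_by_category(incidents)

print("Database MTTR by month (minutes):", database_mttr)
print("Incident share by category:", shares)
```

With records like these, "Are we getting better at database incidents?" becomes a two-line query. Without the structured fields, it stays a spreadsheet exercise.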

Red flags: Signs a tool won't scale with you

Hidden pricing: When "Contact Sales" gates basic features like SSO or on-call scheduling, you're looking at unpredictable costs. Transparent pricing provides cost predictability for budgeting. Ask: What's the all-in cost including the features I actually need?

Heavy configuration requirements: When vendors emphasize "implementation consulting" as necessary rather than optional, or multi-week onboarding processes are standard, you're looking at delayed time-to-value. Ask vendors during trials: How long until our first real incident runs through your platform? If the answer is "after our 3-week onboarding program," that's your warning.

Web-first design forcing context switching: Platforms that require opening a web portal during incidents add cognitive load when engineers are maxed out troubleshooting production. The coordination work should happen where your team already works. If you're being told "just open this dashboard in another tab," that's a red flag.

Vague AI claims without metrics: "Revolutionary AI solves incidents automatically" without precision/recall numbers or customer validation means you're looking at marketing hype rather than proven capability. Ask for specific metrics and customer case studies showing AI performance in production.

Post-mortem tool comparison checklist

Use this framework when evaluating platforms:

| Criterion | Questions to ask | Good answer | Red flag |
|---|---|---|---|
| Timeline automation | How do you capture timeline data? | Auto-capture from Slack, integrations, status changes | Manual pinning or copy-paste required |
| Integration depth | Show me alert-to-post-mortem flow | Bi-directional sync, automatic data pull | One-way webhooks, manual updates |
| AI capabilities | What's your AI precision/recall? | Specific metrics, customer validation | "AI-powered" with no metrics |
| Adoption path | How long until operational? | Days not weeks, Slack-native commands | Heavy training required |
| Analytics | Show me MTTR trends | Real-time dashboards, exportable data | Static reports, manual analysis |
| Compliance | SOC 2? Audit trails? | SOC 2 Type II, immutable logs | "In progress" or vague answers |
| Pricing | All-in cost with features I need? | Transparent published pricing | "Contact sales" for essentials |
| Support | Response time for production issues? | Shared Slack channel, <2hr response | Email-only, "2 business days" |

Testing approach: Run 2-3 real incidents through the platform during trial. Measure assembly time, documentation time, and team adoption. If engineers naturally use it without training, that's your signal. If you're constantly explaining how to use it, that's your warning.

Making the business case to leadership

Finance and engineering leadership need ROI in dollars, not just "faster incidents."

ROI calculation template:

(Time you save per incident) × (Number of incidents per month) × (Average hourly engineering cost) + (Value of downtime reduction)

Worked example for a team handling 20 incidents/month:

Engineering time savings:

- Assume automation saves 60 minutes per incident on post-mortem work

- Incidents per month: 20

- Fully-loaded engineering cost: ~$150/hour (based on average SRE salary of $168,897/year per Glassdoor, 2026)

- Monthly savings: 60 min ÷ 60 × 20 × $150 = $3,000/month

- Annual savings: $36,000

Downtime reduction value:

- If the platform cuts total downtime by just 10 minutes per month: 10 min × $427/min = $4,270/month (using the upper-bound per-minute cost for a small business)

- Annual downtime savings: $51,240

Total ROI: $36,000 + $51,240 = $87,240 annually
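To rerun this calculation with your own numbers, the template can be expressed as a small function. This is a sketch of the arithmetic in the worked example only; the function and parameter names are illustrative, and the inputs below are the same assumed figures used above.

```python
def annual_roi(minutes_saved_per_incident, incidents_per_month,
               hourly_engineering_cost, downtime_minutes_avoided_per_month,
               downtime_cost_per_minute):
    """Annualize the ROI template: engineering time saved plus downtime avoided."""
    monthly_time_savings = (
        minutes_saved_per_incident / 60
        * incidents_per_month
        * hourly_engineering_cost
    )
    monthly_downtime_savings = (
        downtime_minutes_avoided_per_month * downtime_cost_per_minute
    )
    return {
        "engineering_time": monthly_time_savings * 12,
        "downtime": monthly_downtime_savings * 12,
        "total": (monthly_time_savings + monthly_downtime_savings) * 12,
    }


# Inputs from the worked example; replace them with your own figures.
roi = annual_roi(
    minutes_saved_per_incident=60,
    incidents_per_month=20,
    hourly_engineering_cost=150,
    downtime_minutes_avoided_per_month=10,
    downtime_cost_per_minute=427,
)

for label, dollars in roi.items():
    print(f"{label}: ${dollars:,.0f}")
```

Subtract the platform's annual cost from the total to get a net figure, and consider running the function with conservative and optimistic inputs so leadership sees a range rather than a single number.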

When you show executives $87,240 in annual savings against platform costs, the approval conversation gets short. The business case is straightforward: coordination overhead eats significant time per incident, and automation reclaims that time for proactive reliability work. SOC 2 compliance requires documented incident response with timestamped logs guaranteeing a traceable audit trail, so the platform delivers both efficiency gains and compliance value.

"Incident has transformed our incident response to be calm and deliberate. It also ensures that we do proper post-mortems and complete our repair items." - Mike H. on G2

Stop writing post-mortems from scratch

The difference between good and great post-mortem software comes down to one question: Does it automate the collection of context during the incident, or does it just give you a prettier place to write after the fact?

Manual Google Docs fail because they ask engineers to do something unnatural: perfectly remember a high-stress event days later. Modern platforms record the "black box" data automatically, turning post-mortems from a writing assignment into an analysis exercise.

Start by running a pilot: Schedule a demo to see how incident.io automates post-mortem creation during a real incident. We'll walk through timeline capture, AI summarization, and analytics in your Slack workspace, then run 2-3 real incidents through the platform. Measure your current post-mortem completion time, then measure with automation. Track MTTR, team adoption, and how much context you capture compared to your current process. If you can't demonstrate measurable improvement in 30 days, the platform isn't delivering on its promise.

Your engineers shouldn't spend more time writing about incidents than fixing them. Automation makes the difference.

Key terminology

MTTR (Mean Time To Resolution): The average time from when an incident starts to when it's fully resolved and services are restored. Tracking MTTR trends shows whether your incident response process is improving or degrading over time.

Root Cause Analysis (RCA): The process of identifying the fundamental cause of an incident rather than just addressing symptoms. Effective RCA prevents recurrence by fixing underlying system issues.

Blameless Post-mortem: A culture and process focusing on systems and processes rather than individual mistakes. The goal is learning and improvement, not assigning fault, which encourages honest documentation and prevents defensive behavior.

Timeline Capture: The automated process of capturing all events, decisions, and actions during an incident with precise timestamps as they happen. High-quality timeline capture eliminates manual reconstruction and forms the foundation of effective post-mortems.

Incident Commander (IC): The person responsible for coordinating incident response, making decisions, and ensuring effective communication. The IC role should be clearly assigned and documented in your post-mortem process.
