Best incident postmortem software for platform engineering teams in 2026

Updated February 16, 2026

TL;DR: If you're managing microservices on a platform team, you need automated post-mortem tools that capture context in real-time, not templates that rely on memory. Manual reconstruction from Slack threads, Datadog logs, and PagerDuty alerts takes 60-90 minutes per incident and loses critical context about decision rationale, failed investigation paths, and exact timing. We built incident.io to automate timeline capture directly in Slack, using AI to draft post-mortems before you start editing. For teams running 15-20 incidents monthly, that's 18+ hours reclaimed per month. PagerDuty excels at alerting but treats post-mortems as an afterthought. Jira and Confluence work as documentation storage but can't capture what happens during incidents.

When a Kubernetes cluster upgrade cascades latency across 15 microservices, the incident doesn't stay within one team's boundary. The platform team pages the database SRE. The database team escalates to networking. Three Slack channels spin up. Engineers share Datadog graphs in one thread while discussing rollback strategies in another. Someone mentions a suspicious deploy in a third channel that turns out to be the root cause.

The Google SRE book reports that dealing with an incident, including root-cause analysis, remediation, writing the post-mortem, and fixing the bug, takes an average of 6 hours. Of that, the post-mortem writing phase alone consumes 60-90 minutes of manual reconstruction per incident.

The problem gets worse with platform complexity. Standard tools like Google Docs or Confluence templates rely on human memory to reconstruct timelines. Three days after resolution, when you finally sit down to write the post-mortem, critical details are easily missed, the sequence of events can get muddled, and the resulting analysis is often incomplete. You spend 90 minutes scrolling through Slack logs, correlating timestamps across Datadog and PagerDuty, and interviewing responders to fill gaps.

"Excellent post-mortem workflow that guides you through the steps from start to finish in checklist form... Everything can be done from Slack." - Verified user on G2

What gets lost in this archaeology exercise? Decision rationale for why you chose option A over option B. Dead-end investigation paths that rule out common failure modes. Exact timing of who did what when. Critical context gets lost when conversations happen across video calls, chat threads, and dashboards with no unified documentation.

Core requirements for platform-grade post-mortem software

You need more than a fancy template. Your post-mortem software should treat the analysis as a data artifact to generate, not a document to write from memory.

Automated timeline construction across microservices

Your tool needs to ingest alerts from Datadog, chat logs from Slack, actions from GitHub, and PagerDuty escalations into a single chronological timeline without manual correlation. The best platforms use integrated scheduling and routing to map alerts to services, services to owners, and ownership to schedules through a live service catalog.

Why this matters: When an incident spans 8 microservices and involves 12 engineers across 4 teams, no human can accurately reconstruct the sequence from memory. You need automated capture as events happen, timestamped to the second.
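The merge step can be illustrated with a minimal Python sketch. It assumes each integration has already been normalized into a common event shape with a UTC timestamp. The `TimelineEvent` class, the `merge_timelines` function, and the sample events are hypothetical and are not incident.io's implementation or any vendor's API.

```python
import heapq
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class TimelineEvent:
    at: datetime   # when the event happened (UTC)
    source: str    # e.g. "datadog", "slack", "github", "pagerduty"
    summary: str   # human-readable description


def merge_timelines(*streams: list[TimelineEvent]) -> list[TimelineEvent]:
    """Merge per-source event lists into one chronological timeline.

    Each stream is sorted defensively (sources can deliver out of order),
    then heapq.merge interleaves the sorted streams in a single pass.
    """
    sorted_streams = [sorted(s, key=lambda e: e.at) for s in streams]
    return list(heapq.merge(*sorted_streams, key=lambda e: e.at))


def ts(hh: int, mm: int, ss: int) -> datetime:
    """Helper for building sample timestamps on one (hypothetical) day."""
    return datetime(2026, 2, 16, hh, mm, ss, tzinfo=timezone.utc)


# Hypothetical sample events from four sources.
datadog = [TimelineEvent(ts(14, 2, 11), "datadog", "p99 latency alert on checkout-api")]
pagerduty = [TimelineEvent(ts(14, 2, 40), "pagerduty", "Paged platform on-call")]
github = [TimelineEvent(ts(13, 58, 3), "github", "Deploy of auth-service merged")]
slack = [
    TimelineEvent(ts(14, 9, 15), "slack", "Escalated to database SRE"),
    TimelineEvent(ts(14, 5, 2), "slack", "Incident channel created"),
]

for event in merge_timelines(datadog, pagerduty, github, slack):
    print(event.at.strftime("%H:%M:%S"), event.source, event.summary)
```

Because every event carries its own timestamp, the suspicious deploy from GitHub lands first in the merged timeline even though nobody mentioned it in Slack until later.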

Cross-team dependency mapping and context

Platform incidents rarely respect service boundaries. Your post-mortem software should integrate with your service catalog to answer "Who owns the authentication service that's blocking checkout?" instantly during the incident, not during the write-up three days later.
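As a rough illustration of answering "who owns this?" from a catalog, here is a minimal Python sketch that walks a service's dependencies and returns the on-call handle for each. The `Service` class, the `CATALOG` entries, and the handles are hypothetical; a real service catalog would be queried through its own API.

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    name: str
    owner_team: str
    on_call: str                                          # current on-call handle for the owning team
    depends_on: list[str] = field(default_factory=list)  # names of upstream services


# Hypothetical catalog entries for illustration only.
CATALOG = {
    "checkout": Service("checkout", "payments", "@priya", ["auth", "orders-db"]),
    "auth": Service("auth", "identity", "@sam", ["users-db"]),
    "orders-db": Service("orders-db", "database-sre", "@lee"),
    "users-db": Service("users-db", "database-sre", "@lee"),
}


def owners_for(service_name: str) -> dict[str, str]:
    """Return {service: on-call handle} for a service and everything it depends on.

    Walks the dependency graph depth-first; already-visited services are skipped,
    so shared dependencies (and cycles) are reported once. Unknown names are ignored.
    """
    result: dict[str, str] = {}
    stack = [service_name]
    while stack:
        name = stack.pop()
        if name in result or name not in CATALOG:
            continue
        svc = CATALOG[name]
        result[name] = svc.on_call
        stack.extend(svc.depends_on)
    return result


print(owners_for("auth"))  # {'auth': '@sam', 'users-db': '@lee'}
```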

Real-time timeline capture, which automatically records incident events, decisions, and role changes as they happen, eliminates manual note-taking and ensures complete documentation. When an engineer types /inc assign @sarah-devops in Slack, that role change needs to be preserved along with its timestamp and context.

"Incident provides a great user experience when it comes to incident management. The app helps the incident lead when it comes to assigning tasks and following them up." - Verified user on G2

Blameless culture enforcement via tooling

Google's SRE guidance emphasizes that a blameless post-mortem should call out where and how services can be improved, focusing on system weaknesses rather than individual actions. Look for tools that prompt for "contributing factors" instead of singular "root cause" to reinforce systems thinking over blame assignment.

Platform engineering is about distributed systems, not human error. Your tooling structure should make blameless analysis the default path, not something teams have to remember to practice.
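One way to make blameless analysis the default is to bake it into the record's schema. This minimal Python sketch uses a list of contributing factors, each tied to a system area, instead of a single root-cause field. The class names, fields, and `missing_sections` helper are hypothetical and not taken from any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class ContributingFactor:
    description: str   # what about the system allowed this to happen
    system_area: str   # e.g. "deploy pipeline", "alerting", "capacity"


@dataclass
class PostMortem:
    title: str
    summary: str = ""
    # A list rather than a single root_cause field: the structure itself
    # nudges authors toward several systemic causes instead of one culprit.
    contributing_factors: list[ContributingFactor] = field(default_factory=list)
    follow_ups: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the sections the author still needs to fill in."""
        missing = []
        if not self.summary:
            missing.append("summary")
        if not self.contributing_factors:
            missing.append("contributing_factors")
        if not self.follow_ups:
            missing.append("follow_ups")
        return missing


pm = PostMortem(title="Checkout latency after cluster upgrade")
pm.contributing_factors.append(
    ContributingFactor("Upgrade rolled out to all node pools at once", "deploy pipeline")
)
print(pm.missing_sections())  # ['summary', 'follow_ups']
```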

Top incident post-mortem software for platform teams

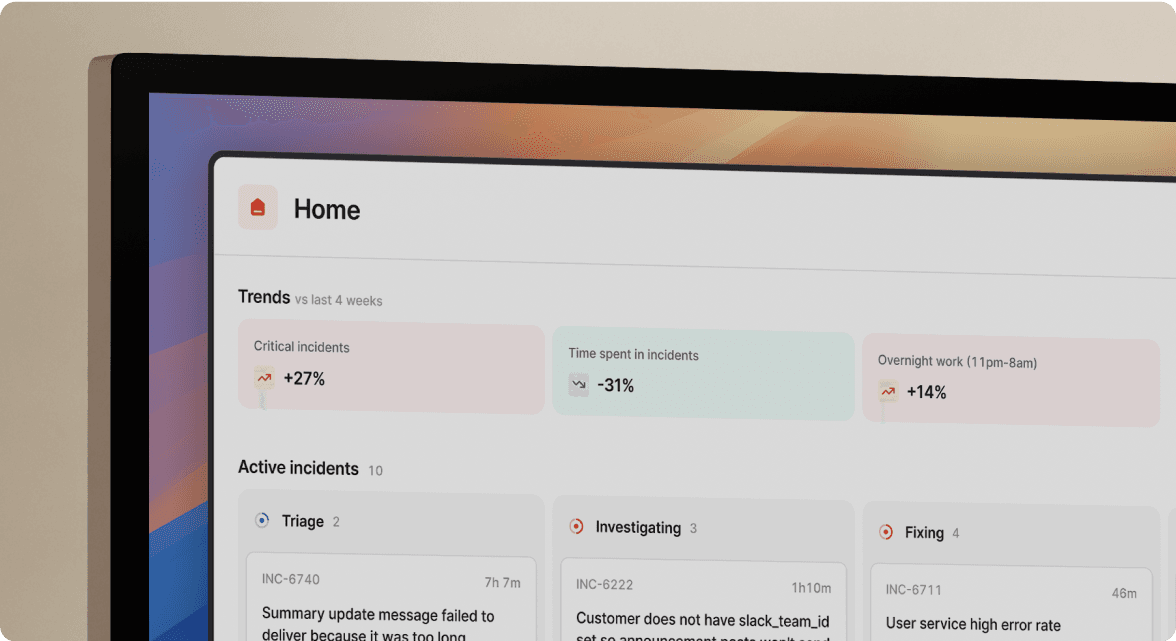

incident.io: Best for Slack-native automation and AI

We handle the complete lifecycle from on-call scheduling through post-mortem generation, all inside Slack where your team already coordinates. Our platform auto-creates incident channels, captures every /inc command and conversation, and uses AI to draft post-mortems before you start editing.

Key automation features:

When a Datadog alert fires, we create #inc-2847-api-latency, page the on-call engineer, pull in service owners from the catalog, and start recording the timeline automatically. Engineers manage everything with slash commands such as /inc escalate @database-team, /inc severity critical, and /inc resolve. Each action is captured with its timestamp and context.
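The general pattern of turning chat commands into timeline entries can be sketched in a few lines of Python. This is an illustrative sketch, not incident.io's implementation: the `/inc <action> [argument]` grammar, the `TimelineEntry` shape, and the `record_command` helper are assumptions made for the example.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical grammar for "/inc <action> [argument]" messages, covering the
# commands mentioned above. Real products define their own commands and parsing.
COMMAND_RE = re.compile(r"^/inc\s+(?P<action>\w+)(?:\s+(?P<arg>.+))?$")


@dataclass(frozen=True)
class TimelineEntry:
    at: datetime          # when the command was issued (UTC)
    actor: str            # who issued it, e.g. a Slack handle
    action: str           # "assign", "escalate", "severity", "resolve", ...
    arg: Optional[str]    # remainder of the command, if any


def record_command(text: str, actor: str, timeline: list[TimelineEntry],
                   now: Optional[datetime] = None) -> Optional[TimelineEntry]:
    """Parse an /inc command and append a timestamped entry to `timeline`.

    Ordinary chat messages return None and leave the timeline unchanged.
    """
    match = COMMAND_RE.match(text.strip())
    if match is None:
        return None
    entry = TimelineEntry(
        at=now if now is not None else datetime.now(timezone.utc),
        actor=actor,
        action=match.group("action"),
        arg=match.group("arg"),
    )
    timeline.append(entry)
    return entry


timeline: list[TimelineEntry] = []
record_command("/inc escalate @database-team", "@alex", timeline)
record_command("/inc severity critical", "@alex", timeline)
record_command("looks like the replica is lagging", "@sam", timeline)  # not a command
record_command("/inc resolve", "@alex", timeline)
for e in timeline:
    print(e.at.isoformat(timespec="seconds"), e.actor, e.action, e.arg)
```

Recording at the moment the command is parsed is what makes the timeline accurate to the second: nothing is reconstructed later.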

Our AI SRE quickly drafts post-mortems including timeline, contributing factors, and follow-ups. Scribe transcribes incident calls, highlights key decisions, and flags root causes mentioned during live discussions. The AI spots the likely pull request behind the incident so engineers can review changes without leaving Slack.

"The customization of incident.io is fantastic... I'm a big fan of their blog and read most of their engineering articles with great interest." - Nathael A on G2

Export and integration:

Export post-mortems directly to Confluence or Notion with rich formatting preserved. Follow-up Jira tickets auto-create with timeline context flowing into task descriptions. You can configure different destinations for different services.
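Per-service export destinations amount to a routing table with a fallback. The minimal Python sketch below shows that idea; the `EXPORT_ROUTES` mapping, space names, and `export_targets` function are hypothetical, and in practice this configuration lives in the product's settings rather than in code.

```python
# Hypothetical routing table: which knowledge base and space each service's
# post-mortems are exported to. Names are illustrative only.
EXPORT_ROUTES = {
    "payments-api": {"destination": "confluence", "space": "PAY"},
    "auth-service": {"destination": "notion", "space": "Identity Postmortems"},
}
DEFAULT_ROUTE = {"destination": "confluence", "space": "PLATFORM"}


def export_targets(affected_services: list[str]) -> list[dict]:
    """Return the distinct export routes for the services an incident touched,
    falling back to DEFAULT_ROUTE for services with no explicit mapping."""
    targets: list[dict] = []
    for service in affected_services:
        route = EXPORT_ROUTES.get(service, DEFAULT_ROUTE)
        if route not in targets:  # dicts aren't hashable, so de-duplicate by equality
            targets.append(route)
    return targets


print(export_targets(["payments-api", "auth-service", "search"]))
```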

Pricing: Pro plan at $25/user/month for incident response plus $20/user/month for on-call ($45/user/month total). Enterprise plan with custom pricing includes SAML/SCIM, dedicated CSM, and sandbox environments.

PagerDuty: Best for enterprise alerting-heavy workflows

PagerDuty built its reputation on alerting infrastructure with 700+ integrations and proven reliability. If you're already invested in their ecosystem, the alerting layer is battle-tested.

However, post-mortem features are often add-ons rather than core capabilities. The UI is web-first rather than chat-native, so coordination still happens in unstructured Slack channels requiring manual documentation. If you're locked into PagerDuty for alerting, consider pairing it with incident.io for coordination and post-mortems to get best-of-breed in both areas.

FireHydrant: Best for service catalog integration

FireHydrant takes a service catalog-centric approach, emphasizing infrastructure mapping and service relationships to understand incident impact and blast radius. The platform puts a strong focus on service dependencies and runbooks.

The philosophical difference: FireHydrant starts with the infrastructure map to understand impact, while we start with the human conversation in Slack to capture the narrative as it unfolds in real-time. For teams where service ownership clarity is the primary pain point, FireHydrant's catalog-first design offers value, though you should compare support velocity and AI maturity carefully.

Atlassian (Jira/Confluence): Best for existing ecosystem lock-in

Every engineering organization already has Jira for tickets and Confluence for docs. Atlassian provides post-mortem templates and features across Jira Service Management, Opsgenie, and Confluence, making their ecosystem a common storage destination for many teams.

The fundamental limitation: Jira and Confluence are destinations for data, not sources. They don't capture the "during" phase of incidents. Teams still spend 60-90 minutes manually reconstructing post-mortems, copying context from Slack threads and correlating timestamps across tools.

"Without incident.io our incident response culture would be caustic, and our process would be chaos. It empowers anybody to raise an incident - and helps us quickly coordinate any response across technical, operational and support teams." - Matt B. on G2

How to automate the post-mortem lifecycle

Replace the manual 90-minute reconstruction process with a three-step automated workflow.

Step 1: Capture context during the incident

You need a tool that sits in your war room, which for most teams is Slack. Don't designate a scribe who stops troubleshooting to take notes. Use slash commands to mark key moments as they happen.

Our @incident natural language interface lets you tag @incident in any incident channel to draft updates, create follow-ups, and answer questions about connected alerts. Ask "@incident pause this til monday" or "@incident draft an update for the summary to reflect the current state." We record each action with timestamp and context.

Scribe joins your incident calls through Google Meet or Zoom, transcribing discussions and extracting key decisions automatically. When someone mentions "rolled back deploy abc123," Scribe flags it in the timeline without anyone stopping to type notes.
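To show the shape of the output (transcript lines promoted to timeline entries), here is a deliberately simplified Python sketch that flags decisions with regular expressions. This is a stand-in technique, not how Scribe works: a production transcriber would use a language model rather than a fixed pattern list, and the `DECISION_PATTERNS` list, `flag_decisions` function, and transcript rows are hypothetical.

```python
import re

# Hypothetical phrase patterns. A production transcriber would rely on a
# language model rather than a fixed list like this.
DECISION_PATTERNS = [
    re.compile(r"\brolled back (?:deploy )?(?P<ref>[0-9a-f]{6,40})\b", re.IGNORECASE),
    re.compile(r"\b(?:we'll|we will|let's) (?P<ref>roll back|fail over|scale up)\b",
               re.IGNORECASE),
]


def flag_decisions(transcript: list[tuple[str, str, str]]) -> list[dict]:
    """Return the (timestamp, speaker, utterance) rows that look like key decisions."""
    flagged = []
    for at, speaker, text in transcript:
        for pattern in DECISION_PATTERNS:
            match = pattern.search(text)
            if match:
                flagged.append({"at": at, "speaker": speaker,
                                "phrase": match.group(0), "ref": match.group("ref")})
                break  # one flag per utterance is enough
    return flagged


transcript = [
    ("14:06:10", "@priya", "Latency is still climbing on checkout"),
    ("14:07:45", "@sam", "I think we should look at the auth deploy"),
    ("14:09:02", "@sam", "OK, rolled back deploy abc123"),
    ("14:12:30", "@lee", "Let's fail over the read replica too"),
]
for flag in flag_decisions(transcript):
    print(flag["at"], flag["speaker"], flag["phrase"])
```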

Step 2: Auto-generate the draft with AI

The goal is to edit, not write. Turn a blank page into a review task. When you type /inc resolve, our AI SRE analyzes root cause, recommends actions, and connects the dots between code changes, alerts, and past incidents to quickly uncover what went wrong and why it happened. The system generates a post-mortem draft including incident summary, chronological timeline, contributing factors, and suggested follow-up items.

Engineers spend 10-15 minutes reviewing and refining instead of 90 minutes writing from scratch. The timeline is accurate to the second because it was captured in real-time, not reconstructed from memory. Decision rationale is preserved because Scribe transcribed the call where options were discussed.
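The AI drafting itself is product-specific, but the underlying idea of turning captured data into a pre-filled document can be shown with a plain template. This minimal Python sketch is deterministic (no AI involved); the `draft_postmortem` function, its section headings, and its input shapes are hypothetical.

```python
from datetime import datetime


def draft_postmortem(title: str, events: list[tuple[datetime, str]],
                     factors: list[str], follow_ups: list[str]) -> str:
    """Assemble a pre-filled Markdown post-mortem from already-captured data.

    Assumes at least one event and that all timestamps share one timezone.
    """
    events = sorted(events)  # chronological order (tuples sort by timestamp first)
    start, end = events[0][0], events[-1][0]
    duration_min = int((end - start).total_seconds() // 60)
    lines = [
        f"# {title}",
        "",
        "## Summary",
        f"Incident lasted {duration_min} minutes ({start:%H:%M} to {end:%H:%M}).",
        "",
        "## Timeline",
        *(f"- {at:%H:%M:%S} {text}" for at, text in events),
        "",
        "## Contributing factors",
        *(f"- {factor}" for factor in factors),
        "",
        "## Follow-ups",
        *(f"- [ ] {item}" for item in follow_ups),
    ]
    return "\n".join(lines)


events = [
    (datetime(2026, 2, 16, 14, 2, 11), "Datadog: p99 latency alert on checkout-api"),
    (datetime(2026, 2, 16, 13, 58, 3), "GitHub: auth-service deploy merged"),
    (datetime(2026, 2, 16, 14, 40, 0), "/inc resolve"),
]
print(draft_postmortem("Checkout latency after auth-service deploy", events,
                       ["Deploy pipeline had no canary stage"],
                       ["Add a canary stage to the auth-service pipeline"]))
```

Every section arrives filled in, so the author's job is to correct and enrich it rather than start from a blank page.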

Step 3: Export to long-term storage

Push the finalized learning to Confluence or Notion with one click. We ensure follow-up actions tracked in Jira are linked so incident context flows into task descriptions and task completion updates the post-mortem status. The export maintains formatting, embedded charts, and metadata like severity and incident lead. Post-mortems become searchable in your existing knowledge base, making patterns visible across incidents.
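The link between follow-up tickets and post-mortem status can be reduced to a small derivation. This minimal Python sketch assumes the ticket statuses have already been fetched from the tracker (fetching is not shown); the `FollowUpStatus` values, ticket keys, and `postmortem_status` function are hypothetical and not incident.io's or Jira's logic.

```python
from enum import Enum


class FollowUpStatus(Enum):
    OPEN = "open"
    IN_PROGRESS = "in_progress"
    DONE = "done"
    WONT_DO = "wont_do"


def postmortem_status(follow_ups: dict[str, FollowUpStatus]) -> str:
    """Derive a post-mortem's status from its linked follow-up tickets.

    Keys are ticket IDs (e.g. Jira issue keys); values are their statuses.
    DONE and WONT_DO both count as closed.
    """
    if not follow_ups:
        return "no follow-ups"
    closed = {FollowUpStatus.DONE, FollowUpStatus.WONT_DO}
    remaining = [key for key, status in follow_ups.items() if status not in closed]
    if not remaining:
        return "complete"
    return (f"{len(remaining)} of {len(follow_ups)} follow-ups outstanding: "
            + ", ".join(sorted(remaining)))


print(postmortem_status({
    "PLAT-101": FollowUpStatus.DONE,
    "PLAT-102": FollowUpStatus.IN_PROGRESS,
    "PLAT-103": FollowUpStatus.OPEN,
}))  # 2 of 3 follow-ups outstanding: PLAT-102, PLAT-103
```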

"incident.io provides a one stop shop for all the orcestrations involved when managing an incident. Since implementing incident.io we have been able to manage all elements of an incident in a single place due to the integrations that the product offers, hugely improving our communication capabilities and response times." - Kay C. on G2

Manual vs. automated comparison

| Activity | Manual Time | Automated Time |
|---|---|---|
| Assemble timeline from Slack, Datadog, PagerDuty | 45-60 min | Almost instant (captured automatically) |
| Write incident summary and context | 15-20 min | 2-3 min (AI drafts, you edit) |
| Document decisions and dead-ends | 10-15 min | 0 min (Scribe transcribes calls) |
| Create follow-up Jira tickets | 5-10 min | 1 min (auto-created with context) |
| Total per incident | 75-105 min | 3-4 min |

Measuring the ROI of automated post-mortems

Time savings: For teams handling 15 incidents monthly, reducing post-mortem time from 90 minutes to 15 minutes saves 18.75 hours per month.

| Metric | Calculation | Monthly Savings | Annual Savings |
|---|---|---|---|
| Incidents per month | 15 incidents | - | - |
| Time saved per incident | 75 minutes | 18.75 hours | 225 hours |
| Engineer loaded cost | $150/hour | - | - |
| Total value reclaimed | 15 × 75 min × $150/hr | $2,812.50 | $33,750 |
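The table's arithmetic can be reproduced with a short Python function, which makes it easy to plug in your own incident volume and costs. The function name and parameters are illustrative; the inputs below are the figures used in this article.

```python
def postmortem_roi(incidents_per_month: int, manual_min: float,
                   automated_min: float, loaded_cost_per_hour: float) -> dict[str, float]:
    """Time and cost reclaimed per month and per year by shortening post-mortems."""
    saved_min_per_incident = manual_min - automated_min
    monthly_hours = incidents_per_month * saved_min_per_incident / 60
    return {
        "saved_min_per_incident": saved_min_per_incident,
        "monthly_hours": monthly_hours,
        "annual_hours": monthly_hours * 12,
        "monthly_value": monthly_hours * loaded_cost_per_hour,
        "annual_value": monthly_hours * 12 * loaded_cost_per_hour,
    }


# 15 incidents, 90 min manual vs. 15 min automated, $150/hour loaded cost:
# 18.75 hours and $2,812.50 per month; 225 hours and $33,750 per year.
print(postmortem_roi(15, manual_min=90, automated_min=15, loaded_cost_per_hour=150))
```

Plugging in 20 incidents per month gives 25 hours, so the 15-20 incident range works out to roughly 18.75-25 hours reclaimed per month.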

MTTR reduction: Better documentation leads to faster resolution of similar future incidents. Lowe's reduced MTTR by 82% through a streamlined workflow from alerting to post-mortems. Autodesk cut MTTR by 85% with event correlation. We've seen purpose-built platforms reduce MTTR by up to 80% through Slack-native coordination and automated post-mortems.
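To check a percentage like these against your own data, compute MTTR before and after a change and compare. This minimal Python sketch uses the definition from this article (average time from detection to restoration); the function names are illustrative, and the sample incidents are made-up numbers, not Lowe's or Autodesk's data.

```python
from datetime import datetime, timedelta


def mttr(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Mean time to resolution: the average of (resolved - detected) over incidents."""
    if not incidents:
        raise ValueError("need at least one incident")
    total = sum((resolved - detected for detected, resolved in incidents), timedelta())
    return total / len(incidents)


def percent_reduction(before: timedelta, after: timedelta) -> float:
    """How much smaller `after` is than `before`, as a percentage of `before`."""
    return (before - after) / before * 100


# Made-up incidents: (detected, resolved) pairs.
d = datetime(2026, 1, 5, 9, 0)
before = mttr([(d, d + timedelta(minutes=120)), (d, d + timedelta(minutes=80))])  # 100 min
after = mttr([(d, d + timedelta(minutes=20)), (d, d + timedelta(minutes=16))])    # 18 min
print(f"{percent_reduction(before, after):.0f}% MTTR reduction")  # 82% MTTR reduction
```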

On-call health: New engineers onboard faster because past incidents are actually readable and searchable. Instead of asking "has this happened before?" and getting vague answers, they search the post-mortem database and find three similar incidents with documented resolution steps. Watch how WorkOS gained confidence to declare more incidents with better tooling.

Summary

Platform engineering incidents break traditional post-mortem tools because complexity makes human memory unreliable. Manual reconstruction from scattered data sources takes 60-90 minutes per incident and loses critical context about decision rationale, timing, and dead-end investigation paths.

The solution is automated capture that treats post-mortems as data artifacts, not documents to write. We capture the complete timeline in Slack as incidents unfold, using AI to draft post-mortems before you start editing. For teams managing 15-20 incidents monthly, that's 18+ hours reclaimed per month and measurable MTTR reduction through better learning.

Ready to eliminate the post-mortem archaeology tax? Book a demo to see how AI SRE drafts post-mortems from real timeline data in minutes instead of hours.

Key terminology

MTTR: Mean Time To Resolution measures average time from incident detection to full restoration of service.

Slack-native: Tools built entirely within the Slack interface rather than web-first applications with Slack notifications bolted on.

Service catalog: Centralized registry mapping services to owners, dependencies, runbooks, and current health status for rapid incident context.

Blameless post-mortem: Root cause analysis focused on system weaknesses and process improvements rather than individual fault assignment, following Google's SRE practices for organizational learning.

Timeline capture: Automated recording of incident events, chat messages, role assignments, and tool actions as they occur, eliminating manual note-taking and memory-based reconstruction.

FAQs

See related articles

Engineering teams in 2027

A forward look at where engineering teams are heading with AI, based on conversations with design partners who are visibly six-to-twelve months ahead of the average. Tailored code agents, MCP gateways, agentic products that talk to each other — most of the picture is already there in pockets, and the rest of the industry is closing the gap fast.

Lawrence Jones

Lawrence Jones

incident.io launches PagerDuty Rescue Program

incident.io just launched the PagerDuty Rescue Program, making it easier than ever for engineering teams to ditch their decade-old on-call tooling. The program includes a contract buyout (up to a year free), AI-powered white glove migration, a 99.99% uptime SLA, and AI-first on-call that investigates alerts autonomously the moment they fire.

Tom Wentworth

Tom Wentworth

Humans aren’t fast enough for 4 9’s

Hitting 99.99% isn't a faster version of what you already do. It's a different problem to be solved: autonomous recovery, dependency ceilings, redundancies, and the discipline to build systems that buy you 15-30 minutes before you're needed at all.

Norberto Lopes

Norberto LopesSo good, you’ll break things on purpose

Ready for modern incident management? Book a call with one of our experts today.

We’d love to talk to you about

- All-in-one incident management

- Our unmatched speed of deployment

- Why we’re loved by users and easily adopted

- How we work for the whole organization