Best incident postmortem software for on-call engineers: The 2026 breakdown

Updated February 5, 2026

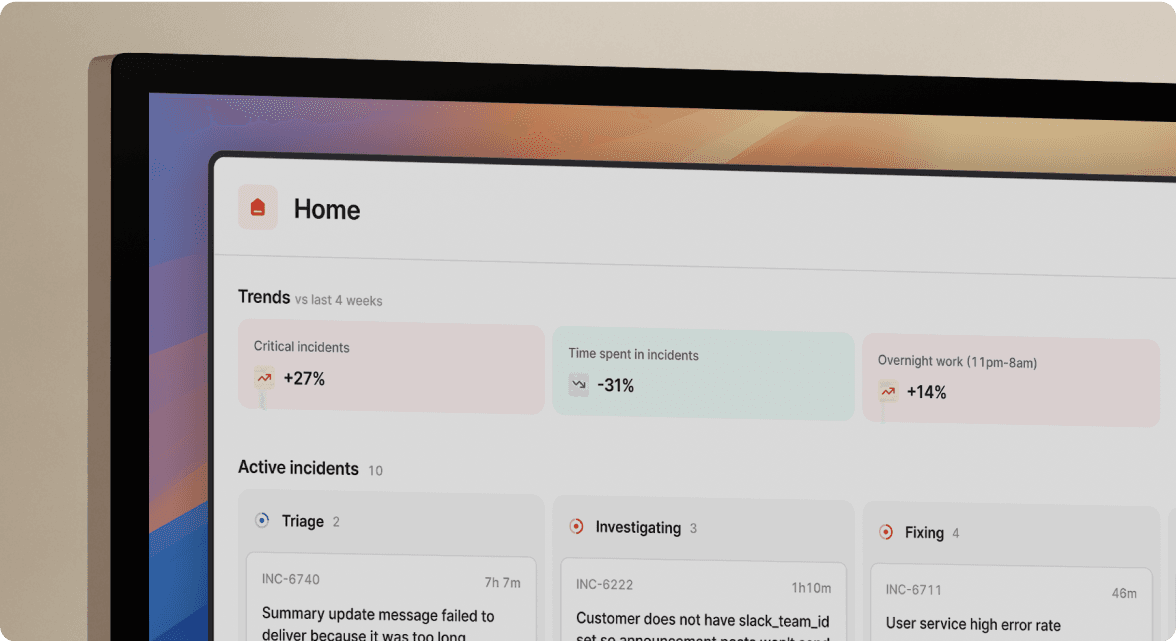

TL;DR: Manual postmortem reconstruction wastes 60-90 minutes per incident and loses critical timeline data. Effective postmortem software automates timeline capture during the incident itself, reducing documentation time by 75-85%. We built incident.io as a Slack-native platform that auto-captures every command, role assignment, and decision in real time, cutting postmortem work from 90 minutes to 15 minutes. PagerDuty scheduled its Postmortems feature for sunset in January 2026, while Opsgenie shuts down completely in April 2027. For SRE teams handling 15+ incidents monthly, automated timeline capture eliminates post-incident archaeology and lets engineers focus on learning, not paperwork.

Postmortem archaeology is burning out SRE teams. Manual reconstruction means scrolling through Slack threads, correlating PagerDuty timestamps, and piecing together what happened from memory days after an incident. Which deploy triggered the cascade? Who suggested the rollback? What time did the database team join? The timeline is fuzzy, details are missing, and documentation deadlines loom.

Manual postmortem reconstruction wastes 60-90 minutes per incident and still produces incomplete documentation. The solution isn't better documentation discipline. It's automated timeline capture that happens during the incident itself, not after.

Postmortem best practices and automation

Effective postmortems require accurate timelines and psychological safety. Most teams focus on the latter while ignoring that manual postmortem reconstruction leads to data loss and incomplete documentation. When engineers spend 90 minutes reconstructing events from memory, they're doing archaeology, not building a learning culture.

| Manual postmortems | Automated postmortems |
|---|---|
| 60-90 minutes reconstruction time | 10-15 minutes review time |
| Incomplete timeline (memory gaps) | Complete timeline (captured live) |
| High stress (designated note-taker) | Low stress (automatic logging) |
| Scattered across 5+ tools | Unified in incident platform |
| Published 3-5 days after incident | Published within 24 hours |

Core principles of effective postmortems

Blameless postmortems focus on systems, not people. The Google SRE book defines blamelessness as focusing on what went wrong with processes and tools rather than who made mistakes. This cultural foundation matters, but it falls apart when your timeline data is scattered across five tools.

Root cause analysis typically uses the 5 whys framework to drill down from symptoms to underlying causes. You ask "why did the API fail?" five times until you reach a systemic issue like "our connection pool monitoring alerts were disabled during the migration." This analysis only works when you have accurate incident data. If your timeline shows the database team joined at 3:15 AM when they actually joined at 2:58 AM, your root cause analysis starts on faulty assumptions.

The role of automation in post-incident review

Timeline automation captures incident data as events happen, not days later. Platforms like incident.io capture Slack messages, role assignments, and command execution in structured timelines without requiring designated note-takers. When your platform logs every /inc escalate command, every severity change, and every key decision automatically, you eliminate the 90-minute reconstruction tax.

We measured the efficiency difference with our customers. Manual postmortems consume 60-90 minutes of senior engineer time after every incident. Automated timeline capture reduces this to 10-15 minutes of review and refinement, representing 75-85% time savings. For a team handling 18 incidents monthly, that's reclaiming 22.5 hours of engineering capacity per month.
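The arithmetic behind those figures is easy to rerun with your own numbers. The sketch below is only an illustration: the function names are ours, and the inputs are the 18-incident, 90-minute, and 15-minute figures quoted above.

```python
def monthly_hours_saved(incidents_per_month: int,
                        manual_minutes: float,
                        automated_minutes: float) -> float:
    """Engineering hours reclaimed per month by shortening each postmortem."""
    saved_minutes = (manual_minutes - automated_minutes) * incidents_per_month
    return saved_minutes / 60


def percent_reduction(manual_minutes: float, automated_minutes: float) -> float:
    """Share of postmortem time removed, as a percentage."""
    return 100 * (manual_minutes - automated_minutes) / manual_minutes


# 18 incidents a month, 90 minutes manual vs 15 minutes automated
hours = monthly_hours_saved(18, 90, 15)
print(hours)                                # 22.5
print(round(percent_reduction(90, 15), 1))  # 83.3
print(round(percent_reduction(60, 15), 1))  # 75.0
```

Swap in your own incident volume and postmortem durations to see where your team lands in that range.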

Automated tools capture more than just messages. They log alert context from Datadog or Prometheus, track which team members joined when, record Zoom call transcriptions, and link to related pull requests or deployments. This creates a complete audit trail that manual note-taking can't match.
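To make "complete audit trail" concrete, here is a minimal sketch of how events from several sources could be merged into one ordered timeline. It is not how any particular platform stores its data: the `TimelineEvent` fields, the `build_timeline` helper, and the sample events are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class TimelineEvent:
    timestamp: datetime
    source: str   # hypothetical labels such as "slack", "datadog", "zoom"
    actor: str
    summary: str


def build_timeline(*streams: list[TimelineEvent]) -> list[TimelineEvent]:
    """Merge per-source event lists into one chronologically ordered timeline."""
    merged = [event for stream in streams for event in stream]
    return sorted(merged, key=lambda event: event.timestamp)


def at(hour: int, minute: int) -> datetime:
    """Helper for the made-up sample data below."""
    return datetime(2026, 1, 15, hour, minute, tzinfo=timezone.utc)


slack_events = [
    TimelineEvent(at(2, 58), "slack", "database-team", "Joined incident channel"),
    TimelineEvent(at(3, 15), "slack", "sarah", "Proposed rollback"),
]
alert_events = [
    TimelineEvent(at(2, 41), "datadog", "monitor", "Alert fired"),
]

timeline = build_timeline(slack_events, alert_events)
for event in timeline:
    print(event.timestamp.strftime("%H:%M"), event.source, event.summary)
```

Because every event carries its own timestamp at capture time, the ordering does not depend on anyone's memory of who joined when.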

"It empowers anybody to raise an incident and helps us quickly coordinate any response across technical, operational and support teams. Its postmortem and follow-up tooling is simple, yet detailed, and gives us the structure to quickly share learnings from our incidents." - Matt B. on G2

Top incident postmortem software solutions

The best postmortem software doesn't just give you templates to fill out. It captures the incident timeline automatically while your team focuses on resolution, then generates documentation from that real-time data. We evaluated on-call software platforms based on timeline automation, AI capabilities, Slack integration depth, and total cost of ownership for teams running 50-500 engineers.

incident.io

We run entirely in Slack, capturing incident timelines automatically from the moment an alert fires. incident.io creates dedicated incident channels, logs every /inc command, tracks role assignments, and records which engineers participated. When you type /inc resolve, the system already has 80% of your postmortem written from captured timeline data.

Our AI Scribe joins Zoom or Google Meet calls as a participant, providing real-time transcription and decision capture without requiring a designated note-taker. When someone says "let's roll back to version 2.4.1" during a war room call, Scribe logs that decision automatically. This eliminates the "designated note-taker tax" where one engineer stops troubleshooting to document.

Our AI SRE assistant automates up to 80% of incident response by identifying likely code changes behind incidents, suggesting fixes, and opening pull requests directly in Slack. When integrated with your service catalog, it pulls relevant run-books, recent deployments, and dependency information automatically. Favor's engineering team reduced MTTR by 37% after implementing incident.io, having previously wasted 20-30 minutes just on manual coordination.

We generate postmortems automatically when you resolve an incident, exporting complete timelines to Confluence, Notion, or Google Docs with one click. Engineers spend 10-15 minutes refining root cause analysis instead of 90 minutes reconstructing events. Follow-up tasks flow into Jira or Linear with full context, and status page updates happen automatically without manual effort.

Strengths for on-call engineers:

- Zero context-switching during incidents (everything happens in Slack)

- No training required (feels like using Slack naturally)

- Timeline capture is automatic, not manual

- AI identifies root causes with validated accuracy in production environments

- Support responds in hours with shared Slack channels for real-time help

Limitations:

- Requires Slack or Microsoft Teams as your primary communication platform

- Opinionated design with strong defaults; less customizable than PagerDuty's flexible workflows

- On-call scheduling costs an additional $10-20/user/month depending on plan tier

- Not built for microservice SLO tracking; integrates with specialized tools instead

Pricing: Team plan starts at $15/user/month for incident response, with on-call adding $10/user/month on annual billing ($25/user/month total). Pro plan runs $25/user/month for incident response plus $20/user/month for on-call ($45/user/month total). Enterprise pricing is custom. Compare detailed pricing tiers based on your team size.

"incident.io does everything for incident management, from the moment you receive an alert to the moment you close a postmortem. They always come up with solutions you didn't know you needed, that make the tool super powerful." - Pablo P. on G2

PagerDuty

PagerDuty scheduled its Postmortems feature for removal from the product on January 30, 2026. After the sunset, Enterprise Incident Management customers can create post-incident reviews in Jeli, with Professional and Business plans limited to 300 reviews annually. This fragments the workflow further: you alert through PagerDuty, coordinate in Slack, and document in a third tool.

PagerDuty still dominates the alerting market with battle-tested reliability and 200+ integrations. The platform excels at getting the right person paged at the right time, with sophisticated escalation paths and mobile notifications that work reliably. The PagerDuty mobile app maintains 4.8 stars on iOS, providing dependable on-call notifications. Integration with Slack allows basic incident updates, but coordination still happens primarily in PagerDuty's web interface rather than chat-natively.

Strengths for on-call engineers:

- Rock-solid alerting reliability with decade-long track record

- Sophisticated escalation policies and schedule management

- Deep integration ecosystem across monitoring tools

- Proven at enterprise scale with customers running thousands of services

Limitations:

- Postmortems feature sunsetting in January 2026

- Web-first interface requires context-switching during incidents

- Per-seat pricing escalates quickly ($40+/user/month for full features)

- Support quality has declined according to recent G2 reviews

- Postmortem automation is weak compared to Slack-native alternatives

Pricing: Professional plan starts at $21/user/month annually. Full incident management with advanced features typically runs $40+/user/month with add-ons.

For teams already invested in PagerDuty for alerting, consider keeping it for that purpose while using incident.io for coordination and postmortems. This "best tool for each job" approach is common among mature SRE teams.

FireHydrant

FireHydrant takes a web-first approach with customizable run-books and workflow automation. The platform logs incident events to a timeline and allows responders to star key items during incidents to highlight them for retrospectives. This semi-manual approach requires more active curation than fully automated alternatives.

FireHydrant integrates with Slack for notifications and updates but doesn't run the entire incident lifecycle in chat. You declare incidents in Slack but manage run-books, assign tasks, and track progress in the FireHydrant web interface. This creates context-switching during high-stress moments when engineers need information consolidated.

The platform provides solid workflow automation for teams wanting more customization than incident.io's opinionated approach. You can build elaborate run-books with conditional branching and automated task creation. However, AI capabilities are basic compared to incident.io's root cause analysis and automated fix generation.

Strengths for on-call engineers:

- Flexible run-book customization for complex workflows

- Good Slack integration for basic coordination

- Timeline logging with manual curation options

Limitations:

- Web-first design requires leaving Slack during incidents

- Basic AI capabilities without deep root cause analysis

- Support velocity slower than incident.io's shared Slack channels

Pricing: Not publicly listed. Typically comparable to incident.io Pro plan ($40-50/user/month range) based on competitive analysis.

Rootly

Rootly runs natively in Slack with a supporting web UI, similar to incident.io's approach. The platform automatically reconstructs complete incident timelines by pulling data from Slack conversations, Jira tickets, monitoring alerts, and integrated tools, creating a single source of truth without manual note-taking.

Rootly offers advanced AI including summaries and root cause analysis suggestions. The platform integrates with major monitoring tools and ticketing systems, automatically linking relevant context to incidents. Postmortem generation happens from captured timeline data, similar to incident.io's approach. The key differentiator is Rootly's focus on post-incident learning analytics, tracking patterns across incidents and measuring whether action items actually get completed.

Strengths for on-call engineers:

- Slack-native with minimal context-switching

- Automated timeline reconstruction from multiple sources

- Advanced AI for root cause suggestions

Limitations:

- Smaller customer base compared to incident.io

- Rootly publishes fewer public case studies, making it harder to verify claimed MTTR improvements with third-party evidence

Pricing: Not publicly disclosed. Estimated similar to incident.io Team/Pro tier range.

Atlassian (Jira Service Management and Opsgenie)

Atlassian's incident management story is complicated. Opsgenie, their dedicated alerting and on-call tool, will reach end of support on April 5, 2027, with all data deleted after that date. Customers must migrate to Jira Service Management (JSM) or Compass, but the JSM-Compass combination doesn't include integrated monitoring that many teams rely on.

For organizations already invested in the Atlassian ecosystem (Jira for tickets, Confluence for documentation), JSM provides familiar integration. You can create incidents from Jira tickets, track follow-ups in the same system, and export postmortems to Confluence pages. This consolidation appeals to teams wanting fewer vendors.

However, JSM is service-desk software adapted for incident management, not purpose-built for real-time SRE response. The interface prioritizes ticketing workflows over rapid coordination. JSM Standard lacks advanced incident tools, and Premium costs roughly twice as much, making it feel heavyweight for teams that only need incident management.

Strengths for on-call engineers:

- Natural fit for existing Atlassian shops

- Unified ticketing and incident tracking

- Familiar Confluence integration for documentation

Limitations:

- Opsgenie sunset forces migration by April 2027

- JSM feels heavyweight for real-time incident response

- Not chat-native (Slack integration is secondary)

- Complex pricing with per-object fees and tier limitations

Pricing: JSM Standard starts at $20/agent/month. Premium runs approximately $40-48/agent/month depending on team size. Understand JSM's total cost including hidden per-object fees after included limits.

For teams evaluating Opsgenie alternatives, consider whether you need deep Jira integration or would prefer Slack-native incident management built specifically for SRE workflows.

Datadog

Datadog excels at monitoring, metrics, and log aggregation, providing deep observability into your infrastructure, applications, and services. However, Datadog is fundamentally a monitoring tool, not an incident coordination platform. You use Datadog to identify problems, not to coordinate response teams.

Datadog's incident management features allow you to declare incidents from monitors and dashboards, add responders, track status, and generate basic timelines. But this coordination happens in Datadog's interface, separate from where your team communicates in Slack. The platform lacks automated role assignment, slash command workflows, or AI-generated postmortems.

The best practice is using Datadog for what it does best (monitoring) while integrating it with incident management platforms. incident.io integrates with Datadog to automatically create incident channels when Datadog alerts fire, pulling relevant metrics and dashboard links into Slack context.

Strengths for on-call engineers:

- Industry-leading observability and metrics

- Powerful query language for debugging

- Excellent mobile app for on-call dashboard access

Limitations:

- Not built for incident coordination or postmortems

- Coordination features are basic compared to dedicated platforms

- Best used integrated with purpose-built incident management

Pricing: Starts at $15/host/month for Infrastructure monitoring. Full observability suite with APM, logs, and incident management runs $31+/host/month.

On-call engineering and incident response

Effective postmortems are inseparable from effective incident response. The best postmortem software recognizes this by unifying alerting, coordination, and documentation in one continuous workflow.

Well-designed on-call schedules determine who receives alerts during specific time windows. Building them requires balancing coverage across time zones and providing clear escalation paths when primary responders are unavailable. Escalation policies define what happens when the first person paged doesn't acknowledge within a threshold. According to PagerDuty's documentation, the default escalation timeout is 30 minutes, though some teams configure shorter timeouts for critical incidents.
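As a rough illustration of how an escalation policy resolves "who should be paged right now," the sketch below walks through levels until it finds one whose timeout has not yet elapsed. The `EscalationLevel` type, the responder names, and the three-level policy are hypothetical; only the 30-minute timeout comes from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationLevel:
    responder: str
    timeout_minutes: int  # wait this long for an acknowledgement before escalating


def current_responder(levels: list[EscalationLevel],
                      minutes_unacknowledged: float) -> str:
    """Return who should be paged after the alert has gone unacknowledged this long.

    Assumes `levels` is non-empty and ordered from first to last resort.
    """
    elapsed = minutes_unacknowledged
    for level in levels:
        if elapsed < level.timeout_minutes:
            return level.responder
        elapsed -= level.timeout_minutes
    # Every level timed out: stay on the last level rather than dropping the alert.
    return levels[-1].responder


policy = [
    EscalationLevel("primary-on-call", 30),
    EscalationLevel("secondary-on-call", 30),
    EscalationLevel("engineering-manager", 30),
]

print(current_responder(policy, 10))   # primary-on-call
print(current_responder(policy, 45))   # secondary-on-call
print(current_responder(policy, 120))  # engineering-manager
```

Shortening `timeout_minutes` on the first level is the simplest way to model the tighter timeouts some teams use for critical incidents.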

Alert fatigue destroys on-call effectiveness when teams receive too many low-priority notifications. Manual coordination amplifies this by requiring responders to triage alerts, determine severity, page additional team members, and document everything simultaneously. That is exactly the problem that on-call features like automated escalation and Slack-native workflows solve. When postmortems reveal "we spent 15 minutes figuring out who to page," the problem isn't the people; it's the tools forcing manual coordination overhead.

"I enjoy that everything (or most things) is on Slack. I'm on slack all day at work, so not having to flick through other apps to get all my information is vital. Also, bringing in more people is as easy as calling their slack handle." - Kimia P. on G2

Slack-native platforms handle the entire incident lifecycle through slash commands: /inc declare creates the incident, /inc escalate @database-team pages additional responders, /inc assign @sarah designates an incident commander, and /inc resolve closes the incident and triggers postmortem generation. This approach eliminates the context-switching tax of toggling between PagerDuty for alerts, Slack for communication, Jira for tracking, and Google Docs for documentation.
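The lifecycle above is essentially a small state machine driven by chat commands, where each accepted command also becomes a timeline entry. The sketch below is a toy model of that idea, not incident.io's implementation: the `Incident` class and `handle_command` function are hypothetical, and only the four command strings come from the paragraph above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Incident:
    status: str = "none"
    commander: Optional[str] = None
    paged: list[str] = field(default_factory=list)
    timeline: list[str] = field(default_factory=list)


def handle_command(incident: Incident, text: str) -> Incident:
    """Apply one '/inc ...' command and record it on the timeline."""
    parts = text.split()
    if len(parts) < 2 or parts[0] != "/inc":
        raise ValueError(f"not an /inc command: {text!r}")
    action, args = parts[1], parts[2:]

    if action == "declare":
        incident.status = "open"
    elif action == "escalate" and args:
        incident.paged.append(args[0].lstrip("@"))
    elif action == "assign" and args:
        incident.commander = args[0].lstrip("@")
    elif action == "resolve":
        incident.status = "resolved"
    else:
        raise ValueError(f"unsupported command: {text!r}")

    incident.timeline.append(text)  # every accepted command becomes a timeline entry
    return incident


incident = Incident()
for command in ["/inc declare",
                "/inc escalate @database-team",
                "/inc assign @sarah",
                "/inc resolve"]:
    handle_command(incident, command)

print(incident.status, incident.commander, incident.paged, len(incident.timeline))
```

The point of the design is that documentation is a side effect of coordination: nobody has to write the timeline because running the incident produces it.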

The efficiency gain is measurable. Teams typically spend 10-15 minutes per incident on coordination overhead that Slack-native platforms eliminate by auto-paging responders and creating dedicated channels instantly. The MTTR improvement comes not from faster troubleshooting but from eliminating coordination overhead that shouldn't exist.

"My favourite thing about the product is how it lets you start somewhere simple, with a focus on helping you run incident response through Slack. Once you're ready for more, it's got great features you can dive into (postmortem templates, action item tracking) and integrations with all the major tools." - Chris S. on G2

Persona gaps and notable omissions

Most incident management comparisons focus on feature checklists without addressing the questions SREs actually care about. Here are the issues that vendor marketing rarely discusses transparently.

Pricing transparency and TCO

Hidden costs in incident management platforms include on-call management as a paid add-on, essential features locked behind expensive higher tiers, automation caps that active teams hit quickly, and per-object fees after included limits.

incident.io's Team plan advertises $15/user/month but on-call scheduling adds $10/user/month on annual billing, making the real cost $25/user/month for most teams. PagerDuty's Professional plan starts at $21/user/month but full incident management with noise reduction and AI features typically runs $40+/user/month. JSM Standard lacks advanced incident tools, forcing upgrades to Premium at approximately $40-48/agent/month.

The indirect costs matter more. A team handling 18 incidents monthly spending 90 minutes per postmortem burns 27 engineering hours monthly on documentation. At typical SaaS engineering loaded costs of $120-180/hour, that's $3,240-$4,860 monthly or $38,880-$58,320 annually in pure documentation overhead. Automated timeline capture reducing this to 15 minutes per postmortem saves 22.5 hours monthly, worth $2,700-$4,050 monthly or $32,400-$48,600 annually.

Budget conversations should focus on total cost: platform fees minus the value of engineering time saved. A $5,000/month platform saving 20 engineer-hours monthly ($3,000 value at $150/hour) costs $2,000 net, the same as a $2,000/month platform saving zero engineer hours. The option with the lower sticker price isn't always cheaper.
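The numbers in this section can be reproduced with a few lines of arithmetic. This is a sketch with function names of our own choosing; the $150 hourly rate is the one implied by the $3,000 value for 20 hours, and the other inputs are the figures quoted above.

```python
def documentation_cost(incidents_per_month: int,
                       minutes_per_postmortem: float,
                       hourly_rate: float) -> float:
    """Monthly cost of engineer time spent writing postmortems."""
    hours = incidents_per_month * minutes_per_postmortem / 60
    return hours * hourly_rate


def net_monthly_cost(platform_fee: float,
                     hours_saved: float,
                     hourly_rate: float) -> float:
    """Platform fee minus the value of the engineering time it saves."""
    return platform_fee - hours_saved * hourly_rate


# 18 incidents a month at 90 minutes each, loaded cost of $120-180/hour
low = documentation_cost(18, 90, 120)
high = documentation_cost(18, 90, 180)
print(low, high)            # 3240.0 4860.0
print(low * 12, high * 12)  # 38880.0 58320.0

# The two platforms compared above, at $150/hour
print(net_monthly_cost(5000, 20, 150))  # 2000
print(net_monthly_cost(2000, 0, 150))   # 2000
```

At a $180 loaded rate the same 20 saved hours are worth $3,600, so the $5,000 platform nets out at $1,400 and becomes the cheaper of the two.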

AI model specificity and reality

"AI-powered" has become meaningless marketing jargon. The questions that matter are: What does the AI actually do? What's the measured accuracy? What happens when it's wrong?

We built incident.io's AI SRE to identify the likely code change causing incidents and generate fix pull requests, achieving production-grade accuracy with faster resolution times. Our AI Scribe transcribes incident calls in real time, capturing decisions without designated note-takers. These capabilities take action (opening PRs, pulling metrics, suggesting specific commands) rather than just reformatting text.

Generic "AI summarization" that rephrases your Slack messages into bullet points provides minimal value. Root cause analysis that suggests "check recent deployments" is basic pattern matching, not intelligence. The meaningful distinction is whether AI takes action or just reformats text.

When evaluating AI claims, ask for precision and recall metrics. Ask what training data the model uses. Ask what happens when the AI suggests the wrong fix. Platforms that dodge these questions with vague "cutting-edge machine learning" language are hiding behind hype instead of demonstrating results.
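If a vendor does share results, or you run your own trial, precision and recall for root cause suggestions can be computed from a simple log of what the AI suggested versus what the postmortem confirmed. The sketch below assumes one suggestion (or none) per incident; the function name and the sample data are hypothetical, not measurements from any product.

```python
from typing import Optional


def precision_recall(results: list[tuple[Optional[str], str]]) -> tuple[float, float]:
    """Score AI root cause suggestions against confirmed causes.

    Each item is (ai_suggestion_or_None, confirmed_root_cause) for one incident.
    Precision: of the suggestions made, how many were right.
    Recall: of all incidents, how many got a correct suggestion.
    """
    suggestions = [(s, actual) for s, actual in results if s is not None]
    correct = sum(1 for s, actual in suggestions if s == actual)
    precision = correct / len(suggestions) if suggestions else 0.0
    recall = correct / len(results) if results else 0.0
    return precision, recall


# Made-up trial: five incidents, four suggestions, three of them correct
trial = [
    ("deploy-1042", "deploy-1042"),
    ("deploy-1050", "deploy-1050"),
    ("config-change", "expired-cert"),
    (None, "dns-outage"),
    ("deploy-1071", "deploy-1071"),
]

precision, recall = precision_recall(trial)
print(precision, recall)  # 0.75 0.6
```

A tool that only speaks up when it is sure can post high precision with low recall, which is why it is worth asking for both numbers.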

Stop reconstructing incidents from memory

The best incident postmortem software eliminates the distinction between "managing the incident" and "documenting the incident." When your platform captures timelines automatically as you coordinate response, postmortems become 15-minute review tasks instead of 90-minute reconstruction projects.

For Slack-centric teams handling 15+ incidents monthly, incident.io delivers the highest ROI through automated timeline capture, AI-powered root cause analysis, and support that responds in hours rather than days. We reduce postmortem time by 80% while improving accuracy and completeness.

Teams already invested in PagerDuty for alerting should note the January 2026 Postmortems feature sunset and evaluate whether to add incident.io for coordination, or migrate entirely to a unified platform. Organizations with deep Atlassian investments face a similar choice given Opsgenie's April 2027 shutdown and JSM's heavyweight service-desk architecture.

The evaluation criteria that matter most are timeline automation depth, AI capabilities beyond basic summarization, mobile reliability for on-call responders, and total cost including both platform fees and saved engineering time. Feature checklists matter less than whether the tool eliminates post-incident archaeology entirely.

Schedule a demo to see automated timeline capture and AI postmortem generation in your actual Slack workspace, or start with the free plan to run your first few incidents through the platform before committing.

Key terminology

MTTR (Mean Time To Resolution): Average time from incident detection to full resolution. Industry median for P1 incidents ranges from 45 to 60 minutes for most SRE teams.

Timeline capture: Automatic logging of incident events including Slack messages, slash commands, role assignments, and call transcriptions without manual note-taking.

Blameless postmortem: Retrospective analysis focusing on systemic issues and process improvements rather than individual mistakes, enabling honest discussion of what went wrong and why.

Slack-native: Architecture where the entire incident lifecycle happens through chat commands and channels rather than a web interface that sends Slack notifications.

AI SRE: Autonomous agent that investigates incidents, correlates data across monitoring tools, identifies root causes, and generates environment-specific fixes including opening pull requests.
