Best incident postmortem software: Complete guide for 2026

Updated February 20, 2026

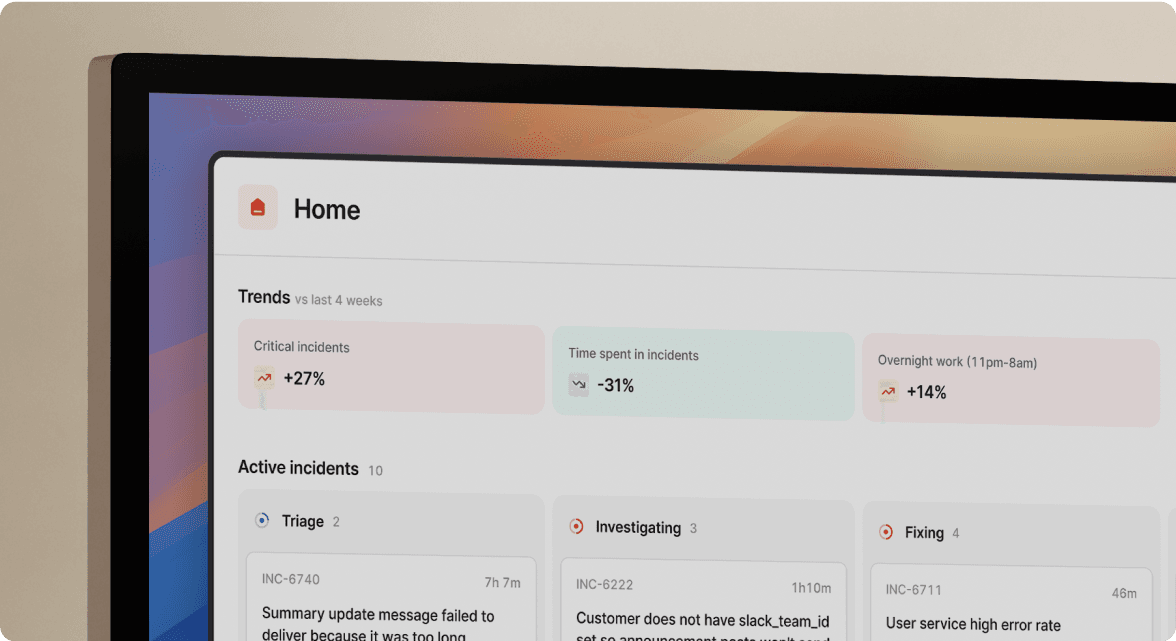

TL;DR: The best incident postmortem software automates timeline capture during response, eliminating the 60-90 minutes engineers waste reconstructing events from memory and chat logs. incident.io leads with Slack-native automation that drafts postmortems in 15 minutes using data captured in real-time. Rootly offers strong automation with more configuration options than opinionated platforms. PagerDuty excels at alerting but requires manual effort or add-ons for deep post-incident analysis. Manual systems like Google Docs cost nothing upfront but burn significant engineering time. Choose tools that capture data where your team already works, not platforms that add documentation overhead.

It's 48 hours after a P1 outage and you're staring at a blank postmortem template. You spent 20 minutes in the incident itself, but now you're burning 90 minutes scrolling through Slack threads, Datadog dashboards, and PagerDuty alert logs trying to reconstruct what happened. The engineer who identified the root cause is on PTO. The timeline in your head doesn't match the timestamps in your monitoring tools. Half the decisions made during the incident happened in a Zoom call nobody recorded.

This is the reconstruction tax, and it hits every team that treats postmortems as a documentation exercise instead of an automated byproduct of incident response. For teams handling 15-20 incidents per month, that's 22-30 hours of engineering time spent writing about problems instead of fixing the systems that caused them.

The tools in this guide approach the problem differently. Instead of asking engineers to remember and document after the fact, they capture timeline data during the incident and use it to draft postmortems automatically. We evaluated eight platforms based on what matters to SRE teams: automated timeline capture, integration depth with your existing stack, AI-assisted drafting, analytics for identifying patterns, and security controls for sensitive incidents.

What is incident postmortem software?

Incident postmortem software facilitates retrospective analysis after system outages or degraded performance, supporting what SRE practitioners call the learning phase of incident management. These platforms help you understand root causes, identify contributing factors, and develop action items to prevent recurrence. Modern platforms have evolved from simple documentation editors (fill out a template in Confluence) into automated analysis platforms that ingest real-time incident data, capture timelines without manual logging, and generate insights from patterns across hundreds of incidents.

These tools support what Atlassian identifies as the Check and Act phases of reliability engineering, transforming raw incident data into structured knowledge. The shift matters because manual documentation creates three critical problems: engineers spend 60-90 minutes per incident reconstructing timelines from memory, critical technical details get lost when the person taking notes is also troubleshooting, and unstructured documents rarely get analyzed for trends that could prevent future incidents.

Critical distinction: This guide covers software for technical incident analysis in DevOps and SRE contexts, not physical safety incident reporting or forensic pathology tools. We're evaluating platforms that integrate with monitoring systems (Datadog, Prometheus), communication tools (Slack, Microsoft Teams), and issue trackers (Jira, Linear) to reduce Mean Time To Resolution and improve reliability practices.

The hidden cost of manual post-incident reviews

Manual postmortem reconstruction wastes 60-90 minutes per incident as you search through chat history, monitoring tools, and call recordings trying to piece together what happened. For teams handling 20 incidents monthly at 90 minutes per postmortem, that's 30 hours spent on documentation overhead, not reliability improvements. At a loaded engineer cost of $150 per hour, you're burning $4,500 monthly on reconstruction work that automated tools handle in minutes.
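The arithmetic behind that estimate can be sketched as a small helper. The function name and parameters are illustrative assumptions, and the $150 figure is treated as an hourly loaded cost.

```python
def reconstruction_cost(incidents_per_month: int,
                        minutes_per_postmortem: float,
                        hourly_rate: float) -> tuple[float, float]:
    """Return (hours, dollars) spent per month on manual reconstruction."""
    hours = incidents_per_month * minutes_per_postmortem / 60
    return hours, hours * hourly_rate


# Figures from the paragraph above: 20 incidents, 90 minutes each, $150/hour
hours, dollars = reconstruction_cost(20, 90, 150)
print(f"{hours:.0f} hours, ${dollars:,.0f} per month")
```

Swap in your own incident volume and loaded rate to see what the reconstruction tax costs your team.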

The context gap compounds the time cost. Datadog's research shows you need to manually collate data from across incident management timelines, graphs, monitors, and collaboration tools. This fragmented, labor-intensive process leads to incomplete and inaccurate postmortems, hindering your team's ability to learn from incidents. When you designate one engineer as note-taker during firefights, they miss technical context while documenting, creating a bottleneck where knowledge lives in one person's memory instead of a shared timeline.

The write-only problem shows up three months later. You rarely read or analyze manual docs stored in Notion or Confluence for patterns. You can't answer basic questions like "What percentage of incidents stem from database connection pools?" or "Did our Kubernetes migration reduce outages?" because your data exists as unstructured prose scattered across dozens of documents. Logging and documenting incidents clearly creates a quick reference guide for similar future issues, ultimately leading to better MTTR. Without structure, that reference guide doesn't exist.

Core features: What makes postmortem software effective?

Automated timeline construction

The foundation of effective postmortem software is capturing what happened without requiring manual logging during incidents. You need tools that automatically record Slack messages, slash commands, role assignments (incident commander appointed at 3:14 AM), alert timestamps, and status changes. This eliminates the reconstruction tax where you scroll through chat logs days later trying to remember who did what.
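As a rough sketch of what automated capture means in data terms, the example below merges events from several sources into one chronological timeline. The `TimelineEvent` fields, source labels, and sample events are hypothetical and do not reflect any vendor's actual data model.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class TimelineEvent:
    at: datetime   # when the event happened (UTC)
    source: str    # hypothetical labels: "slack", "alert", "status"
    actor: str     # who or what produced the event
    summary: str   # one-line description


def build_timeline(*streams: list[TimelineEvent]) -> list[TimelineEvent]:
    """Merge events from several sources into one chronological timeline."""
    merged = [event for stream in streams for event in stream]
    return sorted(merged, key=lambda event: event.at)


def ts(hour: int, minute: int) -> datetime:
    """Helper for sample timestamps on a single made-up date."""
    return datetime(2026, 2, 20, hour, minute, tzinfo=timezone.utc)


alerts = [TimelineEvent(ts(3, 9), "alert", "monitoring", "High error rate alert fired")]
chat = [
    TimelineEvent(ts(3, 14), "slack", "alice", "Incident commander appointed"),
    TimelineEvent(ts(3, 31), "slack", "bob", "Rolled back latest deploy"),
]
status = [TimelineEvent(ts(3, 40), "status", "alice", "Status changed to resolved")]

timeline = build_timeline(chat, status, alerts)
for event in timeline:
    print(event.at.strftime("%H:%M"), f"[{event.source}]", event.summary)
```

The point of the sketch is that every event carries its own timestamp at the moment it happens, so nobody has to rebuild the ordering from memory afterwards.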

"Without incident.io our incident response culture would be caustic, and our process would be chaos... Its postmortem and follow-up tooling is simple, yet detailed, and gives us the structure to quickly share learnings from our incidents." - Matt B. on G2

The best implementations capture timeline data where work happens, not in separate logging interfaces. If your team coordinates in Slack, the tool should ingest Slack threads automatically. If incidents involve Zoom calls, transcription should happen in real-time without designated note-takers.

Bi-directional integrations with your stack

Your postmortem platform needs to connect deeply with monitoring (Datadog, Prometheus, New Relic), alerting (PagerDuty, Opsgenie), task tracking (Jira, Linear), and documentation (Confluence, Notion). Shallow integrations that require copy-paste add friction. Strong integrations create follow-up Jira tickets automatically populated with timeline context, link PagerDuty alerts to specific incident phases, and export finished postmortems to your team wiki in one click.

AI and summarization capabilities

Using AI to draft postmortems from captured timeline data means your engineers spend 10-15 minutes reviewing and refining instead of 90 minutes writing from scratch. The AI generates incident summaries, timeline of events, contributing factors, and suggested action items based on what actually happened, not what someone remembers three days later.

Important limitations: Reliable accuracy in extracting numerical data like customer impact, repair time, and financial loss from postmortems remains challenging. AI requires human curation for root cause analysis and corrective actions. Tools that claim "AI writes perfect postmortems with zero human effort" are overselling. The realistic value is 80% automation with 20% expert review, which still saves massive time. Google's Gemini CLI demonstrates how AI can assist at every stage while keeping SREs in control for safety and validation.

Analytics and insights for continuous improvement

Effective tools don't just document individual incidents. They help you track metrics like MTTR across your organization, identify incident frequency by service, and surface patterns in root causes. MTTR (mean time to resolve) is the average time to fully resolve a failure, including detection, diagnosis, repair, and the steps taken to prevent recurrence.

Automation provides comprehensive datasets for root cause analysis by generating knowledge graphs and insights from incident data. For example, if your median P1 MTTR dropped from 48 to 30 minutes after adopting a new platform, that's a 37.5% improvement. Across 15 incidents per month, that's 270 minutes saved monthly, or 4.5 hours of engineering time reclaimed for proactive reliability work.
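The improvement math in that example can be checked with a few lines. This is a minimal sketch; the function name and parameters are assumptions made for illustration.

```python
def mttr_improvement(before_min: float, after_min: float,
                     incidents_per_month: int) -> tuple[float, float]:
    """Return (percent improvement, minutes saved per month)."""
    percent = (before_min - after_min) / before_min * 100
    minutes_saved = (before_min - after_min) * incidents_per_month
    return percent, minutes_saved


# Figures from the example above: 48 -> 30 minutes, 15 incidents per month
percent, saved = mttr_improvement(48, 30, 15)
print(f"{percent:.1f}% faster, {saved:.0f} minutes ({saved / 60:.1f} hours) saved per month")
```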

Security and access controls

You need production incidents to remain private when they involve sensitive data. Customer PII exposure, security vulnerabilities, and infrastructure weaknesses require private incident channels where only authorized responders participate. SOC 2 Type II certification, GDPR compliance, SAML/SCIM support for enterprise SSO, and audit trails showing who accessed what become table stakes for regulated industries.

8 best incident postmortem software tools for 2026

1. incident.io

Best for: Slack-centric teams wanting zero-friction automation where timeline capture and postmortem drafting happen automatically.

incident.io treats the entire incident lifecycle as one workflow. Alerts from Datadog or PagerDuty auto-create dedicated Slack channels where responders coordinate using slash commands (/inc escalate, /inc assign, /inc resolve). The platform captures every message, command, and role assignment automatically. When you type /inc resolve, the system has already logged who did what and when.

Key postmortem features:

- Auto-drafted postmortems: Scribe transcribes incident calls in real-time while the platform captures Slack timelines automatically, drafting postmortems in 15 minutes

- Service Catalog integration: The platform knows which service failed and who owns it, automatically pulling in the right people

- Native status pages: Update customer-facing status in the same workflow

- Export flexibility: Push finished postmortems to Confluence or Notion with one click

"I would really highlight the customer-centricity of the team working in incident.io... the 1-click postmortem reports - this is a killer feature, time saving, that helps a lot to have relevant conversations around incidents (instead of spending time curating a timeline)." - Adrian M. on G2

Pricing transparency: incident.io offers four tiers: Basic (free), Team, Pro, and Enterprise (contact sales). The Team plan starts at $19 per user per month, but on-call management costs an extra $12 per user per month. Pro plan costs $25 per user per month with on-call at $20 per user per month ($45 total). Pro includes unlimited workflows, custom incident types, private incidents for security issues, and advanced analytics.

Why it wins for postmortems: The tool doesn't ask you to log timeline data separately. It captures what happened during the incident, then uses that data to draft the postmortem. You review and refine instead of writing from scratch.

2. PagerDuty

Best for: Enterprise organizations prioritizing rock-solid alerting who can invest in add-ons for deeper post-incident analysis.

PagerDuty dominates the alerting category with battle-tested reliability for waking up on-call engineers. Their core platform excels at routing alerts based on complex escalation policies and maintaining global on-call schedules. For postmortems specifically, PagerDuty recently added generative AI features for drafting incident postmortems, but deeper analytics often require additional products or manual work.

Postmortem approach: PagerDuty captures alert data and basic response actions (who acknowledged, who resolved) automatically. Their newer AI features can draft summaries, but the level of timeline detail typically lags behind Slack-native platforms where all coordination happens in one place.

Strengths: If your on-call rotation is complex (multiple teams, follow-the-sun coverage, intricate escalation chains), PagerDuty's flexibility handles it. Their analytics show incident volume trends and responder workload, which helps prevent on-call burnout.

Limitations for postmortems: The platform wasn't designed to capture detailed coordination context. If your team debugs in Slack, writes commands in terminal sessions, and hops on Zoom calls, PagerDuty knows the alert fired and resolved but misses the middle. You'll often find your team supplementing PagerDuty with Jira for follow-ups and Confluence for documentation, creating the tool sprawl problem where incident context fragments across platforms.

3. Rootly

Best for: SRE teams wanting strong automation with more configuration options than opinionated platforms offer.

Rootly provides workflow automation focused on incident response and post-incident learning. Their approach emphasizes customizable playbooks, integration with multiple communication platforms, and AI-assisted postmortem generation. Teams choosing Rootly typically value flexibility to match their specific processes over opinionated defaults.

Postmortem capabilities: Rootly drives learning through automated postmortem tools by providing comprehensive datasets for root cause analysis, helping teams track MTTR trends and identify recurring issues. Their automation captures incident data across integrations and surfaces patterns.

Differentiation: Where incident.io prioritizes simplicity and Slack-nativity, Rootly offers more knobs and dials. If your team has specific workflow requirements that don't fit common patterns, the extra configurability helps. The trade-off is longer setup time and more ongoing maintenance compared to simpler platforms.

4. FireHydrant

Best for: Teams wanting service catalog-driven incident management with dependency mapping.

FireHydrant centers on understanding how services relate to each other. Their service catalog shows which microservices depend on which databases, how API failures cascade through your architecture, and which teams own which components. This context enriches postmortems by connecting incidents to architectural decisions.

Postmortem approach: FireHydrant automates timeline capture and integrates with monitoring tools to draft initial postmortem summaries. Their strength is adding service ownership and dependency context automatically, so postmortems include "this API failure affected 7 downstream services owned by 3 different teams" without manual annotation.

Consideration: User feedback sometimes notes UI complexity. The service catalog power requires upfront investment in mapping your architecture, which pays off for large, complex environments but may feel heavy for smaller teams.

5. Atlassian (Jira Service Management with Opsgenie)

Best for: Organizations already standardized on the Atlassian stack for project management who want incident response in the same ecosystem.

Jira Service Management combines ITSM capabilities with Opsgenie (acquired by Atlassian) for alerting and on-call management. For teams living in Jira for sprint planning, task tracking, and service desk operations, keeping incident management in the same platform reduces context switching.

Postmortem workflow: Incidents create Jira tickets automatically. Postmortems become linked pages in Confluence with structured templates. The data model is familiar if you already use Jira, making adoption easier for teams comfortable with Atlassian conventions.

Limitations: The experience feels web-heavy and form-based compared to Slack-native tools. Coordination still happens in Slack for most teams, creating a disconnect where incident discussion lives in chat but documentation lives in Jira. This split often means manual copying and pasting to build the postmortem timeline.

Opsgenie note: Atlassian is sunsetting Opsgenie's standalone product, with migration to Jira Service Management required by April 2027. Teams using Opsgenie today should evaluate whether deeper Atlassian integration aligns with their workflows or if alternatives fit better. We've published tools to make migrating from Opsgenie easier if you're exploring options.

6. Blameless

Best for: Organizations committed to SRE best practices including SLOs, error budgets, and building blameless culture.

Blameless takes a methodology-first approach. Beyond incident management software, they emphasize blameless postmortem culture where analysis focuses on system issues, not individual mistakes. Their platform includes SLO tracking, error budget monitoring, and reliability scoring alongside incident response.

Postmortem philosophy: Blameless postmortems focus on identifying contributing causes without indicting individuals. The approach assumes everyone involved had good intentions and did the right thing with available information, emphasizing system-level issues rather than personal mistakes.

Consideration: The platform's strength (deep methodology focus) can feel heavy to implement. Teams wanting quick time-to-value may find the learning curve steep. Blameless works best when your organization commits to adopting SRE practices holistically, not just installing software.

7. Squadcast

Best for: Mid-market teams looking for PagerDuty alternatives with modern UX and improving post-incident capabilities.

Squadcast provides on-call scheduling, alert routing, and incident response workflows at price points below PagerDuty. Their platform has evolved from pure alerting into fuller incident management including post-incident analysis.

Postmortem capabilities: Squadcast captures incident timelines, integrates with communication tools, and provides templates for structured postmortems. The platform emphasizes reducing alert fatigue and improving on-call experience, which indirectly improves postmortem quality by ensuring responders have clear context.

Positioning: Squadcast sits between startup-focused tools (simpler, more opinionated) and enterprise platforms (complex, expensive). Good fit for 50-200 person engineering organizations wanting reliability without heavyweight enterprise processes.

8. Notion and Google Docs (the manual option)

Best for: Very small teams handling occasional incidents who prioritize flexibility over automation.

Manual documentation in Notion or Google Docs offers infinite flexibility. You can structure postmortems exactly how your team thinks about incidents, add custom fields without software configuration, and change templates instantly. The marginal cost is zero if you already pay for these tools.

When it works: Startups with 3-5 engineers handling occasional incidents often succeed with manual docs. The coordination overhead is low enough that one person can reasonably capture timeline, root cause, and follow-ups without automated tooling. Template consistency matters more than automation.

When it breaks down: As incident volume increases, the manual tax compounds. You lose 60-90 minutes per incident on reconstruction. Data stays unstructured, blocking trend analysis. New team members lack clear process ("do we use this template or that template?"). Action items tracked in docs get forgotten without automated reminders.

Cost reality: Zero software cost but high opportunity cost. At 20 incidents monthly with 90 minutes reconstruction time per incident, you're burning the same 30 hours monthly on manual documentation that automated platforms handle in 15 minutes per incident (a calculation detailed in the hidden cost section above).

Comparison: Top postmortem tools at a glance

| Tool | Slack-native | Auto-timeline | AI capabilities | Pricing model | Best for |
|---|---|---|---|---|---|
| incident.io | Yes (primary interface) | Yes (captures all Slack activity) | Scribe for calls, AI drafts 80% complete | Transparent tiers starting at $19/user/month | Slack-centric teams wanting zero-friction automation |
| PagerDuty | No (web-based) | Partial (alert data only) | GenAI add-on available | Contact sales | Enterprise alerting with complex on-call needs |
| Rootly | Integration available | Yes | AI-assisted generation | Contact sales | Teams wanting configurable automation |
| FireHydrant | Integration available | Yes | AI summaries with service context | Contact sales | Service catalog-driven organizations |
| Atlassian/Opsgenie | No (Jira/Confluence) | Manual to Jira tickets | Limited | Tiered pricing per agent | Existing Atlassian customers |
| Blameless | Integration available | Yes | SLO-driven insights | Contact sales (enterprise) | SRE methodology adoption |
| Squadcast | Integration available | Yes | Improving | Published tiers | Mid-market PagerDuty alternatives |
| Notion/Docs | No (manual entry) | No (manual) | None | Free (time cost: 90 min/incident) | Small teams with low incident volume |

How to choose: A decision framework for SREs

Evaluate based on team size and incident volume

Small teams (5-15 engineers, low incident volume): Choose tools with fast time-to-value and minimal configuration. incident.io's Basic plan or Squadcast work well. Avoid platforms requiring weeks of professional services to deploy.

Mid-market (15-100 engineers, 10-30 incidents/month): You need real automation now. Manual docs are costing you significant engineering hours monthly. Prioritize Slack-native tools (incident.io) or strong integrations (Rootly, FireHydrant) that eliminate reconstruction time.

Enterprise (100+ engineers, 30+ incidents/month): You need RBAC, SAML/SCIM, sandbox environments, and dedicated support. incident.io Enterprise, PagerDuty, or Blameless fit. Calculate saved engineering hours to justify the investment: 30 incidents × 75 minutes saved = 37.5 hours, and at a $150 hourly loaded cost that is $5,625 in monthly value.

Match your current stack

Slack-centric teams: If 80% of your coordination happens in Slack, choose Slack-native tools. Context switching to web dashboards during 3 AM incidents kills efficiency. incident.io treats Slack as the primary interface, while tools like Rootly and Blameless offer deep Slack integration through bots.

Atlassian-standardized teams: If your org runs on Jira for everything, staying in that ecosystem reduces training overhead. Accept that coordination-in-Slack plus documentation-in-Jira creates some manual bridging.

Microsoft Teams users: incident.io supports Microsoft Teams on the Pro plan and above. PagerDuty and other enterprise platforms typically offer Teams integrations.

Calculate total cost of ownership

Don't compare list prices alone. Calculate fully-loaded costs including:

License fees: incident.io Pro with on-call for 50 users runs approximately $2,250 monthly or $27,000 annually based on current published pricing.

Integration costs: Some tools charge separately for premium integrations. incident.io includes major integrations in base plans.

Implementation time: Quick deployment reduces time-to-value. Longer implementations may require professional services investment.

Opportunity savings: If automation saves you 60 minutes per incident across 20 incidents monthly, you reclaim 20 hours. At a $150 hourly loaded cost, that's $3,000 monthly or $36,000 annually. The software pays for itself if it costs less than your time savings.
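To compare license fees against reclaimed time, a sketch like the following can help. It combines the two example figures from this section (50 users on Pro with on-call at $45 per user, and 60 minutes saved across 20 incidents); that pairing is purely illustrative, and integration and implementation costs are left out. The function name and dictionary keys are assumptions.

```python
def monthly_net_value(users: int, price_per_user: float,
                      incidents_per_month: int, minutes_saved_per_incident: float,
                      hourly_rate: float) -> dict[str, float]:
    """Compare monthly license cost against the value of reclaimed time."""
    license_cost = users * price_per_user
    hours_reclaimed = incidents_per_month * minutes_saved_per_incident / 60
    savings = hours_reclaimed * hourly_rate
    return {
        "license_cost": license_cost,
        "hours_reclaimed": hours_reclaimed,
        "savings": savings,
        "net": savings - license_cost,
    }


# 50 users at $45/user/month, 20 incidents, 60 minutes saved each, $150/hour
result = monthly_net_value(50, 45, 20, 60, 150)
print(result)
```

A positive `net` means the time savings alone cover the license. Any MTTR reduction or avoided repeat incidents would come on top of that and are not modeled here.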

Test adoption risk with free trials

The best tool is the one your team actually uses. If engineers find the platform clunky, they'll skip the postmortem or do minimal documentation. incident.io reviews consistently mention ease of use as a key strength.

"My favourite thing about the product is how it lets you start somewhere simple, with a focus on helping you run incident response through Slack... It was also a step up in our maturity regarding incident communication." - Nathael A. on G2

Run 2-3 real incidents through trial platforms. Measure how long postmortems take to complete. Survey responders: "Was this easier or harder than our old process?" Adoption feedback matters more than feature checkboxes.

Key metrics to track for postmortem effectiveness

Time to publish

Target: Postmortems published within 24-48 hours of incident resolution for most teams. Industry sources suggest publishing within 24-72 hours after resolution or within five business days, with faster publication preserving context and driving action.

Why it matters: Fast publishing means your stakeholders learn quickly. Engineering teams can implement fixes while context is fresh. The longer you wait past that window, the more incomplete the analysis becomes and the more action items get forgotten.

How to measure: Track timestamp of incident resolution to timestamp of postmortem published. Set reminders and nudges in incident.io to keep the process moving.
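Measuring this takes only two timestamps per incident. The sketch below assumes you can export a resolution time and a publication time for each incident; the function names and the 48-hour default are illustrative choices, not product settings.

```python
from datetime import datetime, timedelta


def time_to_publish(resolved_at: datetime, published_at: datetime) -> timedelta:
    """Elapsed time between incident resolution and postmortem publication."""
    return published_at - resolved_at


def within_target(elapsed: timedelta, target_hours: float = 48) -> bool:
    """True if the postmortem was published inside the target window."""
    return elapsed <= timedelta(hours=target_hours)


# Made-up sample incident
resolved = datetime(2026, 2, 18, 3, 40)
published = datetime(2026, 2, 19, 15, 10)
elapsed = time_to_publish(resolved, published)
print(elapsed, within_target(elapsed))
```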

Action item completion rate

Target: 80%+ of postmortem action items completed within agreed timeframes. Low completion rates mean you're documenting learnings but not acting on them, resulting in repeated incidents.

Why it matters: Conducting postmortems with your team to point out where things went wrong prepares the team for future success by learning from mistakes. Value only materializes if identified fixes get implemented.

How to measure: Track follow-up tasks created from postmortems (typically in Jira or Linear). Set due dates and reminders for post-incident tasks to maintain accountability and report completion percentages in quarterly reliability reviews.
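One way to compute the completion rate from exported follow-up tasks is sketched below. The `ActionItem` fields and sample tasks are hypothetical; real exports from Jira or Linear will use different field names.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ActionItem:
    title: str
    due: date
    completed_on: Optional[date] = None  # None means still open


def on_time_completion_rate(items: list[ActionItem]) -> float:
    """Share of action items completed on or before their due date."""
    if not items:
        return 0.0
    on_time = sum(
        1 for item in items
        if item.completed_on is not None and item.completed_on <= item.due
    )
    return on_time / len(items)


items = [
    ActionItem("Raise connection pool limit", date(2026, 3, 1), date(2026, 2, 25)),
    ActionItem("Add pool saturation alert", date(2026, 3, 1), date(2026, 3, 1)),
    ActionItem("Update rollback runbook", date(2026, 3, 5), date(2026, 3, 9)),  # late
    ActionItem("Load-test failover path", date(2026, 3, 10)),                   # open
    ActionItem("Document on-call handoff", date(2026, 3, 10), date(2026, 3, 2)),
]
rate = on_time_completion_rate(items)
print(f"{rate:.0%} completed on time (target: 80%)")
```

Counting late and open items as misses is a deliberate choice here, since the target is defined against agreed timeframes.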

Incident recurrence rate

Target: For incidents with clear root causes, recurrence should approach zero. If the same database connection pool issue causes three outages, your postmortem process isn't driving fixes.

Why it matters: The point of post-incident analysis is learning. Recurring incidents signal the process is performative (write the doc, check the box) rather than substantive (identify root cause, fix the system). Past incidents become opportunities to learn and prepare for the future, leading to better MTTR through improved documentation.

How to measure: Tag incidents by type (database, API timeout, deployment failure) and track whether each represents a repeat of a previous incident.
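A simple tag-based recurrence check might look like this. It assumes incidents are listed in chronological order with one type tag each, and it treats any repeated tag as a recurrence, which is a coarse proxy: two "database" incidents do not necessarily share a root cause.

```python
def recurrence_rate(incident_tags: list[str]) -> float:
    """Fraction of incidents whose tag already appeared earlier in the list.

    `incident_tags` is assumed to be in chronological order.
    """
    seen: set[str] = set()
    repeats = 0
    for tag in incident_tags:
        if tag in seen:
            repeats += 1
        seen.add(tag)
    return repeats / len(incident_tags) if incident_tags else 0.0


# Made-up sample: the third and fifth incidents repeat an earlier type
tags = ["database", "api-timeout", "database", "deployment", "database"]
print(f"{recurrence_rate(tags):.0%} of incidents repeat an earlier type")
```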

MTTR improvement trends

Baseline: Median resolution time for P1 incidents before adopting new tooling. Track for 90 days to establish baseline.

Target: 20-80% MTTR reduction within 90 days of adopting automated postmortem tools, depending on baseline maturity and incident volume. Improvements come from faster pattern recognition (remembering similar past incidents), better runbook quality (action items from previous postmortems), and reduced coordination overhead.

How to measure: MTTR equals the total time from the start of each incident to its resolution, divided by the number of incidents. Export incident duration data monthly, plot trend lines, and celebrate improvements in team reviews.
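The calculation can be sketched from exported start and resolution timestamps. The sample incidents are made up. The sketch reports both the mean (the MTTR formula above) and the median (used for the baseline), since a single long incident can skew the mean.

```python
from datetime import datetime
from statistics import mean, median


def resolution_minutes(incidents: list[tuple[datetime, datetime]]) -> list[float]:
    """Duration in minutes for each (started_at, resolved_at) pair."""
    return [(end - start).total_seconds() / 60 for start, end in incidents]


incidents = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 30)),      # 30 min
    (datetime(2026, 1, 12, 14, 0), datetime(2026, 1, 12, 14, 45)),  # 45 min
    (datetime(2026, 1, 20, 2, 0), datetime(2026, 1, 20, 3, 45)),    # 105 min
]
durations = resolution_minutes(incidents)
print(f"mean: {mean(durations):.0f} min, median: {median(durations):.0f} min")
```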

Conclusion

The best incident postmortem software captures what happened during response automatically, transforming the 90-minute reconstruction slog into a 15-minute review process. Choose platforms that work where your team already coordinates. For Slack-centric teams, that means Slack-native tools like incident.io that eliminate context switching. For Atlassian-standardized orgs, that might mean accepting Jira Service Management's web-heavy workflow to stay in one ecosystem.

The right tool depends on your team's size, incident volume, and where engineering work happens. Small teams can succeed with manual Google Docs for a while. Mid-market teams handling 15-30 incidents monthly need automation now or they're burning engineering hours. Enterprise teams require SOC 2 compliance, SAML/SCIM, and vendor support capable of responding when production is down at 2 AM.

Schedule a demo and we'll walk you through a real incident on the platform. You'll see auto-captured timelines, AI-drafted summaries, and the difference between documenting after the fact versus capturing as you work. We'll show you how teams handling 30+ incidents monthly use Insights dashboards to prove reliability improvements to executives.

Key terminology

MTTR (Mean Time To Resolution): The average time to fully resolve a failure, including detection, diagnosis, repair, and the steps taken to prevent recurrence. Calculated as total resolution time divided by number of incidents.

Root Cause Analysis (RCA): Systematic investigation to identify the fundamental reason a failure occurred, distinguishing root causes from symptoms. Effective RCA asks "why" repeatedly until reaching system-level issues.

Blameless culture: An approach focusing on learning from failures without assigning fault to individuals. Assumes everyone had good intentions and did the right thing with available information, emphasizing system problems over personal mistakes.

Timeline reconstruction: The process of recreating the sequence of events during an incident. Manual reconstruction requires you to spend 60-90 minutes searching chat logs and monitoring tools, while automated capture happens in real-time during response.

Service catalog: A structured inventory of services, their dependencies, ownership, and on-call contacts. Enables automated escalation and enriches postmortems with architectural context.
