Best incident postmortem software for cloud-native SaaS teams in 2026

Updated February 5, 2026

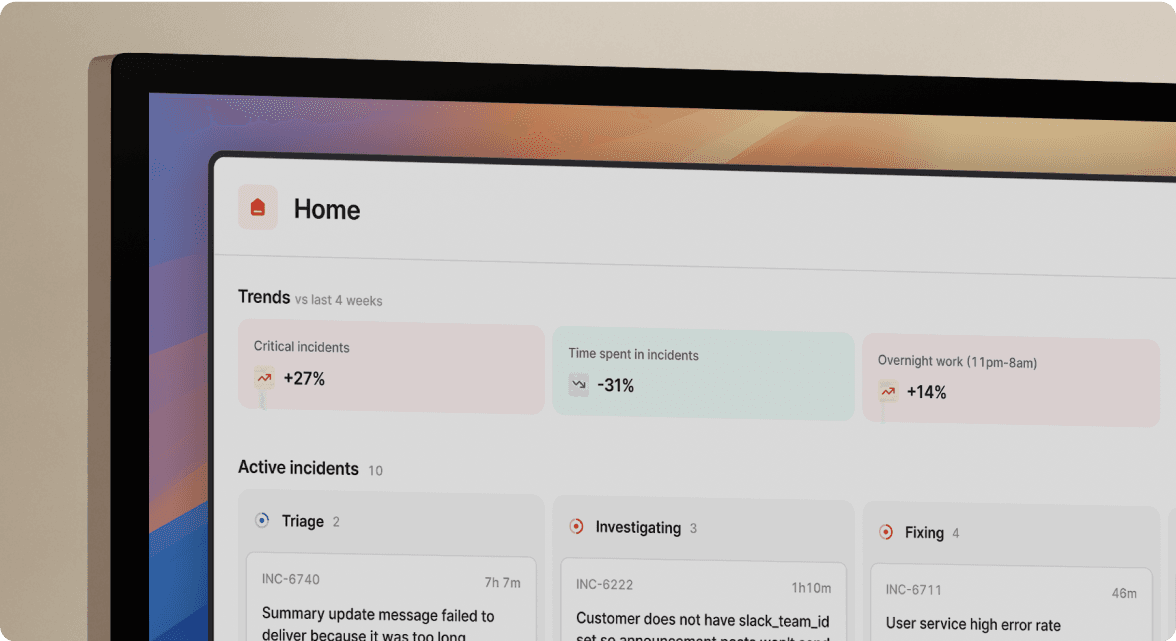

TL;DR: In Kubernetes environments where pods disappear and logs rotate, the best postmortem software automatically captures context during the incident rather than forcing manual reconstruction afterward. incident.io reduces postmortem effort from 90 minutes to 15 minutes by converting Slack conversations, system events, and incident calls into structured reports. Traditional tools like Google Docs and Confluence cannot correlate ephemeral infrastructure data with human decisions, making them poorly suited for microservices architectures. Look for platforms that integrate with your observability stack (Datadog, Prometheus, Grafana) and capture timelines in real time through Slack or Microsoft Teams.

Pod termination in Kubernetes clusters erases critical evidence. Three days after an incident, SRE teams face a reconstruction problem: Which of 80 microservices failed first? What was the exact timestamp when the database connection pool exhausted? Who suggested the rollback, and why did the team decide against it initially?

Manual postmortem reconstruction wastes 60-90 minutes per incident as teams search through chat history, monitoring tools, and call recordings trying to piece together what happened. In monolithic architectures, teams could reconstruct incidents from memory. In cloud-native environments with ephemeral infrastructure and distributed traces, memory fails. Teams need software that captures the "who, what, and when" automatically as it happens.

Why traditional postmortem tools fail in Kubernetes environments

Google Docs and Confluence were built for static documentation, not dynamic incident response. When your infrastructure is ephemeral, these tools create dangerous gaps in your learning process.

Ephemeral infrastructure erases evidence

Pods are ephemeral, and their logs disappear with them. Once a pod terminates and a new instance starts, critical evidence vanishes:

- Log files: Container logs associated with the old pod get deleted entirely

- Resource snapshots: CPU, memory, and network state become unrecoverable

- Network topology: Pod IP addresses and connection patterns disappear

- Configuration state: Environment variables of crashed containers are lost

As one SRE documented after a node OOM incident: "Unfortunately, I do not have access to the log files from right before the issue occurred anymore."

With manual documentation tools, the exact timestamps of manual interventions go unrecorded. Unless the postmortem links to your observability stack at the precise moment of failure, that evidence is gone for good.
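As a minimal sketch of capturing evidence before it disappears, the helper below builds a `kubectl logs` command for a crashed container. The function names, pod name, and namespace are hypothetical; `--previous`, `--timestamps`, and `--container` are standard `kubectl logs` flags. Note that `--previous` only works while the pod object still exists (for example, after a container restart). Once the pod itself is deleted, the logs are only recoverable if they were already shipped to an external store.

```python
import subprocess
from typing import List, Optional


def build_log_capture_cmd(pod: str, namespace: str,
                          container: Optional[str] = None) -> List[str]:
    """Build a kubectl command that fetches logs from the previous
    (crashed) container instance, with a timestamp on every line."""
    cmd = ["kubectl", "logs", pod, "--namespace", namespace,
           "--previous", "--timestamps"]
    if container:
        cmd += ["--container", container]
    return cmd


def capture_logs(pod: str, namespace: str, out_path: str) -> None:
    """Run the command and save its output to a file.
    Requires kubectl and access to the cluster."""
    cmd = build_log_capture_cmd(pod, namespace)
    with open(out_path, "w") as fh:
        subprocess.run(cmd, stdout=fh, check=True)


if __name__ == "__main__":
    # Hypothetical pod and namespace names, for illustration only.
    print(" ".join(build_log_capture_cmd("checkout-7d9f", "prod")))
```

Saving the output to a file that the postmortem links to keeps the evidence available after the pod is rescheduled.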

Distributed complexity creates correlation gaps

A failure in Service A causes latency in Service B, which triggers rate limiting in Service C. Manual documentation rarely captures the exact cascade timing. In microservices architectures, understanding the sequence matters as much as understanding the root cause.

When pods are evicted, crashed, deleted, or rescheduled, you lose information about why the anomaly occurred. Traditional postmortem templates cannot automatically correlate a 14:32:17 spike in API latency with the 14:31:54 deployment that introduced a connection leak.
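The correlation described here can be sketched in a few lines. This is an illustrative example, not any vendor's implementation: the function name, the five-minute window, the service names, and the calendar date are assumptions. Only the two clock times come from the example above.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

Event = Tuple[datetime, str]  # (timestamp, description)


def latest_change_before(anomaly_at: datetime, changes: List[Event],
                         window: timedelta = timedelta(minutes=5)) -> Optional[Event]:
    """Return the most recent change that happened within `window`
    before the anomaly, or None if nothing qualifies."""
    candidates = [c for c in changes
                  if timedelta(0) <= anomaly_at - c[0] <= window]
    return max(candidates, key=lambda c: c[0], default=None)


day = "2026-02-05"  # hypothetical date
deploys = [
    (datetime.fromisoformat(f"{day}T13:05:10"), "deploy: billing-service"),
    (datetime.fromisoformat(f"{day}T14:31:54"), "deploy: api-gateway"),
]
spike_at = datetime.fromisoformat(f"{day}T14:32:17")

suspect = latest_change_before(spike_at, deploys)
if suspect:
    gap = (spike_at - suspect[0]).total_seconds()
    print(f"{suspect[1]} preceded the spike by {gap:.0f}s")
```

A fixed look-back window is the simplest possible heuristic. It flags a candidate for a human to investigate and does not prove causation.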

Context switching breaks incident flow

During high-stress incidents, SREs cannot stop troubleshooting to update a Jira ticket or type notes into a Google Doc. The "designated note-taker" problem pulls one engineer away from resolution work. Critical decisions made in Zoom calls or Slack threads never make it into the final document because nobody had the bandwidth to capture them in real time.

"Without incident.io our incident response culture would be caustic, and our process would be chaos." - Matt B. on G2

Traditional tools force teams to choose between resolving the incident quickly and documenting it thoroughly.

Key features to look for in cloud-native postmortem software

The right postmortem platform for Kubernetes environments must do more than provide a text editor. It must function as an active participant during the incident, capturing context automatically.

Automated timeline collection from multiple sources

Your postmortem tool should scrape chat logs from Slack or Microsoft Teams, system events from PagerDuty or Datadog, and integration events from your entire stack. Every role assignment, severity change, shared dashboard link, and decision point should populate the timeline automatically without manual logging.

Modern platforms log every Slack message, slash command, role assignment, and integration event with precise timestamps. This removes the reconstruction step, reducing postmortem writing time from 90 minutes to 15 minutes by converting real-time incident data into structured reports.
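To illustrate what "one timeline from multiple sources" means in practice, here is a minimal sketch that interleaves per-source event streams by timestamp. The `TimelineEvent` type, the `merge_timelines` function, and all sample events are hypothetical. Real platforms ingest these events through their own integrations.

```python
import heapq
from dataclasses import dataclass, field
from datetime import datetime
from typing import Iterable, List


@dataclass(order=True)
class TimelineEvent:
    at: datetime                          # the only field used for ordering
    source: str = field(compare=False)
    text: str = field(compare=False)


def merge_timelines(*streams: Iterable[TimelineEvent]) -> List[TimelineEvent]:
    """Merge per-source event streams into one chronological timeline.
    Each stream is sorted first so heapq.merge can interleave them."""
    return list(heapq.merge(*(sorted(s) for s in streams)))


def ts(hms: str) -> datetime:
    return datetime.fromisoformat(f"2026-02-05T{hms}")  # hypothetical date


slack = [
    TimelineEvent(ts("14:33:02"), "slack", "@sarah assigned as incident lead"),
    TimelineEvent(ts("14:35:40"), "slack", "severity changed to critical"),
]
alerts = [
    TimelineEvent(ts("14:32:17"), "datadog", "API latency monitor triggered"),
]

timeline = merge_timelines(slack, alerts)
for event in timeline:
    print(event.at.time(), f"[{event.source}]", event.text)
```

Ordering on the timestamp alone keeps events from different tools comparable without any knowledge of their payloads.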

Real-time call transcription and decision capture

Voice communication during incidents contains critical context that rarely makes it into documentation. Look for AI-powered transcription that captures verbal decisions, flags key moments (like "I think this correlates with the 2:30 AM deployment"), and converts unstructured conversation into searchable, structured data.

incident.io's Scribe feature joins Zoom or Google Meet calls automatically and transcribes everything in real time. When someone says "let's rollback first," that decision is captured with a timestamp and speaker attribution.

Deep observability stack integration

Your postmortem software must integrate bidirectionally with your monitoring stack:

- Alert ingestion: Datadog, Prometheus, Grafana, New Relic alerts flow into incident channels automatically

- Dashboard preservation: Monitoring snapshots embed in timelines with preserved time windows

- Trace correlation: Distributed tracing systems like Jaeger link directly from postmortem text

- Metric context: System performance data correlates with incident events automatically

incident.io offers 70+ integrations designed for fast time-to-value. Datadog fires alerts that create incident channels automatically, Prometheus routes through Alertmanager into the platform, and Grafana dashboards wire into workflows seamlessly.

AI-assisted summarization and root cause analysis

500 Slack messages and 40 minutes of Zoom conversation contain the story of your incident, but extracting the narrative manually takes hours. AI should parse this unstructured data into a "Root Cause" summary, a "Timeline" section, and a "Contributing Factors" list, producing a draft that is roughly 80% complete before anyone edits it.

Our AI SRE assistant pulls data from alerts, telemetry, code changes, and past incidents to cut through noise. The technology identifies likely causes based on patterns, automates up to 80% of incident response, and helps teams resolve incidents faster without manual context gathering.

Bidirectional sync with ticketing systems

When you identify follow-up actions during the incident, they should flow into Jira or Linear automatically with full context. Changes to ticket status should reflect back in your incident timeline. This eliminates the "we discussed five action items but only two made it into Jira" problem.
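The gap between "discussed" and "filed" is easy to check once action items are structured data. The sketch below is illustrative only: the `ActionItem` type, the `unfiled` function, and the ticket keys are hypothetical, and no real Jira or Linear API is called.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ActionItem:
    title: str
    ticket_key: Optional[str] = None  # issue key once filed in a tracker


def unfiled(items: List[ActionItem]) -> List[ActionItem]:
    """Return action items raised during the incident that have no ticket yet."""
    return [item for item in items if item.ticket_key is None]


items = [
    ActionItem("Add connection pool alert", "OPS-101"),
    ActionItem("Raise pool size limit", "OPS-102"),
    ActionItem("Document rollback procedure"),
    ActionItem("Add canary stage to deploy pipeline"),
    ActionItem("Review retry budget in client"),
]

missing = unfiled(items)
print(f"{len(missing)} of {len(items)} action items have no ticket")
```

An automated sync makes this check unnecessary by creating the ticket at the moment the action item is recorded.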

incident.io's Jira integration auto-creates tickets with full field mapping and keeps fields synchronized. Confluence export pushes postmortems with one click, maintaining formatting and embedded links.

Top incident postmortem software for microservices teams

I've evaluated the leading platforms based on cloud-native context capture, Kubernetes integration depth, automation capabilities, and time-to-value for SRE teams.

incident.io: Best for automated timeline capture and Slack-native workflows

incident.io positions itself as the postmortem tool that writes itself. Instead of providing a blank document after the incident, it captures the entire incident as it unfolds through Slack commands and integrations.

How it works: When you run an incident using /inc commands, every action auto-populates the timeline. Role assignments (/inc assign @sarah), severity changes (/inc severity critical), Slack threads, shared Datadog links, and escalations all become timeline entries. When someone shares a dashboard snapshot, we preserve it with a timestamp. When the team discusses rollback options in Slack, the conversation is already part of the record.

Our Scribe feature records and transcribes incident calls, capturing decisions made verbally. When you type /inc resolve, our AI drafts the postmortem automatically using all captured data. The result is typically 80% complete, requiring 10-15 minutes of review and refinement instead of 90 minutes of writing from scratch.

Cloud-native strengths: Deep integration with Kubernetes observability tools. Datadog integration connects monitors to incidents, Prometheus Alertmanager routes into the platform, and service catalog functionality maps incidents to specific microservices automatically. When a pod crashes, the platform knows which service owns it and who to page.

Pricing: Team plan starts at $19 per user per month for incident response, with on-call adding $12 per user per month. Pro plan costs $25 per user per month for incident response plus $20 per user per month for on-call ($45 total), adding unlimited workflows, Microsoft Teams support, and AI-powered postmortem generation.
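For budgeting, the per-user prices above translate into monthly totals as follows. This is a rough sketch: the 20-person team size is hypothetical, and the calculation assumes every user needs both incident response and on-call. It ignores billing terms and discounts.

```python
PLANS = {
    # plan: (incident response, on-call add-on), USD per user per month
    "team": (19, 12),
    "pro": (25, 20),
}


def monthly_cost(plan: str, users: int, with_on_call: bool = True) -> int:
    """Total monthly cost in USD for a given plan and number of users."""
    response, on_call = PLANS[plan]
    per_user = response + (on_call if with_on_call else 0)
    return per_user * users


print(monthly_cost("team", 20))  # 20 * (19 + 12) = 620
print(monthly_cost("pro", 20))   # 20 * (25 + 20) = 900
```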

Setup time: Typical 3-5 day implementation versus 2-3 weeks for complex platforms.

"I enjoy that everything (or most things) is on Slack. I'm on slack all day at work, so not having to flick through other apps to get all my information is vital." - Kimia P. on G2

Best for: Teams already centered on Slack or Microsoft Teams who want zero-friction postmortem automation and prefer opinionated defaults over infinite customization.

Limitations: Not designed for microservice SLO tracking (Blameless is stronger there). Requires Slack or Microsoft Teams as the primary collaboration platform. Less alerting customization than PagerDuty's sophisticated rules engine.

Blameless: Best for SRE-focused reliability engineering and SLOs

Blameless (now part of the FireHydrant ecosystem) positions itself as the platform for teams deeply invested in Google SRE methodology. It emphasizes error budgets, SLO tracking, and the mathematical framework of reliability engineering.

How it works: The platform automatically builds incident timelines and generates postmortem drafts directly in collaboration tools. Workflow automation handles repetitive tasks during incidents through defined rules and triggers.

Cloud-native strengths: SRE AI extends traditional practices by adding context to SLIs, incorporating ML-based anomaly scores to make error and latency measurements more meaningful. The platform automates root cause analysis by reconstructing incident timelines and surfacing probable causes from logs and metrics.

Best for: Organizations prioritizing SLO/SLI frameworks and error budget management. Teams that want deeper reliability engineering features beyond incident response coordination.

Trade-offs: Heavier implementation compared to incident.io's rapid deployment. More sophisticated in reliability mathematics but potentially slower time-to-value for teams that just need effective postmortems without full SRE program adoption.

PagerDuty: Best for enterprise alerting with basic postmortem capabilities

PagerDuty built its reputation on rock-solid alerting and escalation. The platform excels at getting the right person paged at the right time with battle-tested reliability.

How it works: PagerDuty focuses on alert routing, noise reduction, and on-call scheduling. Postmortem features exist but feel like a separate module rather than core functionality. Teams typically use PagerDuty for alerting and then switch to other tools (Confluence, Jira, incident.io) for postmortem work.

Pricing: Professional plan costs $25 per user per month, Business plan $49 per user per month. However, the base platform plus AI features, noise reduction, and runbooks can reach $60-80 per user per month once you add the capabilities needed for complete incident management.

Best for: Large enterprises with complex alerting requirements, teams already invested in PagerDuty's ecosystem, organizations that need maximum alerting customization and can accept higher costs.

Limitations: Pricing escalates quickly with per-seat charges and add-ons. Post-incident learning features are weaker than dedicated postmortem platforms. The "smoke detector versus fire response team" distinction applies here: PagerDuty excels at alerting but leaves coordination and documentation gaps.

FireHydrant: Best for service catalog integration and structured retrospectives

FireHydrant competes directly with incident.io in the modern incident management space, emphasizing service catalog depth and customizable retrospective templates.

How it works: FireHydrant logs all incident events to a timeline, and responders can star key items during the incident to highlight them for later retrospectives. AI Copilot drafts answers to retrospective questions based on gathered context. The platform emphasizes structured data collection with templates.

Cloud-native strengths: Strong service catalog functionality that maps dependencies between microservices, identifies downstream impacts, and surfaces ownership information during incidents. Good for teams wanting deep service relationship modeling.

Trade-offs: Both incident.io and FireHydrant cut postmortem time by 60-80%. Our strength is rapid narrative generation ready for immediate review. FireHydrant's strength is structured, template-driven retrospectives with deep customization. FireHydrant pricing requires a custom quote rather than being published transparently.

Comparison of postmortem tools for SaaS teams

| Feature | incident.io | Blameless | PagerDuty | FireHydrant |
|---|---|---|---|---|
| Automation level | High (80% auto-draft) | High (AI timeline) | Moderate | High (AI-assisted) |
| K8s/cloud context | Deep integrations | SLO/SLI focused | Alert-focused | Service catalog |
| Setup time | 3-5 days | 2-3 weeks | 1-2 weeks | 1-2 weeks |
| Pricing | Published ($19-45/user) | Contact sales | Published ($25-80+/user) | Contact sales |
| AI features | Advanced (Scribe + auto-draft) | Strong (RCA) | Add-on cost | Strong (Copilot) |
| Call transcription | Yes (Scribe) | No | No | Yes |
| Slack-native | Full workflow | Integration | Integration | Integration |

Checklist for effective cloud-native postmortems

Use this framework to evaluate whether your postmortem process captures what matters in Kubernetes environments:

Timeline precision:

- Does the timeline include exact timestamps for every deployment, configuration change, and manual intervention?

- Are alert timestamps correlated with system metric spikes automatically?

- Can you trace the cascade: when Service A failed → when Service B started showing latency → when Service C began rate limiting?

Ephemeral data preservation:

- Are pod logs captured before termination and linked in the postmortem with search URLs?

- Do you have container resource snapshots (CPU, memory, network) from the failure window?

- Are distributed trace IDs embedded so you can replay the exact request path months later?

Human decision context:

- Are verbal decisions from incident calls transcribed and timestamped?

- Do you know who suggested each mitigation approach and why the team chose one over another?

- Is the "why we didn't rollback immediately" context preserved?

Integration completeness:

- Do Datadog or Grafana dashboard snapshots preserve the exact time window, not just current state?

- Are GitHub PR links included for the deployment that triggered the incident?

- Do follow-up action items flow into Jira or Linear automatically with full context?

Learning accessibility:

- Can new engineers search past postmortems by service name, error type, or root cause?

- Are postmortems published within 24-48 hours while memory is fresh?

- Do postmortems link to related incidents that share patterns?

If your team is still struggling to meet these postmortem criteria, schedule a demo to see how cloud-native teams are cutting postmortem preparation time from hours to minutes. You'll see live examples of Kubernetes incidents flowing through automated capture, real-time collaboration during incident response, and how teams turn those insights into Jira tickets without manual translation.

Key terms

Ephemeral infrastructure: Kubernetes pods, containers, and compute resources that exist temporarily and disappear when terminated, taking their logs and state with them. This makes post-incident reconstruction difficult without automated capture.

Distributed tracing: A method of tracking requests as they flow through multiple microservices, assigning unique trace IDs that allow engineers to reconstruct the complete path of a single transaction across dozens of services.

Timeline capture: The automated recording of all incident events (alerts, chat messages, decisions, system changes) with precise timestamps, eliminating the need to manually reconstruct what happened after resolution.

RCA (root cause analysis): The specific investigation into the underlying technical or process failure that triggered an incident, answering "why did this happen?" rather than just "what happened?"

Service catalog: A centralized registry mapping microservices to their owners, dependencies, and operational context, enabling incident management platforms to automatically page the right team when a specific service fails.
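To make the service catalog idea concrete, here is a minimal sketch of a catalog that answers two incident-time questions: who owns a failing service, and which services sit downstream of it. The catalog contents, team names, and function names are all hypothetical and do not reflect any vendor's data model.

```python
from typing import Dict, Set

# Hypothetical catalog: each service lists its owner and what it depends on.
CATALOG: Dict[str, dict] = {
    "postgres": {"owner": "team-data", "depends_on": []},
    "orders-api": {"owner": "team-orders", "depends_on": ["postgres"]},
    "checkout-web": {"owner": "team-storefront", "depends_on": ["orders-api"]},
}


def owner_of(service: str) -> str:
    """Team to page when `service` fails."""
    return CATALOG[service]["owner"]


def downstream_of(service: str) -> Set[str]:
    """Services that directly or transitively depend on `service`."""
    impacted: Set[str] = set()
    frontier = [service]
    while frontier:
        current = frontier.pop()
        for name, entry in CATALOG.items():
            if current in entry["depends_on"] and name not in impacted:
                impacted.add(name)
                frontier.append(name)
    return impacted


print("page:", owner_of("postgres"))
print("impacted:", sorted(downstream_of("postgres")))
```

Walking the dependency graph in reverse is what lets a platform warn the owners of affected services before their own alerts fire.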
