5 reasons why you shouldn't buy incident.io

Not many companies will tell you why you shouldn’t use their product, but any product that tries to be everything to everyone is doomed to failure.

When you build without a specific user in mind, your target becomes the intersection of many viewpoints, and what you build is the lowest common denominator. What usually follows is software that can technically do everything, but feels unfocused, complex, and unpleasant to use. Something everyone is equally unhappy with. I’m sure you’ve got a piece of software in mind that fits the bill here!

So why write this post? At incident.io we do have opinions, and we know exactly the kind of organizations where we’re a good fit. We make trade-offs around what we’re building and our long-term goals to fit those organizations and individuals. Naturally, because we’re building for these folks specifically, this isn’t going to be the ideal product for everyone, which is very OK.

Rather than sell you on why you should use us, I thought it might be fun (and maybe easier?) to highlight where we’re not a good fit. And as with most things in life this isn’t a set of hard-and-fast rules, but rather a rough guide intended to help you make the right decision.

If you’re in any doubt over whether we’re the right choice for levelling up how you deal with incidents at your organization, come and talk to us!

So let’s get into it! My top five reasons why you shouldn’t buy incident.io:

1. You’re looking for an SRE-only tool

When things go wrong in organizations, it’s pretty common that the folks in Site Reliability Engineering (SRE), platform and other lower-level engineering teams get involved. These teams are often the ones that have the most acute pain when it comes to incidents, and as a result there’s no shortage of SRE-focused tools to help make this better.

But incidents don’t exist solely within the technical space. They require people from all corners of the organization to contribute and collaborate, and the tools that cater for engineering alone are leaving significant value on the table.

When your site or service is down, engineers fix whatever's broken, but customer support deal with inbound from customers, execs need to be looped in to make decisions, teams in compliance need to assess impact on regulation, etc. And when the dust has settled, you want all of these different people involved to help determine how you can do it better next time.

It’s not that incident.io isn’t a good fit for an SRE team – and we have many SRE teams using us – but when we need to make trade-offs for specific teams vs the whole organization, we’re leaning toward the latter.

In practice that means things like building rock-solid integrations with customer support products before we build service catalog integrations, and building a policy engine that allows senior stakeholders and compliance teams to have confidence that the right things are being done before we have a Terraform provider.

If you’re aligned on our vision of engaging your whole organization in incident response, incident.io will be a great fit. If you don’t buy it, and you’re after a tool for tracking incidents against your microservices and measuring your SLOs, you might find better alternatives elsewhere.

2. You’re looking for a Slack bot

If you look at our prices and feel like we’re an overpriced Slack bot, then you’re either looking for something that we’re not, or we haven’t done a good enough job of communicating the breadth and capabilities of the platform.

We’re building incident.io on top of Slack because it’s where our customers are communicating, and effective incident response is founded on rock-solid communication. We exist in Slack because it's simple, ergonomic, and allows the whole organization to get involved with minimal friction.

But incident response is one part of a much bigger picture when it comes to dealing with failure. To increase the resilience of your organization you need to be able to:

- Define and encode how you should be responding, so it actually happens. Paper-based processes just don't work!

- Integrate with multiple tools within different corners of your org. Nobody wants to be hopping between five different tools when they’re under pressure.

- Keep track of important events within an incident to help build up an accurate timeline. This is helpful for folks catching up, and useful as the basis for follow-up activities.

- Collect the perspectives of people involved and collaborate on curating an accurate picture within the timeline.

- Build and track post-mortem documents, action items and follow-up work

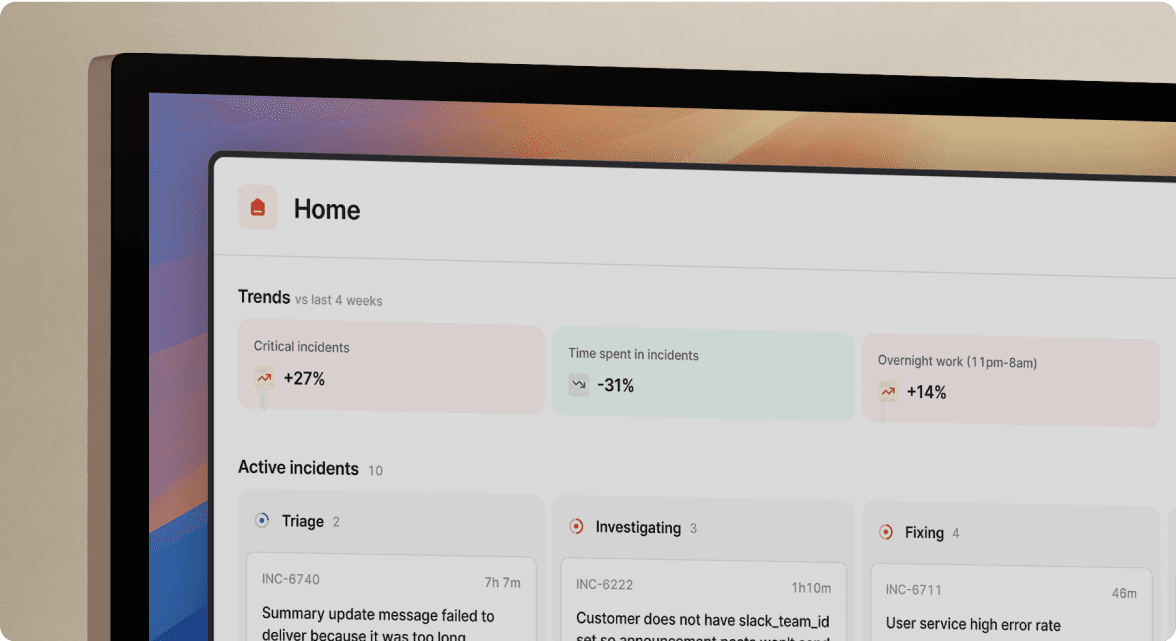

- Find, review and learn from past incidents, whilst identifying trends and gathering useful insights

Hopefully you get the picture – there’s a lot more to being a resilient organization than simply creating a new channel in Slack!

If none of that resonates, and what you're after is a new channel and some slash commands, incident.io is going to feel overpriced.

3. Your organization has fewer than 20 people

It’s not that smaller organizations don’t have incidents, or that the ones they have aren’t important, but when you know the name, role and coffee order of everyone within your company you probably aren’t facing the kind of complexity we help to resolve.

It’s never too early to think about getting good foundations in place, but during the early days of a company you need to be incredibly judicious with your time and effort, and deploying an incident management solution might not be the kind of thing you want to prioritise.

If I’ve not done enough to dissuade you, or you’re small but growing fast and want to be prepared, feel free to check out our Starter plan, which provides access to the basics and a solid foundation for you to build on.

4. You want to customise everything

We often describe our approach as “being opinionated but with enough flexibility to imprint your process”. We have no idea what your organization needs to do when a critical incident is declared, so we allow you to customise that to your needs. But we do have opinions about how you should respond: your incidents should have a severity, a lead, and some key statuses.

To highlight a few examples:

| Opinions | Flexibility |
|---|---|
| Every incident has a lead | We let you define what that role is called |
| Every incident has a title, summary, severity and status | We let you define any number of additional data points using custom fields. If you need to collect the number of impacted customers, the URL of the Google Drive folder for evidence, or the impacted service, we’ve got you covered. |
| Declaring an incident creates a new channel | We let you define any other automated actions that are important to your response. Need to page the CEO when a critical incident is declared? Need to create a set of default actions when the database crashes? Workflows allow you to bring your process to us. |
| Follow-up work should be tracked and logged against incidents | You can track your work where it makes sense to you, whether that’s Jira, Linear, GitHub, etc. |
| Post-mortems should be attached to incidents | We let you create these automatically, by integrating with document providers, and defining the templates for how they should look. We also allow you to define which incidents need them using policies. |

We’re not building Jira here, so if you’re looking for infinite flexibility you might find us a little rigid. If you want to import years of experience and a host of sensible defaults though, you know where we are!

5. Your goal is to minimise MTTR

MTTR, or Mean Time to Recovery, is a measure of how quickly you manage to restore your service after it fails. Broadly speaking it works by looking at all your past incidents, and averaging how long each one took from start to end.
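If it helps to see the arithmetic, here's a minimal sketch of that average in Python. The incident timestamps are made up for illustration, and `mean_time_to_recovery` is a hypothetical helper rather than anything from incident.io's product or API.

```python
from datetime import datetime, timedelta

# Hypothetical incident records as (started_at, resolved_at) pairs.
# Illustrative data only, not real incidents.
incidents = [
    (datetime(2024, 1, 3, 9, 0), datetime(2024, 1, 3, 9, 30)),     # 30 minutes
    (datetime(2024, 1, 10, 14, 0), datetime(2024, 1, 10, 14, 45)), # 45 minutes
    (datetime(2024, 1, 20, 22, 0), datetime(2024, 1, 21, 0, 15)),  # 135 minutes
]


def mean_time_to_recovery(records):
    """Average the time from start to resolution across all incidents."""
    if not records:
        raise ValueError("need at least one incident to compute MTTR")
    total = sum((end - start for start, end in records), timedelta())
    return total / len(records)


mttr = mean_time_to_recovery(incidents)
print(mttr)  # 1:10:00
```

In this made-up data the single long incident pulls the average up to 70 minutes, even though two of the three were resolved in under an hour.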

It seems entirely natural and intuitive to want to drive your recovery times down, but unlike a number of other incident platforms, we’re not going to promise to do this.

To be very, very clear: we’re not against speeding up your response, and we firmly believe we can help to make your process smoother, faster, and less effortful. But if your focus is on the MTTR graph rather than the overall resilience of your organization, the happiness of your people, and the longer-term benefits that a healthy incident culture can drive, then you might find we’re not the perfect fit.

If you’ve made it here, the chances are you’ve not found a good reason why incident.io wouldn’t be an excellent fit for you. Obviously then, the next step is to chat to us or get started by yourself. And if you’re not ready to take a look but want to share your opinions, I’m @evnsio on Twitter 😉

I'm one of the co-founders, and the Chief Product Officer here at incident.io.
