SRE incident post-mortem best practices: Templates, process & learning culture

Updated March 13, 2026

TL;DR: Most post-mortems fail not because engineers lack skill, but because the process punishes honesty and drowns teams in manual reconstruction work. Effective post-mortems need three things working together: a blameless culture where "human error" is the start of the investigation, not the end; automated timeline capture that eliminates 60-90 minutes of archaeology per incident; and disciplined action item tracking that closes the loop on systemic fixes. This guide covers all three, with a copy-paste template and a five-step process your team can run this week.

Three days after a P1 resolves, you open a blank Google Doc to write the post-mortem. You spend 90 minutes scrolling through Slack threads, cross-referencing PagerDuty alerts, and trying to remember what your team decided on that 2 AM Zoom call. You publish the document five days late with an incomplete timeline, file it in Confluence, and watch the same incident recur three months later because nobody completed the action items.

This is the post-mortem archaeology problem, and it repeats at almost every engineering organization at scale. We solve it by addressing three failure points: blameless culture (so engineers tell the truth), automated timeline capture (so you skip the archaeology), and disciplined action tracking (so fixes actually happen). Google's SRE Book on postmortem culture established the cultural foundation, and modern tooling automates the data layer, but you need both working together.

What is an SRE incident post-mortem?

A post-mortem is a written record of what happened during an incident, why it happened, and what the team will do to prevent recurrence. Google's postmortem documentation defines the standard artifact as including a summary, timeline, root cause analysis, impact assessment, and corrective action items with owners and due dates.

Two terms worth clarifying:

- The post-mortem: The document and the overall process covering data gathering, analysis, and action tracking.

- The incident review: The synchronous meeting where the team walks through the drafted document together.

These terms get used interchangeably in practice, but keeping the distinction matters for process design. A review is a meeting and a post-mortem is an artifact, and the artifact should exist before the meeting starts, not get created during it.

Why run post-mortems at all? Not as punishment or compliance theater. The goal is organizational learning: turn one team's outage into a shared improvement that reduces the probability of the same failure pattern hitting a different service next month. As Google's SRE Workbook puts it, postmortems should reach "the widest possible audience that would benefit from the knowledge or lessons imparted."

The blameless post-mortem: Moving beyond "human error"

Why accountability doesn't mean blame

"Blameless" is one of the most misunderstood terms in SRE. It doesn't mean "no consequences" or "anything goes." It means assuming that everyone involved in an incident had good intentions and acted on the best information available to them at the time.

Removing the fear of consequences frees people up to be honest about their missteps and misunderstandings. That's the only way to fix systemic issues. Accountability shifts from "who broke it" to "what system condition allowed this to break, and how do we fix the system?"

The language you use in the document determines what your team investigates. Here's the same scenario written two ways:

| Blame-oriented | Blameless |
|---|---|
| "Engineer X deployed a buggy change" | "The CI/CD pipeline did not catch the bug before production" |
| "The on-call was slow to respond" | "Alert noise caused fatigue, delaying triage of a critical signal" |
| "The team missed a warning sign" | "Warning signs were not documented in runbooks, making them easy to miss under pressure" |

The first column produces "retrain the engineer" action items. The second column produces fixable system changes. Modern SRE thinking views incidents as arising from complex interactions between tools, processes, and communication breakdowns, not individual negligence. That framing is not just compassionate, it's analytically correct.

Creating psychological safety for honest reporting

Culture is upstream of process. If engineers believe admitting a mistake leads to punishment, they hide details, minimize impact estimates, and produce post-mortems that are technically complete but factually thin. Google's SRE Book on postmortem culture is explicit: "for a postmortem to be truly blameless, it must focus on identifying the contributing causes of the incident without indicting any individual or team for bad or inappropriate behavior."

Build this safety by modeling honesty yourself. When a VP of Engineering says "here's the deployment I approved that contributed to last Tuesday's outage, and here's what I'm changing in my review process," it does more for psychological safety than any all-hands speech about blameless culture. Review every post-mortem before publishing to catch blame-oriented language.
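A first pass of that language review can be partly mechanical. The sketch below is a minimal illustration, not a feature of any tool mentioned here: the phrase list in `BLAME_PATTERNS` and the function name `flag_blame_language` are assumptions chosen for this example, and a human reviewer still makes the final call.

```python
import re

# Hypothetical phrase patterns; tune these to your own team's writing.
BLAME_PATTERNS = [
    r"\bhuman error\b",
    r"\b(failed|forgot|neglected) to\b",
    r"\bshould have\b",
    r"\b(careless|negligent|sloppy)\b",
    r"\bwas slow to\b",
]


def flag_blame_language(text: str) -> list[tuple[int, str]]:
    """Return (line_number, matched_phrase) pairs for lines that may read as blame."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for pattern in BLAME_PATTERNS:
            match = re.search(pattern, line, flags=re.IGNORECASE)
            if match:
                findings.append((line_number, match.group(0)))
    return findings


draft = """The on-call was slow to respond.
Alert noise caused fatigue, delaying triage of a critical signal."""

for line_number, phrase in flag_blame_language(draft):
    print(f"line {line_number}: consider rephrasing '{phrase}'")
```

A pattern match only marks a sentence for a second look. Rewriting it to name the system condition is still the reviewer's job.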

"incident.io helps promote a blameless incident culture by promoting clearly defined roles and helping show that dealing with an incident is a collective responsibility." - Saurav C. on G2

How to conduct an incident post-mortem: A 5-step process

Step 1: Appoint an owner and gather the timeline

Assign a single post-mortem owner immediately after the incident resolves, ideally the Incident Commander. This person drives the document through to completion and doesn't need to write every section, but ensures it gets done.

The hardest part of step one is building the timeline. Reconstruction wastes 60-90 minutes per incident as teams search through chat history, monitoring tools, and call recordings trying to piece together what happened. Start reconstruction within 24 hours of resolution, while the Slack thread remains readable and details stay fresh. Context degrades fast and the longer you wait, the more narrative replaces evidence.

The incident.io post-incident flow automates this entirely. Every /inc command, role assignment, severity change, and key message is timestamped automatically as the incident runs in Slack, so when you type /inc resolve, the timeline is already built. More on this in the automation section below.

Step 2: Analyze root causes using the 5 Whys

The 5 Whys technique moves your analysis from proximate symptom to systemic cause by asking "why" five times in succession. Here's a concrete example using a database CPU spike:

- Why did the service degrade? The database CPU hit 100%.

- Why did CPU hit 100%? A specific query was running a full table scan on a large table.

- Why was it doing a full table scan? A new code path queried a column with no index.

- Why was unindexed code deployed? The deployment pipeline has no query performance check against production-scale data.

- Why doesn't the pipeline have this check? The code review process has no standard for query performance.

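Systemic root cause: Two specific process gaps (no query performance check in the deployment pipeline, and no query performance standard in code review), each of which can be assigned an owner and fixed.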

Modern SRE theory prefers "contributing factors" over "root cause" for complex incidents, because failures arise from multiple interacting conditions rather than a single broken part. Use the 5 Whys to surface contributing factors, then list them explicitly rather than forcing everything into a single cause. The breakdown of the post-mortem problem explains why single-cause framing consistently produces weaker post-mortems.

Step 3: Draft the document asynchronously before the meeting

Never open the review meeting with a blank document. The meeting's job is to challenge, refine, and extend an existing draft, not to create one from scratch in real time. Pre-populate the timeline, impact summary, and a first pass at contributing factors before you put eight engineers in a room together.

Google's SRE Workbook recommends sharing the first draft with a small group of senior engineers to assess completeness before wider review. This two-stage review catches gaps and blame-oriented language before it becomes org-wide.

Step 4: Run the review meeting

The review meeting has one job: deepen the analysis and align on action items. It's not a blame session, a status update, or a therapy circle.

Who attends: the Incident Commander (leads discussion), on-call engineers who responded, service owners for impacted systems, and optionally the Engineering Manager for pattern context or product/support stakeholders for customer impact context.

Facilitation rules:

- Start with the timeline, not the root cause (facts first, analysis second). If discussion veers toward "who," redirect to "what condition allowed this."

- End every meeting with action items assigned to specific owners. "We should improve our deployment pipeline" is not an action item. "Sarah adds query performance checks to the staging deploy step by March 19" is.

Step 5: Assign and track follow-up actions

This is where most post-mortem programs collapse. The document gets written, the meeting gets held, five action items get listed, and three months later the same incident recurs because those items lived in a Google Doc nobody reopened.

If your action item completion rate falls below 50%, your post-mortems are theater: written to satisfy a process, not to change anything.

What makes an action item effective:

- Specific: "Add rate limiting to the /search API endpoint at 100 req/s" not "improve rate limiting."

- Owned: One named person is responsible, not "the team."

- Due-dated: A specific date, not "soon" or "Q2."

- Prioritized: Distinguish between mitigative actions (fixes the immediate gap) and preventative actions (prevents the class of problem).
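The four criteria above can be sketched as a small validation step that runs before an item is filed in a tracker. This is an illustrative sketch only: the `ActionItem` fields, the `VAGUE_OWNERS` set, and the five-word threshold for "specific" are assumptions made for this example, not rules from any tracker or from incident.io.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical placeholder owners that signal "nobody in particular".
VAGUE_OWNERS = {"", "the team", "team", "everyone", "tbd"}


@dataclass
class ActionItem:
    description: str
    owner: str
    due: Optional[date]
    kind: str  # expected: "mitigative" or "preventative"


def problems(item: ActionItem) -> list[str]:
    """Return the reasons an action item fails the four criteria (empty if none)."""
    issues = []
    # Crude proxy for "specific": very short descriptions are rarely verifiable.
    if len(item.description.split()) < 5:
        issues.append("description too short to be specific")
    if item.owner.strip().lower() in VAGUE_OWNERS:
        issues.append("needs one named owner")
    if item.due is None:
        issues.append("needs a specific due date")
    if item.kind not in ("mitigative", "preventative"):
        issues.append("kind must be mitigative or preventative")
    return issues


good = ActionItem(
    "Add rate limiting to the /search API endpoint at 100 req/s",
    "Sarah",
    date(2026, 3, 19),
    "preventative",
)
vague = ActionItem("improve rate limiting", "the team", None, "soon")

print(problems(good))   # no issues reported
print(problems(vague))  # all four criteria fail
```

The word-count check is deliberately crude. Its purpose is to prompt the author to add the endpoint, threshold, or system name that makes completion verifiable.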

Move action items to Jira, Linear, or your existing task tracker immediately after the meeting. incident.io's auto-export follow-ups does this automatically, so action items created during the incident or review land immediately in your existing workflow rather than orphaned in a document. The AI-suggested follow-ups feature analyzes the incident timeline to surface potential action items you might have missed, such as gaps in monitoring coverage or configuration changes that need documentation, so you catch follow-ups before they fall through the cracks.

SRE incident post-mortem template

Core components checklist

Before you publish, confirm every section is present:

- Summary: Two to three sentences explaining what happened, when, and what was affected.

- Impact: Quantified customer and business effects (users affected, error rate, revenue, SLO budget consumed).

- Timeline: Chronological list of key events with UTC timestamps, from first alert to full resolution.

- Contributing factors: Two to five systemic causes, framed blameless (process gaps, tool limitations, documentation failures).

- What went well: Explicitly note what worked during response, to reinforce those behaviors.

- Action items: Specific, owned, due-dated. Separated into mitigative (fixes this specific gap) and preventative (addresses the class of failure).

Google's canonical postmortem example follows this structure. Keep it as your reference for completeness, not as a template to copy field-for-field. Complex templates get abandoned.
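If your post-mortems are stored as markdown, the checklist can be enforced with a short pre-publish check. The following is a minimal sketch under that assumption. The function name `missing_sections` is hypothetical, and the heading names are taken from the checklist above.

```python
import re

REQUIRED_SECTIONS = [
    "Summary",
    "Impact",
    "Timeline",
    "Contributing factors",
    "What went well",
    "Action items",
]


def missing_sections(markdown_text: str) -> list[str]:
    """Return required section names that have no matching markdown heading."""
    headings = [
        m.group(1).strip().lower()
        for m in re.finditer(r"^#{1,6}[ \t]+(.+)$", markdown_text, flags=re.MULTILINE)
    ]
    # A heading such as "Timeline (UTC)" still counts as the "Timeline" section.
    return [
        name
        for name in REQUIRED_SECTIONS
        if not any(h.startswith(name.lower()) for h in headings)
    ]


draft = """## Post-mortem: Checkout latency
### Summary
### Impact
### Timeline (UTC)
### Action items
"""

print(missing_sections(draft))  # ['Contributing factors', 'What went well']
```

This only confirms that a section exists. Whether its content is honest and complete remains a question for the review.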

Example structure

Copy this directly into your Confluence page, Notion doc, or incident.io post-mortem editor:

## Post-mortem: [Incident Title]

**Date:** YYYY-MM-DD

**Severity:** P1 / P2 / P3

**Owner:** [Name]

**Status:** Draft / In Review / Published

---

### Summary

[2-3 sentences: what broke, when, for how long, what was affected]

### Impact

- Users affected: [number or %]

- Duration: [X minutes]

- SLO budget consumed: [X%]

- Revenue / business impact: [if applicable]

### Timeline (UTC)

- HH:MM — First alert fired in [tool]

- HH:MM — On-call paged

- HH:MM — Incident channel created, [name] assigned as IC

- HH:MM — [Key diagnostic action]

- HH:MM — Root cause identified: [brief description]

- HH:MM — Fix deployed / mitigation applied

- HH:MM — Incident resolved

### Contributing factors

1. [Process/tool/system gap 1]

2. [Process/tool/system gap 2]

3. [Process/tool/system gap 3]

### What went well

- [Behavior or tool that worked effectively]

### Action items

| Action | Owner | Priority | Due date | Status |
|--------|-------|----------|----------|--------|
| [Specific fix] | [Name] | High | YYYY-MM-DD | Open |
| [Specific fix] | [Name] | Medium | YYYY-MM-DD | Open |

incident.io's post-mortem creation flow pre-populates this structure from captured timeline data, so you're editing and refining rather than starting from scratch.

Measuring the effectiveness of your post-mortems

Reducing incident recurrence is the goal. Writing post-mortems is the mechanism. Track these three metrics to know whether your process is working:

1. Action item completion rate

This is the most direct signal. The 50% threshold is the failure point: below that, your team writes post-mortems to satisfy a process, not to change anything. Target 80% or above, with High-priority items resolved within 30 days.

2. Incident recurrence rate

The percentage of incidents that share a root cause with a previous incident in the last 12 months. Teams with healthy post-mortem programs track this as a primary outcome metric. If your repeat incident rate climbs above 30%, your post-mortems are producing documentation but not learning. KPI Depot benchmarks a good repeat incident rate at below 5%, indicating strong resolution processes and effective follow-through on action items.

3. Post-mortem completion time

The number of hours from incident resolution to published document. Target under 48 hours. Google's SRE Workbook recommends tracking this metric for trend analysis to identify systemic root-cause types over time.
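For teams without a dashboard, the three metrics can be computed from exported incident data. The sketch below assumes a hypothetical export shape: action items as dicts with a `status` key, and incidents as dicts with a `cause` label, sorted oldest first and already filtered to a 12-month window. None of these field names come from a real API.

```python
from datetime import datetime


def completion_rate(action_items: list[dict]) -> float:
    """Percentage of action items whose status is 'done'."""
    if not action_items:
        return 0.0
    done = sum(1 for item in action_items if item["status"] == "done")
    return 100.0 * done / len(action_items)


def recurrence_rate(incidents: list[dict]) -> float:
    """Percentage of incidents whose cause label appeared in an earlier incident."""
    if not incidents:
        return 0.0
    seen: set[str] = set()
    repeats = 0
    for incident in incidents:  # assumed sorted oldest first
        if incident["cause"] in seen:
            repeats += 1
        seen.add(incident["cause"])
    return 100.0 * repeats / len(incidents)


def hours_to_publish(resolved_at: datetime, published_at: datetime) -> float:
    """Hours between incident resolution and post-mortem publication."""
    return (published_at - resolved_at).total_seconds() / 3600


items = [{"status": "done"}] * 4 + [{"status": "open"}]
incidents = [
    {"cause": "unindexed-query"},
    {"cause": "expired-cert"},
    {"cause": "unindexed-query"},
    {"cause": "dns"},
    {"cause": "config-drift"},
]

print(completion_rate(items))       # 80.0
print(recurrence_rate(incidents))   # 20.0
print(hours_to_publish(datetime(2026, 3, 10, 2, 0), datetime(2026, 3, 11, 14, 0)))  # 36.0
```

The recurrence figure is only as good as the cause labels, so agree on a small, consistent vocabulary for contributing factors before trusting the trend.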

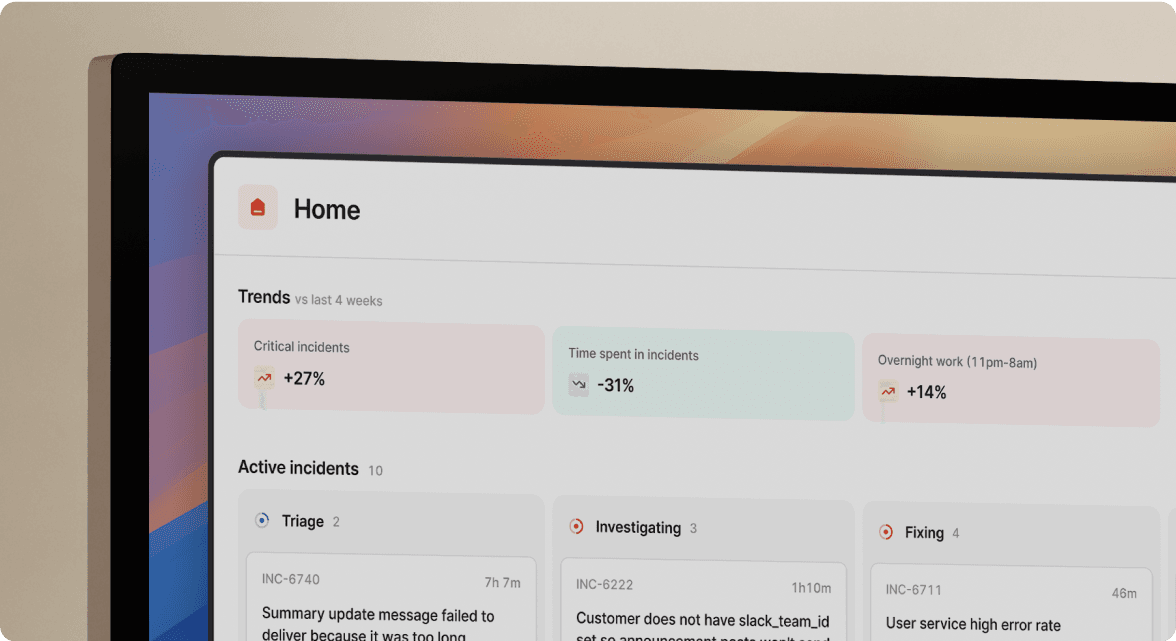

The incident.io Insights dashboard surfaces all three automatically: action item completion rates, incident frequency by service, and recurrence patterns across your incident history. Use this data directly in quarterly reliability reviews rather than building spreadsheets manually. For enforcing follow-up completion by severity, the follow-up policies configuration lets you require P1 action items to be resolved before an incident fully closes.

Automating the "archaeology": How tools reduce the burden

Auto-capturing timelines in Slack

Reconstruction is the biggest barrier to effective post-mortems. Manual reconstruction means scrolling back through Slack channels and pulling alert history from your monitoring tool three days after an incident. You rely on engineers' memories for decisions made on a 2 AM Zoom call, and context degrades fast.

incident.io eliminates this by building the timeline as the incident runs. Rather than reconstructing events afterward, every significant action auto-populates the record as the incident unfolds. Role changes, severity updates, shared monitoring links, and pinned decisions are all captured automatically as part of normal incident management activity, through commands like /inc escalate and /inc assign. Scribe records and transcribes incident calls in real time, capturing verbal decisions without requiring a dedicated note-taker.

"Another handy feature is its ability to automate routine actions, such as postmortem reports generation. This automation can significantly reduce the time spent on manual, repetitive tasks, reusing the incident communication channel on Slack as a basis for the postmortems summary." - Vadym C. on G2

When you type /inc resolve, post-mortem creation is already in progress. No archaeology. The document opens with the timeline pre-populated, so your team starts the post-mortem meeting with 80% of the data work already done.

Using AI to draft the narrative

Timeline capture gives you the raw data. The incident.io AI platform takes that data and generates a post-mortem draft covering incident summary, contributing factors, and suggested action items, based on what actually happened in the channel, not what someone remembers three days later.

The new post-mortem editor uses the incident timeline and participant context to help you move from raw information to a clear narrative. Engineers spend 10-15 minutes reviewing and refining an AI-generated draft rather than 90 minutes reconstructing events from memory.

Do the math for a typical SRE team. Eighteen incidents per month at 90 minutes of manual reconstruction each is 27 engineer-hours a month; at a $110/hour fully-loaded SRE cost, that comes to $35,640 annually in documentation work alone. Teams shifting from 90-minute manual reconstruction to 10-15 minute AI-assisted review can reclaim approximately $30,000 to $32,000 per year in engineering time before accounting for MTTR improvements.
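The arithmetic is easy to rerun with your own numbers. This short sketch uses the figures from the paragraph above (18 incidents per month, $110/hour); the constant and function names are illustrative.

```python
INCIDENTS_PER_MONTH = 18
HOURLY_COST_USD = 110


def annual_cost(minutes_per_incident: int) -> float:
    """Yearly cost of post-mortem data gathering at a given time per incident."""
    return INCIDENTS_PER_MONTH * 12 * minutes_per_incident * HOURLY_COST_USD / 60


manual = annual_cost(90)
print(manual)                    # 35640.0
print(manual - annual_cost(15))  # 29700.0 saved at 15 minutes per incident
print(manual - annual_cost(10))  # 31680.0 saved at 10 minutes per incident
```

Swap in your own incident volume and loaded hourly rate to see whether the savings justify a tooling change for your team.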

The SRECon 2025 AI in SRE discussion reflects the broader industry shift: teams that automate the documentation layer free their engineers to focus on the analysis layer, which is the part that actually drives reliability improvements.

"My favorite thing about the product is how it lets you start somewhere simple, with a focus on helping you run incident response through Slack. Once you're ready for more, it's got great features you can dive into... and integrations with all the major tools you'd expect." - Chris S. on G2

Automated timeline capture plus AI drafting turns your post-mortem meeting into a focused 45-minute analysis session rather than a two-hour reconstruction exercise. That shift makes post-mortems worth attending, which drives adoption, which compounds the learning over time.

Put this framework to work

Culture, process, and automation form the three legs of an effective post-mortem program. Remove culture and you get polished documents that don't tell the whole story. Remove automation and you get burned-out engineers who stop writing post-mortems. Remove process and you get documentation that changes nothing.

The incident.io post-mortem workflow handles the automation leg, so your team can focus on the culture and process legs where human judgment is irreplaceable.

Schedule a demo and we'll show you how the full incident-to-post-mortem workflow operates end to end, including how Intercom cut post-mortem completion from 5 days to 24 hours.

Key terms glossary

Blameless culture: A cultural operating principle where incident investigations focus on identifying the systemic conditions that led to failure, rather than assigning fault to individuals. Assumes good intentions and sufficient information as the default, and asks "how did the system allow this" rather than "who caused this."

Root cause analysis (RCA): A structured method for moving from incident symptoms to underlying systemic causes. Modern SRE practice prefers "contributing factors" over a single root cause, because complex systems fail through multiple interacting conditions rather than a single broken part.

MTTR (Mean Time to Resolution): The average time from first alert to full service restoration across all incidents in a measurement period. Calculated by summing total resolution time and dividing by incident count.

Timeline: A chronological record of key events, communications, role assignments, and decisions during an incident with UTC timestamps. Forms the foundational data for post-mortem analysis and replaces the need for memory-based reconstruction.

Action item: A discrete, trackable task assigned to a single named owner with a specific due date, designed to address a contributing factor identified in the post-mortem. Effective action items are specific enough that their completion is objectively verifiable.

Contributing factors: The set of systemic conditions (process gaps, tool limitations, documentation failures, communication breakdowns) that combined to produce an incident. Distinguished from a single "root cause" by acknowledging that production failures are typically multi-causal.

Post-incident flow: The structured sequence of steps after an incident resolves, covering timeline review, post-mortem drafting, review meeting, action item creation, and follow-up tracking. Configurable in incident.io to enforce the process automatically.
