We rebuilt our post-mortems from the ground up

Every team we talk to says some version of the same things about post-mortems: they take too long to draft, nobody reads them, and the follow-ups never get done.

It’s a huge shame, because well-written, well-researched post-mortems are genuinely one of the most useful things you can produce after an incident. They’re how you turn a bad day into something that actually makes you, and your team, better.

The challenge usually isn't that teams don't care; it's that the process around them is broken. You close the incident, open a blank document, and then spend the next few hours reviewing a tonne of information, copy-pasting from Slack, and trying to remember what happened, sometimes days ago.

We think that's fixable, and we’ve rebuilt our post-mortems product from the ground up. Today we're launching the new experience, and I want to walk you through what we’ve done and why.

Let AI do the heavy lifting

Much of the heavy lifting when writing a post-mortem is pulling information together; it’s digging through Slack threads or Teams conversations, cross-referencing the timeline, checking PRs, or just trying to remember what happened at 2am. Unless you’ve been living under a rock, this will sound like “something AI could just do for me”. We totally agree!

Here's how incident.io AI can now help with post-mortem workflows:

- Generate a first draft in one click. We consolidate all the context from your Slack or Teams channels, timeline, pull requests, custom fields, and any AI investigations you’ve run on the platform to write a full first draft. Not a template, a real write-up specific to your incident.

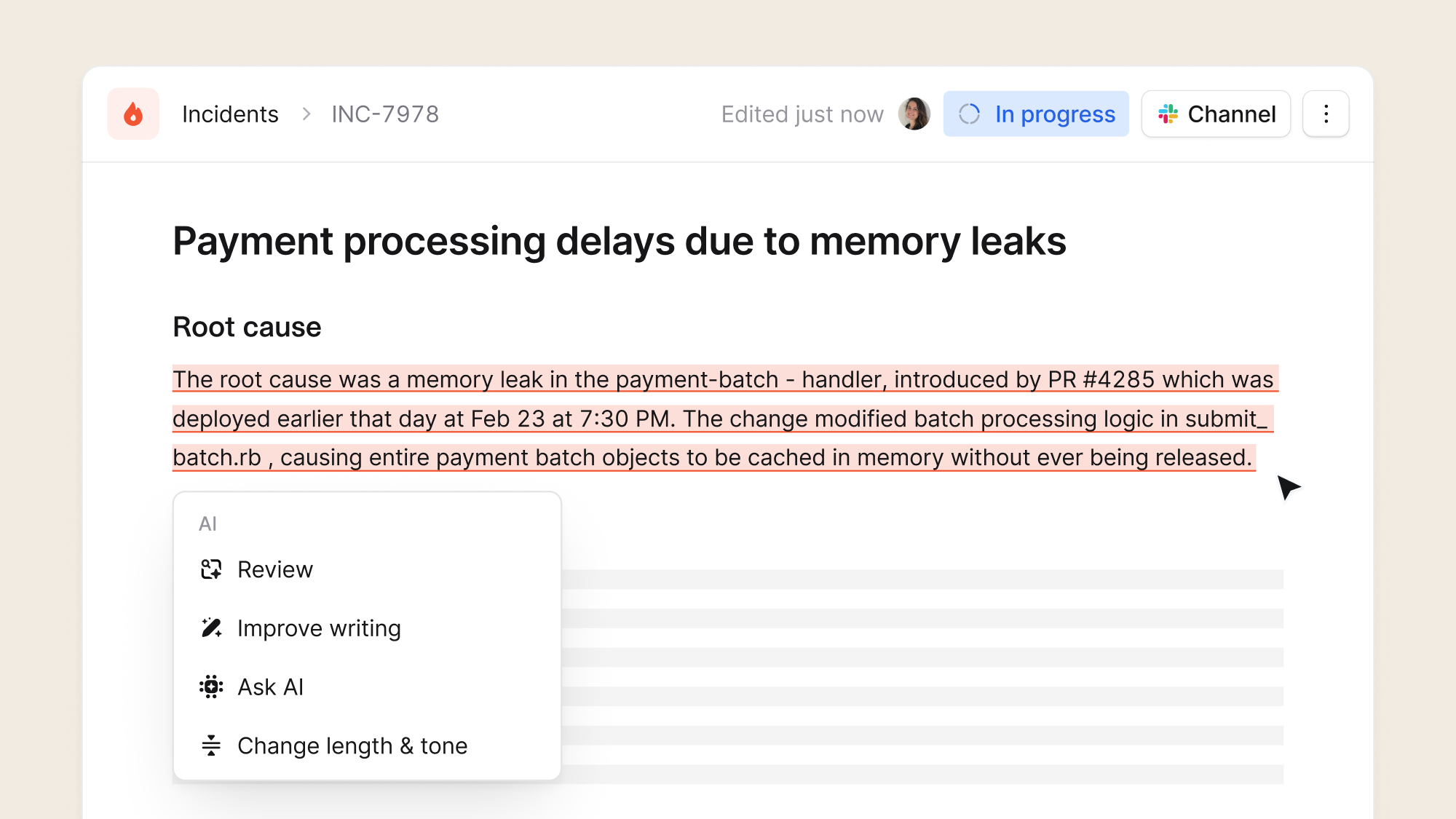

- Rewrite and enrich any section. Select any text and rework it: tighten the writing, pull in real incident context, or change the tone for a different audience. It knows everything that happened during your incident, not just what's on the page.

- Review your draft for accuracy. Once your draft is in a good place, you can check it against what actually happened. It goes through your document like a post-mortem expert, leaving comments where details are missing or numbers don't match. Comments are only visible to you, so you can run it as many times as you need to surface any glaring issues before you send it to a colleague for review.

- Take meeting notes with Scribe. If your team is running a debrief meeting and you’d like notes taken automatically for you, simply add Scribe. It transcribes your conversation and pulls key takeaways directly into your post-mortem, so you can focus on having a great discussion.

- Ask questions without leaving the editor. Open the chatbot and ask about your incident while you write: what happened, when things started, what the impact looked like, all without breaking your flow.

For the full rundown on each new feature, check out the changelog.

An editor tailor-made for post-mortems

Post-mortems are full of references to things that change over time as you learn more about what happened. Services get renamed, follow-ups get completed, timelines get updated.

With our new editor, all your incident data lives right inside the document, so everything stays accurate and in context without you having to maintain it manually. Variables such as the timeline, people involved, services affected, and custom fields are all live and synced.

- Type @ to pull in a GitHub PR, a Slack channel, or a catalog entry.
- Use / commands to add callouts, code blocks, and images.

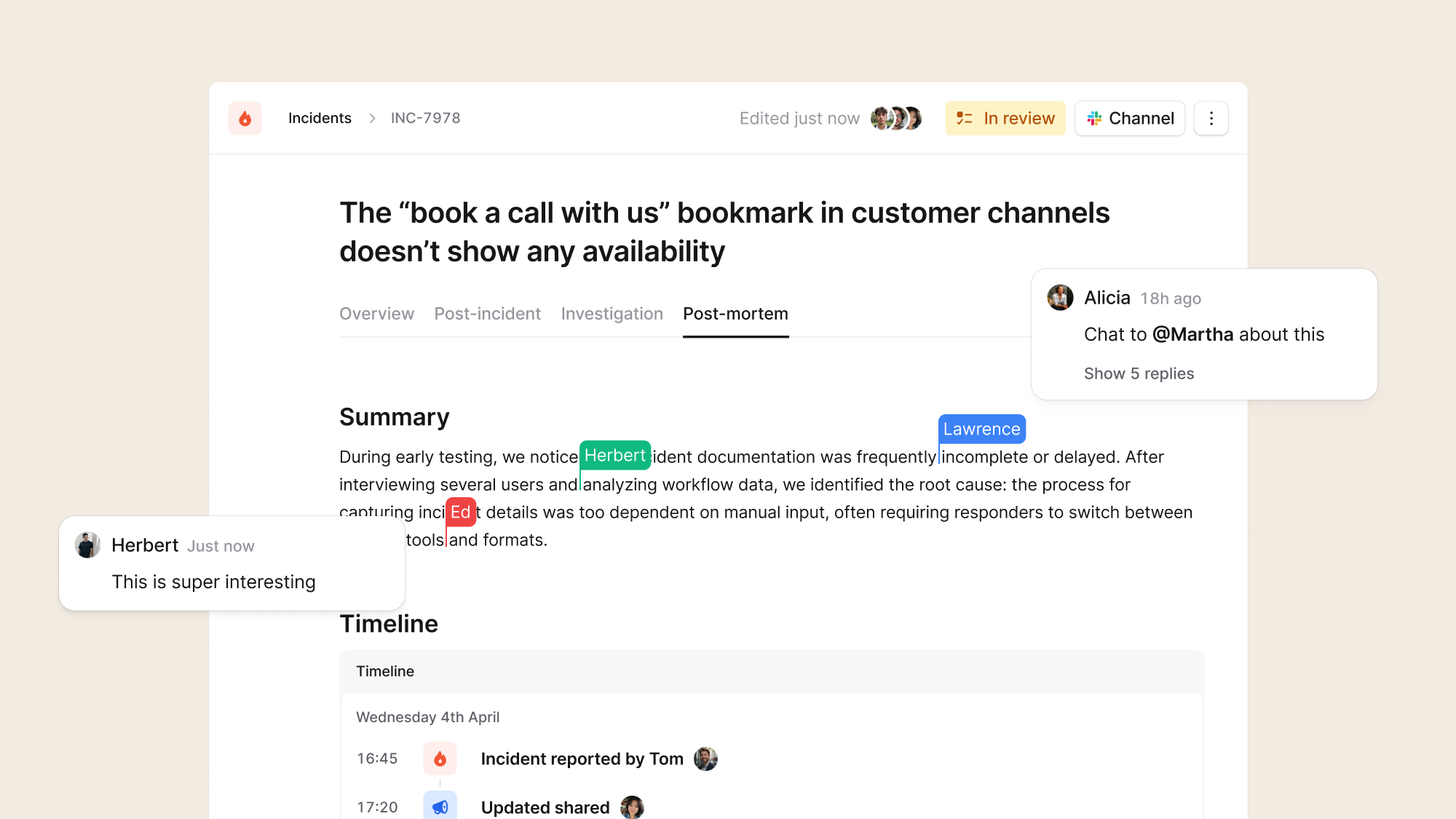

We also know post-mortems are rarely a solo activity, so multiple people can now work in the document at the same time, with live cursors, real-time edits, and inline comments with threaded replies. Tag a teammate on a specific section and have the conversation right where it's needed.

Up-level post-mortems across your whole organisation

Lastly, when you're running post-mortems at scale, you need to know what's been written, what's overdue, and whether anyone's actually reading them. We've built all the tooling to give you that visibility, so you can raise the bar across your whole team:

- Multiple documents per incident. Create different document types for specific audiences. You could have an internal post-mortem, a customer-facing RCA, and a non-technical summary for leadership, each with its own template and status.

- Post-mortem dashboard. Filter by status, severity, due dates, and incident lead. See what's overdue and what follow-ups are still open.

- Analytics. See who's read what, who's edited, and when, so you know if your post-mortems are having their intended impact.

- Automated exports. Send finished post-mortems to Google Docs, Confluence, Notion, or SharePoint, or use Workflows to export them automatically when a post-mortem is marked complete.

- Document API. Access your post-mortem data from custom integrations, LLM agents, and knowledge bases.

The goal is to make it easy to manage post-mortems effectively at scale, so the focus stays on learning from incidents, not herding cats and tracking documents.

Why we built this

We've had post-mortems in the platform for a while (it was one of the earliest things we prototyped, alongside our Slack integration!). But the previous experience was starting to feel tired and out of step with the quality of the rest of our platform.

We care deeply about this part of the incident lifecycle because it’s where you go from "that was bad" to "here's what we're going to do about it". Making that process feel genuinely good seemed like a problem we’re really well placed to solve, and one of the most important things we could work on.

The old experience was functional, and you could use it, but it wasn't the kind of thing that made you want to write a post-mortem, let alone enjoy it, and that matters. The whole point of a post-mortem is learning, and if the tool makes the process painful, people either skip it or do the bare minimum.

We wanted to build something that changes these dynamics: a tool where the honest reaction is "oh, that was actually pretty easy", where an AI draft saves you from a blank page and hours of admin, where we automatically catch the things you missed, and where, in the average case, a quality post-mortem can be written in hours, not days.

Available today

The new post-mortems editor is available today for all incident.io users. If you're not already using it, this is a pretty good reason to start.

Go try it out, we'd love to hear what you think!
