How to empower your team to own incident response

Responding to and managing incidents feels fairly straightforward when you’re in a small team. As your team grows, it becomes harder to work out who owns which services, especially during critical times. In those moments, you need everyone to know exactly what their role is so you can recover fast.

Moving to incident.io as the 7th engineer, from a scaleup of around 70 engineers, has given me a new perspective on what it means to own your code. Switching from a company where a centralised platform team held the pager and coordinated response to being part of the on-call rotation myself has been really eye-opening.

Previously I worked in a team that was moving towards a “you build it, you run it” approach. The intention was to give delivery teams autonomy and control over their own services, from creation all the way through to monitoring. As delivery teams grew, though, incident response became complicated. When the first responders didn’t have context on the code, it could be really hard to identify the root cause of a problem, and this only gets worse as systems become more varied and complex. Incident channels could have 20 people in them before anyone relevant was identified and looped in. That’s a lot of people pulled out of their flow without adding much value.

Personally, the disconnect between the code I wrote and what was happening in production left me lacking confidence. I felt like I didn’t understand the platform or how it worked, since I never followed my code’s journey beyond clicking “merge”. It’s easy to underestimate the importance of quality and reliability when your bugs aren’t waking you up at night.

It’s really hard to get engineers excited about ownership when it’s been someone else’s job for a long time. Teams feel uncomfortable about their 9-5 job description changing, evening and weekend pagers are scary, and companies understandably don’t want to be seen as piling on extra responsibilities without clearly communicating why this will actually save time.

It’s hard, but worth pushing past this. A core sense of ownership is vital to maintaining an empowered, skilled workforce of engineers who care about what they build. Here are some suggestions that can help get engineers excited about ownership again.

Start small

The idea of 24/7 support is scary. When you’re used to being shielded it’s easy to feel like you can’t deal with incidents independently, especially at 4am.

1. Find the path (or team) of least resistance

Is there a team that already has a good monitoring approach? Or a smaller product area with a clear sense of ownership? Even a team with just a few engineers who feel excited about the idea will do. It’s much easier to build momentum when there’s a head start.

It’s vital to encourage this publicly and loudly to start a culture change where other teams follow the example.

2. Don’t drop people in the deep end

Handing over the pager during working hours (9-5) is a great way to test the sensitivity of alerts without serious consequences. Dealing with issues while others are around gives people a safety net, and builds the confidence to handle things alone in the future.

Define clear expectations that teams should handle their own code within working hours. Document what support is available and where people can turn if things go wrong. Pairing engineers up for their first couple of rotations can go a long way towards making things feel less scary.
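As a rough illustration of a working-hours handover with pairs, here is a minimal Python sketch of alert routing. It assumes a team working 09:00 to 17:00, Monday to Friday, in UTC, and that out-of-hours alerts still go to an existing fallback such as a central platform team. `route_alert`, `Rotation` and the fallback are hypothetical names, not part of any paging tool’s API; in practice you would usually express this as on-call schedule configuration in your paging tool rather than code.

```python
from dataclasses import dataclass
from datetime import datetime, time, timezone

# Assumption: the team works 09:00-17:00, Monday to Friday, in UTC.
# Swap TEAM_TZ for the team's real zone (e.g. zoneinfo.ZoneInfo("Europe/London")).
TEAM_TZ = timezone.utc
WORK_START = time(9, 0)
WORK_END = time(17, 0)


def within_working_hours(at: datetime) -> bool:
    """Return True if the timezone-aware `at` falls inside working hours."""
    local = at.astimezone(TEAM_TZ)
    is_weekday = local.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and WORK_START <= local.time() < WORK_END


@dataclass
class Rotation:
    """Hypothetical pair sharing a shift during the team's first rotations."""
    primary: str
    buddy: str


def route_alert(at: datetime, rotation: Rotation, fallback: str) -> list[str]:
    """Return who should be paged for an alert fired at `at`."""
    if within_working_hours(at):
        # In hours: page the owning team's pair together.
        return [rotation.primary, rotation.buddy]
    # Out of hours: the existing fallback keeps the pager for now.
    return [fallback]
```

For example, `route_alert(datetime(2024, 5, 15, 10, 0, tzinfo=timezone.utc), Rotation("alice", "bob"), "platform-team")` returns `["alice", "bob"]` (a Wednesday morning), while the same call at 04:00 returns `["platform-team"]`. Widening the hours later is a one-line change, which mirrors the idea of growing into 24/7 support gradually.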

3. Keep it simple

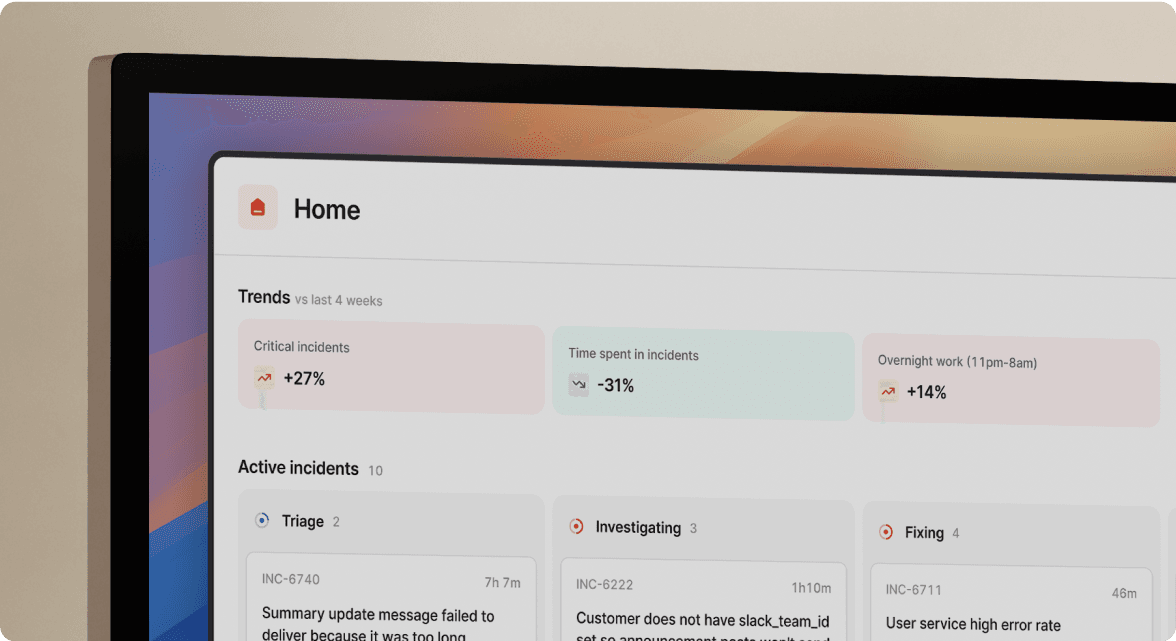

Declaring and managing incidents should be easy. If your team uses Slack, make sure they don’t have to leave it in order to coordinate response. Using an incident management tool like incident.io means people can raise incidents, deal with them, and escalate directly from Slack.

Configuring an expected incident flow in a tool like this goes a long way towards helping engineers make the right decisions under pressure, removing the “am I doing this right?” worry. A clear timeline of which decisions were made in previous incidents, and when, is also a great resource for showing people the scenarios they might encounter as they onboard into incident response. Keep a note of a few past incidents: they’re great learning material for newer engineers.

Remove the noise

When pagers aren’t involved, it’s easy to let alerting get messy. I’ve missed urgent issues before because of a lack of clarity and trust in what is pinging us.

Invest in streamlining your alerts to only include things that are “drop everything” moments.

Make sure your alerting process is easy to understand and modify. I’ve been in, and contributed to, situations where monitoring gets messier and messier because people just aren’t empowered to fix it themselves.

Encourage teams to get together and decide what they really care about, and give them time to implement those thoughts in their paging process.

Google’s four golden signals (latency, traffic, errors and saturation) are a great starting point for deciding what to monitor.
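To make “drop everything” a little more concrete, the sketch below checks a snapshot of the four golden signals (latency, traffic, errors and saturation) against paging thresholds, and only pages a human when one is breached. The `GoldenSignals` and `PagingThresholds` types are hypothetical, and every threshold value is a placeholder for whatever a team agrees on together, not a recommendation.

```python
from dataclasses import dataclass


@dataclass
class GoldenSignals:
    """One snapshot of a service's four golden signals."""
    latency_p99_ms: float  # latency: how long requests take (99th percentile)
    traffic_rps: float     # traffic: requests per second
    error_ratio: float     # errors: failed requests / total requests
    saturation: float      # saturation: fraction of capacity in use (0-1)


@dataclass
class PagingThresholds:
    # Hypothetical values; each team should agree its own "drop everything" levels.
    max_latency_p99_ms: float = 1000.0
    min_traffic_rps: float = 1.0  # traffic falling to ~zero can signal an outage
    max_error_ratio: float = 0.05
    max_saturation: float = 0.9


def breached_signals(s: GoldenSignals, t: PagingThresholds) -> list[str]:
    """Return the names of signals that cross a paging threshold."""
    breaches = []
    if s.latency_p99_ms > t.max_latency_p99_ms:
        breaches.append("latency")
    if s.traffic_rps < t.min_traffic_rps:
        breaches.append("traffic")
    if s.error_ratio > t.max_error_ratio:
        breaches.append("errors")
    if s.saturation > t.max_saturation:
        breaches.append("saturation")
    return breaches


def should_page(s: GoldenSignals, t: PagingThresholds) -> bool:
    """Page only when at least one signal is in "drop everything" territory."""
    return bool(breached_signals(s, t))
```

Anything that breaches no threshold can still go to a dashboard or ticket queue; the point is that the pager only fires for conditions the team has explicitly agreed are urgent, and the thresholds live somewhere the team can easily read and change.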

Empower everyone

There are naturally some people in each team who act as the firefighters. Perhaps they have the most legacy knowledge, understand the platform best, and are the first people to turn to when things go wrong. For code ownership to really work, you need to actively move away from relying on these roles and knowledge silos. The responsibility to take the pager and triage incoming incidents must be baked into the team’s culture. Focus on empowering the more hesitant members: the aim is for anyone and everyone in a team to be able to make sensible decisions about handling incidents on the fly.

A good support network and process to follow can really help here. Who can someone turn to if they’re stuck? What should the default flow be? How can they get others involved?

These are all questions I’d want well documented before being responsible for my team’s code out of hours.

In conclusion

Reaching an effective culture of end-to-end code ownership is difficult. Big cultural change can be uncomfortable. It’s crucial to be empathetic and understand that technical solutions will only go so far. Keeping people at the heart of the approach is essential to getting empowered supporters behind you and making a real difference.
