Rootly for mid-market teams: Does it scale? plus better alternatives

Updated Apr 21, 2026

TL;DR: Rootly is a capable incident management platform with deep Slack integration and flexible workflows, but its pricing model can create cost uncertainty as teams scale from 50 to 150+ engineers. For mid-market engineering teams (50-250 engineers) that need predictable costs, fast onboarding, and AI that automates incident response workflows, we deliver transparent per-user pricing and a faster path to reduced MTTR.

Teams scaling from 50 to 150 engineers often see their incident management costs climb faster than headcount growth. The challenge isn't just licensing costs. It's pricing structures that can create unpredictability exactly when you're hiring fastest, combined with configuration overhead that grows with team size.

This article evaluates Rootly specifically for mid-market engineering organizations (50-250 engineers), covering where it works well, where it creates friction, and which alternatives eliminate the coordination tax at this growth stage.

Key traits of mid-market engineering teams

Mid-market engineering organizations occupy a specific zone: large enough to need structured incident response processes, but often without dedicated headcount for tooling management. Three challenges define this stage.

Team size and structure

At 50 engineers, most teams run a patchwork approach: PagerDuty or Opsgenie for alerting, manual Slack channels for coordination, and Google Docs for post-mortems. By 100 engineers, three problems compound:

- On-call rotation complexity: Scheduling across multiple squads and time zones breaks spreadsheets quickly.

- Incident ownership ambiguity: "Who owns the payments service?" stops being answerable from memory.

- Post-mortem debt: Manual documentation falls behind, action items get lost, and the same incidents repeat.

Onboarding new on-call engineers

A tool that requires weeks of training before a new engineer can take primary rotation creates two operational risks: burnout among the small group of experienced responders who carry the load, and single points of failure when those engineers are unavailable. The real cost shows up in engineer-hours: the senior SRE shadowing every incident until the new hire can handle escalation independently, the delayed rotation coverage during hiring sprints, and the compounding risk as team growth outpaces onboarding capacity.

Our structured Slack-native workflows scale incident response across growing teams.

Avoiding tool context-switching

Coordination overhead (assembling the team, finding context, updating tools) can consume 10–15 minutes per incident before actual troubleshooting begins. For teams handling numerous incidents monthly, this overhead compounds into substantial lost engineering time that could be spent on feature development or reliability improvements.

Rootly's incident workflow for growing teams

Rootly is a capable incident management platform with deep Slack integration, workflow automation, on-call scheduling, and post-mortem features. For teams with bandwidth to configure it, it works well. Understanding where it creates friction matters before you commit at scale.

Workflow configuration and scaling

Rootly's alert routing and workflow engine offer flexibility for configuring incident response processes. Rootly supports multi-team incident collaboration.

The friction point is configuration maintenance. Every time a service launches, a team restructures, or an on-call rotation changes, someone needs to update routing rules to match. For smaller teams this is straightforward. As organizations grow to 100+ engineers with multiple service teams, configuration maintenance becomes an ongoing workload.
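As a rough illustration of why this maintenance grows with team size, the sketch below compares a service catalog against a set of routing rules and reports the gaps. The function name, data shapes, and service names are all hypothetical and do not reflect Rootly's actual configuration format or API.

```python
# Hypothetical sketch: detect drift between a service catalog and routing rules.
# The data shapes here are assumptions for illustration, not any vendor's format.

def find_routing_gaps(services: set, routing_rules: dict) -> dict:
    """Return services with no routing rule and rules pointing at retired services.

    services: names of services currently running in production.
    routing_rules: mapping of service name -> owning team or escalation target.
    """
    routed = set(routing_rules)
    return {
        "unrouted": sorted(services - routed),  # launched, but nobody gets paged
        "stale": sorted(routed - services),     # rule exists for a service that is gone
    }


if __name__ == "__main__":
    catalog = {"payments", "checkout", "search", "notifications"}
    rules = {"payments": "team-payments", "checkout": "team-payments", "legacy-cart": "team-web"}
    print(find_routing_gaps(catalog, rules))
```

Every new service or team change adds an entry that someone has to keep in sync, which is why the workload scales with the number of services rather than staying fixed.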

Customer reviews on AWS Marketplace report basic setup in roughly 2 hours and most configuration completed within a day. Building out comprehensive workflow automation, RBAC, and multi-team routing to match a 100-engineer organization's actual incident process takes considerably longer and requires ongoing maintenance.

Streamlining on-call onboarding

Rootly offers on-call scheduling and incident response as integrated products, though they are priced and purchased as separate product lines. That is worth factoring into your budget when you compare it against other tools that also require separate products for alerting and coordination. The platform provides escalation policies and scheduling capabilities alongside incident management features.

For teams migrating from PagerDuty or Opsgenie, unified incident and on-call management is a meaningful step forward. The implementation timeline matters when your team is handling production incidents during the migration.

Rootly pricing at mid-market scale: TCO analysis

If you're the one forecasting next quarter's tooling budget, this is the section that matters most. For teams scaling from 50 to 250 engineers, pricing tends to get harder to predict exactly when predictability matters most. You're hiring fast, often adding 20–40 engineers per year, and per-seat costs compound quickly when vendors bundle features into opaque tiers or charge separately for on-call, AI features, and advanced routing. Early-stage startups can absorb a few thousand dollars either way, and large enterprises have procurement teams that negotiate multi-year contracts with volume discounts. Mid-market teams have neither cushion: next quarter's headcount directly affects your incident management budget, and you need to forecast that cost without waiting for a sales call.

Rootly per-user costs: mid-market 50-250

Rootly uses platform-tier pricing structured around capability bundles rather than strict per-seat billing. Based on Vendr's procurement data, the Essentials tier at 50 users runs $12,000/year list price (approximately $13,067 overall median across 64 purchases). Rootly's Enterprise tier covers larger deployments with custom pricing. Vendr data shows Enterprise deployments can exceed $60,000 annually depending on scale and negotiation.

Rootly's on-call add-on pricing

Rootly bundles on-call functionality into the platform. The exact pricing structure depends on your tier and user count. This bundling approach differs from pure per-seat models, which can make it challenging to predict costs as your team grows without consulting directly with Rootly's sales team.

Rootly's pricing model targets the same enterprise segment as PagerDuty. PagerDuty's pricing tiers include Professional and Business options, with costs that can escalate through per-seat charges and paid add-ons for AI features, noise reduction, and runbooks.

Rootly's incident overhead for growing teams

Slack integration depth

The meaningful difference from our architecture shows up in edge cases and complex tasks. Our full incident lifecycle runs entirely through slash commands and channel interactions, eliminating context switching during high-pressure incidents.

Timeline capture

Rootly provides incident timeline and post-mortem capabilities. The question is how much of that capture happens automatically versus requiring manual input.

Rootly's AI root cause accuracy

Rootly offers AI-driven features aimed at reducing incident resolution time.

Our AI SRE takes a more specific approach: it investigates the moment alerts fire, connects telemetry, code changes, and past incidents, and identifies the likely pull request behind the incident without leaving Slack. If the root cause is a code issue, it generates a fix and opens a pull request directly. This is AI that reduces the actual investigative workload, not just AI that correlates logs.

Incident solutions for growth-stage firms

incident.io: resolve in Slack, avoid context-switching

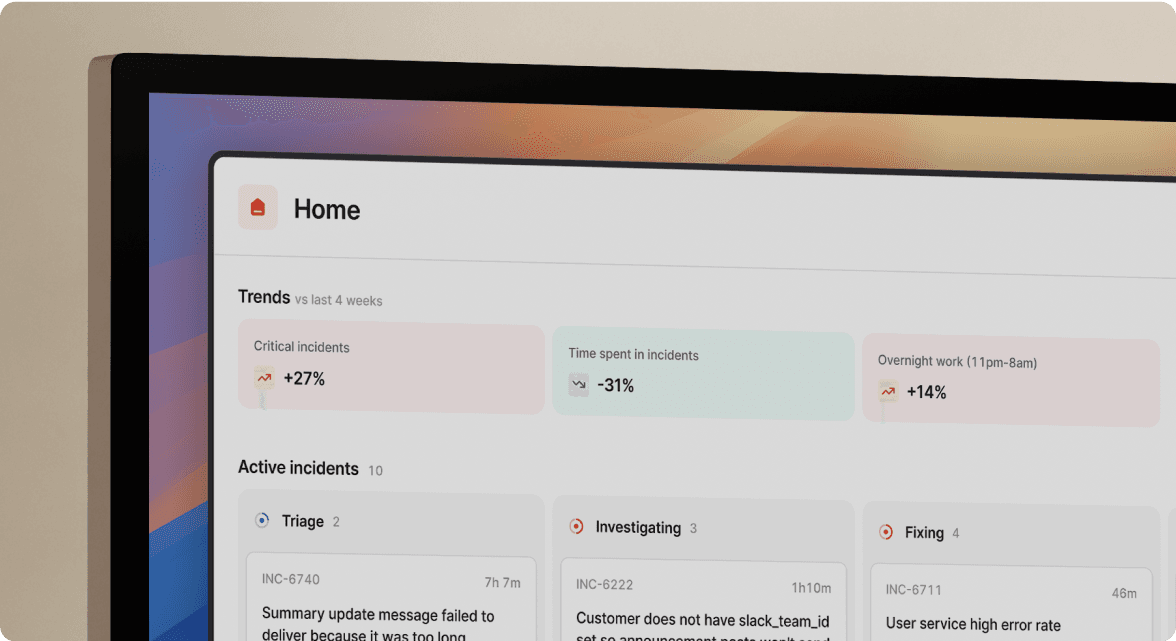

When a Datadog alert fires, we auto-create a dedicated incident channel, page the on-call engineer, and start capturing the timeline, all without leaving Slack. Here's what that looks like in practice:

- Datadog alert fires on API latency spike

- We create #inc-2847-api-latency, page the on-call engineer, and pull in the service owner

- Timeline capture starts automatically with no manual logging

- AI SRE connects telemetry, recent deploys, and past incidents to surface the likely root cause

The entire response lifecycle runs through /inc slash commands. The AI SRE automates up to 80% of incident response: note-taking, timeline capture, root cause identification, and post-mortem generation. Favor reduced MTTR by 37% after adopting incident.io, according to the Favor case study, reclaiming hours previously lost to coordination overhead.

FireHydrant: AI suite without fix PR generation

FireHydrant targets a similar mid-market audience and markets a substantial AI suite: AI-enriched incident summaries, live video transcription from Zoom and Google Meet, AI-enhanced retrospectives, and AI triage channels. These are core advertised features, not afterthoughts. Where incident.io differentiates is narrower but meaningful: automated root cause identification and fix PR generation. incident.io's AI SRE connects telemetry, recent deploys, and past incidents to surface the likely root cause and open a fix pull request directly from Slack, a workflow FireHydrant does not offer. incident.io also ships feature requests in days rather than quarters, which matters when you file a bug during an active incident.

PagerDuty: mature alerting, enterprise pricing

PagerDuty has a sophisticated alert routing engine built over years of enterprise deployments, and that maturity comes at a premium price point. We offer migration support to help teams transition from PagerDuty with reduced friction.

Rootly alternatives: feature matrix

| Feature | incident.io | Rootly | PagerDuty |
|---|---|---|---|
| Slack architecture | Full lifecycle in Slack | Deep Slack integration | Slack actions available |
| AI incident automation | Automates up to 80% of incident response, including PR generation | AI features available | AI features as add-ons |
| Post-mortem generation | Auto-drafted from captured data | Template-based | Timeline features available |
| On-call scheduling | Add-on ($20/user/mo Pro) | Available | Included in base plans |
| Pricing model | Per-user: $45/mo all-in (Pro) | Contact for pricing | Per-user + add-ons |
| Status page | Included | Available | Available |
| Time-to-operational | Days, not weeks | Contact vendor for details | Varies by deployment |
| Support model | Shared Slack channels | Shared Slack channels | Email; chat for premium tiers |

Which tool fits your incident budget?

The table below compares total annual costs at common mid-market headcount milestones. incident.io's pricing is transparent and published: $45/user/month for Pro with on-call included. Rootly and PagerDuty require vendor contact for accurate quotes, which can make budget forecasting harder during active hiring phases when you need cost predictability most.

| Team size | incident.io Pro | Rootly | PagerDuty Business |
|---|---|---|---|
| 50 users | $27,000/yr | Contact for pricing | Varies by negotiation |
| 100 users | $54,000/yr | Contact for pricing | Varies by negotiation |
| 150 users | $81,000/yr | Contact for pricing | Varies by negotiation |

Note: Rootly and PagerDuty pricing varies based on team size, negotiation, and feature requirements. Contact vendors directly for accurate quotes. PagerDuty costs escalate with add-ons for AI, noise reduction, and runbooks. All incident.io figures are based on published per-user pricing of $45/user/month for Pro with on-call included. Status page is included from the Basic plan and above. The Team and Pro plans inherit this.
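The incident.io figures follow from simple arithmetic on the published $45/user/month Pro price. A minimal sketch for projecting other headcounts, assuming a flat per-user price with no discounts or tier changes (the function and constant names are illustrative, not part of any product):

```python
# Illustrative helper: project annual spend from a flat per-user monthly price.
# Assumes no volume discounts, tier changes, or add-ons beyond the stated price.

PRO_PRICE_PER_USER_MONTH = 45  # USD, Pro with on-call included, as quoted above


def annual_cost(users: int, price_per_user_month: float = PRO_PRICE_PER_USER_MONTH) -> float:
    """Return the yearly cost in USD for a given number of users."""
    if users < 0:
        raise ValueError("users must be non-negative")
    return users * price_per_user_month * 12


if __name__ == "__main__":
    for headcount in (50, 100, 150):
        print(f"{headcount} users: ${annual_cost(headcount):,.0f}/yr")
    # 50 users: $27,000/yr
    # 100 users: $54,000/yr
    # 150 users: $81,000/yr
```

Swapping in a negotiated quote for `price_per_user_month` lets you run the same projection for any vendor that bills per seat.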

Quantifying MTTR reduction ROI

When you're evaluating vendors, don't rely on published benchmarks alone. Here's how to run a proof of concept that produces numbers you can take to a budget conversation. The pricing comparison matters most when set against MTTR improvement. The 37% MTTR reduction at Favor is a useful baseline, and hours reclaimed from coordination overhead add up fast: 15 minutes saved per incident × 20 incidents per month = 5 hours of engineering time returned to your team every month. Measure these four things directly during your trial rather than relying on vendor claims:

- Time from alert to coordinated response: Track alert-to-assembled-team time in week 1 vs. week 4 of your proof of concept.

- Post-mortem completion rate: Measure how many post-mortems publish within 24 hours and 48 hours after resolution.

- New engineer confidence: Require vendors to demonstrate onboarding speed. A capable platform should have a new on-call engineer running primary rotation without supervision within 3 incidents.

- Stack integration depth: As a condition of your trial approval, confirm native integrations across your full stack: alerting (Datadog, Prometheus), task tracking (Jira, Linear), comms (Slack or Teams), and code (GitHub), without requiring custom scripting. We cover all four categories natively.
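Two of the measurements above reduce to simple calculations you can run on your own trial data. The sketch below is one possible way to compute them. The function names and the tuple format for incidents are assumptions made for illustration, and it assumes you can export resolution and post-mortem publish timestamps from whichever tool you trial.

```python
from datetime import datetime, timedelta

# Illustrative proof-of-concept metrics; names and data shapes are assumptions.


def hours_reclaimed_per_month(minutes_saved_per_incident: float, incidents_per_month: int) -> float:
    """Convert per-incident coordination savings into engineer-hours per month."""
    return minutes_saved_per_incident * incidents_per_month / 60


def postmortem_completion_rate(incidents: list, within_hours: float) -> float:
    """Share of incidents whose post-mortem published within the window.

    incidents: list of (resolved_at, published_at) datetimes;
    published_at is None when no post-mortem has been published yet.
    """
    if not incidents:
        return 0.0
    window = timedelta(hours=within_hours)
    on_time = sum(
        1
        for resolved_at, published_at in incidents
        if published_at is not None and published_at - resolved_at <= window
    )
    return on_time / len(incidents)


if __name__ == "__main__":
    # The example from the text: 15 minutes saved across 20 incidents.
    print(hours_reclaimed_per_month(15, 20))  # 5.0
```

Running `postmortem_completion_rate` with `within_hours=24` and again with `within_hours=48` gives the two completion figures described in the list.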

Vendor negotiation checklist

Before signing any contract, ask vendors directly: Is on-call included or a separate add-on? What headcount threshold triggers a tier upgrade? Are AI features on your plan or behind a paywall? Get renewal pricing language in writing before you sign.

If you want to see the Slack-native workflow in action before committing, schedule a demo of incident.io and run a live incident through the platform end-to-end.

Key terms glossary

MTTR (Mean Time To Resolution): The average time from when an incident is declared to when it is fully resolved. MTTR is the primary benchmark for measuring incident response performance. A lower MTTR means less downtime, less engineering time lost, and less customer impact.

On-call rotation: A schedule that assigns engineers to be primary responders for production incidents during a defined time window. Rotations typically cycle across team members to distribute overnight and weekend coverage and prevent burnout.

Incident commander: The engineer who leads an active incident: coordinating the response team, delegating investigation tasks, and making decisions on escalation and resolution. Also called incident lead.

Post-mortem: A structured document written after a resolved incident that captures what happened, why it happened, and what action items will prevent recurrence. Published post-mortems are the primary output of a healthy incident review process.

RBAC (Role-Based Access Control): A permission system that restricts what actions users can take based on their role. In incident management, RBAC controls who can declare incidents, modify routing rules, access sensitive timelines, or manage on-call schedules.

Alert routing: The logic that determines which team or engineer receives a notification when a monitoring tool fires an alert. Routing rules typically reference service ownership, severity thresholds, and time-of-day schedules.

Escalation policy: A defined sequence of notification steps that fires if the primary on-call engineer does not acknowledge an alert within a set time. Escalation policies prevent alerts from going unanswered during incidents.

AI SRE: incident.io's AI assistant that investigates incidents the moment alerts fire, connecting telemetry, recent deploys, and past incidents to identify the likely root cause. It can generate a fix and open a pull request directly from Slack, automating up to 80% of incident response.

TCO (Total Cost of Ownership): The full cost of a platform beyond its list price, including onboarding time, configuration maintenance, training, add-on features, and renewal pricing. TCO is the relevant number when comparing incident management tools at mid-market scale.

SRE (Site Reliability Engineer): An engineer responsible for the availability, performance, and reliability of production systems. SREs typically own on-call rotations, incident response processes, and post-mortem programs.
